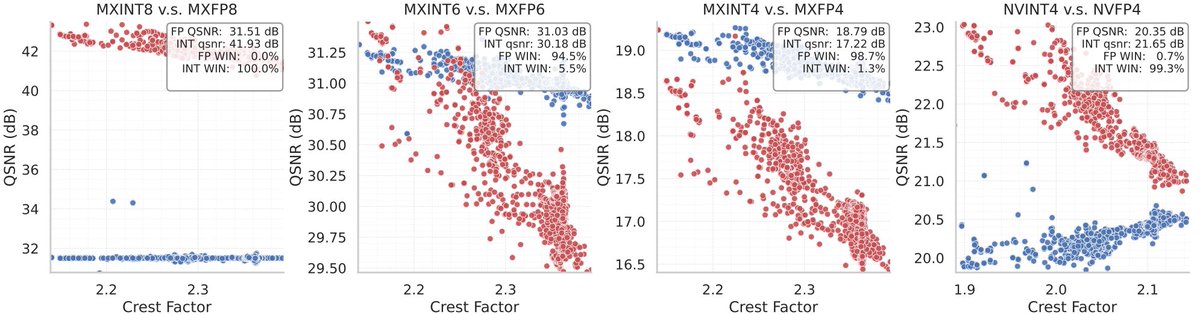

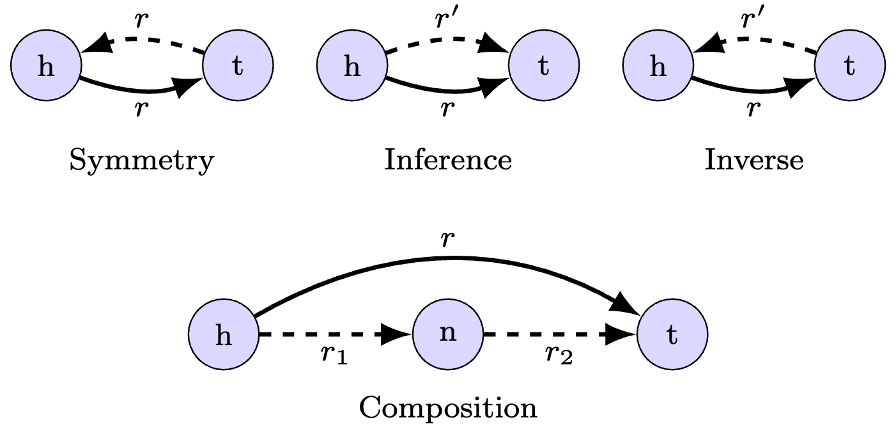

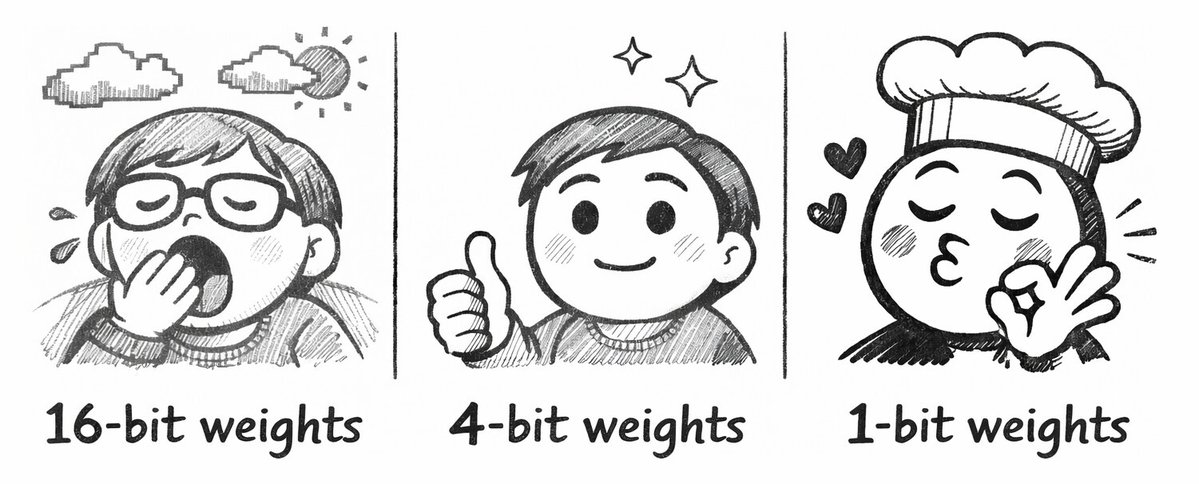

Would you rather use 1 million × 16-bit weights, 4 million × 4-bit weights, or even 16 million × 1-bit weights?

In joint work between Aleph Alpha Research and Graphcore, we asked this question of LLMs. The answer encouraged us to embrace the wonder ✨ of 1-bit weights, which can outperform 4-bit and 16-bit weights on a fixed weight memory budget.
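
To make the "fixed weight memory budget" concrete, here is a minimal sketch of the arithmetic behind the three options in the opening question. The helper name `weights_for_budget` is hypothetical, and the calculation assumes weights are packed with no overhead for scale factors or other metadata, which real quantized formats usually need.

```python
# Hypothetical helper: how many weights fit in a fixed memory budget,
# assuming ideal packing with no per-block scale or metadata overhead.
def weights_for_budget(budget_bits: int, bits_per_weight: int) -> int:
    return budget_bits // bits_per_weight


# Budget implied by the first option: 1 million weights at 16 bits each.
BUDGET_BITS = 1_000_000 * 16

counts = {bits: weights_for_budget(BUDGET_BITS, bits) for bits in (16, 4, 1)}

for bits, n in counts.items():
    print(f"{bits:>2}-bit weights: {n:,} parameters in {BUDGET_BITS // 8:,} bytes")
```

All three configurations occupy the same 2,000,000 bytes; only the trade-off between parameter count and precision per parameter changes.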

In our work:

- ⚖️ A scaling laws evaluation prompts us to consider very low-bit formats

- 📈 Scaled-up tests show the power of memory-matched models with 1-bit weights

- ⚡ Kernel benchmarking demonstrates their feasibility for autoregressive inference

Read all about it in our blog and paper (link below! ⬇️)

Massive thanks to our collaborators at Aleph Alpha Research!

Authors: @SohirMaskey, Constantin Eichenberg, @atomicflndr and @douglasahorr
