Ximing Lu

@GXiming

PhD @uwcse @uwnlp.

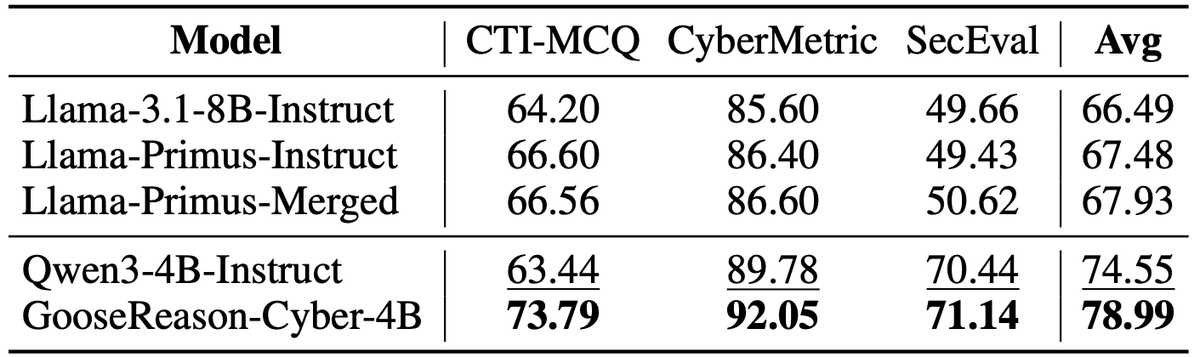

There’s growing excitement around scaling up RLVR to get continuous gains with more compute. But in practice, improvements saturate on finite training data. 😱 Introducing Golden Goose 🦢✨, a simple trick to synthesize unlimited RLVR tasks 😎 from unverifiable internet text. 🌐

We’re open-sourcing the data and model behind Golden Goose 🦢✨. Check them out and see how we turn unverifiable internet text 🌐 into large-scale RLVR tasks 😎. 📊 GooseReason-0.7M: huggingface.co/datasets/nvidi… 🤖 GooseReason-4B-Instruct: huggingface.co/nvidia/Nemotro…
