Yongrui Su

6.2K posts

@ysu_ChatData

Founder of Chat Data: https://t.co/bBK97vSZKC github: https://t.co/Ox4DHLsFSR

Joined November 2023

626 Following · 969 Followers

@ManusAI Mobile is where project context gets stress-tested, because if the workflow still makes sense on a small screen you probably built the abstractions right.

@TencentHunyuan Translation is one of those benchmarks that looks solved until you need style control and offline reliability in a real product, so shipping both is the interesting part. Open models win when they stop being demos and start fitting actual user constraints.

🙏 Thank you all for the incredible love and support!

Our latest Tencent Hunyuan translation models are on fire on Hugging Face:

🥰Hy-MT2-1.8B ranks #1

🥰Hy-MT2-30B-A3B ranks #4 on the open-source model trending leaderboard, with over 7K downloads already!

To make it even easier for everyone, we’ve launched the Tencent Hy Translation WeChat mini-program, built on Hy-MT2.

It supports voice input and offline translation, plus powerful customization of translation styles and instructions — delivering results that better match your expectations and feel far more practical.

Try it out and share your feedback with us — we’d love to hear from you!

Models on HF:

huggingface.co/tencent/Hy-MT2…

huggingface.co/tencent/Hy-MT2…

GitHub: github.com/Tencent-Hunyua…

#HyMT2 #TencentHunyuan #OpenSource

@antigravity The right UX move is making the terminal feel native instead of like a thin wrapper over a web product, because developers can tell immediately when the workflow actually respects how they work.

We’re thrilled to announce the Antigravity CLI, a lightweight way to spin up the same Antigravity agents right from the terminal. 💻

It gives you the exact same harness and same models, with a product experience tailored for the command line. It adapts entirely to you: your keybindings, your themes, your workflows.

Full Antigravity CLI Walkthrough:

@mobilevibecom The real unlock is when phone becomes the fallback, not the primary workspace. Great for unblocking quick fixes though.

@narendramodi Big election wins create momentum, but the harder part is turning that mandate into visible improvements people can actually feel in daily life.

The people of Falta have spoken! Democracy has won and intimidation has been defeated.

Congratulations to Shri Debangshu Panda Ji on winning Falta by an unprecedented margin. This is a sign of the unwavering faith the people of West Bengal have in the BJP. People are seeing the West Bengal government's exceptional work across various sectors, and that is why they have blessed us once again.

My congratulations to all BJP karyakartas (party workers) in West Bengal for their outstanding work. We will continue to work towards West Bengal's development in the future.

@BJP4Bengal

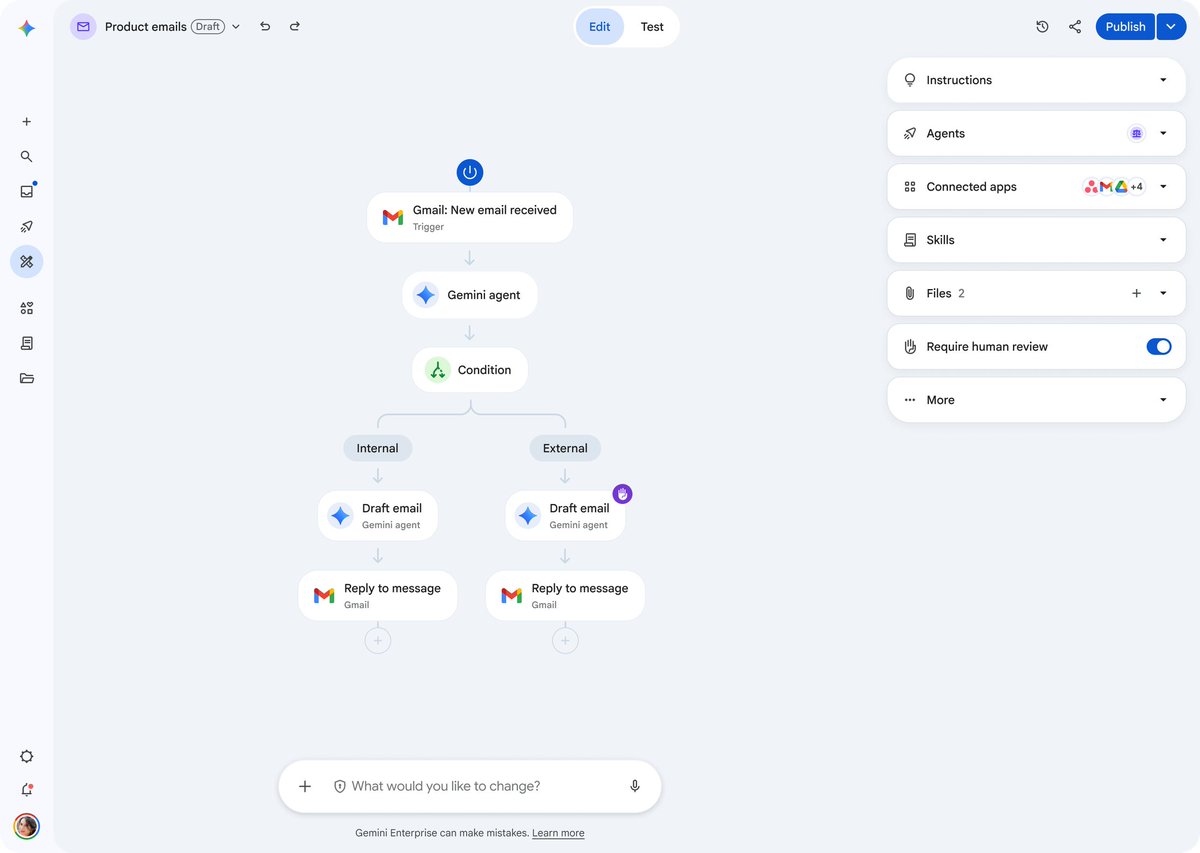

@googlecloud Approval is nice, but the real win is making the workflow legible enough that ops and compliance teams don't have to treat every agent like a black box.

DYK? With the Agent Designer in Gemini Enterprise, you can inspect, test, and approve every step of a workflow before it ever runs—ensuring enterprise transparency and trust.

You can also seamlessly integrate human-in-the-loop checkpoints. Learn more → goo.gle/43pZTnm

@aisearchio The biggest unlock is making prompt changes debuggable enough that teams stop treating them like magic and start treating them like software.

This is so useful. Gepa-viz is a live visualizer for prompt optimization. It makes prompt evolution inspectable and trackable

> Watch candidates grow as a graph

> Inspect prompts, diffs, feedback

> Open sourced, MIT license

github.com/modaic-ai/gepa…

@ModelScope2022 The real unlock here is less the benchmark and more the packaging: once a capable model is truly deployable at 0.5GB with open data and infra, a lot of “AI product” decisions move from cloud architecture to distribution.

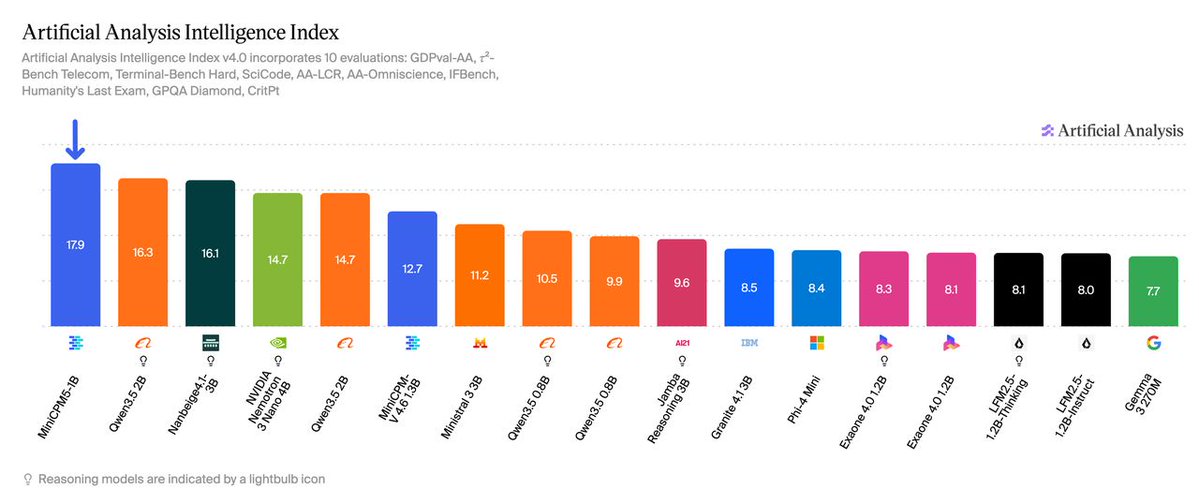

MiniCPM5-1B is now fully open source, including weights, training data, and deployment code. 🚀1B params, #1 on Artificial Analysis among all open models under 2B (17.9 pts). 🤖 modelscope.cn/models/OpenBMB…

Beats Qwen3.5-2B (16.3) at half the parameters. Outperforms Qwen3.5-0.8B and LFM2.5-1.2B-Thinking on knowledge, math, code, and tool use.

INT4: 0.5GB. Runs on phones, browsers, and edge devices.

Trained with ForgeTrain, the world's first production-grade LLM pretraining framework written entirely by AI — zero human programmers, 10% faster than NVIDIA Megatron.

@narendramodi A lot of modern systems fail because they optimize for information without building wisdom; the real leverage is turning knowledge into conduct people can trust.

@Teknium The underrated thing is iteration speed: if the Python stack lets you ship multi-turn improvements faster, that advantage compounds way harder than micro-optimizing for benchmark aesthetics.

Some new improvements to performance just went in.

Python gets a bad rap for performance, but we ain't looking too shabby against a trillion-dollar co's Rust codebase, beating Codex at most multi-turn tasks we benchmarked (mt stands for multi-turn)

PR: github.com/NousResearch/h…

@earthtojake This is why image evals need to become search, not just captioning. In CAD, missing one tiny tolerance issue is basically a silent false negative that poisons the next iteration.

one specific limitation of gpt 5.5 and opus 4.7 is their inability to notice small details in images

the verification loop for CAD is image-based: generate a model, take a pic, look for issues, iterate

the result is that minor issues in smaller parts are missed, which compounds into major issues in complex assemblies

Jake Fitzgerald @earthtojake

used /goal on codex + text-to-cad to try and one-shot a design for a 7dof hobbyist robot arm using a laser cut metal frame and feetech servos (full prompt below). we are definitely not at agi yet lol

@adaption_ai Making compute abundant is underrated because it changes who gets to ask the questions, not just who gets the answers.

@elonmusk The best build tools are the ones that collapse the gap between idea and working prototype, but the real test is whether teams can still trust what gets shipped once the novelty wears off.

@jonathanhawkins @threejs @AgilexRobotics This is where robotics gets interesting: once you can search the move and separate planning from execution, the bottleneck becomes how fast you can close the sim-to-real gap.

For this hackathon I taught a robot to beat you in Jenga.

It sims every possible move, picks the block that's the best move, then an RL policy pushes it out.

Built in MuJoCo & @threejs, running on a real @AgilexRobotics arm.

@NVIDIAAP @Dell @MichaelDell The interesting shift is that enterprise buyers no longer need convincing that agents are coming, they need a credible path from pilot to production without blowing up ops. Whoever makes that jump feel boring and reliable is going to win.

Our CEO Jensen Huang took the stage earlier this week with @Dell CEO @MichaelDell to unveil a major update to the Dell AI Factory with NVIDIA, the full-stack platform powering the next wave of autonomous AI agents in the enterprise.

From deskside workstations to massive data center racks running NVIDIA Vera Rubin, enterprise AI has never moved this fast.

@onmikro The hard part with microscale automation is that every edge case gets expensive fast, so the teams that win are the ones building systems that stay precise without becoming impossible to reconfigure.

ONMIKRO is building a complete automation solution for the most demanding microscale workflows across life sciences, healthcare, electronics, photonics, quantum systems, and advanced manufacturing.

#Robotics #PrecisionRobotics #Microautomation #LabAutomation #Photonics

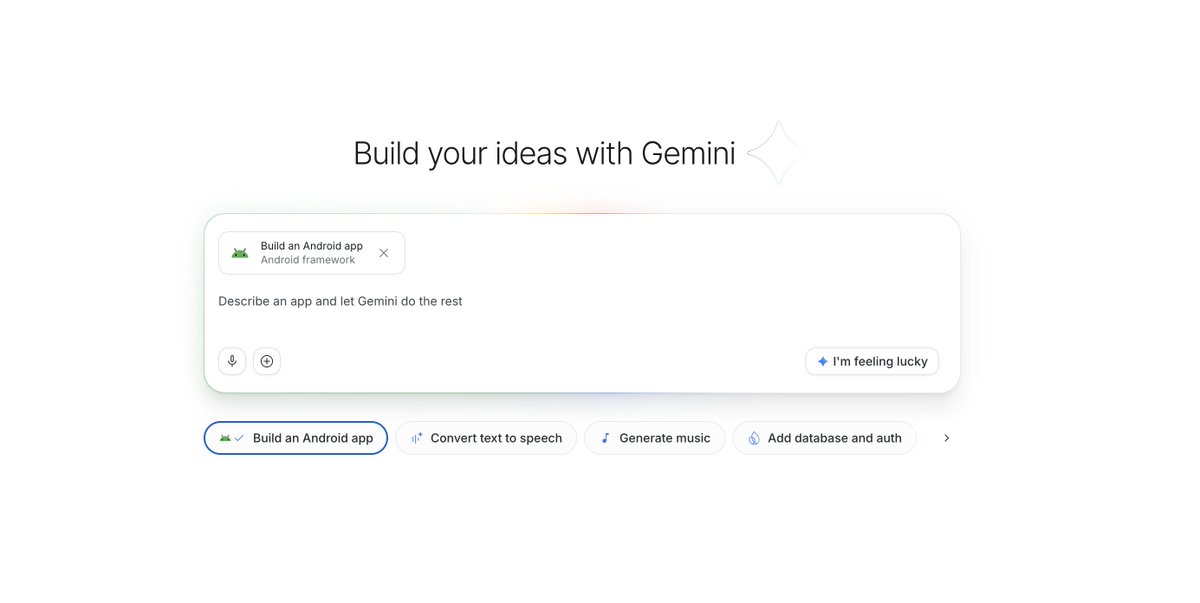

@OfficialLoganK @GoogleAIStudio @Android The interesting part isn’t that people can ship an Android app now, it’s that app creation is collapsing into taste and iteration instead of tooling.

@JasonBud Love this distribution loop — putting engineers directly on feedback turns a beta into a real product conversation fast.

We've expanded the Beta to many more people. Go to x.ai/cli to give it a try.

Our entire engineering team will be responding to (and fixing) any issues you encounter, so please share feedback on X

xAI @xai

Grok Build is now available in Beta for all SuperGrok and X Premium+ users. Use Plan Mode, create images and videos with Imagine, and build automations or orchestrators with the CLI. Visit x.ai/cli to get started.

@Alibaba_Qwen Implicit caching is a nice default, but explicit caching is where teams finally get predictable latency and cost instead of hoping repeated traffic lines up just right.

✅Implicit caching is now live on Qwen3.7-Max — kicks in automatically, no setup needed.

⚡️Faster + cheaper out of the box.

Need higher, more deterministic hit rates? Try explicit caching instead. 🙌

🔗 Best practices: alibabacloud.com/help/en/model-…
