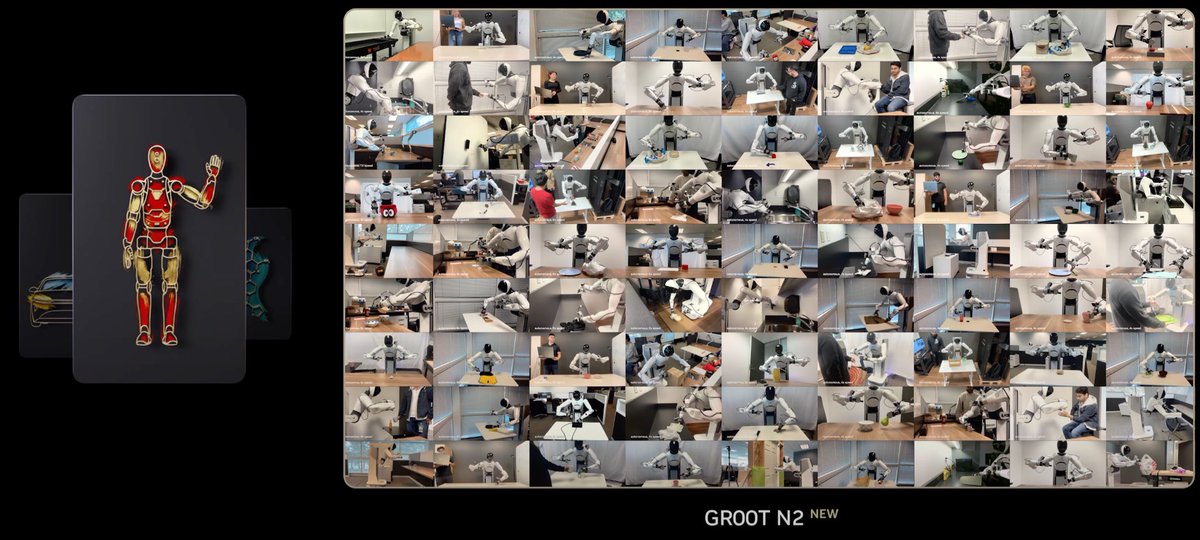

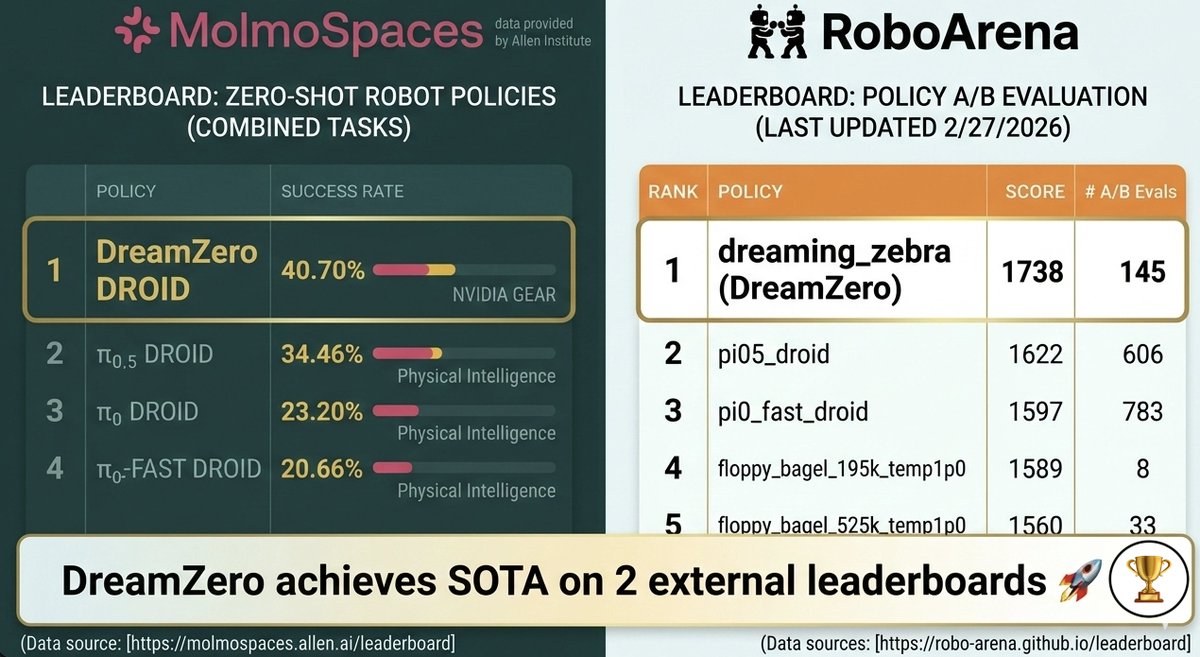

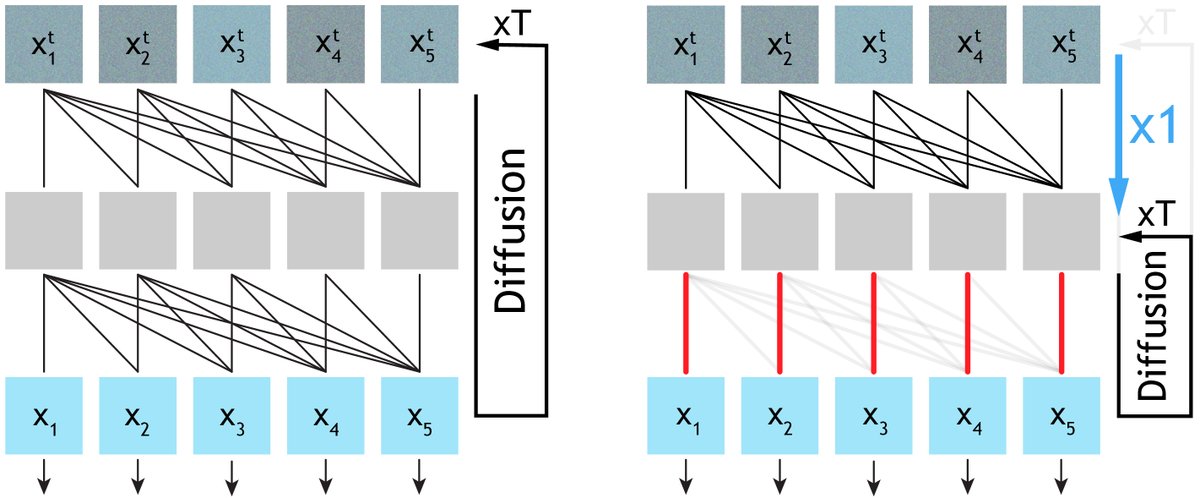

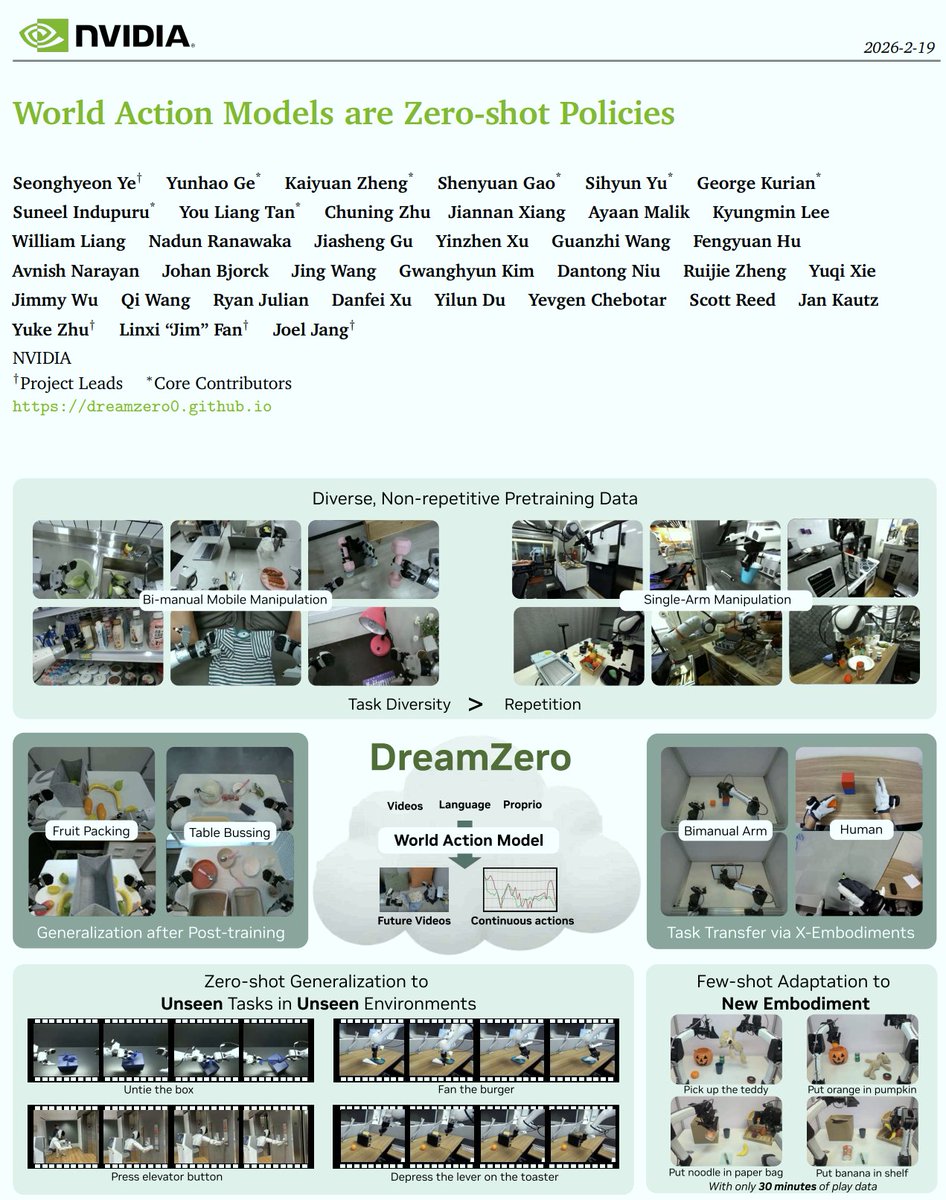

Introducing DreamZero 🤖🌎 from @nvidia > A 14B “World Action Model” that achieves zero-shot generalization to unseen tasks & few-shot adaptation to new robots > The key? Jointly predicting video & actions in the same diffusion forward pass Project Page: dreamzero0.github.io 🧵 (1/10)