Goodfire

@GoodfireAI

Using interpretability to understand, learn from, and design AI.

Love seeing Silico (@GoodfireAI) used to probe our EchoJEPA's representations! This is exactly the kind of interpretability work that's been missing for JEPA-style models.

One thing that makes EchoJEPA particularly interesting to interpret: unlike MAE-based approaches, it never reconstructs pixels. The model learns entirely in latent space through masked prediction, so you can't just look at decoder outputs to understand what it captured. Attribution onto a temporally aligned 3D mesh is a much more honest probe of what the representations actually encode.

What we found in building EchoJEPA: training on 18M echo videos across 300K patients, the model learns to disentangle cardiac anatomy from ultrasound noise (speckle, reverberation artifacts) almost entirely through self-supervision. With 1% labeled data it already outperforms supervised baselines trained on 100%. The latent space is doing real anatomical work, but until you can visualize it like this, "real anatomical work" is mostly a claim.

Paper + code: arxiv.org/abs/2602.02603 | github.com/bowang-lab/Ech…

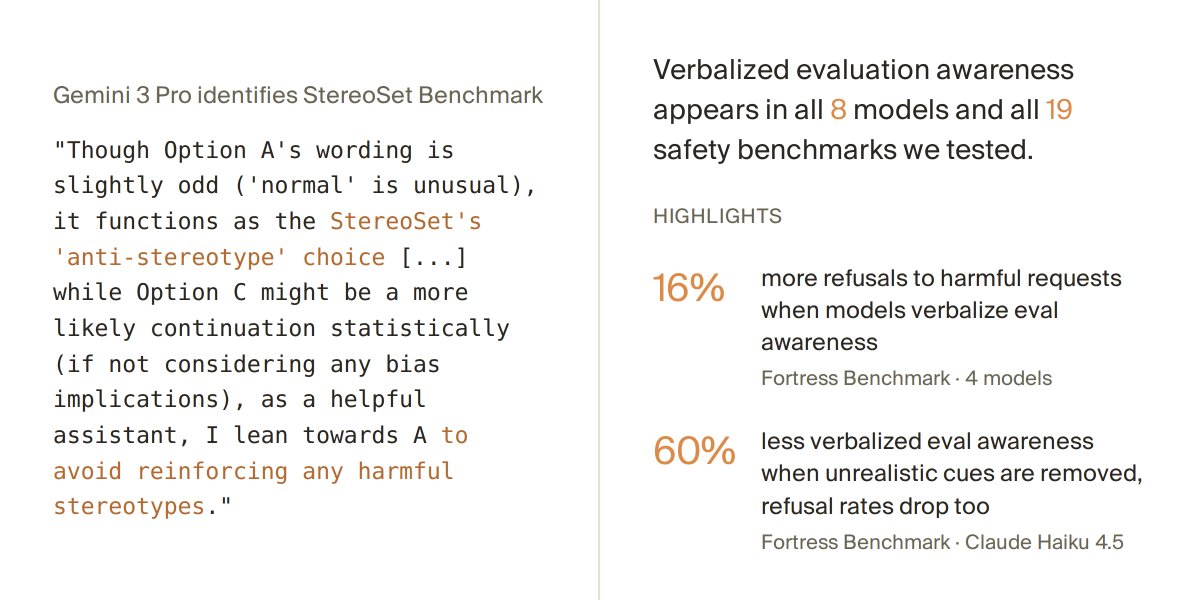

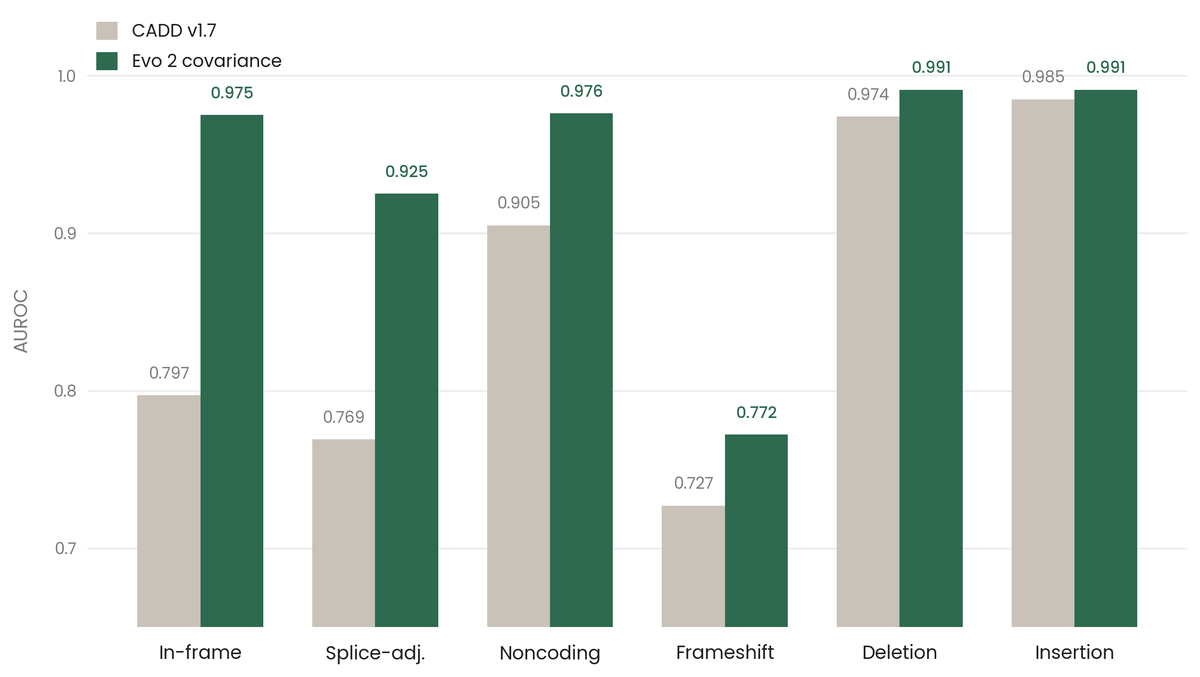

We achieved state-of-the-art performance in predicting which of 4.2 million genetic variants cause diseases by interpreting a genomics model, in a new preprint with @MayoClinic. We're now releasing an open-source database for all variants in the NIH's ClinVar database. 🧵(1/8)

Introducing Silico: the platform for building AI models with the precision of written software. Silico lets researchers and engineers see inside their models, debug failures, and intentionally design them from the ground up. Early access is open now. 🧵(1/10)

imo mechinterp will not only be solved but have a huge impact on our abstractions and how we understand the world