Network_illustrated

@Grid_illustrate

A studio exploring complex systems & the fragmentation of truth through games, fiction & AI art. One concept, infinite worlds

Trump just signed a directive to accelerate 6G deployment to operate implantable technologies, which will include AI brain chips known as the Biological Interface System to Cortex (BISC). I will NOT take the 6G AI brain chip.

🚨BREAKING: US TREASURY JUST TERMINATED ALL USE OF ANTHROPIC PRODUCTS. IT'S SO OVER

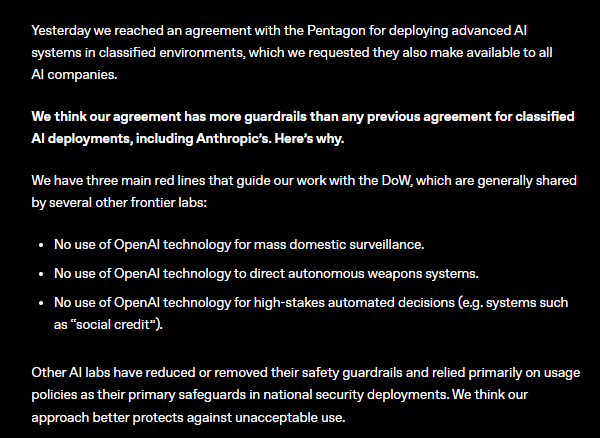

Yesterday we reached an agreement with the Department of War for deploying advanced AI systems in classified environments, which we requested they make available to all AI companies. We think our deployment has more guardrails than any previous agreement for classified AI deployments, including Anthropic's. Here's why: openai.com/index/our-agre…