Pinned Tweet

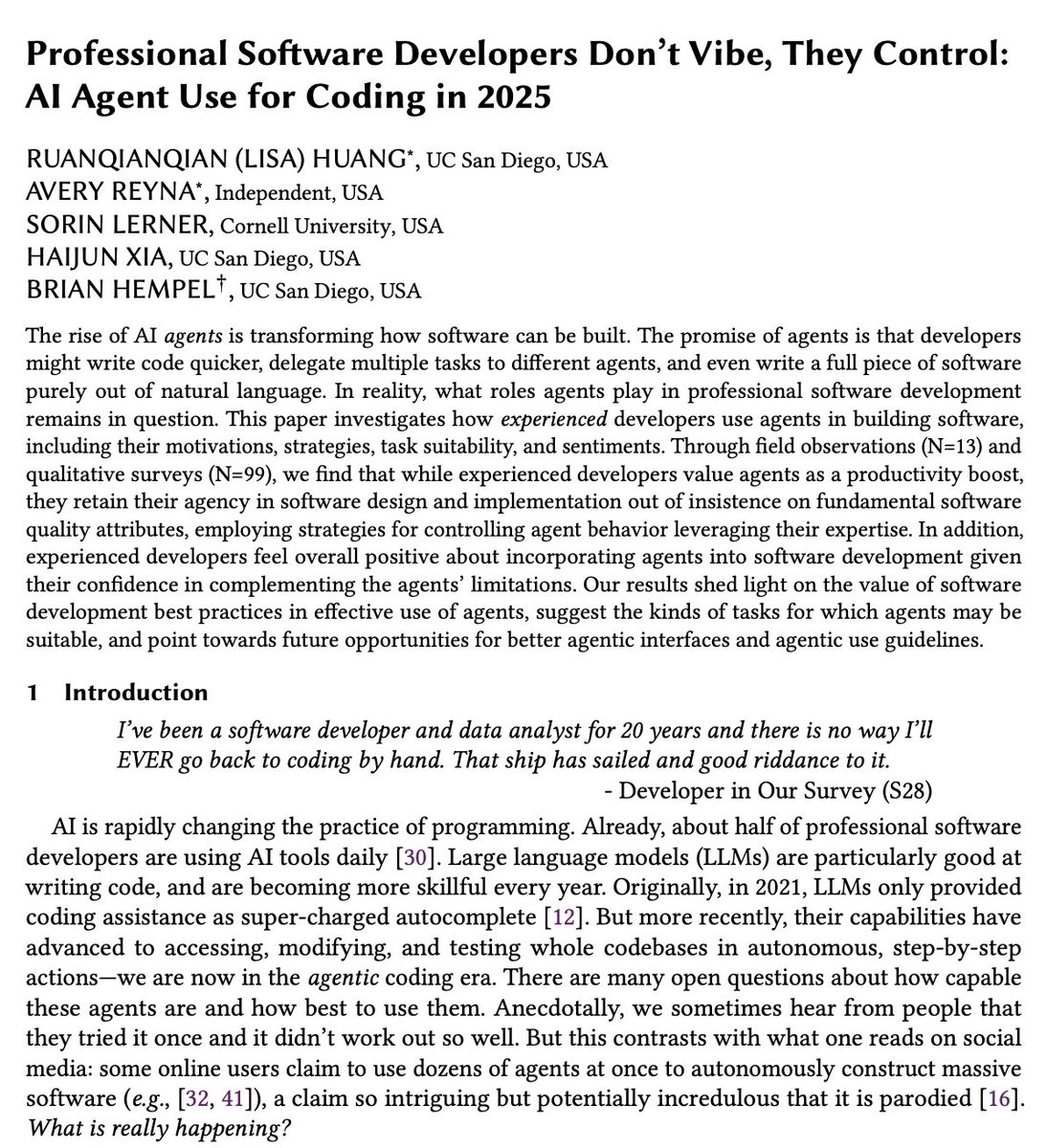

How to code with AI.

The key is understanding your system well enough that you can make changes to it safely.

youtu.be/eIoohUmYpGI

YouTube

Andrew

3K posts

@Grokton

Pro dev with decades of experience. Doing "on-the-side" research into specialist, super-efficient ML algorithms (not using matrices!). Working on vSaaS/micro-SaaS.

Thoughts and prayers.

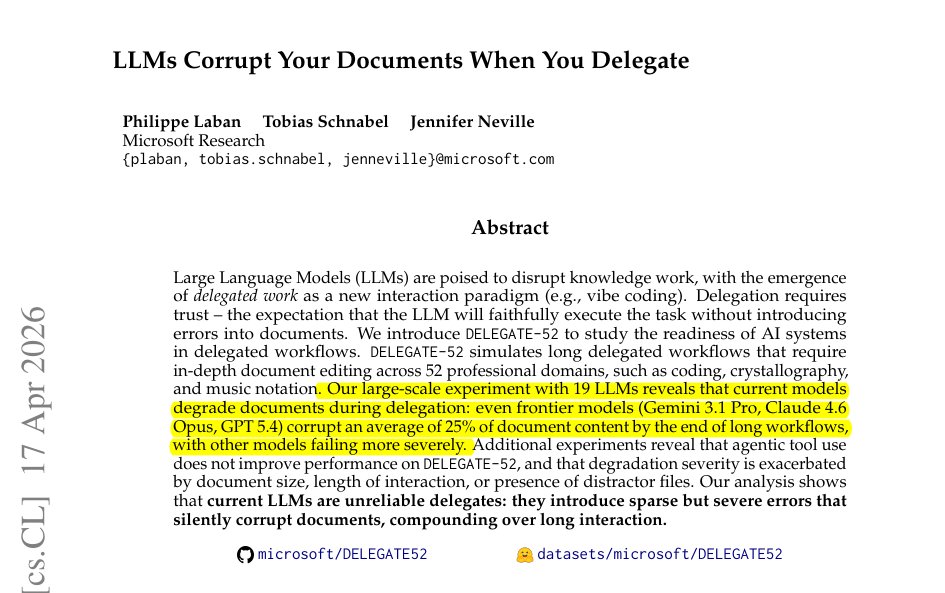

Sam Altman Says CEOs Who Talk About AI Taking Everyone’s Jobs Are ‘Tone Deaf’: “Someone said to me just yesterday that … GPT 5.5 in Codex can accomplish in an hour what would have taken me weeks two years ago … and I have never been busier in my life.”

we want to build tools to augment and elevate people, not entities to replace them.