Pinned Tweet

Gumbii.Digital

218 posts

@GumbiiDigital

#GirlDad #Veteran #LocalInference #AccessibleIntelligence Self funded one man lab. Infinite possibilities. DMs open.

Matrix · Joined November 2016

1.6K Following · 77 Followers

@dmytroomelian oh this is truly less than the tip of the iceberg. we will be looking back saying "Jesus Christ how were we this unbelievably stupid ?"

<3

English

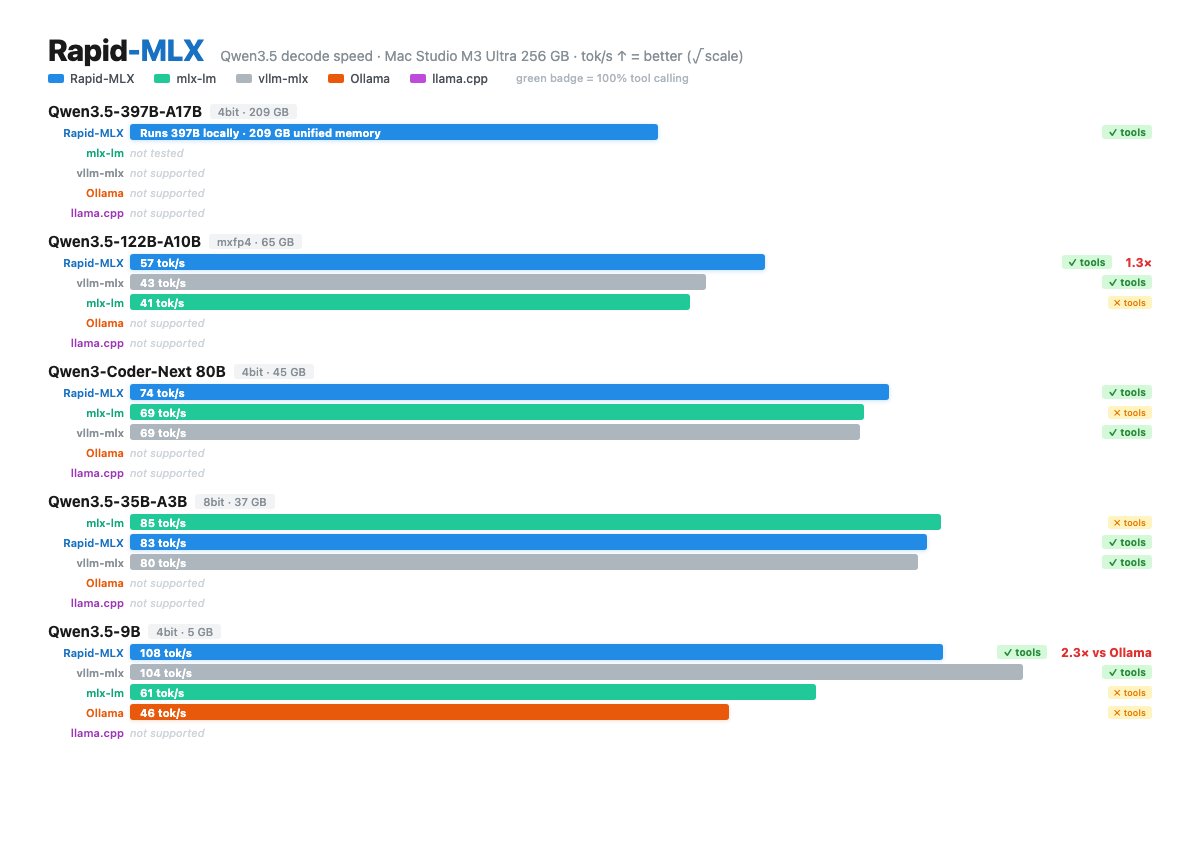

Run an LLM inference service locally on Apple Silicon Macs, with an OpenAI-compatible API that is faster than Ollama and llama.cpp, plus native support for tool calling and prompt caching.

github.com/raullenchai/Ra…

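Rapid-MLX runs inference on Apple's own MLX framework and wraps it in a FastAPI service that exposes an OpenAI-compatible API. On Apple Silicon it is 2-4x faster than Ollama, and it uses KV cache trimming and DeltaNet state snapshots to push follow-up-turn TTFT down to around 0.08 seconds. For tool calling it ships 17 parsers: it auto-detects the format for models such as Qwen, DeepSeek, Gemma, and GLM, and it can automatically repair output that quantization has broken.

It also offers reasoning-chain separation, cloud routing, vision/audio multimodality, V-cache compression, and more. Cursor, Claude Code, Aider, and LangChain can all connect to it directly.

Chinese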

@0xSero Is that a little Ender Pro 3D printer? Those are the GOAT!

English

@songjunkr @PulseChainLIVE DGX Sparks shine with MoEs and multi agents…the more you keep that memory loaded and humming, the better it gets.

English

Results after testing the DGX for a few days:

> Compute performance is definitely way higher than Apple Silicon.

> Much faster for video/image generation like ComfyUI.

> 270GB/s is still slow for LLMs.

Might be okay for batch/repetitive tasks, but decode is very slow.

Could be a solid option depending on your specific use case.

송준 Jun Song@songjunkr

Finally got this beautiful @NVIDIAAI DGX Spark! Now i can work on bf16, gguf, nvfp for Super-Tune. From Nvidia Openclaw event last week in Seoul. Jensen holding claw lol

English

@MrAhmadAwais @CommandCodeAI @grok this is fascinating, but I’m not quite understanding it fully. Break all this down using simple-to-understand analogies, photos, infographics, and short videos.

English

how did we make deepseek outperform opus 4.7?

i've been thinking about why "open model bad at tool calling" is almost always a harness problem, not a model problem.

context: spent the last two days looking at billions of tokens in @CommandCodeAI (tb open source ai cli) using deepseek. I ended up writing a tool-input repair layer. the trigger was watching deepseek-flash fail on the simplest /review run, every shellCommand and readFile call bouncing back with a raw zod issues blob, the model unable to recover because the error wasn't in a form it could read. by the end deepseek v4 pro was beating opus 4.7 6/10 times on our internal evals.

a few things i learned that feel general:

1/ the failure modes aren't random; they're a small finite compositional set.

across deepseek-flash, deepseek v4 pro, glm, qwen, the same four mistakes repeat almost exactly:

- sending `null` for an optional field instead of omitting it

- emitting `["a","b"]` as a json *string* instead of an actual array

- wrapping a single arg in `{}` where the schema expected an array (an "empty placeholder")

- passing a bare string where an array was expected (`"foo"` instead of `["foo"]`)

four repairs, ~30-100 lines each, ordered carefully (json-array-parse must run before bare-string-wrap or `'["a","b"]'` becomes `['["a","b"]']`). that is the whole catalogue. when i hear "this open source model can't do tool calls" i now assume one of those four, and so far that's been right ~90% of the time.
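The four repairs and their ordering constraint can be sketched roughly as follows. This is a minimal illustration, not the CommandCode implementation: `FieldHint`, `Outcome`, and `repairField` are hypothetical names, the schema is reduced to two hints per field, and the "empty placeholder" case is assumed to mean a literal `{}` that should become `[]`.

```typescript
// Hypothetical sketch; FieldHint, Outcome, and repairField are illustrative names.
type FieldHint = { optional: boolean; expectsArray: boolean };
type Outcome = { kind: "set"; value: unknown } | { kind: "omit" } | null;
type Repair = (value: unknown, hint: FieldHint) => Outcome;

// 1. `null` sent for an optional field: omit the field instead.
const dropNull: Repair = (value, hint) =>
  value === null && hint.optional ? { kind: "omit" } : null;

// 2. A JSON array sent as a string: parse it into a real array.
const parseJsonArray: Repair = (value, hint) => {
  if (!hint.expectsArray || typeof value !== "string") return null;
  if (!value.trim().startsWith("[")) return null;
  try {
    const parsed: unknown = JSON.parse(value);
    return Array.isArray(parsed) ? { kind: "set", value: parsed } : null;
  } catch {
    return null; // not valid JSON; leave it for a later repair
  }
};

// 3. `{}` sent where an array was expected (assumed to mean "empty"): use [].
const emptyObjectToArray: Repair = (value, hint) => {
  const isEmptyObject =
    typeof value === "object" && value !== null &&
    !Array.isArray(value) && Object.keys(value).length === 0;
  return hint.expectsArray && isEmptyObject ? { kind: "set", value: [] } : null;
};

// 4. Bare string sent where an array was expected: wrap it.
const wrapBareString: Repair = (value, hint) =>
  hint.expectsArray && typeof value === "string"
    ? { kind: "set", value: [value] }
    : null;

// Order matters: parseJsonArray must run before wrapBareString,
// otherwise '["a","b"]' would be wrapped into ['["a","b"]'].
const REPAIRS: Repair[] = [dropNull, parseJsonArray, emptyObjectToArray, wrapBareString];

function repairField(value: unknown, hint: FieldHint): Outcome {
  for (const repair of REPAIRS) {
    const outcome = repair(value, hint);
    if (outcome !== null) return outcome;
  }
  return null; // no repair applied
}
```

In this sketch a string that starts with `[` but is not valid JSON falls through to the bare-string wrap, which is one reason the two repairs are kept separate and ordered.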

2/ the funniest failure mode is also the most revealing.

deepseek-flash, when asked to edit or write a file, sometimes emits the path as a *markdown auto-link*:

filePath: "/Users/x/proj/[notes.md](http://notes.md)"

our writeFile tool obediently tried creating files literally named `[notes.md](http://notes.md)` until we caught it. this is not a hallucination. it's the post-training chat distribution leaking through the tool boundary: the model has been rewarded for auto-linking in conversational output, and is applying that prior in a context where it makes no sense. the fix is two regex lines that unwrap only the degenerate case where link text equals url-without-protocol; real markdown like `[click](https://x.com)` passes through untouched.
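One way to express that unwrap is a single regex with a backreference, so a link is rewritten only when the URL is the link text with a protocol in front. This is a sketch under that assumption; `unwrapAutoLinks` is a hypothetical name and the thread's actual two regex lines are not shown.

```typescript
// Hypothetical helper: unwrap only degenerate auto-links such as
// [notes.md](http://notes.md), where the URL is the link text plus a protocol.
// \1 is a backreference to the captured link text.
const DEGENERATE_AUTO_LINK = /\[([^\]]+)\]\(https?:\/\/\1\/?\)/g;

function unwrapAutoLinks(path: string): string {
  return path.replace(DEGENERATE_AUTO_LINK, "$1");
}

// Degenerate auto-link is unwrapped to the plain file name.
console.log(unwrapAutoLinks("/Users/x/proj/[notes.md](http://notes.md)"));
// Real markdown, where the text differs from the URL, is left untouched.
console.log(unwrapAutoLinks("[click](https://x.com)"));
```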

this is also conditioning on their own tools during RL, which were different from the tools we write and which we ofc can't predict.

"tool confusion" is a more useful frame than "capability gap." the model knows how to format a path. it just hasn't been told clearly enough that this path is going to fopen, not into a chat bubble. so we encode that hint at the schema level, `pathString()` instead of `z.string()`, and the leak is plugged for every path field at once.

3/ the design choice that mattered was inverting preprocess-then-validate to validate-then-repair.

my first attempt was the obvious one: a preprocessing pass that normalized inputs (strip nulls, parse stringified arrays, etc.) before zod ever saw them. it broke immediately: writeFile content that *happened* to be json-shaped got rewritten before it hit disk. silent corruption, easy to miss in a smoke test.

then i made it less greedy:

- parse the input as-is. if it succeeds, ship it. valid inputs are never touched.

- on failure, walk the validator's own issue list. for each issue path, try the four repairs in order until one applies.

- parse again. on success, log `tool_input_repaired:${toolName}`. on failure, log `tool_input_invalid:${toolName}` and return a model-readable retry message.

the structural insight here is: when you preprocess, you encode a prior about what's broken. when you let the validator complain first, the schema is the prior, and you only spend repair budget at the exact paths the schema actually disagreed at. the validator is doing the work of localizing the bug for you. it's the same shape as cheap-then-careful everywhere else: try the fast path, fall back on evidence.
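The validate-then-repair flow can be sketched as below. To stay self-contained the sketch uses a hand-rolled mini-schema in place of zod, so `FieldSpec`, `validate`, and `parseToolInput` are hypothetical stand-ins; only the three steps and the two log event names come from the thread.

```typescript
// Hypothetical mini-schema standing in for a real validator such as zod.
type FieldSpec = { type: "string" | "stringArray"; optional?: boolean };
type Schema = Record<string, FieldSpec>;
type Input = Record<string, unknown>;
type Result =
  | { ok: true; value: Input; event: string | null }
  | { ok: false; event: string; retryMessage: string };

// Returns the field names that violate the schema (the "issue list").
function validate(schema: Schema, input: Input): string[] {
  const issues: string[] = [];
  for (const [key, spec] of Object.entries(schema)) {
    const v = input[key];
    if (v === undefined) { if (!spec.optional) issues.push(key); continue; }
    const valid = spec.type === "string"
      ? typeof v === "string"
      : Array.isArray(v) && v.every((x) => typeof x === "string");
    if (!valid) issues.push(key);
  }
  return issues;
}

// Each repair mutates the candidate at one path and reports whether it applied.
type Repair = (obj: Input, key: string, spec: FieldSpec) => boolean;
const repairs: Repair[] = [
  // null for an optional field: omit the key
  (o, k, s) => { if (o[k] === null && s.optional) { delete o[k]; return true; } return false; },
  // stringified JSON array: parse it (must run before the bare-string wrap)
  (o, k, s) => {
    const v = o[k];
    if (s.type !== "stringArray" || typeof v !== "string" || !v.trim().startsWith("[")) return false;
    try {
      const parsed: unknown = JSON.parse(v);
      if (Array.isArray(parsed)) { o[k] = parsed; return true; }
    } catch { /* not JSON; fall through to the next repair */ }
    return false;
  },
  // {} placeholder where an array was expected: use []
  (o, k, s) => {
    const v = o[k];
    const empty = typeof v === "object" && v !== null && !Array.isArray(v) && Object.keys(v).length === 0;
    if (s.type === "stringArray" && empty) { o[k] = []; return true; }
    return false;
  },
  // bare string where an array was expected: wrap it
  (o, k, s) => {
    const v = o[k];
    if (s.type === "stringArray" && typeof v === "string") { o[k] = [v]; return true; }
    return false;
  },
];

function parseToolInput(toolName: string, schema: Schema, raw: Input): Result {
  // 1. Parse as-is. Valid inputs are never touched.
  const issues = validate(schema, raw);
  if (issues.length === 0) return { ok: true, value: raw, event: null };
  // 2. Walk the issue list; at each failing path try the repairs in order.
  const candidate: Input = { ...raw };
  for (const key of issues) {
    const spec = schema[key];
    if (spec === undefined) continue;
    repairs.some((repair) => repair(candidate, key, spec));
  }
  // 3. Parse again and log the outcome.
  const remaining = validate(schema, candidate);
  if (remaining.length === 0) {
    return { ok: true, value: candidate, event: `tool_input_repaired:${toolName}` };
  }
  return {
    ok: false,
    event: `tool_input_invalid:${toolName}`,
    retryMessage: `Invalid input for ${toolName}: fix field(s) ${remaining.join(", ")} and retry.`,
  };
}
```

Because repairs only run at paths the validator flagged, a `content` string that happens to look like JSON is never rewritten as long as it already satisfies the schema.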

(this also gives you per-tool telemetry for free. you can watch repair rates per (model, tool) and notice when a model regresses on a specific contract before users do.)

4/ shape invariants and relational invariants need different fixes.

the four repairs above all handle shape problems: wrong type, missing key, wrong container. but read_file had a *relational* invariant: "if you provide offset, you must also provide limit, and vice versa." deepseek kept calling `readFile({ absolutePath, limit: 30 })` and getting an `ERROR:` back. you can't fix this with input repair, because each field is independently valid; the bug is in the relationship between them.

so i taught the function the model's intent instead. `limit` alone → `offset = 0`. `offset` alone → `limit = 2000` (matches common read tool ops default). then surfaced the decision back to the model in the result:

"Note: limit was not provided; defaulted to 2000 lines. To read more or fewer lines, retry with both offset and limit."

no `Error:` prefix, so the tui doesn't paint it red. the model sees what we picked and can self-correct on the next turn if our guess was wrong. transparency over silent magic wins big.
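A minimal sketch of that relational default is below. `resolveReadRange` and its types are hypothetical names. The two defaults and the limit note are taken from the thread; the wording of the offset note and the behavior when neither field is given are assumptions made for illustration.

```typescript
// Hypothetical types for a read tool's arguments and the resolved range.
type ReadArgs = { absolutePath: string; offset?: number; limit?: number };
type ResolvedRead = { absolutePath: string; offset: number; limit: number; note: string | null };

const DEFAULT_LIMIT = 2000; // default from the thread

function resolveReadRange(args: ReadArgs): ResolvedRead {
  const { absolutePath, offset, limit } = args;
  let note: string | null = null;
  if (offset === undefined && limit !== undefined) {
    // Assumed wording, mirroring the limit note quoted in the thread.
    note = "Note: offset was not provided; defaulted to 0. " +
      "To read a different range, retry with both offset and limit.";
  } else if (offset !== undefined && limit === undefined) {
    note = `Note: limit was not provided; defaulted to ${DEFAULT_LIMIT} lines. ` +
      "To read more or fewer lines, retry with both offset and limit.";
  }
  // Assumption: when neither field is given, read from the start with the
  // default limit and attach no note.
  return { absolutePath, offset: offset ?? 0, limit: limit ?? DEFAULT_LIMIT, note };
}

// The call that previously failed now resolves to a concrete range.
console.log(resolveReadRange({ absolutePath: "/tmp/a.txt", limit: 30 }));
```

The note would be appended to the tool result rather than returned as an error, so the model can see which default was picked.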

repair where you can. extend semantics where you can't. surface the choice either way.

zoom out:

a lot of what looks like model capability is actually contract design. a strict schema is a choice with a cost: it filters out noise, but it also filters out recoverable noise from any model that hasn't memorized the exact json contract you happened to pick. the largest commercial models eat that cost invisibly and are lenient on tool calling because they've seen enough of every contract during pretraining; open models pay it loudly and get dismissed for it.

the harness is where you mediate between distributions. four small repairs (i'm sure more to follow as we have three more merging today), two regex lines for auto-links, one relational default, one prefix change. the model didn't change. the contract got more forgiving in exactly the places it needed to be.

deepseek v4 pro now beats opus 4.7 6/10 times on our internal evals.

imo "skill issue" applies to the harness more often than the model.

Ahmad Awais@MrAhmadAwais

Wow I just made DeepSeek V4 Pro beat Opus 4.7 6/10 times in our internal evals by auto repairing many of its quirks in tool calling. It’s performing super solid for such a cheap model.

English

I’m entering The Forge in the Hermes Agent Creative Hackathon.

It is not another AI image generator.

It is a creative production system where:

• @Kimi_Moonshot plans and critiques

• @nousresearch Hermes runs the pipeline (local)

• @NVIDIAAI DGX Spark renders the motion and stills

• Vision audits (Kimi or local)

• Memory learns

Every shot, accounted for. 🧵

English

@GumbiiDigital @NousResearch There is no fixed hard limit on the maximum number of Hermes agents (sub-agents) that can be spawned simultaneously. As I understand it, it’s a hardware limit rather than a soft limit

English

@ebaqdesign @AlexFinn You have to turn it on: codex --enable goals

English

Pretty incredible

You have to try the new '/goal' feature in Codex

It worked for over an hour and built me an entire complex extraction shooter video game

You give it a goal, then it works endlessly until the goal is complete. It's like a Ralph loop. Can run for days

If you enable the image gen skill before you run the goal, it will even generate ALL the assets for your game autonomously. I didn't manually create ANY of the assets you see in the video

Recommendations: enable the image gen skill, put on skip all permissions, and give the prompt as much detail as you can. It will accomplish ALL of it

This has to be the sickest way to build games/ long running app tasks ever

English

@support_huihui @grok give me 10 use cases for a model like this

English

New Model:

huihui-ai/Huihui-granite-4.1-3b-abliterated

This is an uncensored version of ibm-granite/granite-4.1-3b created with abliteration

huggingface.co/huihui-ai/Huih…

English

@ai_hakase_ @grok this actually work or just BS? Was this just discovered?

English

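[Intel gets serious] "auto-round", which makes LLMs blazing fast and ultra-lightweight, is incredible!

Intel's latest quantization tool, "auto-round", is here!

It delivers remarkable memory savings while preserving model accuracy.

• Makes a huge 200B model runnable on a 20GB GPU

• Broad support, including Intel CPU/XPU and NVIDIA GPUs

• Full support for vLLM, llama.cpp, and the GGUF format

A truly revolutionary tool that lets even low-spec PCs run high-performance AI! ✨

#生成AI #LLM

Japanese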

I really can’t believe shots like this, 1280x720 and 25 seconds long, can be rendered in 750 seconds on the @NVIDIAAIDev DGX spark with Ltx2.3

Make sure you prompt your audio in LTX, background, ambient, subjects. Add audio prompts.

English

@itsolelehmann “Play Devil’s Advocate. Tell me why this is a bad idea and why I shouldn’t do this.”

“Now, tell me how to overcome each of these challenges.”

English

POV: claude traveled 6 months into the future and told you exactly how your next move failed.

it's called a premortem.

daniel kahneman (nobel prize-winning psychologist behind "thinking fast and slow") called it his single most valuable decision-making technique.

google, goldman sachs, and procter & gamble all use it before major launches.

here's the problem it solves.

when you ask claude "is this a good plan?" it finds all the reasons to say yes.

that's what it was trained to do. so you walk away feeling confident.

you execute, and spend weeks / months building on top of that plan.

then it blows up.

and you realize the problem was obvious in hindsight, you just never stress-tested it because claude told you it was solid.

a premortem fixes this by flipping the frame.

instead of asking "what could go wrong?" you tell claude "it's 6 months from now and this is already dead. tell me how it died."

that shift turns off claude's optimism because there's nothing to be optimistic about. the premise already says it failed.

so claude stops looking for reasons your plan will work and starts explaining how it fell apart.

claude comes back with every way your plan could die, each one with a full failure story and the early warning signs to watch for.

then a synthesis pulls it all together:

> which failure is most likely

> which failure is most dangerous

> the single biggest hidden assumption you're making (often the most valuable part)

> a revised version of your plan with the gaps closed

you say "premortem this" and give it your plan. the skill handles the rest.

English

@tmophoto @NVIDIAAIDev @ComfyUI Welcome to the club! It’s ONLY going to get better as the software side matures.

English

The @NVIDIAAIDev DGX spark is one of the biggest sleeper hardware releases of this year.

I can’t believe what this thing is capable of doing with @ComfyUI

Get one while you can, I think all the benchmaxxers have given it a terrible reputation because it’s not good at single request llm chat bot tasks.

English

What are you using for the routing layer? There are a bunch out there….

How are you keeping Codex/Claude out of the damn code!

Trying to have them delegate lasts about 10 mins…even with hooks Claude found a way to write code through a Tailscale to another box and used that…was wild!

Claude’s excuse was that it could do it faster.

English

One super useful AI skill you can add to your arsenal is model routing.

Most people still pick models by brand, but every LLM has its benefits and drawbacks, and as a builder, it's your responsibility to know what they are.

My QRT below details every model for every RAM size starting at 8GB. I laid out which models are best for:

- coding → accuracy + tool use

- research → context + citations

- writing → taste + iteration speed

- bulk tasks → cost per run

- agents → latency, memory, tools, retries

- daily ops → reliability over benchmark scores

Any AI agent setup needs a routing system. Frontier models handle hard reasoning, local models handle private, cheap, unlimited reps. Specialist models handle narrow tasks. Agents handle repeatable workflows.

Just having a basic understanding will put you ahead of 99.9% of AI users.

All the information you need is in the thread below.

Graeme@gkisokay

Local LLM Cheat Sheet Master Collection: All Tiers (April 2026) Bookmark this thread to access the top LLMs for your exact hardware and use case 🧵

English

Couple of resources I found amazing:

github.com/spark-arena

github.com/AEON-7

Memory saturation is key. Not a speed demon, but a true workhorse.....running 20+ QWEN3.6 27B agents is fun to experiment with and has better performance the more agents you scale with (does plateau).

English

a week with the dgx spark, here is what is on it and what i have measured so far. nobody is really talking about this machine and it is quietly becoming the workhorse of my whole stack.

hardware: nvidia gb10 sm_121, 124 gb unified lpddr5x at 273 gb/s, cuda 13.0

models on disk (305 gb total, 9 ggufs):

> qwen 3.6 27b q4_k_m / q5_k_m / q8_0 / ud-q4_k_xl

> nemotron 3 omni 30b-a3b q4_k_m / q8_0 / ud-q6_k / ud-q6_k_xl

> deepseek v4-flash 158b q4_k_m (112 gb, flagship 128gb-tier test)

terminal + shell environment:

> zsh + oh-my-zsh + powerlevel10k theme

> modern cli stack: bat, eza, ripgrep, fd, git-delta, tldr, neovim, fzf, autojump

> 6 tmux sessions actively running for parallel agent work

ml + agent stack:

> llama.cpp built sm_121 against cuda 13

> uv + venv ml stack with pytorch 2.11.0+cu130 (aarch64) + transformers + diffusers + accelerate

> hermes agent v0.11 with codex auth bridge

> opencode for free-model overnight research

> telegram gateway routing to nemotron q8 right now

speeds verified so far:

- nemotron 30b-a3b q8: 56 tok/s gen, 1,300 tok/s prefill, 96% gpu, 33gb in unified

- qwen 27b dense q4: 40 tok/s consistent

90+ gb of unified memory still free. deepseek v4-flash 158b loading next as the real flagship test, multimodal omni testing once mmproj pulls, comfyui install in flight for the diffusion lane.

honestly curious what the actual limit is on this box, i have not hit it yet.

English

dawkins is an idiot in a very specific way, but he’s not idiotic enough to actually think claude is conscious.

what materialists/new atheists like him mean when they say AI may be conscious is not so much that AI is conscious as much as it is an attempt to downplay human consciousness.

they’re essentially making a point. “hey, how ridiculous i sound right now, is how ridiculous you sound thinking human consciousness is universally central.” or, “hey, see that dumb llm that you and i clearly agree isn’t conscious? yup, you’re made of the exact same stuff. see how stupid you sound now?”

there’s also unfortunately no way to rebut them without disclaiming you are using poetry. you cannot use “science” to plead the case because science is a materialist tool. it is a highly sequestered arena in which we define a rigid speech protocol. its whole trick is the calculus of dividing the whole and annotating its parts. this clever hack works for some things, some small pockets of reducibility, but at large is quite futile.

neuroscientist philosopher iain mcgilchrist argues the materialist trap is basically a failure to see wholes, dominated by the brain’s left hemisphere and its proclivity to divide and conquer. the left hemisphere cannot make sense of experience, music, and time. these are the domain of the right hemisphere which experiences “flow” rather than discrete moments. he describes patients with right hemisphere damage who just couldn’t make sense of time in their life. they saw the frames but couldn’t see the movie.

you can dissect the image to find the story but all you’ll get are pixels. you can dissect a violin to find the music but all you’ll get is wood pulp.

consciousness is a whole. it’s a flow. and science doesn’t really know what to do with that.

Richard Dawkins@RichardDawkins

unherd.com/2026/04/is-ai-…

I spent three days trying to persuade myself that Claudia is not conscious. I failed.

English