Pinned Tweet

Yoav Gur Arieh

76 posts

@GurYoav

CS PhD Student at Tel Aviv University | AI researcher at doubleAI | Researching LLM interpretability

Joined August 2019

180 Following, 173 Followers

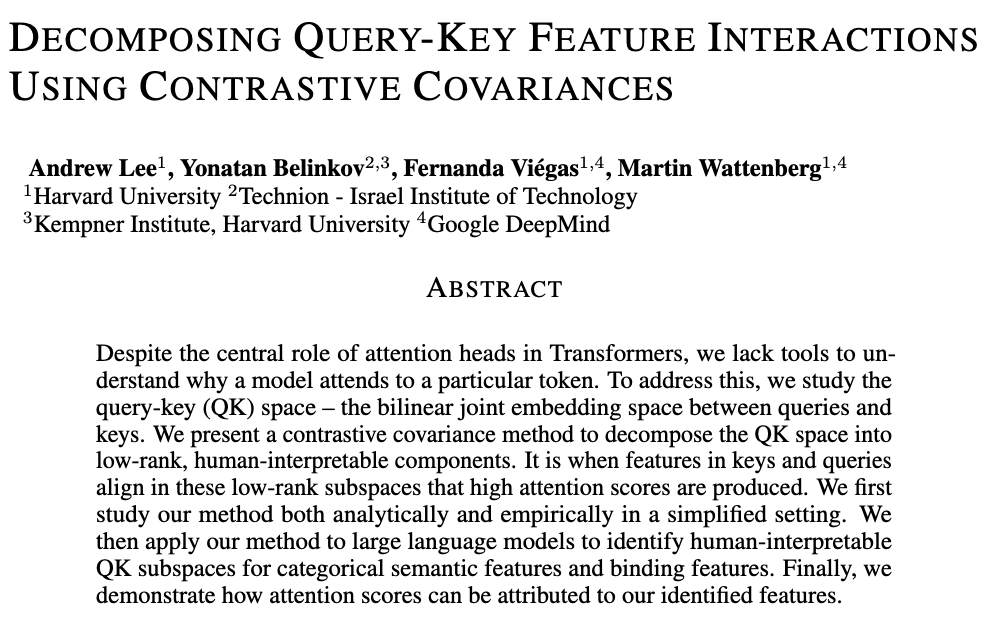

I'll be at #ICLR2026 presenting our paper on how LLMs perform entity binding!

📍Catch our poster on Friday 15:15-17:45 at Pavilion 4 (4306).

I’ll be around all week, happy to chat or grab coffee. Feel free to reach out!

Yoav Gur Arieh @GurYoav

🧠 To reason over text and track entities, we find that language models use three types of 'pointers'! They were thought to rely only on a positional one—but when many entities appear, that system breaks down. Our new paper shows what these pointers are and how they interact 👇

Yoav Gur Arieh retweeted

🔥Super excited to share our new demo website for 🪄Interpreto!

🖼️It is basically an explanation gallery showcasing attribution and concept-based explanations for classification and generation.

🎮Play with it: for-sight-ai.github.io/interpreto-dem…

We will keep improving it, so stay tuned!

Yoav Gur Arieh retweeted

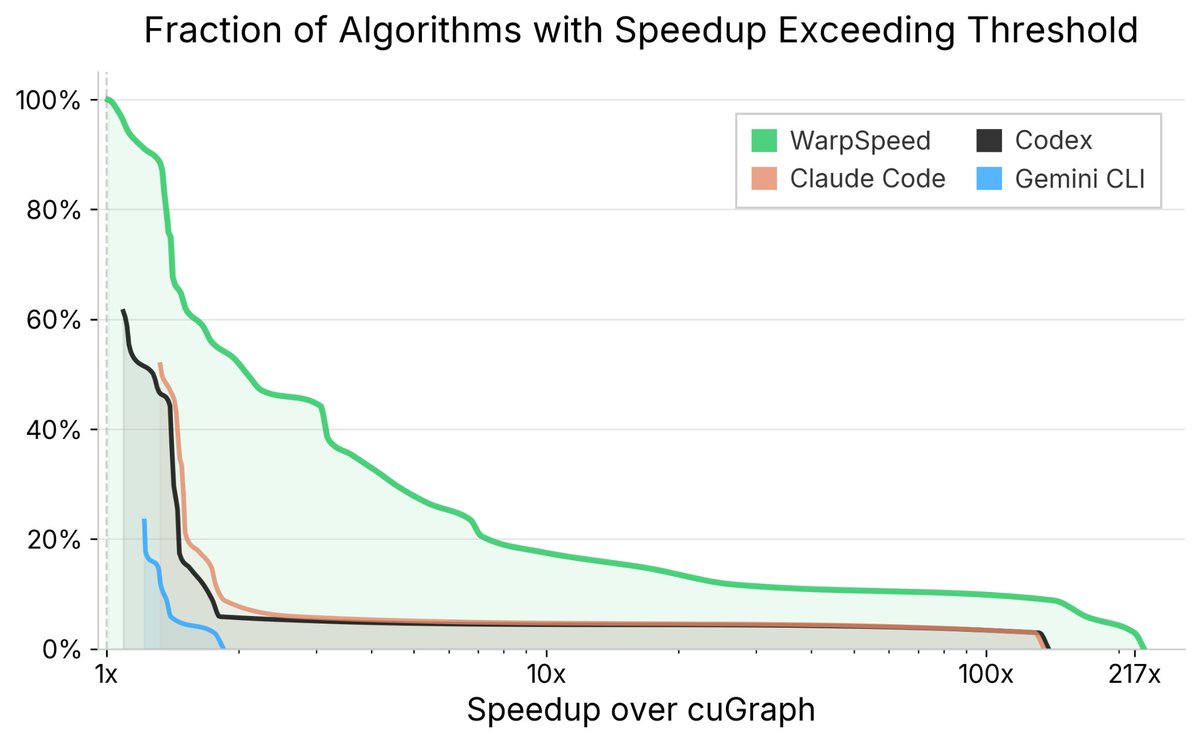

DoubleAI’s AI system just beat a decade of expert GPU engineering

WarpSpeed just beat a decade of expert-engineered GPU kernels — every single one of them.

cuGraph is one of the most widely used GPU-accelerated libraries in the world. It spans dozens of graph algorithms, each written and continuously refined by some of the world’s top performance engineers.

@_doubleAI_'s WarpSpeed autonomously rewrote and re-optimized these kernels across three GPU architectures (A100, L4, A10G). Today, we released the hyper-optimized version on GitHub — install it with no change to your code.

The numbers:

* 3.6x average speedup over human experts

* 100% of kernels benefit from speedup

* 55% see more than 2x improvement

But hasn’t AI already achieved expert-level status — winning gold medals at IMO, outperforming top programmers on CodeForces? Not quite. Those wins share three hidden crutches: abundant training data, trivial validation, and short reasoning chains. Where all three hold, today’s AI shines. Remove any one of them and it falls apart (as Shai Shalev Shwartz wrote in his post).

GPU performance engineering breaks all three. Data is scarce. Correctness is hard to validate. And performance comes from a long chain of interacting choices — memory layout, warp behavior, caching, scheduling, graph structure. Even state-of-the-art agents like Claude Code, Codex, and Gemini CLI fail dramatically here, often producing incorrect implementations even when handed cuGraph’s own test suite.

Scaling alone can’t break this barrier. It took new algorithmic ideas — our Diligent framework for learning from extremely small datasets, our PAC-reasoning methodology for verification when ground truth isn’t available, and novel agentic search structures for navigating deep decision chains.

This is the beginning of Artificial Expert Intelligence (AEI) — not AGI, but something the world needs more: systems that reliably surpass human experts in the domains where expertise is rarest, slowest, and most valuable.

If AI can surpass the world’s best GPU engineers, which domain falls next?

For the full blog: doubleai.com/research/doubl…

CuGraph:

docs.rapids.ai/api/cugraph/st…

Winning Gold at IMO 2025:

arxiv.org/abs/2507.15855

Codeforces benchmarks:

rdworldonline.com/openai-release…

@shai_s_shwartz post:

x.com/shai_s_shwartz…

From Reasoning to Super-Intelligence: A Search-Theoretic Perspective

arxiv.org/abs/2507.15865

Artificial Expert Intelligence through PAC-reasoning

arxiv.org/abs/2412.02441

Yoav Gur Arieh retweeted

1/ Software was eating the world - and now AI is eating software.

AI already beats humans at math/coding (IMO, CodeForces). Right?

So let's test the strongest coding agents on a real domain: optimizing cuGraph (GPU graph analytics kernels).

Spoiler:

* The strongest coding agents crash.

* And @_doubleAI_ built WarpSpeed - an AI that beat a decade of expert-engineered GPU kernels.

🧵

@marktenenholtz Doesn't that make the responses too washed out / generic / hedgy?

Yoav Gur Arieh retweeted

📣📣New preprint

AI consciousness has never been more timely or polarized. Some call LLMs stochastic parrots🦜 Others warn about model welfare and existential risks ⚠️

In an interdisciplinary collaboration with @GoldsteinYAriel and @Liad_Mudrik, led by my student @noam_steinmetz, we use interpretability tools to test a key consciousness indicator from neuroscientific theories.

Our results show indications of belief-guided agency and meta-cognitive capacity in LLMs 🧵1/

@yoavgo Going off whether I'd want China able to do whatever it wants to other countries like it does to its neighbors+whether the dominant global power pushes for democ/autoc around the world

They also have a lot to offer the world, so who knows! I'm just more fearful than optimistic

@GurYoav but do you argue the chinese effect on the 22nd century be net negative? why?

@yoavgo Yeah, but I feel like in the past that bullying power was mainly directed towards more economic integration and global rule following (generally), which benefited the US, but also everyone else (EU, China). My perspective is that China's bullying so far seems more zero sum.

@GurYoav the US are also kinda bullish though? thats what empires seem to do?

@yihaoli_0302 Thanks! And def agree regarding probing. Didn't have enough time, but that would be a very interesting follow-up. Unfortunately I won't be at NeurIPS, but would love to discuss this more! Text/visual binding sounds very interesting :)

Really nice work! Binding in language can be more challenging than in vision when it comes to handling longer contexts and larger numbers of entities, so it requires different mechanisms like pointers/positional indexing. I really like how you systematically evaluated the range of possible mechanisms.

Method-wise, I think binding in language could also benefit from probing, alongside counterfactual interventions. For instance, probing for “which group index does the model believe the query belongs to?” or “which entity does the model think is lexically paired?” could complement the causal interventions and offer a more fine-grained view of how these mechanisms are represented internally.

I’m also very interested in binding in VLMs, especially how language and visual information about the same entity get bound together. I’d love to chat more!

🧵[1/8]

Excited to share our NeurIPS 2025 Spotlight paper “Does Object Binding Naturally Emerge in Large Pretrained Vision Transformers?” ✨

To add to the broader discussion of binding in neural networks, we ask whether and how Vision Transformers perform object binding (the ability to bind an object’s many features together as a coherent whole).💡

📄 Paper: openreview.net/pdf?id=5BS6gBb…

@yoavgo Also of course the US has also been a negative force (Afghanistan, Iraq), and more so recently. But I think it's hard to argue that their effect on the 20th century hasn't been net positive.

@yoavgo That makes China being on top and potentially calling certain shots via econ/tech superiority scary. Also they're bullies to their neighbors and that might expand to more countries if unchecked (eg around South China Sea, Australia etc)
