HPC-AI Lab

@HPCAILab

HPC-AI Lab @NUSingapore. High Performance Computing, ML Systems, AI Applications (e.g. CV, NLP, Biology)

🚀 Serving MoE models made EASY and CHEAP! We built EaaS: think of experts not as layers in a model, but as microservices you can spin up, replicate, or kill independently. No all-to-all. No static process groups. No system-wide crash when one GPU dies. Just:
⚙️ Clients (attention) ↔ Servers (experts)
🧠 Stateless → easy replication
📡 Asymmetric async P2P (no CPU involved!)
🧱 Fine-grained scaling without restarts, saving real 💰!
Monolithic inference is over. Serving is becoming cloud-native. Preprint here → arxiv.org/abs/2509.17863

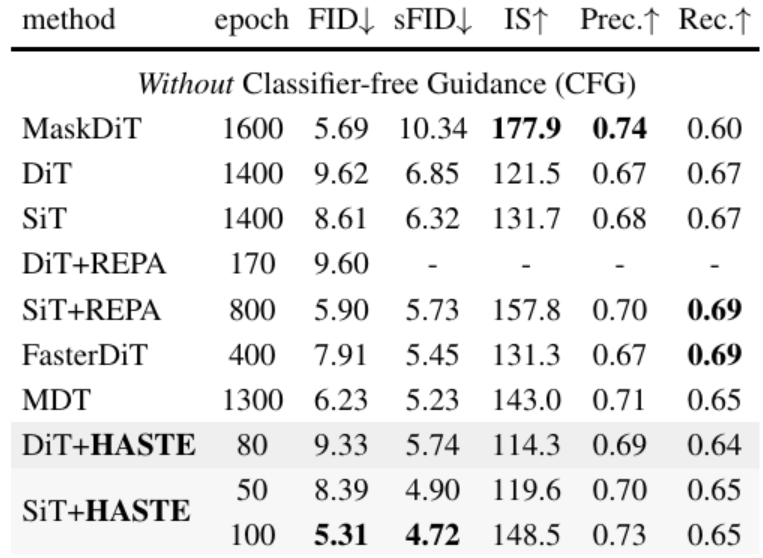
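To make the "experts as microservices" idea concrete, here is a toy, single-process sketch in Python. It is not the EaaS implementation: the names `ExpertReplica`, `ExpertPool`, and `moe_client` are hypothetical, each expert's weights are reduced to a single `scale` factor, and the network layer (the async P2P transport) is replaced by plain function calls. It only illustrates why stateless experts are easy to replicate: any live replica returns the same answer, so the client can fail over without a restart.

```python
from dataclasses import dataclass, field


class ReplicaDown(Exception):
    """Raised when a (simulated) expert replica is unreachable."""


@dataclass
class ExpertReplica:
    # Hypothetical stand-in for one expert server process.
    expert_id: int
    scale: float  # toy substitute for the expert's weights
    alive: bool = True

    def forward(self, token):
        # Stateless: the output depends only on the input and fixed weights.
        if not self.alive:
            raise ReplicaDown(f"expert {self.expert_id} replica is down")
        return [self.scale * x for x in token]


@dataclass
class ExpertPool:
    # Maps expert_id -> list of interchangeable replicas.
    replicas: dict = field(default_factory=dict)

    def add_replica(self, expert_id, scale):
        rep = ExpertReplica(expert_id, scale)
        self.replicas.setdefault(expert_id, []).append(rep)
        return rep

    def call(self, expert_id, token):
        # Try replicas in order; skip any that are down.
        for rep in self.replicas.get(expert_id, []):
            try:
                return rep.forward(token)
            except ReplicaDown:
                continue
        raise ReplicaDown(f"no live replica for expert {expert_id}")


def moe_client(token, gate_scores, pool, top_k=2):
    # The "client" side: pick the top_k experts by gate score,
    # call each one, and mix the results with renormalized weights.
    chosen = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)[:top_k]
    total = sum(gate_scores[i] for i in chosen)
    out = [0.0] * len(token)
    for i in chosen:
        y = pool.call(i, token)
        w = gate_scores[i] / total
        out = [o + w * v for o, v in zip(out, y)]
    return out
```

In this sketch, adding a second replica of a hot expert with `pool.add_replica(...)` and then marking the first one as dead leaves the client's output unchanged, which is the property the post attributes to stateless experts. A real system would also need load balancing, batching, and an actual transport, none of which is modeled here.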

🚀 Towards efficient Diffusion Transformers! 😆 We are happy to introduce RAS, the first diffusion sampling strategy that lets sampling ratios vary by region, achieving up to 2x+ speedup! 🔌 Training-free, plug and play! 💪 Nice work with @MSFTResearch @YangYou1991 @Yif_Yang et al. 📜 Paper: huggingface.co/papers/2502.10… 📖 Blog: aka.ms/ras-dit ⌨️ Code: github.com/microsoft/RAS (1/5)