Hao Su Lab

144 posts

@HaoSuLabUCSD

Researching at the frontier of AI on topics of Computer Vision, Computer Graphics, Robotics, Embodied AI, and Reinforcement Learning @UCSanDiego @haosu_twitr

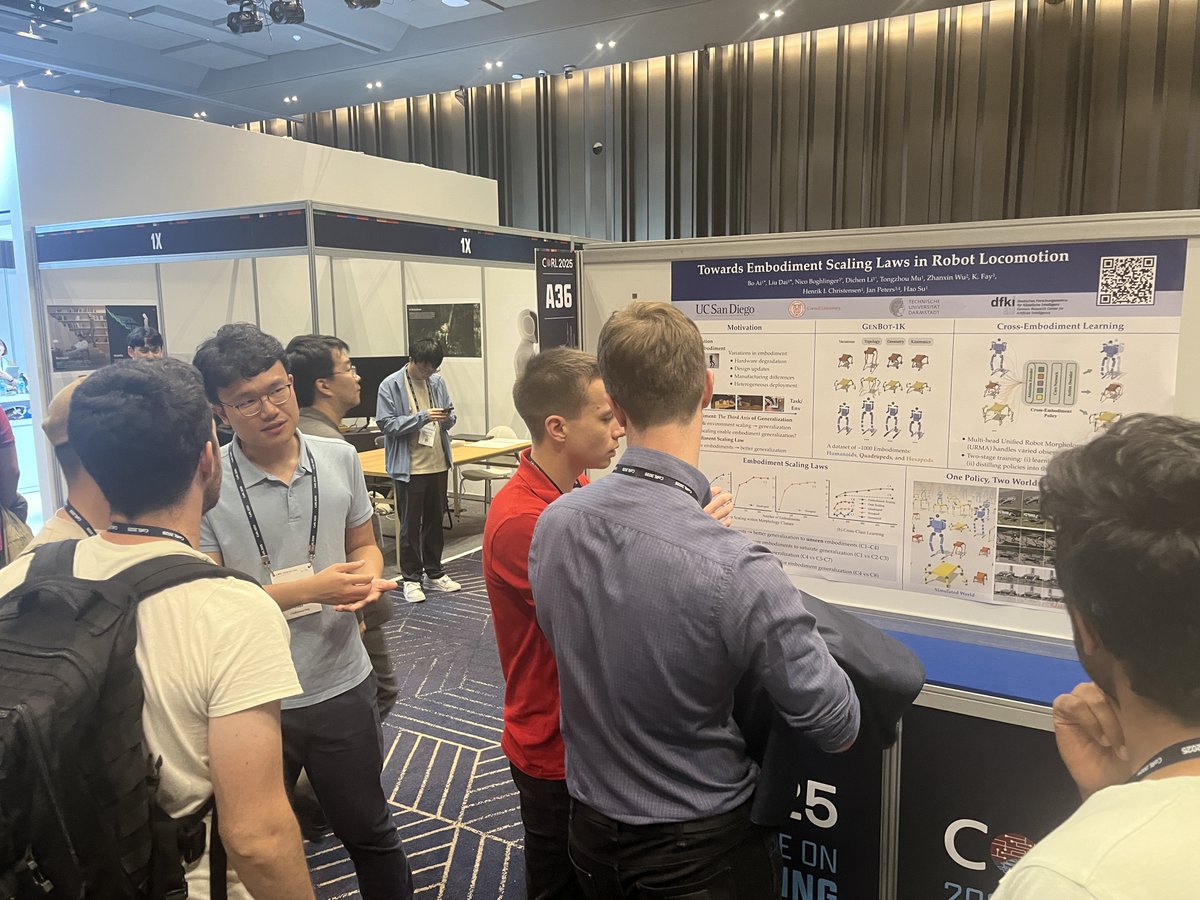

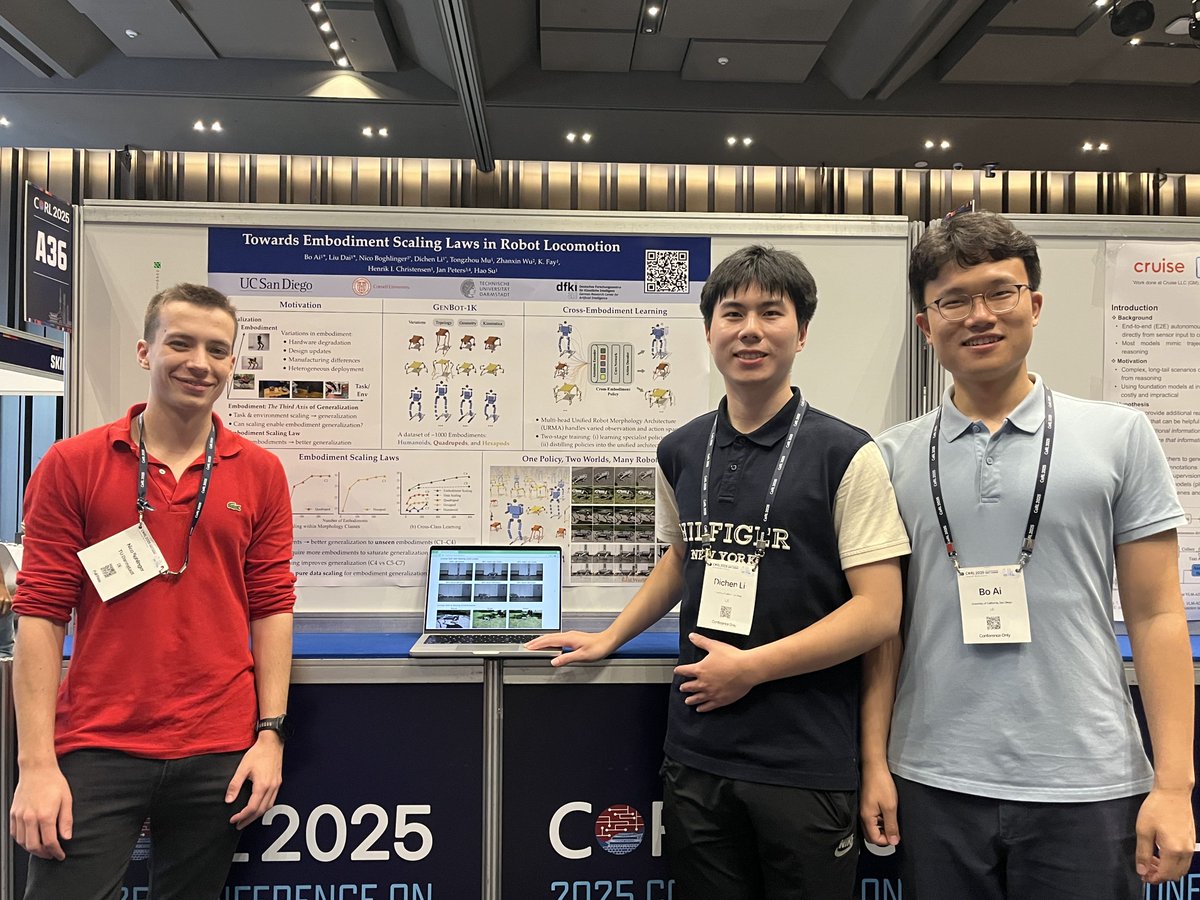

🧠 Can a single robot policy control many, even unseen, robot bodies? We scaled training to 1000+ embodiments and found: More training bodies → better generalization to unseen ones. We call it: Embodiment Scaling Laws. A new axis for scaling. 🔗 embodiment-scaling-laws.github.io 🧵👇

How to capture complex environment dynamics accurately with partial observations for world modeling? 🧐 Thrilled to share our recent work on world models for robotic manipulation - UniClothDiff: Diffusion Dynamics Models with Generative State Estimation for Cloth Manipulation, accepted to #CoRL2025 🎉. We target the challenging task of cloth manipulation, which involves partial observability due to severe self-occlusion, a high-dimensional state space, and highly non-linear dynamics. We enable robots, like humans, to imagine the state of cloth through a mental model 🧠 and foresee its future state during folding! 🔗 uniclothdiff.github.io 📜 arxiv.org/abs/2503.11999

Scalable, reproducible, and reliable robotic evaluation remains an open challenge, especially in the age of generalist robot foundation models. Can *simulation* effectively predict *real-world* robot policy performance & behavior? Presenting SIMPLER!👇 simpler-env.github.io

for anyone using ManiSkill 3 in their research / upcoming conference deadlines, we will have a v1 preprint out some time week so you can cite it! A v2 version with more experiments (mostly just running baselines + data collection atm) will come out later

Hand-eye calibration is critical for sim2real in robotics. We propose EasyHeC, a differentiable-rendering-based hand-eye calibration system that is highly accurate, automatic, & convenient, thus significantly reducing sim2real gap in object manipulation! ootts.github.io/easyhec/

LeRobot also features the Diffusion Policy, a powerful imitation learning algorithm, and TDMPC, a reinforcement learning method that includes a world model, continuously learning from its interactions with the environment. diffusion-policy.cs.columbia.edu yunhaifeng.com/FOWM

Want to obtain time-consistent dynamic mesh from monocular videos? Introducing: Dynamic Gaussians Mesh: Consistent Mesh Reconstruction from Monocular Videos liuisabella.com/DG-Mesh/ We reconstruct meshes with flexible topology change and build the corresp. across meshes. 🧵(1/n)

Check our MeshLRM 🌟, which achieves state-of-the-art mesh reconstruction from sparse-view inputs within 1 second! 🚀🚀🚀 Paper: arxiv.org/abs/2404.12385 Project page: sarahweiii.github.io/meshlrm/