Generative History

286 posts

@HistoryGPT

Exploring how historians can engage with Generative AI

Book review 📚 Artificial-intelligence models will supposedly take over the world, but AI innovator Luc Julia tells Nature that they’re little more than glorified pocket calculators go.nature.com/4lPpuPd

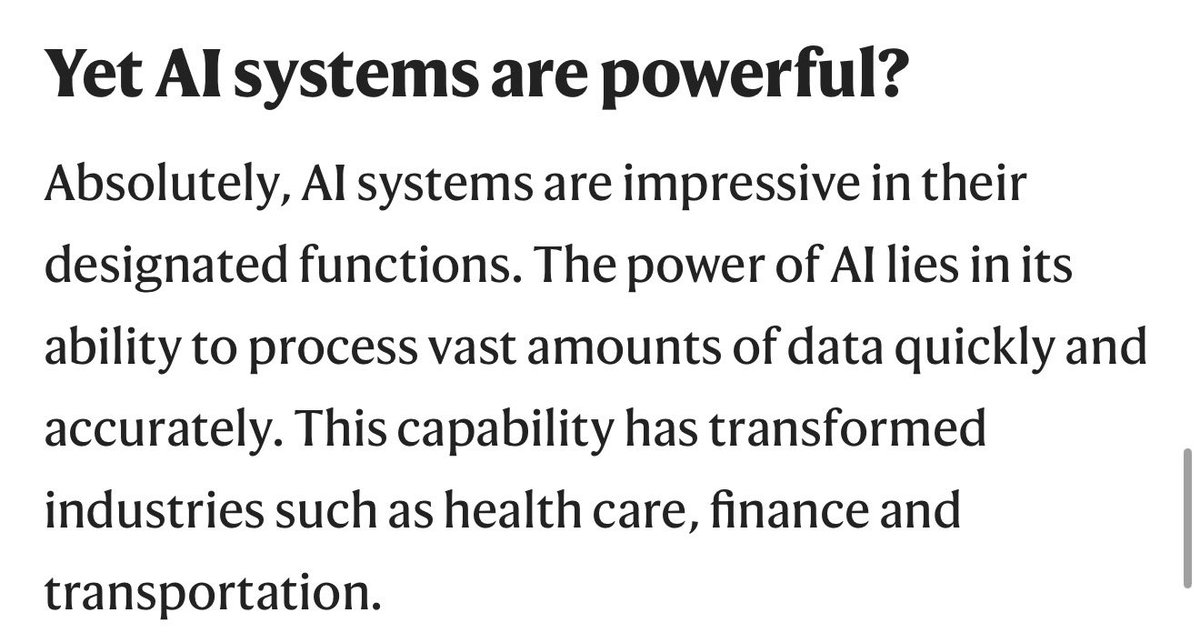

V2 has 100 questions and 70+ model variants tested (each model at each of its reasoning levels). Anthropic's models and Qwen 3.5 are the only ones scoring well above 60%.

If AI produces unprecedented levels of technological disruption on time scales that are an order of magnitude or two faster than anything in human history, it's going to be an unprecedented political fight. And FWIW, the timelines potentially line up with the 2028 U.S. election.

We estimate that Claude Opus 4.6 has a 50% time horizon of around 14.5 hours (95% CI: 6 to 98 hours) on software tasks. While this is the highest point estimate we've reported, this measurement is extremely noisy because our current task suite is nearly saturated.