Intelligent Robotics and Vision Lab @ UTDallas

@IRVLUTD

Welcome to the X page of the Intelligent Robotics and Vision Lab @UT_Dallas

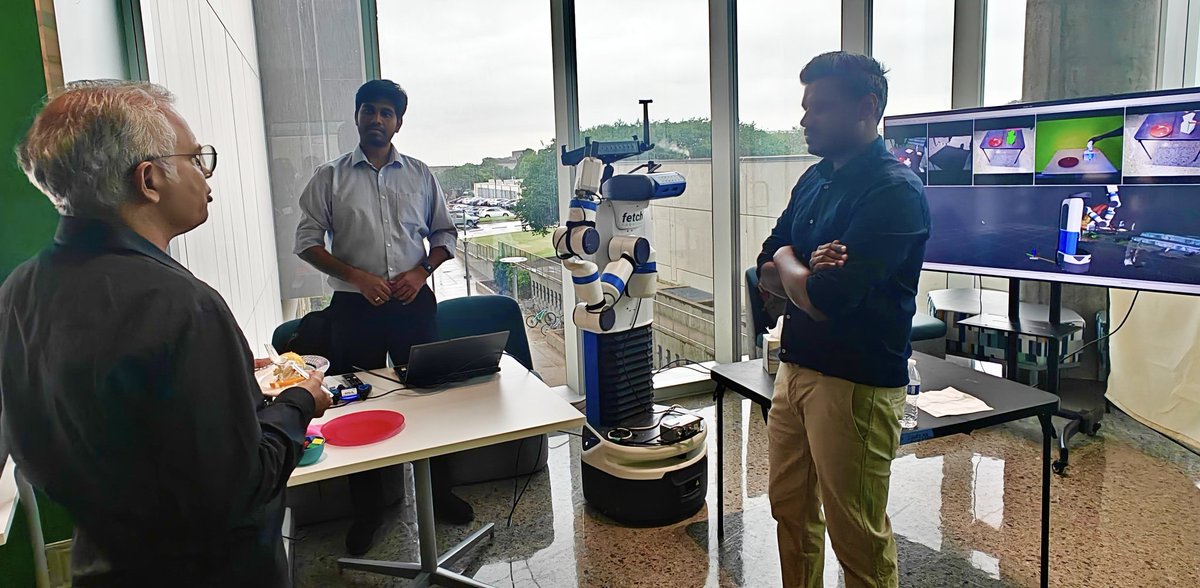

Stepping out of the lab and into the real world. Today at #ECSAIDays2026, we’ll be presenting a live demo of #HRT1, a system that transfers human demonstrations to robot actions for mobile manipulation. 🔗 irvlutd.github.io/HRT1/

This short timelapse is a behind-the-scenes glimpse of what it actually takes. From moving the robot across campus, navigating buildings, setting up hardware, and testing repeatedly to making everything work outside controlled environments, a lot goes into what eventually looks like a “simple demo.” Especially in smaller labs, it’s all hands-on, end-to-end effort.

Also, when it’s just two of us running a two-person setup and trying to film it, the camera doesn’t always cooperate 😄, but the live demo will be much more fun.

Grateful to be building this with @saihaneesh_allu at @IRVLUTD. If you’re around, drop by and see it live. 📍 ECSW, UT Dallas ⏳ Apr 30, 2026

Thanks to @Sriraam_UTD, @VibhavGogate, @YuXiang_IRVL and Tyler Summers for the opportunity and support. @UT_Dallas @UTDCompSci @UTDResearch

A group photo at the end. @IRVLUTD

Attending the Texas Regional Robotics Symposium at UT Arlington today!

DINOv3 seems very good at matching objects across environments as well

A reward model that works, zero-shot, across robots, tasks, and scenes? Introducing Robometer: Scaling general-purpose robotic reward models with 1M+ trajectories. Enables zero-shot: online/offline/model-based RL, data retrieval + IL, automatic failure detection, and more! 🧵 (1/12)

Thrilled to have won the Louis Beecherl, Jr. Graduate Fellowship for 2025–2026 from the Erik Jonsson School at @UT_Dallas! This prestigious merit-based award has been a game-changer for my PhD in Computer Science and my research at the Intelligent Robotics and Vision Lab: real financial support and recognition. Grateful to the committee! Current and future UTD grad students: apply for the 2026–2027 graduate fellowships! It eases the journey and rewards excellence. Don't miss out. 🚀 #UTDallas #Fellowship

If you want to learn ROS2 with MoveIt2 and Gazebo simulation, check out the five homework assignments in my robotics course: labs.utdallas.edu/irvl/courses/f… By the end, you'll have a Fetch robot picking up a cracker box. Everything runs in Docker on Windows or Mac. #robotics #ROS2