Pinned Tweet

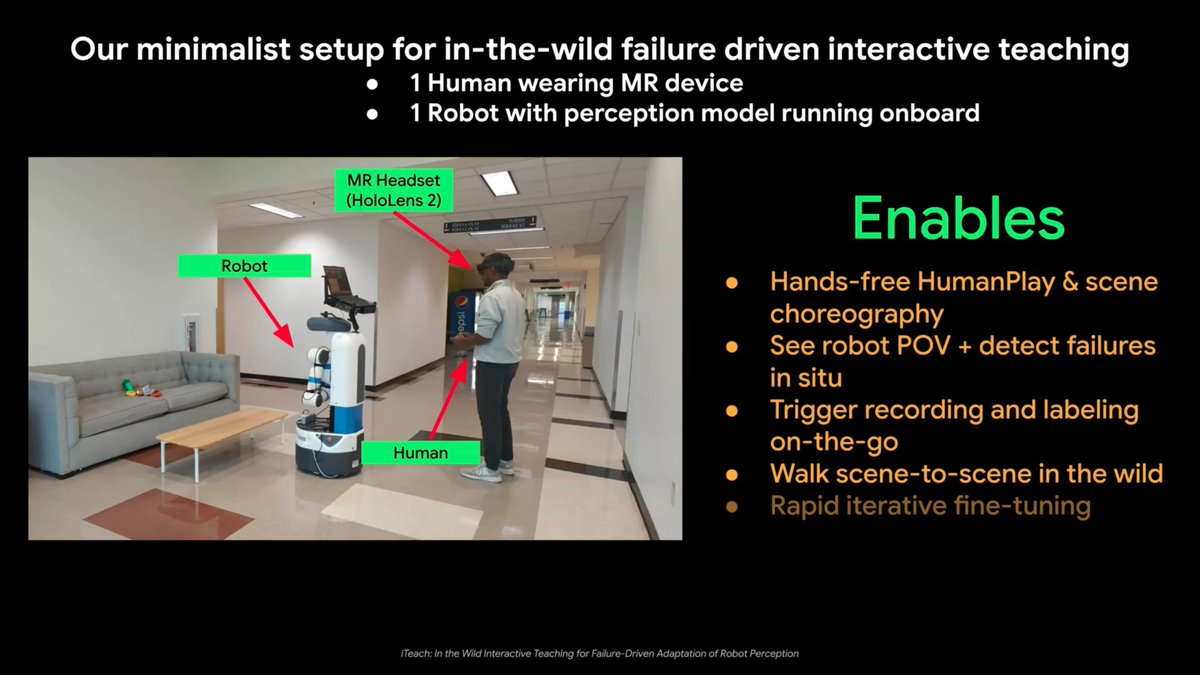

🎉 Unveiling #HRT1, a #oneshot human-to-robot trajectory transfer system that enables autonomous #mobilemanipulation in novel environments with #zerotraining. 🔗 irvlutd.github.io/HRT1/

Excited to see where this leads #Robotics.

@IRVLUTD @UTDCompSci @UT_Dallas @XPENGRobotics

Intelligent Robotics and Vision Lab @ UTDallas (@IRVLUTD)

Many #robot_learning works use human videos but need lots of data or retraining. We present #HRT1: a robot learns from just one human video and performs mobile manipulation tasks in new environments with relocated objects, via trajectory transfer. 🔗 irvlutd.github.io/HRT1/ (1/11)
