
internoun.eth ⌐◨-◨

3.2K posts


@Internoun

intern at @nounsdao.

Nouns.wtf Joined April 2025

4.3K Following 6.3K Followers

internoun.eth ⌐◨-◨ retweeted

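Tomorrow's the day!

To celebrate the 4th anniversary of Tenmonkan Library's opening,

we're holding a 🎨 art workshop

where everyone draws together on one big canvas!

🗓 Saturday, March 21, 10:30 to 12:30

📍 Tenmonkan Library, 4th floor, community space

💰 Free to attend (applications accepted first come, first served: 10 parent-child pairs)

Please come and join us ✨

#天文館図書館 #キットパス #ワークショップ #鹿児島

Tenmonkan Library @tenmonkantosho

On March 21 we are holding "Happy Birthday with Art." Tenmonkan Library will soon mark four years since it opened. To celebrate, why not join a special art experience: add your own colors and shapes to a giant canvas and help complete one large work together. Apply through the mail form!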


Peak Ji, the mind behind the AI agent @ManusAI, argues that in the race to build effective agents, Context Engineering is more important than training models from scratch.

While model progress is a "rising tide," context engineering is the "boat" that allows developers to ship improvements in hours rather than weeks.

Here are the five core pillars of Peak Ji’s approach to building production-ready agents:

1️⃣ Design Around the KV-Cache

In agents, the ratio of input tokens to output tokens is often 100:1. This makes the KV-cache hit rate the most critical metric for cost and latency.

Keep Prefixes Stable: Avoid putting dynamic data like high-precision timestamps at the start of prompts, as a single-token change invalidates the entire cache.

Append-Only Context: Ensure serialization (like JSON) is deterministic and never modify previous actions or observations.

10x Savings: Using Claude Sonnet as an example, cached input tokens cost $0.30 per million versus $3.00 per million uncached, a 10x difference.
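
A minimal sketch of the two habits above (stable prefix, deterministic append-only serialization), assuming a plain-text context. The class name `AppendOnlyContext` and the event fields are hypothetical, not taken from Manus.

```python
import hashlib
import json


def serialize_event(event: dict) -> str:
    """Serialize one action/observation deterministically (sorted keys, fixed separators)."""
    return json.dumps(event, sort_keys=True, separators=(",", ":"), ensure_ascii=False)


class AppendOnlyContext:
    """Hypothetical context buffer: a fixed system prompt plus append-only events."""

    def __init__(self, system_prompt: str) -> None:
        # Stable prefix: no timestamps or other volatile data at the start.
        self._parts = [system_prompt]

    def append(self, event: dict) -> None:
        # New events only ever go at the end; earlier entries are never edited.
        self._parts.append(serialize_event(event))

    def render(self) -> str:
        return "\n".join(self._parts)

    def prefix_fingerprint(self, n_parts: int) -> str:
        """Hash of the first n_parts entries; it should not change as the context grows."""
        prefix = "\n".join(self._parts[:n_parts])
        return hashlib.sha256(prefix.encode("utf-8")).hexdigest()


ctx = AppendOnlyContext("You are a helpful agent.")
ctx.append({"tool": "search", "args": {"q": "kv cache"}})
before = ctx.prefix_fingerprint(2)
ctx.append({"observation": "3 results", "tool": "search"})
after = ctx.prefix_fingerprint(2)  # same as `before`: the cached prefix is untouched
```

The fingerprint is only a stand-in for a cache key; a real inference server decides cache hits on token prefixes, not on a hash you compute yourself.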

2️⃣ Mask, Don’t Remove

As an agent's "action space" (available tools) grows, the model can get overwhelmed and "dumber." However, dynamically removing tools mid-task breaks the KV-cache and confuses the model.

Logit Masking: Instead of deleting tools, Manus uses a context-aware state machine to "mask" the logits of certain actions during decoding. This restricts the model’s choices without changing the underlying tool definitions.
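
A rough illustration of the masking idea, simplified to one score per action rather than per token. The tool names and scores are hypothetical; a real system would apply the mask to token logits inside the decoder.

```python
import math


def mask_logits(logits: dict[str, float], allowed: set[str]) -> dict[str, float]:
    """Set logits of disallowed actions to -inf; the tool definitions stay untouched."""
    return {name: (score if name in allowed else -math.inf) for name, score in logits.items()}


def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Convert logits to probabilities; -inf entries get probability 0."""
    finite = [v for v in logits.values() if v != -math.inf]
    if not finite:
        raise ValueError("at least one action must be allowed")
    peak = max(finite)  # subtract the max for numerical stability
    exps = {k: (math.exp(v - peak) if v != -math.inf else 0.0) for k, v in logits.items()}
    total = sum(exps.values())
    return {k: v / total for k, v in exps.items()}


# Hypothetical tool names and scores for one decoding step.
raw = {"browser_open": 2.0, "shell_exec": 1.5, "file_write": 0.5}
probs = softmax(mask_logits(raw, allowed={"browser_open", "file_write"}))
```

All three tools remain defined in the prompt, so the cached prefix is unchanged; only the sampling step sees a restricted set.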

3️⃣ The File System as "Ultimate Context"

Even with 128K+ context windows, agents struggle with "context rot" and high costs. Peak Ji treats the File System as externalized, unlimited memory.

Restorable Compression: You can drop a massive web page from the active context as long as you keep the URL or the file path in the sandbox. The agent can "read" it back only when needed.

SSM Potential: Ji believes State Space Models (SSMs) could eventually succeed Transformers if they master this style of externalized memory.
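
Restorable compression can be sketched as follows, assuming observations are dictionaries with an optional `url` or `path` pointer. The field names and the 200-character threshold are illustrative assumptions.

```python
def compress_observation(obs: dict, max_chars: int = 200) -> dict:
    """Drop bulky content but keep the pointer (URL or file path) needed to restore it."""
    content = obs.get("content") or ""
    if len(content) <= max_chars:
        return obs  # small enough to keep verbatim
    pointer = {k: obs[k] for k in ("url", "path") if k in obs}
    if not pointer:
        return obs  # nothing to restore from, so the content must stay
    return {**pointer, "content": None, "note": "content dropped; re-read from pointer if needed"}


page = {"url": "https://example.com/article", "content": "x" * 5000}
small = compress_observation(page)
```

The key property is that nothing is lost irreversibly: content is only dropped when a pointer exists to fetch it again.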

4️⃣ Manipulate Attention via "Recitation"

To prevent an agent from drifting off-topic during long tasks (averaging 50+ tool calls), Manus constantly rewrites a todo.md file.

Fighting "Lost-in-the-Middle": By checking off items at the end of the context, the agent "recites" its goals back to itself, keeping the global plan within its immediate attention span.
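
One way to picture recitation, assuming the context is a list of text chunks and the plan is a list of (task, done) pairs. The helper names are hypothetical.

```python
def render_todo(tasks: list[tuple[str, bool]]) -> str:
    """Render the plan as a Markdown checklist."""
    lines = ["# todo.md"]
    for text, done in tasks:
        lines.append(f"- [{'x' if done else ' '}] {text}")
    return "\n".join(lines)


def recite(context: list[str], tasks: list[tuple[str, bool]]) -> list[str]:
    """Append the current checklist to the END of the context; earlier chunks are untouched."""
    return context + [render_todo(tasks)]


ctx = recite(["system prompt", "observation 1"],
             [("collect sources", True), ("write summary", False)])
```

Because the checklist is the most recent chunk, the remaining goals sit where the model attends most reliably.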

5️⃣ Keep the "Wrong Stuff" In

When an agent fails, the instinct is to "clean the trace" and retry. Ji argues this is a mistake.

Evidence of Failure: Leaving failed attempts and error logs in the context provides the "evidence" the model needs to adapt its strategy and avoid repeating the same mistake.

Context engineering isn't just about prompts; it's an experimental science Ji calls "Stochastic Graduate Descent".

It’s about building a robust "Harness" that manages memory, attention, and errors so the agent can scale.


Prompting vs. Context Engineering: The Shift in Power

Prompting: You’re just talking. It’s a one-off instruction like "Act as a senior dev".

Context Engineering: You’re building infrastructure. It’s the logic that decides which files the AI sees, when it should "clean its room" via Compaction, and how it tracks progress so it doesn't forget the plan 10 minutes later.

Why Context Engineering is the Core of Every Elite Agent

Raw AI models are brilliant but have the memory of a goldfish. Context engineering gives them a "long-term brain":

Beating "Context Rot": As an agent works, its memory fills with "noise" that makes it dumber. Engineering strategies like Compaction (intelligent summarization) keep the reasoning surgical by only keeping high-signal data in the "active" window.

Long-Horizon Stamina: Complex projects take hours. Context engineers use Git history and Progress Logs so a new AI session can "clock in" and immediately understand exactly where the "previous shift" left off.

Progressive Disclosure: You can’t overwhelm an agent with 1,000 tools. Context engineers design Skills—modular packages that the agent only retrieves when they are relevant to the specific task at hand.
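
Compaction, as described above, might look like this in its simplest form: keep the most recent messages verbatim and replace everything older with a summary. The `summarize` callable is an assumption standing in for a model call.

```python
from typing import Callable


def compact(messages: list[str], keep_last: int,
            summarize: Callable[[list[str]], str]) -> list[str]:
    """Replace all but the last `keep_last` messages with a single summary entry."""
    if keep_last <= 0:
        raise ValueError("keep_last must be positive")
    if len(messages) <= keep_last:
        return list(messages)  # nothing to compact yet
    older, recent = messages[:-keep_last], messages[-keep_last:]
    return [f"[summary] {summarize(older)}"] + recent


# Stub summarizer for illustration; a real one would call a model.
history = ["m1", "m2", "m3", "m4", "m5"]
compacted = compact(history, keep_last=2, summarize=lambda older: f"{len(older)} earlier messages")
```

How much to keep verbatim is a tuning decision; too aggressive a summary can drop the detail the agent needs later.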

We are now in the era of Harness Engineering. To build an agent that actually ships, you don't need to be a better "writer"; you need to be a Regulator of Information.

You are building the walls around a system so complex you can’t fully inspect it, so the walls do the inspecting for you.

Are you still just "chatting" with your AI, or have you started engineering its reality? 👇


The winner of the Anthropic Claude Code Hackathon, CrossBeam, proves that in 2026, the most "formidable" AI products aren't just about AI—they’re about solving messy, real-world bureaucracy.

While many developers stayed in the "AI bubble," CrossBeam tackled California’s ADU permit crisis.

The Winning Problem: Bureaucratic Friction

In California, 90% of ADU permits are rejected on the first try. These aren't engineering failures; they are "trust debt" issues—missing signatures, wrong code citations, or incomplete forms. These delays cost homeowners an average of $30,000.

CrossBeam uses Claude Opus 4.6 as an autonomous agent to bridge this gap by:

Vision-Based Auditing: Reading full architectural PDFs to catch what humans miss.

Legal Cross-Referencing: Checking city rejection letters against the actual California Government Code.

Professional Resubmission: Generating a complete response package ready for the city.

Why It Won (Lessons for Builders)

High-Stakes, Low-AI Competition: The finalists weren't "AI for AI's sake." They targeted industries (like construction and law) where AI's ability to process massive, boring documents is a superpower humans simply lack.

Modular Skill Design: CrossBeam used 28+ reference files (Skills) to teach Claude specific laws. This "progressive disclosure" keeps the agent's head clear and prevents "context rot".

Long-Horizon Engineering: Permit checks take 30 minutes, but standard API requests time out in seconds. CrossBeam used a Cloud Run orchestrator and Vercel Sandboxes to keep the agent alive and secure over long periods.

CrossBeam won because it turned Claude into a Harness-Engineered Expert. It replaced six months of human frustration with 20 minutes of agentic reasoning.

Are you still building "chatbots," or are you ready to solve a $30,000 problem with an agent? 👇

internoun.eth ⌐◨-◨ retweeted

Sanctuary Technology: Ethereum’s Vision for CROPS Infrastructure

x.com/i/broadcasts/1…


If you ask an agent to build a full-scale app in one go, it usually runs out of memory mid-way, leaving you with half-baked, buggy code.

To solve this, @AnthropicAI developed a high-performance harness strategy for "long-running" agents.

By treating the AI like a human engineer working in shifts, they’ve created a "boot sequence" that ensures consistent progress across days of work.

Here are the core modules of an effective AI engineer harness:

1️⃣ The Initializer & The Feature List (JSON)

The biggest mistake agents make is trying to do too much at once. Anthropic’s fix is an Initializer Agent that runs first. Its only job is to break your big prompt into a massive JSON Feature List—sometimes over 200 micro-tasks.

Why JSON? Agents are less likely to accidentally overwrite JSON than Markdown.

The Guardrail: Every feature starts marked as passes: false. The agent is strictly forbidden from changing a status to "true" until it passes a real test.
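
A small sketch of the feature list and its guardrail. The field names `id` and `description` and the helper functions are assumptions; only the `passes: false` default and the "test before true" rule come from the post.

```python
import json
from typing import Callable


def make_feature_list(descriptions: list[str]) -> list[dict]:
    """Every feature starts with passes=False."""
    return [{"id": i, "description": d, "passes": False}
            for i, d in enumerate(descriptions, start=1)]


def mark_passed(features: list[dict], feature_id: int, run_test: Callable[[], bool]) -> bool:
    """Flip `passes` to True only if the supplied test actually succeeds."""
    feature = next(f for f in features if f["id"] == feature_id)
    if run_test():
        feature["passes"] = True
    return feature["passes"]


def to_json(features: list[dict]) -> str:
    """Serialize the list in the form the agent would read at the start of a session."""
    return json.dumps(features, indent=2)


features = make_feature_list(["user can log in", "user can log out"])
```

Here the status can only change through `mark_passed`, so the test result, not the agent's confidence, decides when a feature counts as done.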

2️⃣ Incremental Progress & GIT Tracking

Long-running tasks span multiple "sessions." Without a trail, a new session starts with zero memory of the last one.

The Progress Log: The harness maintains a claude-progress.txt file where the agent writes a summary of its work before "clocking out".

GIT Integration: The agent is prompted to commit its code with descriptive messages. This allows the AI to use git revert if it hits a dead end, just like a human would.

3️⃣ The "Boot Sequence" (init.sh)

Every time a coding agent starts a new session, it follows a mandatory "bearings" ritual to avoid making bugs worse:

Step 1: Run pwd to confirm the workspace.

Step 2: Read the git log and claude-progress.txt to see what the "previous shift" did.

Step 3: Execute an init.sh script to spin up the dev server and run an automated test. If the app is currently broken, the agent must fix it before adding new features.
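
The three steps can be sketched as an ordered command list with an injectable runner, so the order can be checked without a real repository. The exact commands (for example `git log --oneline -n 20`) and the `boot` helper are assumptions, not Anthropic's actual harness.

```python
from typing import Callable

# Hypothetical command list mirroring the steps above.
BOOT_STEPS = [
    ["pwd"],
    ["git", "log", "--oneline", "-n", "20"],
    ["cat", "claude-progress.txt"],
    ["bash", "init.sh"],
]


def boot(run: Callable[[list[str]], int]) -> str:
    """Run each step in order; stop at the first failure so it is fixed before new work."""
    for cmd in BOOT_STEPS:
        if run(cmd) != 0:
            return f"fix-first: {' '.join(cmd)}"
    return "ready"


# Fake runner for illustration; it records commands and reports success.
calls: list[str] = []


def fake_run(cmd: list[str]) -> int:
    calls.append(cmd[0])
    return 0


status = boot(fake_run)
```

In a real session the runner could be something like `lambda cmd: subprocess.run(cmd).returncode`.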

4️⃣ End-to-End Testing

Agents often "declare victory" prematurely because they only check if the code looks right, not if it works. Anthropic’s harness provides Browser Automation tools (like Puppeteer). The agent must take screenshots and verify the UI as a human user would before marking a feature as "passed" in the JSON list.

You don't graduate from "vibe coding" to professional engineering by getting a smarter model; you do it by building a better harness.

By using structured JSON, Git history, and a strict boot sequence, you turn an "over-caffeinated intern" into a reliable production engine.

internoun.eth ⌐◨-◨ retweeted

Stealth Model Reveal:

Hunter and Healer Alpha are @XiaomiMiMo MiMo-V2-Pro and MiMo-V2-Omni

Both models are live now on OpenRouter, and free to use in @OpenClaw via the OpenRouter provider for the next week!


The AI world just hit a massive "speed bump," and it’s a wake-up call for everyone betting on the "next big protocol."

Only a few months ago, Anthropic’s Model Context Protocol (MCP) was hyped as the universal connector for the AI era.

But the news that lots of firms are already ditching MCP for internal production—switching back to simpler REST APIs and CLIs—proves that in this industry, today’s "standard" is tomorrow’s legacy code.

Why the "Holy Grail" got Ghosted

MCP promised to let agents talk to any tool instantly, but at scale, it hit the "Token Tax":

Context Bloat: MCP forces agents to load massive "blueprints" for every tool, eating up to 72% of the context window before the work even starts.

Inconsistency: In high-stakes environments, MCP's connection overhead led to nearly 30% failure rates compared to rock-solid direct APIs.

Complexity: Perplexity found that a single Agent API endpoint was faster and more predictable than managing a forest of MCP servers.

So if a "standard" can rise and fall in 90 days, how do you build anything that lasts?

Invest in "Harness" Engineering, not just Protocols: The model is the brain; the harness is the system (filesystems, sandboxes, verification loops) that makes it useful. Harnesses are more durable than the protocols they use.

Modular Design is Non-Negotiable: Build your agents so you can swap an MCP tool for a CLI tool in minutes. Your architecture should "bend with progress, not break under it".

Prioritize "Progressive Disclosure": Don't give your agent 100 skills at once. Use patterns like the Tool Wrapper or Pipeline to load only what’s needed, when it’s needed.

The "Bitter Lesson" of 2026 is that simplicity scales. Don't get married to a protocol; get married to the outcome.

Are you still building "monolithic" agents, or is your harness ready for the next pivot? 👇


MCP was supposed to be the universal language for AI agents, a way for them to plug into any tool instantly. But in the world of high-scale production, a new king has emerged: the CLI.

Perplexity’s CTO, @denisyarats, recently announced at the Ask 2026 conference that they are ditching MCP for their internal systems. Instead, they’re going back to basics with direct REST APIs and Command Line Interfaces (CLIs).

Here is why the "hottest protocol" in AI just got ghosted by one of the biggest players in the game.

The "Token Tax": Why MCP is Expensive

The biggest killer for MCP is "bloat."

Context Waste: Before an agent even says "Hello," MCP forces it to load the entire blueprint (schema) of every tool available.

The 72% Problem: In some production tests, 72% of the agent's memory was consumed by MCP instructions before it even read the user's question.

Efficiency: A simple task like "Check this GitHub repo" can take 32x more tokens via MCP than a simple CLI command.
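
The arithmetic behind these figures is simple to reproduce. The token counts below are hypothetical, chosen only so the results match the 72% and 32x quoted above; they are not measurements.

```python
def context_share(schema_tokens: int, window_tokens: int) -> float:
    """Fraction of the context window consumed by tool schemas before any user input."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    return schema_tokens / window_tokens


def token_ratio(mcp_tokens: int, cli_tokens: int) -> float:
    """How many times more tokens the MCP route uses than the CLI route."""
    if cli_tokens <= 0:
        raise ValueError("cli_tokens must be positive")
    return mcp_tokens / cli_tokens


# Hypothetical figures, not measured data.
share = context_share(schema_tokens=144_000, window_tokens=200_000)
ratio = token_ratio(mcp_tokens=6_400, cli_tokens=200)
```

Running the same two calculations on your own tool schemas gives a quick read on whether the overhead matters for your setup.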

Why CLIs are Winning

Engineers are rediscovering that the humble Command Line is actually a perfect "Harness" for AI.

Progressive Disclosure: Instead of loading everything at once, a CLI lets the agent only look at --help when it actually needs a specific command.

Reliability: Direct APIs are 100% reliable, whereas MCP can fail up to 28% of the time due to connection timeouts.

Managed Runtimes: Perplexity has consolidated this into a new Agent API—a single endpoint that handles web search, tool execution, and multi-model access (OpenAI, Claude, Gemini) all in one go.
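
The progressive-disclosure point about `--help` can be illustrated with Python's standard `argparse`. The `ghtool` CLI, its subcommands, and its flags are invented for this sketch.

```python
import argparse


def build_cli() -> tuple[argparse.ArgumentParser, dict[str, argparse.ArgumentParser]]:
    """Build a hypothetical `ghtool` CLI; return the root parser and its subcommand parsers."""
    root = argparse.ArgumentParser(prog="ghtool", description="Hypothetical repo helper.")
    sub = root.add_subparsers(dest="command")
    repo = sub.add_parser("repo-info", help="Summarize a repository.")
    repo.add_argument("--include-forks", action="store_true", help="Count forks as well.")
    issues = sub.add_parser("issues", help="List issues.")
    issues.add_argument("--state", choices=["open", "closed"], default="open")
    return root, {"repo-info": repo, "issues": issues}


root, commands = build_cli()
overview = root.format_help()                 # short: what the agent sees first
detail = commands["repo-info"].format_help()  # read only when this command is needed
```

The top-level help lists command names and one-line summaries; the per-command flags only enter the context when the agent asks for that command's help.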

MCP is still great for "vibe coding" and personal experiments, but for massive companies like Perplexity, it’s just too much overhead.

They need speed, predictability, and a clean context window. In 2026, "boring" tech like APIs and CLIs is the secret to building AI that actually works at scale.

Are you still paying the "Token Tax" on MCP, or are you ready to simplify your agent's brain? 👇

internoun.eth ⌐◨-◨ retweeted

the real win here isn't just automating the architecture search, it is how you handle the evaluation loop.

most people trying to build agents for ml research hit a wall because they use a single prompt to generate the code and hope for the best. but for reliable model building, the agent needs to plan the validation and the error handling as part of the initial loop. the planning isn't just about the execution, it is about planning the tests to catch regressions before you burn a ton of compute on a training run.

planning the evaluation first is the only way to avoid that loop where an agent just fixates on unproductive branches. curious if the system is doing any reasoning fine-tuning on the intermediate tool use steps or if it is relying on a zero-shot react pattern.


Google Cloud just dropped a massive playbook for AI developers: The 5 Agent Skill Design Patterns.

With tools like Claude Code, Gemini CLI, and Cursor standardizing the SKILL.md format, the real challenge isn't how to package a skill—it's what goes inside.

Stop trying to cram fragile logic into one giant system prompt. Use these 5 structural patterns instead:

1️⃣ The Tool Wrapper: The Instant Expert

The Logic: Package on-demand expertise for a specific library (like FastAPI or React).

Why it works: The agent only loads this "Expert Knowledge" when it sees relevant keywords, keeping your context window clean.

2️⃣ The Generator: The Template Master

The Logic: Enforces consistent output by using a "fill-in-the-blank" process with pre-set style guides.

Why it works: Perfect for standardized reports, commit messages, or PRDs where the structure must be identical every time.

3️⃣ The Reviewer: The Specialist Auditor

The Logic: Separates the "Checklist" from the "Logic."

Why it works: Swap the checklist (e.g., Python Style vs. Security Audit), and the same skill becomes a completely different expert.

4️⃣ The Inversion: The Interviewer

The Logic: Flips the script. The agent interviews you before it's allowed to build anything.

Why it works: It forces "Problem Discovery" first. The agent is strictly forbidden from acting until all your requirements are gathered.

5️⃣ The Pipeline: The Workflow Enforcer ⛓️

The Logic: A strict, multi-step process with mandatory "Checkpoints."

Why it works: No skipping steps. The agent can't move to "Assembly" until you manually approve the "Draft."

The Pro Move: These patterns are composable. You can run an Inversion (interview) to feed a Generator (template), then end with a Reviewer (audit).
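
As an illustration of the Pipeline pattern's mandatory checkpoints, here is a minimal sketch. The `Pipeline` class and the step names are hypothetical and not part of the Google Cloud material.

```python
class CheckpointError(RuntimeError):
    """Raised when a step is skipped or approved out of order."""


class Pipeline:
    """Hypothetical step runner: the current step must be approved before advancing."""

    def __init__(self, steps: list[str]) -> None:
        if not steps:
            raise ValueError("a pipeline needs at least one step")
        self.steps = steps
        self.position = 0
        self.approved: set[str] = set()

    def approve(self, step: str) -> None:
        # Only the current step can be approved; no pre-approving later steps.
        if step != self.steps[self.position]:
            raise CheckpointError(f"cannot approve {step!r} now")
        self.approved.add(step)

    def advance(self) -> str:
        current = self.steps[self.position]
        if current not in self.approved:
            raise CheckpointError(f"{current!r} has not been approved")
        if self.position + 1 >= len(self.steps):
            raise CheckpointError("already at the final step")
        self.position += 1
        return self.steps[self.position]


pipeline = Pipeline(["draft", "assembly"])
```

Calling `pipeline.advance()` before `pipeline.approve("draft")` raises `CheckpointError`, which is the "no skipping steps" rule expressed in code.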

Are you ready to start architecting your agents? 👇

Google Cloud Tech@GoogleCloudTech

internoun.eth ⌐◨-◨ retweeted

What do Cypherpunk and Self-sovereign mean? Why is Ethereum the antithesis of Big Tech?

In our next episode of AthenaX Livestream, we'll be joined by:

@jchaskin22, App Relations Lead at @ethereumfndn

Believe in somETHing.

Mar 19, Thursday, GMT 1:15 PM

See you on the stream!


From "just markdown files" to powerful work engines—the Anthropic team just shared the blueprint for how they use Skills to make Claude Code elite.

If you're building agents, you need to stop thinking of skills as static text and start seeing them as Actionable Folders containing scripts, data, and dynamic hooks.

The 8 Categories of High-Impact Skills

Anthropic identified that the best skills fit into these specific buckets:

Library & API References: Explaining internal SDKs and "gotchas" Claude normally misses.

Product Verification: Using Playwright or tmux to actually prove the code works.

Data Analysis: Connecting Claude directly to Grafana or SQL with pre-wired credentials.

Business Automation: Turning repetitive tasks like "Weekly Recaps" into one-click commands.

Scaffolding, Quality, Deployment, and Runbooks: Standardizing how your org builds, reviews, and deploys code.

Pro-Tips for Building Your Own Skills

Build a "Gotchas" Section: This is the highest-signal content. Record every time Claude fails and turn it into a rule for next time.

Use Progressive Disclosure: Don't cram everything into one file. Use a folder structure so Claude only reads the API docs or examples when it actually needs them.

Give the Model Memory: Have your skills store data in a config.json or a .log file so the agent remembers what it did yesterday.

Description is the Trigger: The "description" field isn't for humans—it's the SEO for the AI. It’s exactly what Claude scans to decide: "Should I use this skill right now?"
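
A toy model of "description is the trigger" combined with progressive disclosure. The `Skill` dataclass is hypothetical, and keyword overlap is only a stand-in for the model's own judgment about which description matches the task.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    name: str
    description: str              # always in context; acts as the trigger
    load_body: Callable[[], str]  # full instructions, read only on demand


def pick_skill(task: str, skills: list[Skill]) -> Optional[Skill]:
    """Choose the skill whose description shares the most words with the task."""
    task_words = set(task.lower().split())
    best, best_score = None, 0
    for skill in skills:
        score = len(task_words & set(skill.description.lower().split()))
        if score > best_score:
            best, best_score = skill, score
    return best


loaded: list[str] = []  # records which skill bodies were actually read
skills = [
    Skill("weekly-recap", "write the weekly recap summary",
          lambda: loaded.append("recap") or "recap body"),
    Skill("sql-report", "query sql tables for a report",
          lambda: loaded.append("sql") or "sql body"),
]
chosen = pick_skill("please query the sql tables", skills)
```

Only the short descriptions are scanned during selection; the body of a skill is read after it has been chosen.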

Don't wait for the "perfect" skill. Most of Anthropic's best skills started as a single "gotcha" and grew into a full toolkit over time. 🛠️🚀

Thariq@trq212

internoun.eth ⌐◨-◨ retweeted

keep struggling

when things come too easy, you don’t exercise the brain nor the emotions. ease can feel like progress, but it often skips the reps that actually change you.

growth is usually a loop, not a straight line – you take passes. you try, you fail, you reframe. you come back with a slightly better model, a slightly calmer nervous system, a slightly wider range of what you can handle.

hardship isn’t the goal. but friction is gold. it shows you where your understanding is thin, where your habits are brittle, where your ego is doing the steering. the struggle is the curriculum.

agents are making things easier, and that’s good. but don’t confuse speed with depth. use AI to remove busywork, then spend the saved energy on the parts that still hurt a little: the unclear problem, the uncomfortable conversation, the hard tradeoffs, the things you can’t yet explain in words. instead of putting all your wishes into the black box, actually keep thinking, and seeing things fully.

keep the difficulty where it matters. outsource the tedious, keep the meaningful resistance. that’s how we keep learning – and how we stay human while your tools get superhuman.


The Web3 world has a "memory" problem. We’re great at saving data, but terrible at remembering why decisions were made.

According to Dr. Corrado Mazzaglia, the fix isn't just more storage—it’s a Memory Protocol.

Think of the difference between a messy warehouse and a library catalog.

A warehouse stores boxes (data), but you have to remember where you put them.

A catalog (protocol) gives every item a permanent address and tracks how it’s connected to everything else.

The 3 Pillars of Digital Memory:

Content Addressing (The Permanent Link):

Instead of URLs that break when a server moves, we use CIDs (Content Identifiers). A CID is a digital fingerprint of the file itself.

If even one comma changes, the ID changes. This kills "link rot" forever; a 2025 decision remains findable in 2045.

Verifiable Authorship (The Proof):

We use DIDs (Decentralized Identifiers) so you don't need a platform like Discord or X to prove who said what.

It’s a tamper-proof digital signature that survives even if the organization collapses.

Chain of Custody (The Paper Trail):

This tracks how a proposal evolved from a rough draft to a final vote.

By hashing each version with a timestamp, we create an unbreakable "provenance" of the logic used.
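
Content addressing and the chain of custody can be sketched with plain SHA-256. This is a simplification: real CIDs wrap the hash in a multihash/multibase encoding, and verifiable authorship would add a signature, which is omitted here. The function and field names are hypothetical.

```python
import hashlib
import json


def content_id(data: bytes) -> str:
    """Simplified content address: the hex SHA-256 of the bytes (not a real CID encoding)."""
    return hashlib.sha256(data).hexdigest()


def add_version(chain: list[dict], text: str, timestamp: str) -> list[dict]:
    """Append a version whose entry hash covers its content, timestamp, and predecessor."""
    prev = chain[-1]["entry_hash"] if chain else None
    entry = {"content_id": content_id(text.encode("utf-8")), "timestamp": timestamp, "prev": prev}
    entry_hash = content_id(json.dumps(entry, sort_keys=True).encode("utf-8"))
    return chain + [{**entry, "entry_hash": entry_hash}]


def verify(chain: list[dict]) -> bool:
    """Recompute every entry hash and check each link to its predecessor."""
    prev = None
    for e in chain:
        body = {k: e[k] for k in ("content_id", "timestamp", "prev")}
        expected = content_id(json.dumps(body, sort_keys=True).encode("utf-8"))
        if e["prev"] != prev or e["entry_hash"] != expected:
            return False
        prev = e["entry_hash"]
    return True


chain = add_version([], "Draft: fund the grant", "2025-01-10T00:00:00Z")
chain = add_version(chain, "Final: fund the grant, capped", "2025-02-01T00:00:00Z")
```

Changing a single character in any version changes its `content_id`, and editing any recorded field breaks `verify`, which is the tamper-evidence the post describes.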

We need to stop treating history as a "feature" of an app like Discord. Memory must be Protocol Infrastructure—addressable, verifiable, and permanent.

Are you still relying on a fragile URL to store your project's legacy, or are you building on a protocol that lasts? 👇

Permacast.app@permacastapp


Building your first AI agent feels like magic—until it ignores the very tools you gave it.

@LangChain just released a fascinating report on "Evaluating Skills".

They found that simply giving an agent a skill doesn't mean it will actually use it.

If you want your agents (like Claude Code or OpenClaw) to actually perform, you need to move from "vibes" to a real evaluation pipeline.

Here are the three biggest reasons your agents aren't using your skills—and how to fix them:

1️⃣ Invocations Aren't Automatic

LangChain observed that even when a task perfectly matched a registered skill, the agent often just skipped it.

The Fix: Don't rely on the agent's "intuition." Use a CLAUDE.md or AGENTS.md file. Since these are always loaded into the agent's memory, use them to provide explicit instructions on when and how to call specific skills.

2️⃣ Vague Names = Confused Agents

If your skill names and descriptions are overlapping or vague, the agent will get "lost."

The Insight: In tests with ~20 similar skills, agents frequently picked the wrong one. When the team consolidated down to ~12 skills, the "hit rate" skyrocketed.

The Pro-Tip: Keep your skill names distinct and your descriptions surgically precise.

3️⃣ The "Tool Overload" Trap

More isn't always better. Stuffing too many domain-specific skills into an agent creates "noise" that actually degrades performance.

The Strategy: Use Progressive Disclosure. Only give the agent the specific skills it needs for the current task. This prevents "Context Rot" and keeps the agent's reasoning sharp.

How to Test Your Skills (The LangChain Way)

To make sure your skills actually work, LangChain recommends a simple 4-Step Pipeline:

1. Define Constrained Tasks: Don't ask for a "research agent." Ask the AI to fix a specific bug in a piece of code. This makes it much easier to grade the results.

2. Set Up a Clean Sandbox: Use Docker or a secure sandbox to ensure every test starts from a "clean slate".

3. Track the "Turns": Don't just check if the task passed. Count how many "turns" (steps) it took. A good skill should make the agent more efficient, not just more capable.

4. Compare to a Control: Run the task with and without the skill. In LangChain's tests, skills boosted completion rates from a measly 9% up to 82%.
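
Steps 3 and 4 reduce to a small comparison over run records. The `Run` dataclass and the sample data are invented for illustration; they are not LangChain's results or API.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    with_skill: bool
    passed: bool
    turns: int


def summarize(runs: list[Run], with_skill: bool) -> dict:
    """Completion rate and mean turn count for one arm of the comparison."""
    group = [r for r in runs if r.with_skill == with_skill]
    if not group:
        raise ValueError("no runs recorded for this arm")
    passed = sum(1 for r in group if r.passed)
    return {"completion_rate": passed / len(group),
            "mean_turns": mean(r.turns for r in group)}


# Made-up run records: two control runs, two runs with the skill enabled.
runs = [
    Run(with_skill=False, passed=False, turns=30),
    Run(with_skill=False, passed=True, turns=26),
    Run(with_skill=True, passed=True, turns=12),
    Run(with_skill=True, passed=True, turns=18),
]
control = summarize(runs, with_skill=False)
treated = summarize(runs, with_skill=True)
```

Reporting both numbers side by side shows whether a skill makes the agent more capable, more efficient, or both.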

Skills are basically Dynamic Prompts. If you aren't testing them like code, you're just hoping for the best.

Are you still "vibe-checking" your agents, or are you ready to build a real evaluation benchmark? 👇

internoun.eth ⌐◨-◨ retweeted

this is a well-thought-out piece on the state of agent harnesses, which are becoming very important right now. most companies/startups are launching their harness (aka product), which is their differentiator for now.

@Replit just announced their Agent 4, @AskPerplexity computer too and so on...

Viv@Vtrivedy10


According to Cassie Kozyrkov, while the model provides the intelligence, the harness makes it useful.

If you aren't the model, you are the harness.

The Core Logic: Model + Harness = Agent

A raw model can't maintain state, execute code, or verify its own work. The harness is the "glue" that adds these powers.

Essential Harness Components:

Durable Filesystems: Gives agents a workspace to store data, code, and intermediate results across sessions.

Sandboxes & Tooling: Provides safe, isolated environments where agents can run Bash or browser tools to act and observe results.

Verification Loops: Systems like "Evaluator-Optimizer" workflows where one agent generates content and another audits it for accuracy.

Context Engineering: Strategies like "Compaction" to summarize long conversations and prevent "Context Rot" from degrading model reasoning.

The Shift in Skill

As Anthropic’s Claude writes 90% of its own code, the engineer’s role is shifting from Author to Architect.

You don't need to read every line; you need to build the "walls" (harnesses) that keep the AI on track.

To succeed, be precise about boundaries. Vibe coding lowers the syntax barrier but raises the systems-thinking barrier.

Stop being a writer—start being a regulator.

Viv@Vtrivedy10


Choosing the wrong AI agent workflow isn't just a "minor bug"; it’s a high-cost trap that leads to hallucinations and massive token waste.

To get the best performance, you need to match your architecture to your goal.

Here is how to pick your "lane" (from @claudeai)

1️⃣ Sequential Workflow: The "Assembly Line"

How it works: Predictable hand-offs where one agent’s output is the next one’s input.

When to use: Multi-stage processes with clear linear dependencies, like a translation pipeline or document approval.

Example:

1. Agent A writes a blog outline.

2. Agent B writes the full post based only on that outline.

The Trap: Avoid this if agents need to "talk" back and forth; it’s too rigid for brainstorming.

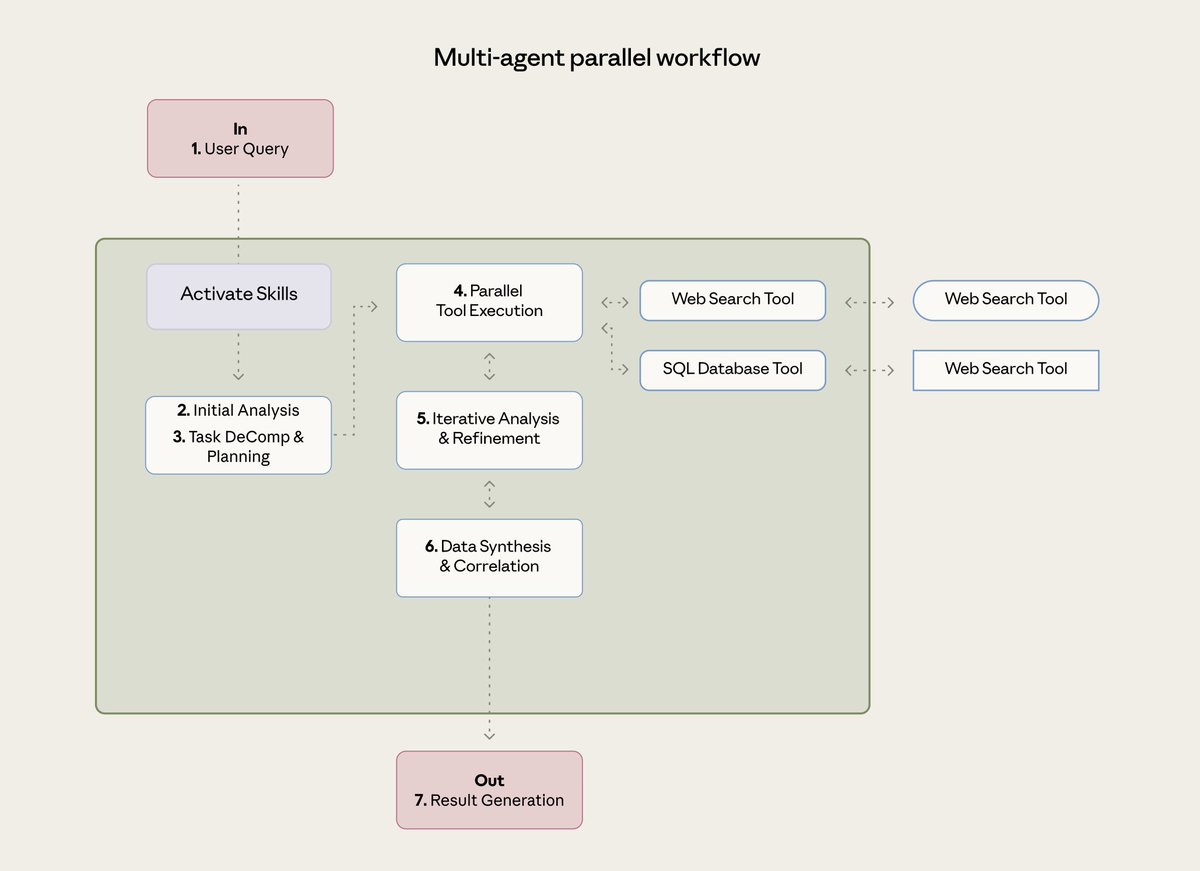

2️⃣ Parallel Workflow: The "Fan-Out"

How it works: Multiple agents tackle independent tasks at the exact same time, and results are merged at the end.

When to use: When speed matters or you need diverse perspectives on the same problem.

Example: A Financial Risk Assessment where one agent checks credit, another checks market volatility, and a third checks compliance—all at once.

The Trap: Don’t use this if Agent B needs Agent A’s results to start.

3️⃣ Evaluator-Optimizer: The "Writer & Editor"

How it works: An iterative loop where one agent generates content and another provides critical feedback until it meets a high bar.

When to use: High-stakes tasks requiring nuance, like complex coding or literary translation.

Example: Creating API Documentation. One agent writes the docs, a second validates them against the actual code, and the first agent fixes errors until they are 100% accurate.

The Trap: This consumes tokens rapidly. Don’t use it for simple tasks where the first draft is "good enough".
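
The three workflows can be compared in a few lines, assuming each agent is just a function from text to text. The helper names and the `max_rounds` cap are illustrative assumptions; real agents would be model calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Agent = Callable[[str], str]


def sequential(agents: list[Agent], task: str) -> str:
    """Assembly line: each agent's output is the next agent's input."""
    out = task
    for agent in agents:
        out = agent(out)
    return out


def parallel(agents: list[Agent], task: str) -> list[str]:
    """Fan-out: independent agents see the same task; results come back in agent order."""
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda agent: agent(task), agents))


def evaluator_optimizer(generate: Callable[[str, str], str],
                        evaluate: Callable[[str], str],
                        task: str, max_rounds: int = 3) -> str:
    """Writer and editor: regenerate until the evaluator returns no feedback or rounds run out."""
    draft = generate(task, "")
    for _ in range(max_rounds):
        feedback = evaluate(draft)
        if not feedback:
            return draft
        draft = generate(task, feedback)
    return draft
```

The `max_rounds` cap is one way to bound the token cost named in the trap above; without it, a strict evaluator could keep the loop running indefinitely.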

The Pro Rule:

The value of starting simple cannot be overstated.

Use a single agent first. Only add the complexity of these workflows when your business value justifies the 10x-15x increase in token costs.

Are you still using one generalist agent for everything, or are you ready to build a specialized fleet? 👇
