Nick James

31 posts

@IsotropicPBNJ

math and comparative literature @princeton | cofounder of https://t.co/b8lRjGXJwO

@littmath i think you could build a harness today that could get 5.5 to do really unique curiosity driven research for what it’s worth! another idea: RL env where the model must conduct ~32 entirely novel explorations, present to an LLM judge, reinforce one deemed ‘most interesting’
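A rough sketch of the reward step in the RL env idea above, under loud assumptions: `assign_rewards` and `longest_text_judge` are hypothetical names, and the toy judge (which just picks the longest write-up) only stands in for an LLM judge. It shows one way "reinforce the one deemed most interesting" might be turned into per-exploration rewards, not how any real environment does it.

```python
from typing import Callable, List


def assign_rewards(explorations: List[str],
                   judge: Callable[[List[str]], int]) -> List[float]:
    """Give reward 1.0 to the exploration the judge picks and 0.0 to the rest."""
    winner = judge(explorations)
    return [1.0 if i == winner else 0.0 for i in range(len(explorations))]


def longest_text_judge(explorations: List[str]) -> int:
    """Hypothetical stand-in for an LLM judge: picks the longest write-up."""
    return max(range(len(explorations)), key=lambda i: len(explorations[i]))


# 32 placeholder explorations, matching the "~32" in the post.
explorations = [f"exploration {i}" + "!" * (i % 5) for i in range(32)]
rewards = assign_rewards(explorations, longest_text_judge)
```

A winner-take-all reward like this is sparse; a real setup would probably also need some check that the explorations are actually novel, which this sketch does not attempt.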

I don’t think any of you have processed at any level how widespread and profound the ai water libel is

Can water intake prevent Alzheimer’s disease? No. This is fully AI-generated… but the data below could easily pass as real. The new ChatGPT image model is truly impressive, but I think it poses a real risk for scientific integrity in the future. For example, I could just generate a dataset with a single prompt that appears to show something like water preventing Alzheimer’s disease. Ironically, we used to laugh at obvious “AI slop” (like those weird generated mice), but that’s changing pretty fast. If I were reviewing this fake figure today, I’m not sure I could reliably tell whether it is real or AI-generated. The bigger issue is that the usual signals we rely on (e.g., how realistic or plausible something looks) are no longer enough. I think we really need more comprehensive AI detection and, more importantly, stronger verification standards for scientific submissions going forward. We’ll probably also need better ways to digitize lab notebooks and ensure access to raw data, something closer to how code and version history are tracked...

Self-play led to superhuman Go performance, so why hasn’t it for LLMs? In practice, long-run self-play plateaus like RL. We study why this happens, and build a self-play algorithm that scales better. It solves as many problems with a 7B model as the pass@4 of a model 100x bigger.
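For readers unfamiliar with pass@k: the post does not say how its pass@4 number was computed, so the following is only the commonly used unbiased estimator, shown as an assumption rather than the authors' method. Given `n` sampled attempts on a problem, of which `c` are correct, it estimates the chance that at least one of `k` randomly chosen attempts is correct. The function name `pass_at_k` is illustrative.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate P(at least one of k samples is correct) from n samples with c correct."""
    if n - c < k:
        # Fewer than k incorrect samples exist, so any k samples include a correct one.
        return 1.0
    # 1 minus the probability that all k chosen samples are incorrect.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Example: 10 attempts, 1 correct, evaluated at k=4.
example = pass_at_k(10, 1, 4)
```

A benchmark-level pass@4 would then be the mean of this quantity over all problems.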

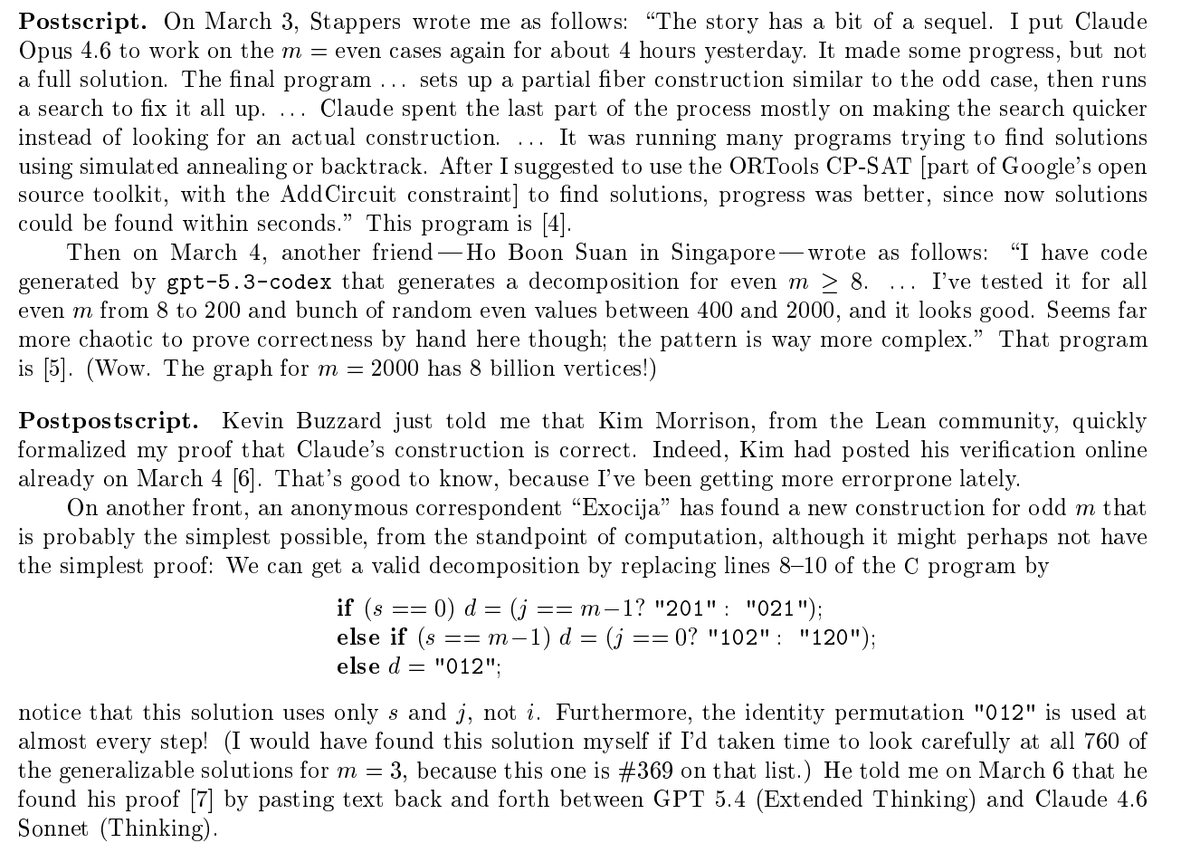

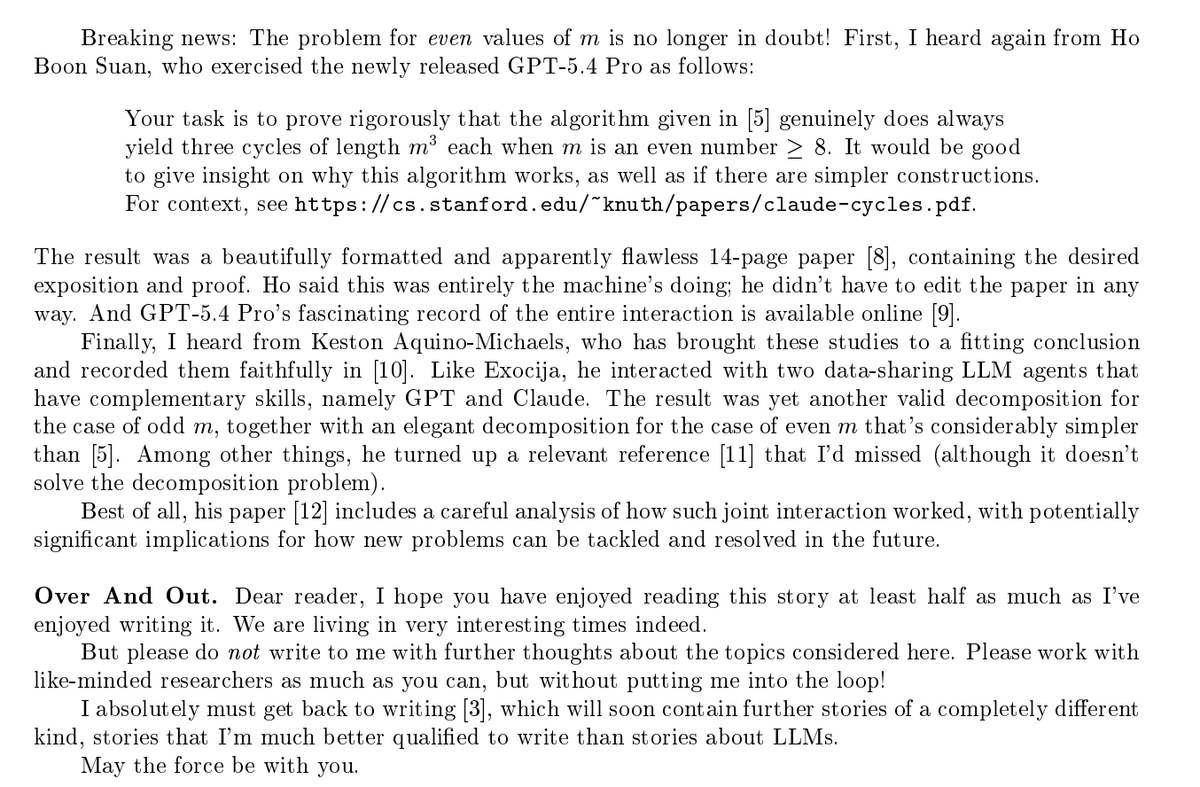

Prof. Donald Knuth opened his new paper with "Shock! Shock!" Claude Opus 4.6 had just solved an open problem he'd been working on for weeks — a graph decomposition conjecture from The Art of Computer Programming. He named the paper "Claude's Cycles." 31 explorations. ~1 hour. Knuth read the output, wrote the formal proof, and closed with: "It seems I'll have to revise my opinions about generative AI one of these days." The man who wrote the bible of computer science just said that. In a paper named after an AI. Paper: cs.stanford.edu/~knuth/papers/…