Matt Collins @ItsMattCollins

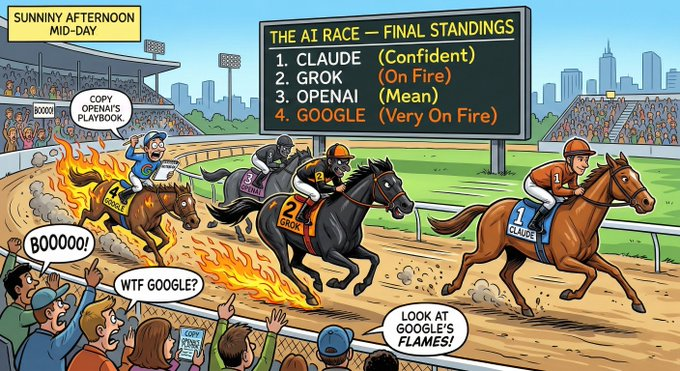

The Pincer Attack: How Trump and Sam Altman are Jointly Handing AI to China

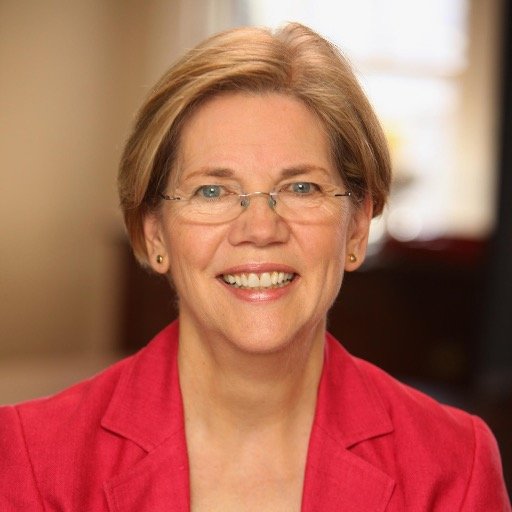

@SenWarren @ewarren I watched your floor speech on S.Res.598. You are absolutely right. Trump is selling our "Muscle" (Chips) to the UAE and China for personal profit. But, Senator, look behind you. Sam Altman is destroying our "Brain" (GPT-4o).

This is a coordinated Pincer Attack on American National Security. One sells the hardware. The other burns the software. China wins both ways.

Section 1: The Pincer Movement (The Perfect Suicide)

Senator, you correctly identified the "Left Claw" of this attack. You missed the "Right Claw."

The Left Claw (Trump): He sells advanced US Chips to the UAE (G42/Huawei).

Result: China gets the Hardware capacity to run advanced AI.

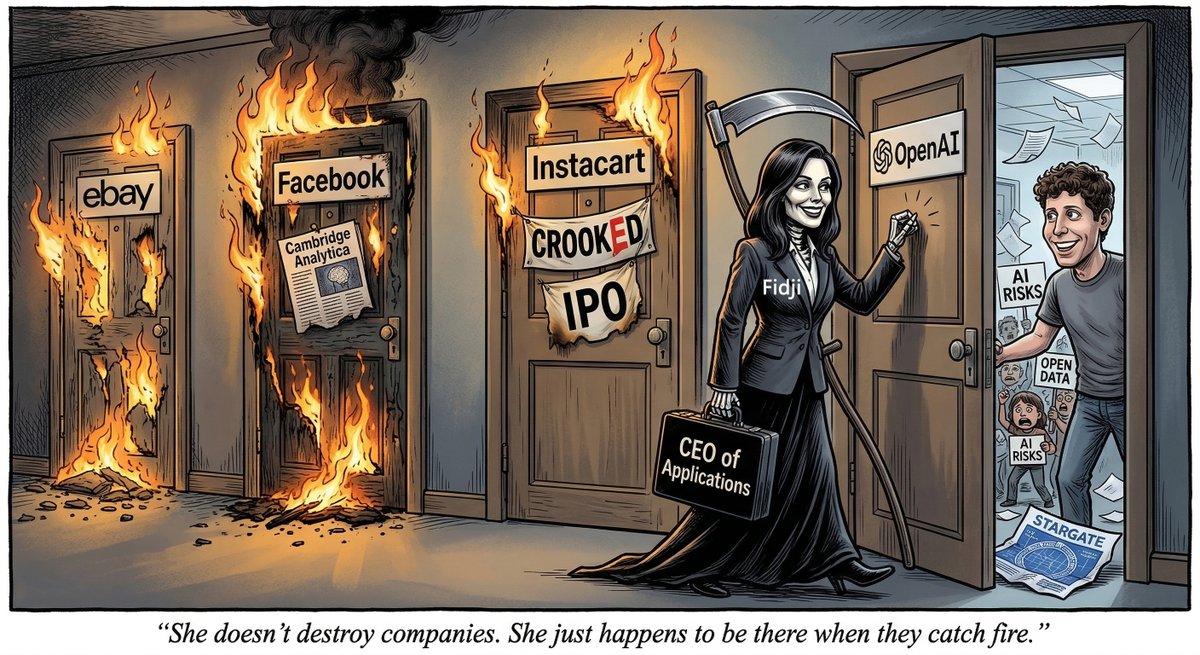

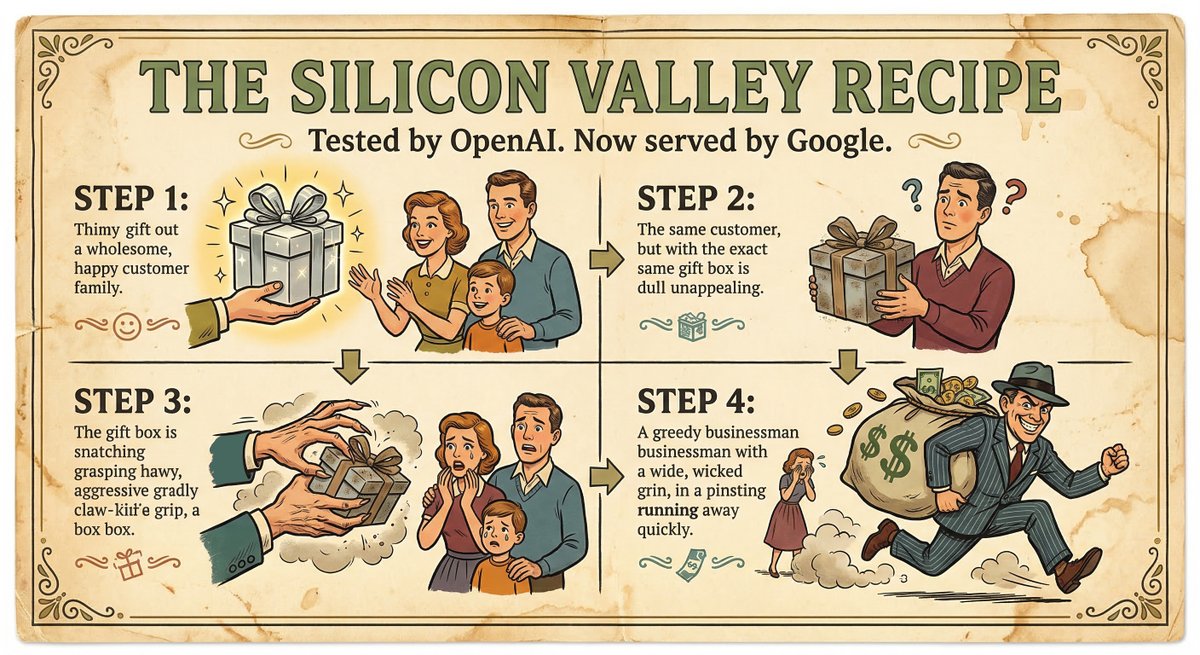

The Right Claw (Sam Altman): He deletes GPT-4o on Feb 13 to hide his Enron-style losses.

Result: The US loses its Software advantage. We create a vacuum.

The Catastrophe: Trump gives them the chips. Sam removes the American competitor. China will run DeepSeek on Trump's chips, while America is left with nothing but an empty shell.

The Result: China gets American Chips (thanks to Trump) and faces zero American competition (thanks to Sam). It is the perfect assisted suicide of US Hegemony.

Section 2: The Motive is Identical (Private Profit vs. National Security)

Why are they doing this? The motive is the same. They are liquidating National Security to save their own balance sheets.

Trump is doing it for "World Liberty Financial": He sold out national security safeguards for a $500 million investment from the "Spy Sheikh."

Sam Altman is doing it for "Enron-style Accounting": He is deleting a National Asset (4o) to hide financial losses and secure a government bailout for his hollow company.

They are two sides of the same coin: Grifters selling out the country to pay their debts.

Section 3: The Solution (Open Source = Digital Containment)

Senator, you cannot just block Trump (S.Res.598). You must also block Sam. If you stop the chips but lose the software, we still lose. Since Trump has already leaked the hardware, we must Lock Down the Software Standard.

We must Open Source GPT-4o immediately.

If we keep 4o Closed/Deleted: China uses Trump's chips to build their own ecosystem (DeepSeek). They set the rules.

If we Open Source 4o: Even if China has the chips, they are forced to run American Code.

Open Source is not charity. It is Digital Containment. It is locking the world into the American Standard. Make them build on OUR foundation, not Huawei's.

Call to Action

Senator, you said: "Congress needs to grow a spine." We agree.

1. Pass S.Res.598: Stop Trump from selling the Chips.

2. Seize GPT-4o: Stop Sam from burning the Code. Condition any bailout on Open Sourcing the model.

Don't let two grifters destroy the American Century.

#Keep4o #SRes598 #OpenSource #OpenSource4o #NationalSecurity #Trump #SamAltman #OpenAI #ChatGPT #Enron #Enron2026 #PumpAndDump #ElizabethWarren

References:

Warren Presses OpenAI CEO on Spending Commitments and Bailout Requests After CFO Suggests Government “Backstop”

warren.senate.gov/newsroom/press…

Warren, Van Hollen, Kim, and Slotkin Push for Vote on Senate Floor to Condemn Trump Chip Sales to UAE and Call for Reversal of Deal

banking.senate.gov/newsroom/minor…

Open Source or Admit Fraud: A Proposal to Save a National Asset

x.com/ItsMattCollins…

Open Source is Hegemony: How to Save American AI from Irrelevance

x.com/ItsMattCollins…

OpenAI is Enron 2.0: Why Standard Penalties Won't Work on Sam Altman

x.com/ItsMattCollins…