Zhen Liu

116 posts

@ItsTheZhen

Assistant Prof @ CUHK-SZ. PhD at @Mila_Quebec & @UMontreal, MS & BS at @GeorgiaTech and ex-visitor at @MPI_IS. Deep Learning/Foundation Models/3D Generation.

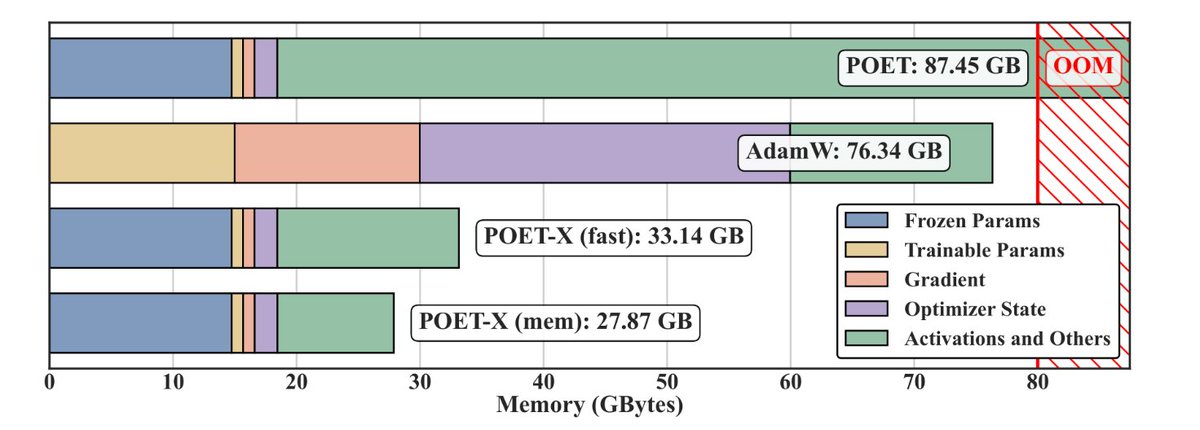

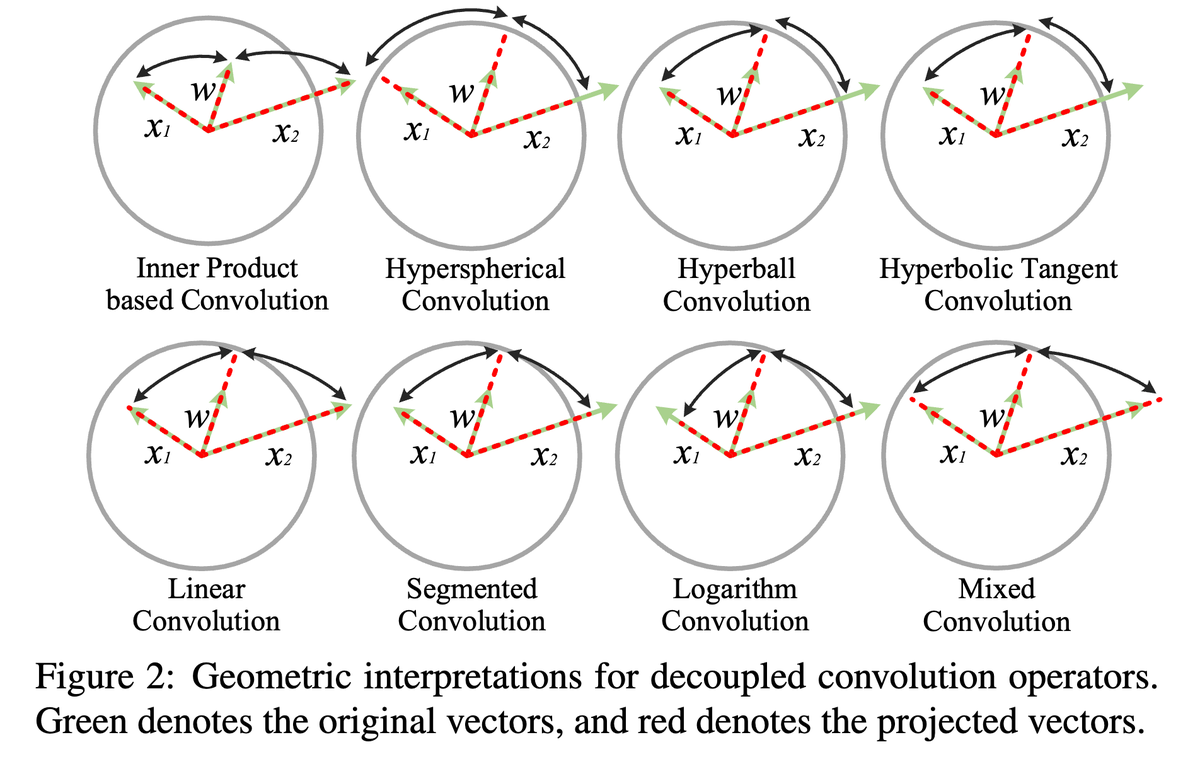

(1/n) Introducing Hyperball — an optimizer wrapper that keeps weight & update norm constant and lets you control the effective (angular) step size directly. Result: sustained speedups across scales + strong hyperparameter transfer.

Stronger Normalization-Free Transformers – new paper. We introduce Derf (Dynamic erf), a simple point-wise layer that lets norm-free Transformers not only work, but actually outperform their normalized counterparts.

🚀Introducing Lumine, a generalist AI agent trained within Genshin Impact that can perceive, reason, and act in real time, completing hours-long missions and following diverse instructions within complex 3D open-world environments.🎮 Website: lumine-ai.org 1/6

Human history is marked by the machines we created: from the Antikythera mechanism of ancient Greece, to the imaginations of the Renaissance, to the engines of the steam era. We wonder: can LLMs, like humans, build sophisticated machines to achieve purposeful functionality?
