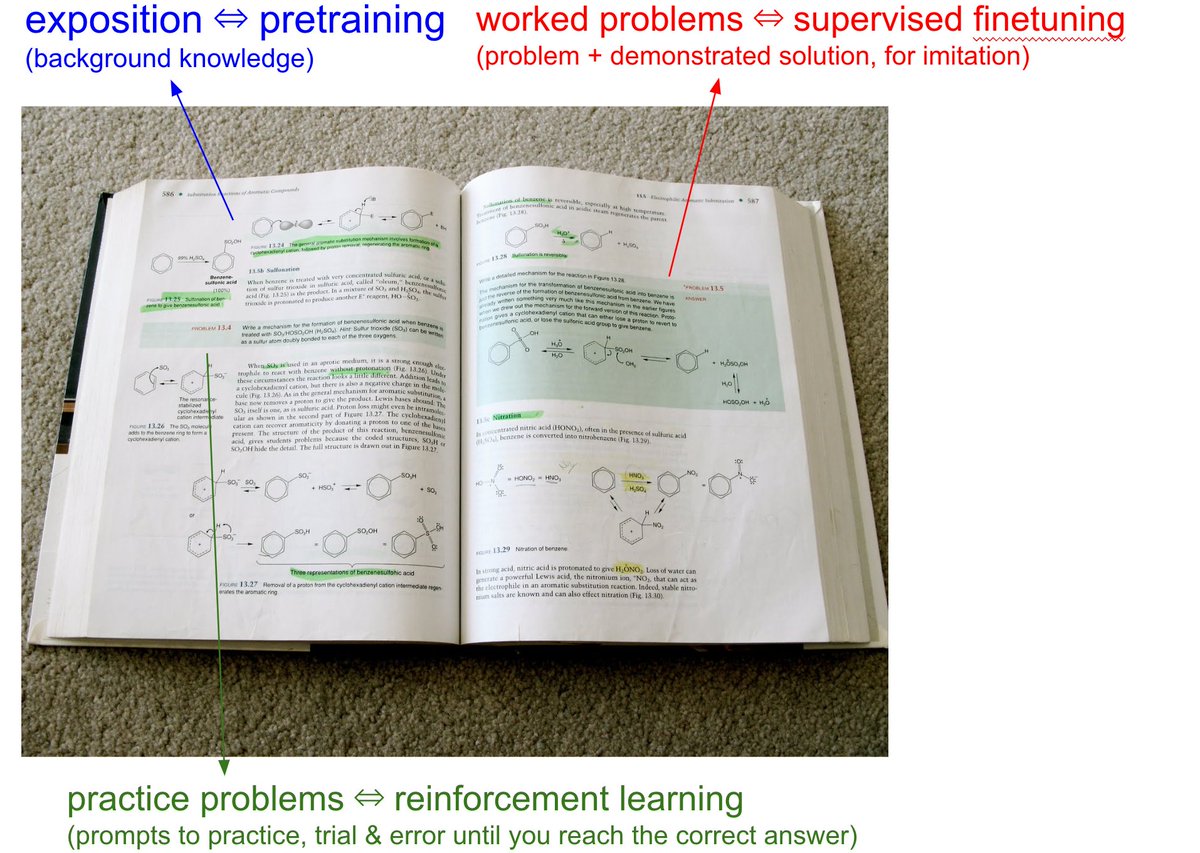

@karpathy and I are back! At @sequoia AI Ascent 2026. And a lot has changed. Last year, he coined “vibe coding”. This year, he’s never felt more behind as a programmer. The big shift: vibe coding raised the floor. Agentic engineering raises the ceiling. We talk about what it means to build seriously in the agent era. Not just moving faster. Building new things, with new tools, while preserving the parts that still require human taste, judgment, and understanding.