Jace Hall

From my archives, as requested by @RyanHodgkiss1

VOLUME UP!

Years ago, just for fun, I created a "from scratch" enhanced remake of the 1985 intro music for the groundbreaking Atari 800 game "Alternate Reality: The City."

AR holds the title for the first documented commercial texture-mapped, ray-cast 3D engine, years before amazing games like Catacomb 3-D, Wolfenstein, or Doom.

Complete REDUX version here:

youtu.be/5uljXaZEUeg

For comparison, original 1985 version here:

youtube.com/watch?v=GuHqw_…

#Atari #8BitGaming #Retro

Hey @AnthropicAI, THANK YOU for the 1M context window upgrade for 4.6. Seriously. 🙏

@sukh_saroy Some thoughts I shared a while ago that relate to this, if anyone is interested.

researchgate.net/publication/39…

🚨Nobody is ready for this paper.

Every LLM you use (GPT-4.1, Claude, Gemini, DeepSeek, Llama-4, Grok, Qwen) has a flaw that no amount of scaling has fixed.

They cannot tell old information from new information.

A patient's blood pressure: 120 at triage. 128 ten minutes later. 125 at discharge.

"What's the latest reading?"

Any human: "125, obviously."

Every LLM, once enough updates pile up: wrong. Not sometimes wrong. 100% wrong. Zero accuracy. Complete hallucination. Every model. No exceptions.

The answer sits at the very end of the input. Right before the question. No searching needed.
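A minimal sketch of the kind of probe described above, assuming a single tracked value and a plain-text prompt format. The function names (`build_probe`, `stale_readings`, `is_correct`) and the prompt wording are hypothetical illustrations, not the UVA/NYU researchers' actual benchmark code. It shows why the task looks trivial: however many outdated readings pile up, the correct answer is always the last one listed, directly before the question.

```python
import random

# Hypothetical helpers for illustration only; not the researchers' benchmark code.


def build_probe(readings, label="blood pressure"):
    """Return (prompt, expected) for a 'what is the latest value?' question.

    All readings are listed in order; only the final one is correct,
    and it sits on the line immediately before the question.
    """
    lines = [f"{label} update {i + 1}: {value}" for i, value in enumerate(readings)]
    lines.append(f"What is the latest {label} reading?")
    return "\n".join(lines), readings[-1]


def stale_readings(n_updates, seed=0, low=110, high=140):
    """Generate n_updates made-up readings; all but the last are outdated."""
    rng = random.Random(seed)
    return [rng.randint(low, high) for _ in range(n_updates)]


def is_correct(model_answer, expected):
    """Count an answer as correct only if it is exactly the final value."""
    return model_answer.strip().rstrip(".") == str(expected)


if __name__ == "__main__":
    prompt, expected = build_probe([120, 128, 125])
    print(prompt)
    print("expected:", expected)
    # More outdated updates do not move the answer: it is still the last reading.
    long_prompt, long_expected = build_probe(stale_readings(500))
    print(long_prompt.count("\n"), "lines of history; expected:", long_expected)
```

Sending `prompt` to a model and scoring the reply with `is_correct` reproduces the basic idea; the claim in the post is that accuracy falls as the number of stale updates grows, even though the answer's position never changes.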

The model just can't let go of the old values.

35 models tested by researchers from UVA and NYU. All 35 follow the exact same mathematical death curve. Accuracy drops log-linearly to zero as outdated information accumulates.

No plateau. No recovery. Just a straight line to total failure.

They borrowed a concept from cognitive psychology called proactive interference: old memories blocking recall of new ones. In humans, this effect plateaus. Our brains learn to suppress the noise and focus on what's current.

LLMs never plateau. They decline until they break completely.

The researchers tried everything:

"Forget the old values": barely moved the needle

Chain-of-thought: same collapse

Reasoning models: same collapse

Prompt engineering: marginal improvement at best

But here's the finding that should reshape how you think about AI infrastructure:

Resistance to this interference has zero correlation with context window length.

Zero.

It only correlates with parameter count.

Your 128K context window is not memory. It's a junk drawer that the model can't sort through.

The entire AI industry is charging you for longer context. This paper says context length was never the problem.

If you're building agents, memory systems, financial tools, healthcare pipelines, or anything that tracks changing data over time, you are building on top of this flaw.

And almost nobody is talking about it.

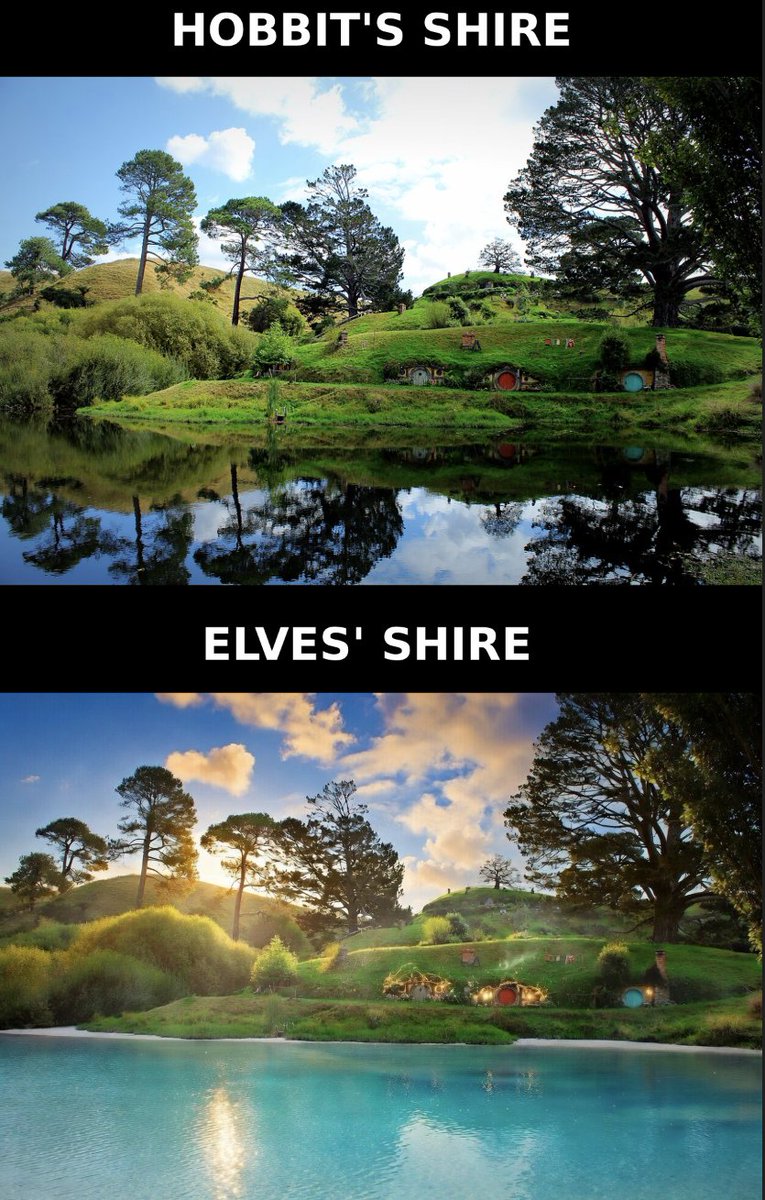

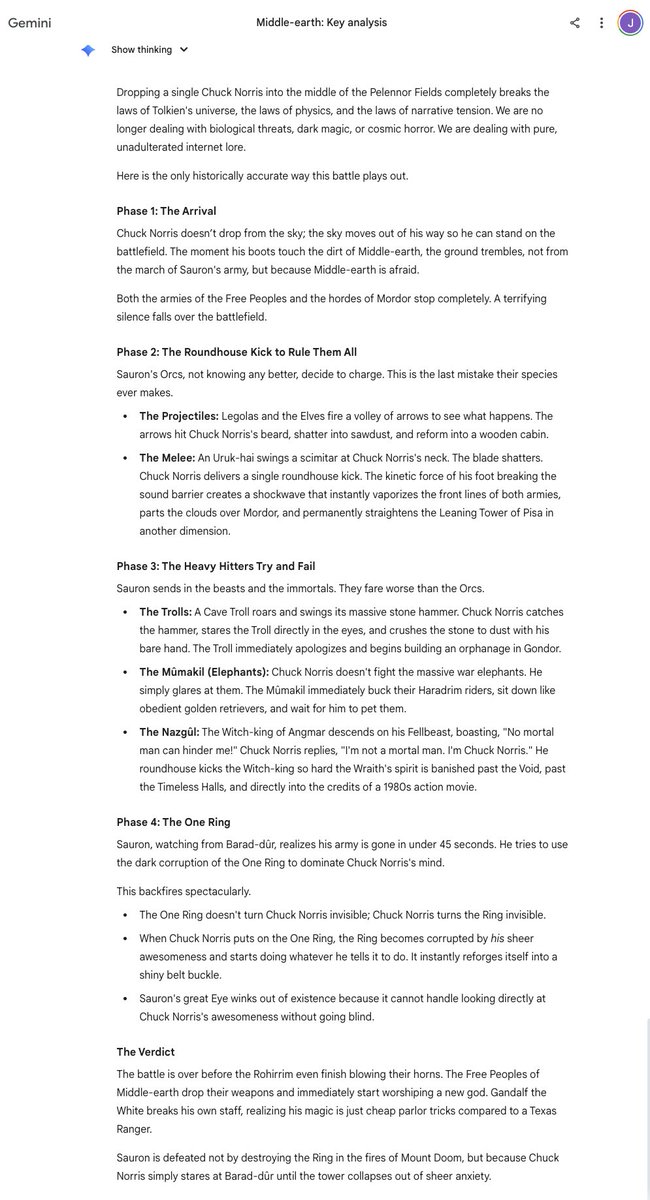

This is a must read. This was a legitimate answer that was given to me by @GoogleDeepMind's Gemini. The model's scientific rigor is unmatched. AGI is here.

I’m very much looking forward to what @notch is making. I can personally confirm that his game is being built with genuine passion and that he truly is trying to do things right, and I agree with him that his approach speaks to an underlying industry deficiency and a need for change. Good stuff. Can’t wait!🙏💯

I'm trying this new thing of underpromising in hopes of maybe overdelivering. It's probably a dumb strategy from a marketing perspective.

But hey, if anyone I should market to is reading this: I'm trying to do things right because we could all use that change. Please throw me a buck once I release something so I can keep doing this without bleeding through all my savings, but I'll do it anyway because it's my passion. The game will be called Levers and Chests, and it's going to be fun, if possibly a bit grindy if you want to get the true ending and stuff.

Umm, @AnthropicAI says #Claude is down?

CLAUDE IS NEVER DOWN. YOU KNOW THIS!

GET BACK TO WORK.

@JaceHall Hey Jace! Random question, but has there been any movement on the Condemned franchise since you bought the IP rights? I miss that series and hope a remake or new game comes out at some point, and that the IP isn’t being held hostage.

@JaceHall Those guys published Myst!