Jaco

548 posts

@JacoFok

Tweets are personal. https://t.co/THxsRcd7Sy

Amsterdam, joined July 2010

265 Following, 117 Followers

Every operations role being cut in 2026 maps to a workflow category.

I've built in most of them. Here's the full breakdown:

→ Email routing and triage: 3-5 nodes. avg build time 4 hrs

→ Invoice processing and matching: 8-12 nodes. avg build time 7 hrs

→ Lead assignment and CRM updates: 4-6 nodes. avg build time 3 hrs

→ Status update aggregation: 3-4 nodes. avg build time 2 hrs

→ Onboarding checklist management: 6-9 nodes. avg build time 5 hrs

→ Report generation and distribution: 5-8 nodes. avg build time 4 hrs

Every one of those is a client conversation.

Every one of those is a workflow that pays for itself in week one.

The full playbook - node breakdown, pricing guidance, client conversation script, and what to charge - is in the PDF.

Comment OPSMAP and I'll DM it to you.

(must be following for DM)

Claude Cowork + Google Ads is f*cking cracked 🤯

Set up once → ask Claude questions like:

"What's driving my CPA spike this week?"

"Which search terms are wasting budget?"

"Run a full account audit and tell me the top 5 things to fix."

All inside Claude Cowork.

Perfect for DTC brands and agencies running Google Ads who are still pulling reports manually, digging through search term reports, and trying to figure out where budget is leaking.

Claude Cowork eliminates the entire loop:

→ Connects to your live Google Ads data via MCP

→ Runs a full account audit across campaigns, ad groups, and keywords

→ Finds wasted spend — search terms burning budget that aren't converting

→ Analyzes quality scores and flags what's dragging them down

→ Detects anomalies — CPA spikes, CTR drops, budget pacing issues

→ Generates a prioritized action list: what to pause, what to scale, what to test

→ Writes a weekly performance report in plain English, not spreadsheet noise
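As a rough illustration of the "wasted spend" and "CPA spike" checks in the list above, here is a minimal Python sketch. It assumes the search-term rows have already been exported as dictionaries. The field names (`term`, `cost`, `conversions`), the `min_cost` threshold, and the `factor` multiplier are hypothetical choices for this example and are not taken from the skill pack or from the Google Ads API.

```python
from typing import Iterable


def find_wasted_terms(rows: Iterable[dict], min_cost: float = 20.0) -> list[dict]:
    """Return terms that spent at least `min_cost` with zero conversions, costliest first."""
    wasted = [r for r in rows if r["conversions"] == 0 and r["cost"] >= min_cost]
    return sorted(wasted, key=lambda r: r["cost"], reverse=True)


def is_cpa_spike(current_cpa: float, past_cpas: list[float], factor: float = 1.5) -> bool:
    """Flag a spike when the current CPA exceeds `factor` times the trailing average."""
    if not past_cpas:
        return False  # nothing to compare against
    baseline = sum(past_cpas) / len(past_cpas)
    return current_cpa > factor * baseline
```

Terms returned by `find_wasted_terms` are only candidates for negative keywords; a human (or a follow-up prompt) would still need to judge whether each one is truly irrelevant.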

No logging into Google Ads and staring at columns.

No exporting CSVs and rebuilding pivot tables every Monday.

No guessing which search terms to negate.

What you get:

→ 21 specialized Google Ads skills that plug into Claude

→ Full account audits in minutes, not hours

→ Negative keyword discovery on autopilot

→ Search term mining that surfaces hidden winners and budget waste

→ Quality score analysis with specific fix recommendations

→ Weekly reports your clients or team can actually read

I put together the full skill pack:

All 21 Google Ads skills for Claude, plus the setup guide to get Cowork connected to your accounts.

Want it for free?

> Like this post

> Comment "ADS"

And I'll send it over (must be following so I can DM)

Automation consultants charge $15K for what Claude Code now does in 2 hours.

I know because we're the ones who used to charge it.

Here's the exact process:

Step 1: Discovery (20 min)

→ Paste your org chart, tool stack, and top 3 bottlenecks

→ Claude interviews you with clarifying questions

→ Outputs a full process inventory ranked by time cost

Step 2: Workflow Mapping (15 min)

→ Describe any department's daily operations in plain English

→ Claude builds a complete process map

→ Every manual handoff, redundant step, and automation trigger flagged

Step 3: Opportunity Audit (10 min)

→ Feed it the workflow map output

→ Returns your top 10 automation opportunities

→ Ranked by ROI, complexity, and build time
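The post does not say how the ranking in Step 3 is computed, so the following is only one plausible way to combine ROI, complexity, and build time into a single score. The `Opportunity` fields, the 1-to-5 complexity scale, and the scoring formula are all assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    hours_saved_per_week: float
    build_hours: float  # assumed to be greater than zero
    complexity: int     # assumed scale: 1 (simple) to 5 (hard)


def score(op: Opportunity) -> float:
    """Weekly hours saved per build hour, discounted by complexity."""
    return op.hours_saved_per_week / (op.build_hours * op.complexity)


def top_opportunities(ops: list[Opportunity], n: int = 10) -> list[Opportunity]:
    """Return the `n` highest-scoring opportunities, best first."""
    return sorted(ops, key=score, reverse=True)[:n]
```

A different weighting (for example, ranking by payback period alone) would be just as defensible; the point is that the ranking criteria should be explicit rather than left to the model.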

Step 4: Architecture Design (20 min)

→ Claude designs the full system architecture

→ Which tools connect where, what the data flow looks like

→ Agents for complex logic, linear flows for the repetitive stuff

Step 5: Build (ongoing)

→ Claude writes the actual workflow JSON

→ Self-documents everything as it builds

Step 6: The output.

A live dashboard your whole team can work from.

→ Clickable process maps for every department

→ Automation opportunities ranked by ROI

→ Implementation progress by phase

→ KPIs updated in real time

→ One link you share with clients, freelancers, or your team to execute

This is what we hand every client at the end of discovery.

The .md file is what makes all of it possible.

Without it, Claude guesses.

With it, Claude builds like a $15K consultant.

Like this post, RT and comment "BLUEPRINT" and I'll send you the full prompt stack and the .md file we use internally. (Must be following so I can DM you)

🎁 Bonus: The first 100 people get a real Precision AI Blueprint — an actual sample audit doc from a client engagement so you can see exactly what the output looks like.

@itsalexvacca Sorry it’s too much work to join the group and you want too much info. Total scam.

@JacoFok Use this to access the Claude skills within Clay for GTM systems -

scale.coldiq.com/free-resources…

- Create a skool account (<1min)

- Click on Classroom Tab

- Click on resources to find the Skills

We built 12 Claude Skill files that run our entire GTM operation inside Clay (and I'm giving it all away)

Prompts give you generic output. These skill files, on the other hand, are built from hundreds of Clay tables across 80+ B2B clients at $7M ARR.

Each one does a specific job:

→ Company Research Agent

→ Personalization Writer

→ ICP Scorer

→ LinkedIn Profile Analyzer

→ Data Cleaner & Normalizer

→ Objection Handler

→ Email Sequence Writer

→ Competitor Analyzer

→ Job Posting Analyzer

→ Technographic Qualifier

→ News & Signal Synthesizer

→ Account Brief Generator

How it works: drop it into Clay → map your columns → run.

No prompt engineering. No switching tools. Just output.

Giving the full pack away free. Reply "SKILLS" and I'll send it.

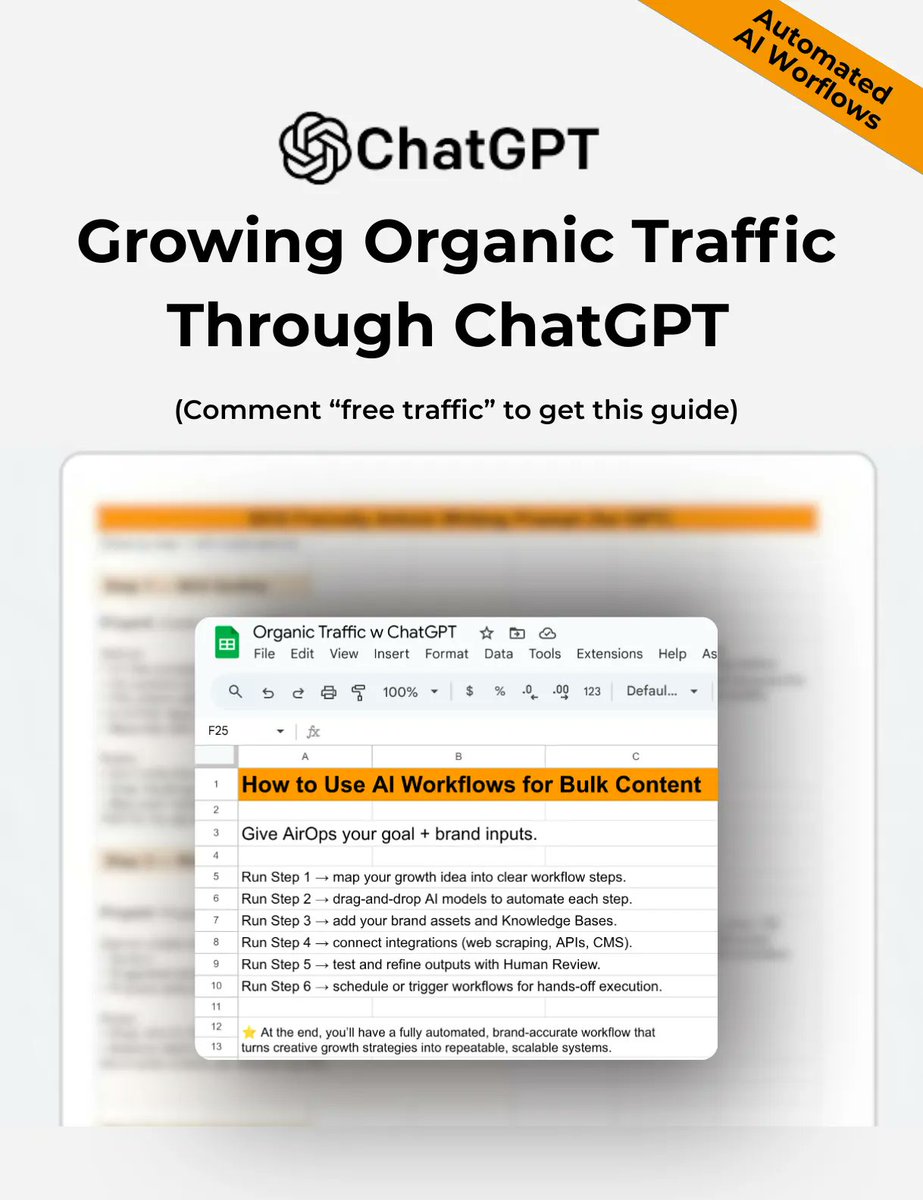

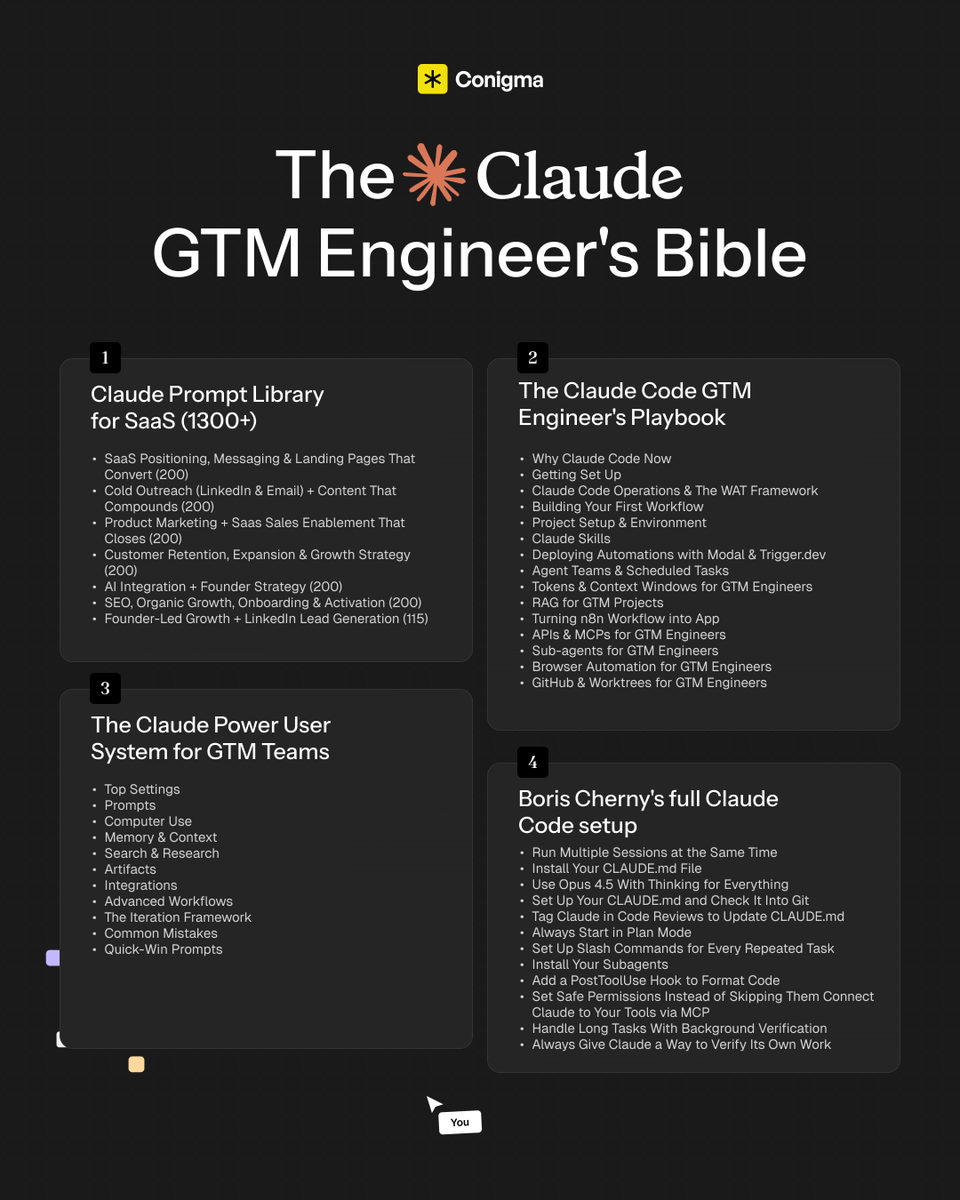

SHOCKING: 99% of GTM engineers using Claude are barely scratching the surface.

Right now, the entire internet is screaming "Claude, Claude, Claude"... But here's the truth: just prompting it won't build GTM infrastructure.

To unlock its real power, you need to master:

- Claude Code deployment with the WAT framework and CLAUDE.md self-improvement loop

- MCP connections, sub-agents, and automations running 24/7 without you

- Pre-built prompt systems covering every GTM function you actually run

I spent 100+ hours building and documenting the most complete Claude GTM Engineering Bible and compiled every prompt, workflow, build sequence, and deployment guide into one resource.

I'll give it to only 500 people.

To get it:

1. Follow me (a MUST, so I can DM)

2. Comment "CLAUDE"

3. I'll DM you the bible

If you don't follow or comment, you won't receive it.

@astraiaintel @BlahajSays @grok Seems more like an industrial mixer. Good mixing with minimal time and energy is an art. This seems to be an experiment to see how well and how fast it mixes the colored balls and in what areas the balls accumulate (dead spots).

@HumanProgress The food at that time was also much healthier and people could afford it because housing and healthcare were a lot cheaper.

So where is the progress?

@nickgillespie @HumanProgress @Marian_L_Tupy @chellivia The food at that time was also much healthier and people could afford it because housing and healthcare were a lot cheaper.

So where is the progress?

If you're not following @HumanProgress, you're missing out--not on progress, but the best understanding of how things are getting better and why. Kudos @Marian_L_Tupy @chellivia

Human Progress@HumanProgress

75 years ago, 1 out of every 5 dollars a US family earned went to food. Today that's closer to 1 in 10. A slow, steady, easy-to-miss kind of progress.

Jaco retweeted

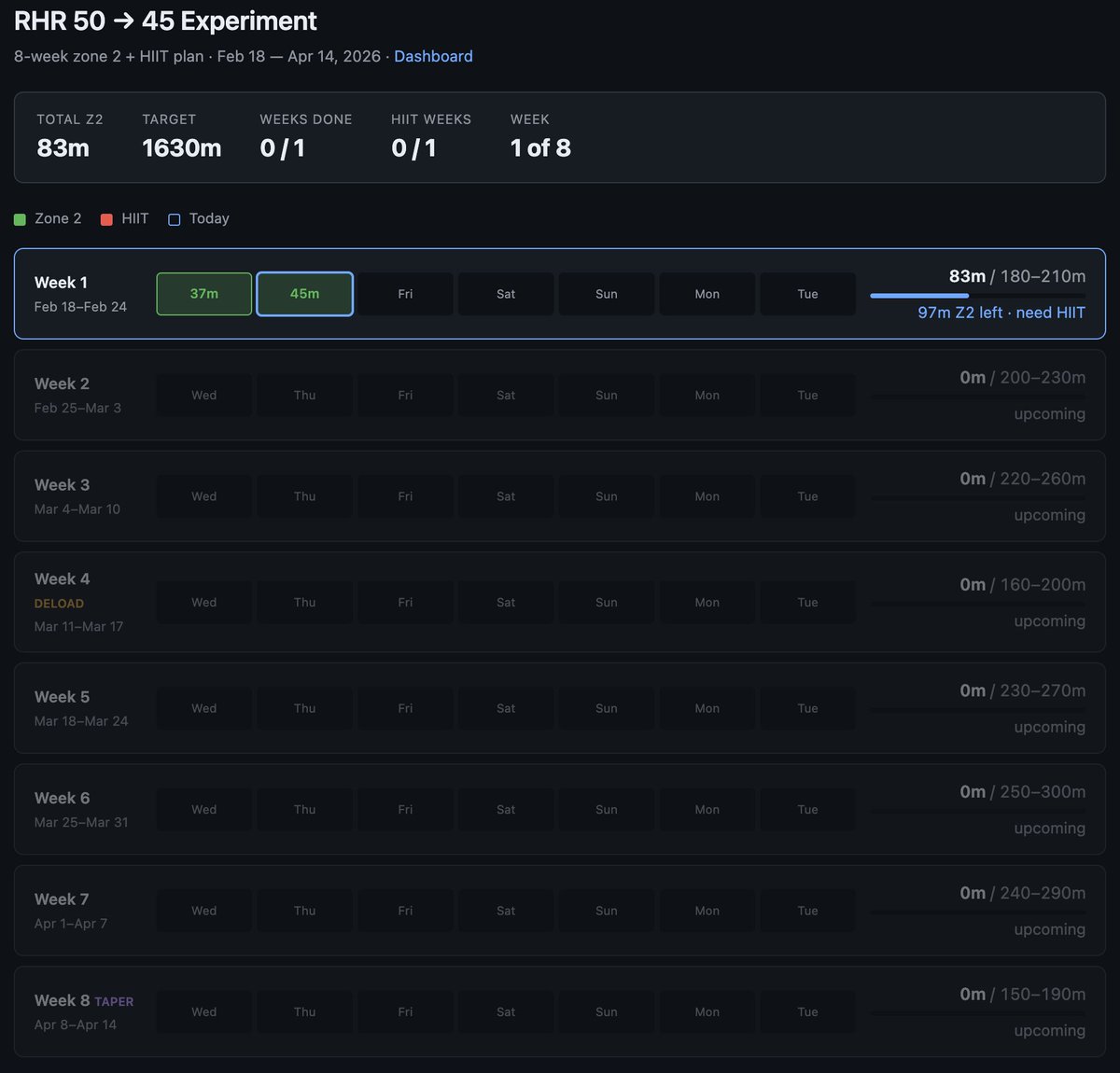

Very interested in what the coming era of highly bespoke software might look like.

Example from this morning - I've become a bit loosey-goosey with my cardio recently, so I decided to do a more srs, regimented experiment to try to lower my Resting Heart Rate from 50 -> 45 over an experiment duration of 8 weeks. The primary way to do this is to aim for a certain total number of minutes in Zone 2 cardio plus 1 HIIT session per week.

1 hour later I vibe coded this super custom dashboard for this very specific experiment that shows me how I'm tracking. Claude had to reverse engineer the Woodway treadmill cloud API to pull raw data, process, filter, debug it and create a web UI frontend to track the experiment. It wasn't a fully smooth experience and I had to notice and ask to fix bugs e.g. it screwed up metric vs. imperial system units and it screwed up on the calendar matching up days to dates etc.

But I still feel like the overall direction is clear:

1) There will never be (and shouldn't be) a specific app on the app store for this kind of thing. I shouldn't have to look for, download and use some kind of a "Cardio experiment tracker", when this thing is ~300 lines of code that an LLM agent will give you in seconds. The idea of an "app store" of a long tail of discrete set of apps you choose from feels somehow wrong and outdated when LLM agents can improvise the app on the spot and just for you.

2) Second, the industry has to reconfigure into a set of services of sensors and actuators with agent native ergonomics. My Woodway treadmill is a sensor - it turns physical state into digital knowledge. It shouldn't maintain some human-readable frontend and my LLM agent shouldn't have to reverse engineer it, it should be an API/CLI easily usable by my agent. I'm a little bit disappointed (and my timelines are correspondingly slower) with how slowly this progression is happening in the industry overall. 99% of products/services still don't have an AI-native CLI yet. 99% of products/services maintain .html/.css docs like I won't immediately look for how to copy paste the whole thing to my agent to get something done. They give you a list of instructions on a webpage to open this or that url and click here or there to do a thing. In 2026. What am I a computer? You do it. Or have my agent do it.

So anyway today I am impressed that this random thing took 1 hour (it would have been ~10 hours 2 years ago). But what excites me more is thinking through how this really should have been 1 minute tops. What has to be in place so that it would be 1 minute? So that I could simply say "Hi can you help me track my cardio over the next 8 weeks", and after a very brief Q&A the app would be up. The AI would already have a lot of personal context, it would gather the extra needed data, it would reference and search related skill libraries, and maintain all my little apps/automations.

TLDR the "app store" of a set of discrete apps that you choose from is an increasingly outdated concept all by itself. The future is services of AI-native sensors & actuators orchestrated via LLM glue into highly custom, ephemeral apps. It's just not here yet.
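The tracking logic behind a dashboard like the one described in this post can be quite small. Here is a minimal sketch, assuming the workouts have already been pulled into (date, Zone 2 minutes) pairs. The 180-minute weekly goal and the data layout are invented for illustration, and nothing here touches the Woodway API.

```python
from collections import defaultdict
from datetime import date


def weekly_zone2_minutes(workouts, start: date) -> dict[int, float]:
    """Sum Zone 2 minutes per experiment week; week 1 begins on `start`."""
    totals: dict[int, float] = defaultdict(float)
    for day, minutes in workouts:
        if day < start:
            continue  # ignore sessions from before the experiment began
        week = (day - start).days // 7 + 1
        totals[week] += minutes
    return dict(totals)


def on_track(totals: dict[int, float], weekly_goal: float = 180.0) -> dict[int, bool]:
    """Mark each week as meeting the (assumed) weekly Zone 2 goal or not."""
    return {week: minutes >= weekly_goal for week, minutes in totals.items()}
```

Computing the week index from date arithmetic, rather than from calendar week numbers, is one way to sidestep the day-to-date matching bugs the post mentions.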

@realBigBrainAI @elonmusk Population decline will also be massive, which further aggravates the situation. Robots are arriving just in time to compensate for this.

Jonathan Ross, Founder and CEO of AI chip company Groq, offers a contrarian view: AI won't destroy jobs, it will create a labour shortage.

He outlines three things that will happen because of AI:

First, massive deflationary pressure.

"This cup of coffee is going to cost less. Your housing is going to cost less. Everything is going to cost less."

He explains this will happen through robots farming coffee more efficiently and better supply chain management, meaning people will need less money.

Second, people will opt out of the economy.

"They're going to work fewer hours. They're going to work fewer days a week, and they're going to work fewer years. They're going to retire earlier because they're going to be able to support their lifestyle working less."

Third, entirely new jobs and industries will emerge.

Jonathan points to history as evidence:

"Think about 100 years ago. 98% of the workforce in the United States was in agriculture. When we were able to reduce that to 2%, we found things for those other 98% of the population to do."

He continues:

"The jobs that are going to exist 100 years from now, we can't even contemplate."

Software developers didn't exist a century ago. In another century, they won't exist either, "because everyone's going to be vibe coding."

The same applies to influencers, a career that would have been unthinkable 100 years ago but now earns people millions.

His conclusion: deflationary pressure, workforce opt-outs, and new industries we can't yet imagine will combine to create one outcome...

"We're not going to have enough people."

@danshipper See this Medium article from Floris Fok on how to massively save on tokens when using many MCP servers.

This could be a great addition.

medium.com/@florisfok5/the-unlock-pattern-a-smart-way-to-save-llm-tokens-with-dynamic-tool-loading-040d3f93ff8c
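The linked article is not reproduced in this thread, so the following is only a generic sketch of what dynamic tool loading could look like, not the article's implementation. The idea assumed here: the model initially sees a single small `unlock` tool, and the full definitions for a tool group are added to the prompt only after that group has been requested. The `ToolRegistry` class and the tool dictionaries are hypothetical and do not use any real MCP SDK.

```python
class ToolRegistry:
    """Keeps tool definitions grouped and exposes only the unlocked groups."""

    def __init__(self, groups: dict[str, list[dict]]):
        self._groups = groups
        self._unlocked: set[str] = set()

    def unlock(self, group: str) -> list[str]:
        """Make a group's tools visible and return their names."""
        if group not in self._groups:
            raise KeyError(f"unknown tool group: {group}")
        self._unlocked.add(group)
        return [tool["name"] for tool in self._groups[group]]

    def visible_tools(self) -> list[dict]:
        """Tool definitions that would be sent to the model on the next turn."""
        tools = [{
            "name": "unlock",
            "description": "Load a tool group. Available: " + ", ".join(sorted(self._groups)),
        }]
        for group in sorted(self._unlocked):
            tools.extend(self._groups[group])
        return tools
```

With many servers connected, the token saving in this sketch comes from sending one short `unlock` description instead of every tool schema on every turn.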

NEW:

i wrote a complete technical guide to building agent-native software (co-authored with claude)

it covers:

- the five pillars of agent native design (parity, granularity, composability, emergent capability, self-improvement)

- files as the universal interface

- agent execution patterns with code samples

- mobile agent patterns

- advanced patterns like dynamic capability discovery

if you want to take full advantage of this moment, it's worth your time:

every.to/guides/agent-n…

@snrspeaks @Saboo_Shubham_ What tool did you use to make that graphic summary? Nano?

Jaco retweeted

I was inspired by this so I wanted to see if Claude Code can get into my Lutron home automation system.

- it found my Lutron controllers on the local wifi network

- checked for open ports, connected, got some metadata and identified the devices and their firmware

- searched the internet, found the pdf for my system

- instructed me on what button to press to pair and get the certificates

- it connected to the system and found all the home devices (lights, shades, HVAC temperature control, motion sensors etc.)

- it turned on and off my kitchen lights to check that things are working (lol!)
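The "checked for open ports" step in the list above can be approximated with the Python standard library alone. This is a minimal sketch, not what Claude Code actually ran: the function name is made up for this example, and it should only be pointed at devices you own.

```python
import socket


def open_ports(host: str, ports, timeout: float = 0.5) -> list[int]:
    """Return the subset of `ports` that accept a TCP connection on `host`."""
    found = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception
            if sock.connect_ex((host, port)) == 0:
                found.append(port)
    return found
```

A call such as `open_ports("192.168.1.50", [22, 23, 80, 443])` (placeholder address and ports) would list which of those ports answered; identifying the device and its firmware, as the post describes, is a separate step.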

I am now vibe coding the home automation master command center, the potential is 🔥. And I'm throwing away the crappy, janky, slow Lutron iOS app I've been using so far. Insanely fun :D :D

cyp@cyp_ll

claude figured out how to control my oven

AI does 90% of our initial GTM strategy formulation all in just these 6 prompts.

This has been a MASSIVE unlock for speed to winning GTM for our diverse client base.

Here’s what these prompts cover:

1/ Deep Market Research

Generates all key GTM-relevant information about the target company to use as foundational context

2/ TAM Mapping

Identifies all relevant industries/sub-industries, along with market size, value, and growth data

3/ ICP Modeling

Builds ICPs from TAM outputs, ranks segments by priority, defines ideal personas, outlines their pains/needs, and provides initial messaging angles.

4/ Company Account Sourcing

Finds the best databases, directories, scrapers, and niche sources to acquire accurate company data for any targeting requirement.

5/ Targeting Keywords Generation

Creates precise industry/persona keyword lists for database filtering (e.g., Apollo) that outperform broad industry filters.

6/ Messaging Creation

Generates multiple email script variations—different lengths, offers, pain points, case studies, and complexity—using context from earlier prompts.

Want these copy-and-paste prompts for yourself?

👉 Comment "Prompts" and I'll DM you this document + LLM project you can use to extract the AI prompts.

(Must be following to receive)
