Jacob C Tanner

@JacobCTanner1

PhD Student, Complex Networks & Systems and Cognitive Science, Indiana University

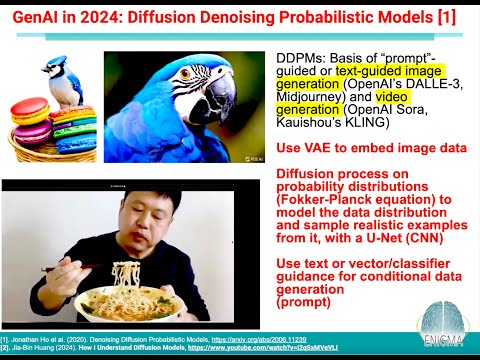

"This is just a stock image of a cat. Physicists are not allowed to own cats, for various reasons." (from a great lecture [1] on generative AI [2] by Jordan Cotler)

[1] youtube.com/watch?v=bHLdAF…

[2] The lecture is a very clear explanation of denoising diffusion probabilistic models (DDPMs [3]) and how they arise in statistical physics (diffusion of smoke in air or ink in a fluid, drift of sand dunes in the wind).

[3] DDPMs and their compressed extension, latent diffusion models (LDMs), are used in text-based image and video generation (Midjourney, Sora, Kling). A trickier but very clear lecture is the one by Jascha Sohl-Dickstein, who introduced diffusion probabilistic models, also featuring his dog (!): youtube.com/watch?v=XCUlnH…

[4] For an even deeper treatment, someone posted an entire book on Twitter tonight, which is pretty amazing: "Statistical Optimal Transport", arxiv.org/abs/2407.18163

#AI #DDPM