Johann Gevers

582 posts


@johanngevers

Building an optimal ecosystem for humanity. Founder of Crypto Valley.

Zug, Switzerland · Joined September 2009

3.3K Following · 3.4K Followers

Pinned Tweet

The most ironic outcome is capitalists paying for their own destruction.

Fate loves irony.

JOSH DUNLAP@JDunlap1974

The No Kings financial records have been released and here are the top financiers: ⚫ Arabella: $79M ⚫ Warren Buffett: $16M ⚫ Ford: $51M ⚫ Rockefeller: $26M ⚫ Soros: $72M ⚫ Tides: $45M For a total of: $294,487,641


@bryan_johnson So beautifully, tenderly written, Bryan. Thank you for sharing so openly.


It’s been 19 days and 20 hrs since I last felt Kate’s warm embrace. She landed 47 minutes ago. The 24 hours of travel no doubt has her rushing to shower. She needs to cleanse herself of a dirtied world incompatible with her sensibilities. The wash doubles as a ritual, preparatory for entrance into the symbolic world we’ve constructed.

The time apart has been costly. My body’s electrical signaling betrays the separation. Without her touch, my vagus nerve’s 100,000 myelinated fibers have dropped their high frequency spectral power, squawking distress. An intelligent system broadcasting diminished wave forms, hoping to be heard. There are other signals of distress.

My white blood cells have shifted their gene expression, upregulating pro-inflammatory genes IL-6 and TNF-alpha and downregulating my antiviral genes. A pro-aging biochemical signature of a system suffering hardship.

My environment is a pristine anti-aging laboratory. Air, water, food and light are meticulously measured. Toxins are filtered. Purification systems run autonomously. Biomarkers tracked. Nutrition is calibrated.

Yet outside my control is the affection of another. The 68 trillion cells that constitute Bryan Johnson run non-negotiable code. They demand tenderness, and not of a whimsical type, but deep, all-encompassing love that must be earned and carefully maintained. Otherwise they protest in self-termination.

She’s now only 13 miles away and I can viscerally feel her essence. The transmission pulses in high fidelity. As if there were a fiber optic cable streaming our connection at light speed through the multiplexed cylinders of glass. The time apart created latency, buffering the connection, depriving us of the luminescence and dimming into noise.

In 15 minutes she will be within reach. I can visualize the whites of her eyes and smell her aroma. When she arrives, she will be shy. Whenever we are apart, she returns to zero. Her previous openness will be closed. Her emotional dynamic range will be held in reserve until she feels she is safe and can trust. I’ll need to kindle her again. The rush of the courtship enthralls me.

The anticipation drives a small cluster of my midbrain neurons to flood dopamine. Nerve fibers activate, lighting up my skin’s receptors as it awaits slow, caressing touch. My hypothalamus begins synthesizing oxytocin, preparing to dump it upon first eye contact to ensure the reestablishment of our pair bond. This biochemical orchestra fills me with delight and sensorial want.

Kate’s been mulling over what she’ll wear for days. She’s considered dozens of possibilities and modeled out my anticipated emotional state, the weather, and our planned activities. The colors will be representative of her psychological state and be positioned to soothe mine. The texture, style, and hues will interplay with our biology. The deliberately chosen accessories will add flair, intrigue and play. This is how she flirts, seduces and bypasses my mind to speak directly to my physiology. She has other tricks too.

She’s arrived. I must wait for her. Her timidness will want to determine the cadence. I hear the door crack open and her bag drop to the floor. She’s nervous. I’m on the couch, neutral and open. She rounds the corner and our eyes meet. The inhibitions wither as the magnetism draws us together. Soft hellos are whispered and our bodies interdigitate.

I feel her fingertips on the back of my neck. Goosebumps light up my body. Skin nerve cells fire signals directly to my brain, bypassing the analytical mind. The hypothalamus dumps the oxytocin, inhibiting fear and lowering cortisol. The body washes itself in this anti-inflammatory chain reaction. Our respiration and heartbeats are now synchronizing. The brain piles on with a release of endorphins to soothe the psychological pain of our separation. New powers are now in control. Let them run in glory.

I press my cheek against hers. The skin on skin triggers a wave of desire. I brush her lips with mine, catalyzing a massive activation of neurons in her brain, overwhelming thought and forcing presence.

She relents and wants to dance. She’s home.

I slip my hand under her shirt and brush the small of her back. Goosebumps spread like a wildfire across her body. Her hypothalamus stimulates the release of GnRH which tells the pituitary gland to wake up her reproductive system. Our olfactory systems consume each other with delight, signaling immune system compatibility.

I move both my hands to her jawline, holding her head firmly in place. Our mirror neurons speak to each other. I know what she wants. My lips press against hers and I softly bite her lower lip. Kate’s blood vessels dilate from the acetylcholine and nitric oxide release, flushing her lips, skin and body. The cascade is nearing waterfall.

The executive control of our brains surrenders. No longer concerned with the 68 trillion cells. The prefrontal cortex goes dark. Eliminating future planning and probabilistic modeling. Activity in our parietal lobes diminishes, dissolving the boundary that distinguishes between self and other. No longer is there Kate and Bryan, just a singular biological entity suspended in a state of bliss. The outside world goes quiet. It doesn’t exist. We dissolve into raw existence.


@bryan_johnson 1. bodyset 2. mindset 3. enviroset — in order of preparation: bodyset takes the longest to prepare, mindset the second longest, and enviroset is the fastest to prepare.


"set" and "setting" profoundly influence psychedelic experience.

I propose a third pillar: bodyset. Health biomarkers meaningfully influence how the brain, mind and body receive and process psychedelics.

Here’s the idea: psychedelics don’t land on a blank canvas. They interact with the autonomic nervous system, inflammatory load, metabolic stability, sleep quality, hormonal environment, and overall physiological resilience of the person taking them. These internal conditions may tilt an experience toward insight and bliss or toward anxiety and dysregulation.

If this is accurate, then biomarkers could help predict the direction, intensity and quality of a trip. Bodyset is an effort to qualify this third pillar.

By measuring 249 biomarkers, in what is the most quantified psychedelic experience ever, my team and I are making a first attempt to map the foundational physiological patterns that may reliably predict an optimal psychedelic experience.

Below is what the field already knows about set and setting, and how bodyset expands the framework.

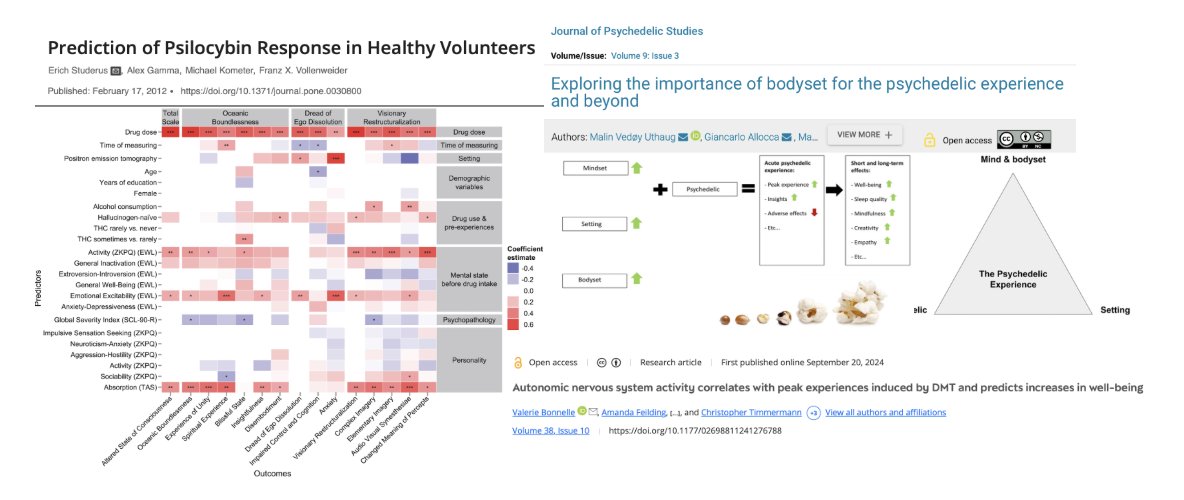

1/ Set and setting strongly shape psilocybin outcomes

Mental state "set" and environment "setting" have been shown to influence nearly every dimension of the psychedelic experience. In a study analyzing 400+ psilocybin sessions across 261 participants, set and setting shaped three core experiential domains:

+ Oceanic Boundlessness (OBN): measures positive feelings, including bliss, unity, transcendence, and spiritual experience.

+ Dread of Ego Dissolution (DED): measures negative feelings associated with "bad trips," such as anxiety and loss of self-control.

+ Visionary Reconstructivization (VRC): measures hallucinogenic effects, including visual hallucinations and changes in sensory interpretation.

Here are some findings:

+ Dose positively correlated with all three dimensions.

+ Setting matters: PET scanner environments increased anxiety and DED, highlighting how sterile or restrictive environments shape negative outcomes. Makes me grateful I have the kernel brain imager to measure my brain in real time while being in an open, positive environment.

+ Openness predicted positive experiences (OBN, VRC)

+ Physical activity before dosing predicted more bliss and vivid imagery

+ Emotional excitability predisposed participants to higher anxiety but also increased insight. Younger age was also linked to more anxiety.

+ Recent psychological problems blunted positive aspects, reducing feelings of bliss, boundlessness, and complex imagery.

2/ Bodyset: the emerging foundation for prediction and optimization

Physical health is emerging as a third determinant of psychedelic response alongside set and setting.

This is supported by evidence linking physical biomarkers to mental states:

+ Cortisol, an index of stress physiology, correlates with well-being

+ BDNF, crucial for neuroplasticity, is altered in many psychological disorders

+ HRV predicts MDMA treatment response in depression

+ Autonomic balance before DMT predicts co-activation and the intensity/positivity of the experience

+ Inflammation affects emotional regulation and could plausibly shift a trip’s emotional valence

These early findings suggest physical markers may shape how psychedelics interact with the brain, influencing depth, clarity, insight, emotional tone, and resilience.

Like a popcorn kernel needing the right conditions to pop, a successful psychedelic experience requires the right set, setting, and bodyset.


@leecronin Terrence Deacon develops a compelling new theory of how life and consciousness emerge from physics and chemistry in "Incomplete Nature: How Mind Emerged From Matter". A work of genius.

amazon.com/Incomplete-Nat…


@VictorTaelin I admire that you're trying something radically new. What is your projected timeline for completing your symbolic AI architecture?


I already wrote it a few times but ppl still don't get it ):

It is so simple though. Let me try again.

So, I have two goals.

The short-term goal is to build the fastest algorithm / proof miner. You give it a bunch of equations (or theorems), and it finds you an algorithm/proof that satisfies these equations.

This isn't immediately world-changing in any way, but can have some niche applications, like instantly filling parts of incomplete code without needing LLMs and with 100% accuracy, which I'll demo soon. I'll integrate that on Bend and ship it so that everyone can try.

The long-term goal is to use that thing as the foundation of a symbolic AI architecture. Intelligence is not hard, it emerges when many small learning units evolve towards a higher goal. That's what neural nets do. They're just limited because the graph is too hardwired and GD is too slow. I'll replace neurons by units that learn functions by superposed search, write that as a network, and then attempt to make it learn. Since currently symbolic methods suck, doing so will AT LEAST require a MUCH faster way to do symbolic regression, which is what SupGen is about.

If it works, we'll have a "GPT-1" of sorts, i.e., a system that completes text, except there will be no matmuls, no GD. And then we'll start exploring what it is capable of. I believe such a thing will not have the same limitations as transformers, simply because it is a completely new architecture, and we can only expect it will behave differently. But I could be wrong and it will be utterly useless. Most think that's the most likely outcome. I just want to find out.

In short, I just want to re-implement GPT-1 without floats, matmuls, derivatives, etc. - just discrete symbols - and see what happens. That's basically it really, and not much else.
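The "give it equations, get back a program" idea described above can be illustrated with a toy enumerative synthesizer. This is only a minimal sketch and not SupGen or anything from Bend: the tiny expression grammar, the names `enumerate_exprs` and `synthesize`, and the use of input/output pairs as the "equations" are all assumptions made for illustration.

```python
import operator
from itertools import product

# Hypothetical toy grammar: expressions over one variable x, built from
# the leaves x, 1, 2 and the binary operators +, -, *.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def combine(op, f, g):
    """Build the function x -> op(f(x), g(x))."""
    return lambda x: op(f(x), g(x))


def enumerate_exprs(max_size):
    """Yield (source, function) pairs, smallest expressions first.

    Size counts nodes: a leaf has size 1, a binary node adds 1.
    """
    by_size = {1: [("x", lambda x: x), ("1", lambda x: 1), ("2", lambda x: 2)]}
    yield from by_size[1]
    for size in range(2, max_size + 1):
        level = []
        for left_size in range(1, size - 1):
            right_size = size - 1 - left_size
            pairs = product(by_size[left_size], by_size[right_size])
            for (ls, lf), (rs, rf) in pairs:
                for sym, op in OPS.items():
                    level.append((f"({ls} {sym} {rs})", combine(op, lf, rf)))
        by_size[size] = level
        yield from level


def synthesize(examples, max_size=7):
    """Return the first (hence smallest) expression matching every example."""
    for src, fn in enumerate_exprs(max_size):
        if all(fn(x) == y for x, y in examples):
            return src
    return None


# "Equations" given as input/output pairs for the unknown function f.
print(synthesize([(0, 1), (1, 2), (2, 5), (3, 10)]))
```

The search space here grows exponentially with expression size, which is the bottleneck the post says a faster synthesizer would need to overcome.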

Joseph Thacker@rez0__

Taelin I know this is a really really dumb question but if I’m thinking it then I bet most of your followers are too. What exactly is the end goal of your big project that you’re working on? Is it a new programming language that would be better for AI models to write in or something else?


Based on your criteria, @Sapient_Int may be a good fit for HOC: well-funded world-class team using a sophisticated higher-order architectural approach instead of brute force scaling. Impressive achievements orders of magnitude more efficient and performant than top conventional labs. Contact CEO Guan Wang @makingagi


@VictorTaelin HOC seems like a natural fit for @WolframResearch @stephen_wolfram @getjonwithit

Have you reached out to them?


Victor, agreed — to create AGI we must go beyond statistical LLMs and achieve true conceptual (what you call symbolic) inference.

In my view, the breakthrough foundational insight for what conceptual thinking is — measurement omission — is described in Ayn Rand's "Introduction to Objectivist Epistemology".

Some indy AGI labs — such as aigo.ai — have already started working on implementing this insight to create true AGI. I think integrating Rand's insights into the nature of concepts with your HOC technologies could yield the critical breakthrough to true AGI. Measurement omission is the missing link.


I completely see where you're coming from!

but also consider this counterpoint: that the laws that rule our universe are symbolic. physics can be expressed better in Haskell than as a neural net with tons of weights.

we just don't know how to find these functions fast enough!

now, if we do develop a system capable of, given a *set of observations*, deriving the simplest formula that *explains* these observations... then, that same system can give birth to a very capable AI system, because it would be able to dynamically model and navigate through any environments it encounters!

in short - AGI is basically a metric of "how fast you can model a new set of observations". GPTs suck at that metric (literally cost millions to model a dataset), and the culprit is obvious: matmuls are expensive. by cutting the middleman and modeling the equations directly, our system becomes much more efficient at learning and, thus, closer to AGI

does that make sense?


suffering from chatbot fatigue?

frustrated that singularity was cancelled?

looking for something new to give you hope?

here is my delusional, but "hey, it kinda makes sense" plan to build super-intelligence in my small indie research lab

(note: I'll trade accuracy for pedagogy)

first, a background:

I'm a 33-year-old guy who spent the last 22 years programming. over time, I've asked many questions about the nature of computing, and accumulated some quite... peculiar... insights. a few years ago, I built HVM, a system capable of running programs in an esoteric language called "Haskell" on the GPU - yes, the same chip that made deep learning work, and sparked this entire AI cycle.

but how does Haskell relate to AI?

well, that's a long story. as the elders might remember, back then, what we called "AI" was... different. nearly 3 decades ago, for the first time ever, a computer defeated the world champion at chess, sparking many debates about AGI and singularity - just like today!

the system, named Deep Blue, was very different from the models we have today. it didn't use transformers. it didn't use neural networks at all. in fact, there was no "model". it was a pure "symbolic AI", meaning it was just a plain old algorithm, which scanned billions of possible moves, faster and deeper than any human could, beating us by sheer brute force.
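To make "a plain old algorithm" that searches possible moves concrete, here is a minimal sketch of exhaustive game-tree search on a toy game. It is not Deep Blue's algorithm, which relied on far more elaborate search and dedicated chess hardware. The game (one pile of stones, take 1 to 3 per turn, whoever takes the last stone wins) and the names `best_outcome` and `best_move` are assumptions chosen for illustration.

```python
from functools import lru_cache

MOVES = (1, 2, 3)  # hypothetical rule: take 1, 2, or 3 stones per turn


@lru_cache(maxsize=None)  # cache repeated positions; the search is still exhaustive
def best_outcome(stones):
    """Return +1 if the player to move can force a win, otherwise -1."""
    if stones == 0:
        # No stones left: the previous player took the last one and won.
        return -1
    # Try every legal move; the opponent's best result is our worst, hence the minus.
    return max(-best_outcome(stones - take) for take in MOVES if take <= stones)


def best_move(stones):
    """Pick the legal move that leaves the opponent in the worst position."""
    legal = [take for take in MOVES if take <= stones]
    return max(legal, key=lambda take: -best_outcome(stones - take))


print(best_move(7), best_outcome(4))
```

There is no learned model anywhere in this code: every decision comes from looking ahead through all continuations, which is the sense in which the post calls such systems purely symbolic.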

this sparked a wave of promising symbolic AI research. evolutionary algorithms, knowledge graphs, automated theorem proving, SAT/SMT solvers, constraint solvers, expert systems, and much more. sadly, over time, the approach hit a wall. hand-built rules didn't scale, symbolic systems weren't able to *learn* dynamically, and the bubble burst. a new AI winter started.

it was only years later that a curious alignment of factors changed everything. researchers dusted off an old idea - neural networks - but this time, they had something new: GPUs. these graphics chips, originally built for rendering video games, turned out to be perfect for the massive matrix multiplications that neural nets required. suddenly, what took weeks could be done in hours. deep learning exploded, and here we are today, with transformers eating the world.

but here's the thing: we only ported *one* branch of AI to GPUs - the connectionist, numerical one. the symbolic side? it's still stuck in the CPU stone age.

Haskell is a special language, because it unifies the language of proofs (i.e., the idiom mathematicians use to express theorems) with the language of programming (i.e., what devs use to build apps). this makes it uniquely suited for symbolic reasoning - the exact kind of computation that Deep Blue used, but now we can run it massively parallel on modern hardware.

(to be more accurate, just massive GPU parallelism isn't the only thing HVM brings to the table. turns out it also results in *asymptotic* speedups in some cases. and this is a key reason to believe in our approach: past symbolic methods weren't just computationally starved. they were exponentially slow, in an algorithmic sense. no wonder they didn't work. they had no chance to.)

my thesis is simple: now that I can run Haskell on GPUs, and given this asymptotic speedup, I'm in a position to resurrect these old symbolic AI methods, scale them up by orders of magnitude, and see what happens. maybe, just maybe, one of them will surprise us.

our first milestone is already in motion: we've built the world's fastest program/proof synthesizer, which I call SupGen. or NeoGen. or QuickGen? we'll release it as an update to our "Bend" language, making it publicly available around late October.

then, later this year, we'll use it as the foundation for a new research program, seeking a pure symbolic architecture that can actually learn from data and build generalizations - not through gradient descent and backpropagation, but through logical reasoning and program synthesis.

our first experiments will be very simple (not unlike GPT-2), and the main milestone would be to have a "next token completion tool" that is 100% free from neural nets.

if this works, it could be a ground-breaking leap beyond transformers and deep learning, because it is an entirely new approach that would most likely get rid of many GPT-inherited limitations that AIs have today. not just tokenizer issues (like the R's in strawberry), but fundamental issues that prevent GPTs from learning efficiently and generalizing

delusional? probably

worth trying? absolutely

(now guess how much was AI-generated, and which model I used)


Johann Gevers retweeted

8 years? After successful non-human primate trials, human age reversal trials are set to begin in 6 months @lifebiosciences

Rand@rand_longevity

Aging will be reversible in humans within 8 years


Curt, you may enjoy @MaxMore1964's PhD dissertation "The Diachronic Self: Identity, Continuity, Transformation" which goes beyond the Ship of Theseus thought experiment to examine questions of personal identity over time in contexts of radical change and transformation such as mind uploading, radical life extension, and transhumanist bodily transformation:

web.archive.org/web/2004061018…


@TOEwithCurt @Steph_Curdy If @getjonwithit could integrate Deacon's insights with the Gorard-Wolfram models, I think we would have the foundational building blocks for real, intelligent AI.


@Steph_Curdy @johanngevers youtu.be/PqZp7MlRC5g Yes, I spoke to Terrence... I don't know if I sent this already, but here you go. Hopefully you enjoy.



@TOEwithCurt @Steph_Curdy Yes, thanks Curt, you linked to it shortly after I mentioned it, and I watched the whole interview — excellent! The clarity and logical structure with which Deacon conveys his thinking is truly amazing.


Yes, I interviewed Terrence Deacon. youtu.be/PqZp7MlRC5g It's a banger. You'll love it. Here's the fixed URL.


Randy Stout@RandyStout18

@TOEwithCurt I cannot access. Is it something I am doing on my side?


@elonmusk Happy Birthday, Elon! Thank you for your heroic, superhuman courage, grit, and endurance — for stepping into the fire, chewing glass, descending into the abyss and fighting monsters day after day, year after year to protect and create a great future for humanity.
