Jonas Templestein

@jonas

CEO https://t.co/7dJOmc0va5, prev. cofounder/CTO Monzo, dad of three

GPT 5.5 has changed my life. My kid has been sick for the past couple of days and I've been hanging out with him, but I set up a tmux fork with TTS and automatic SSHing into all my boxes. And man, I'm getting more work done than ever.

Source: Anthropic is in advanced talks to acquire New York-based Stainless, which helps developers generate SDKs from APIs, for at least $300M (The Information)

🚨 New LEGO Lord of the Rings Minas Tirith Set Coming In June! ($650) This gargantuan 8,278-piece model of the Tower of the Guard brings Gondor's capital to life in epic fashion, and will be released on 1 June 2026 via LEGO Insiders Early Access. The set comes with 10 minifigures, each with incredible detailing, including prints on arms, torsos, and armour. No expense has been spared here. Oh, and there are no stickers either. Fans who order LEGO Minas Tirith between 1 and 6 June 2026 will also receive 40893 Grond as a GWP (gift with purchase) while stocks last. This set is just utterly stunning, and LEGO have outdone themselves here.

maybe every MCP server can be 3 tools:
- describe(filter) => schema: get all capabilities
- search(input) => toolcalls[]: for a (maybe unstructured) language query (+ opt. metadata), get a sequence/tree of toolcalls
- execute(tools) => result: take that list above and run it
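The three-tool idea above can be sketched in plain Python. This is a minimal, hypothetical sketch and not the MCP SDK's API. The `Tool`, `ToolCall`, and `ThreeToolServer` names, the dict-based schema, and the keyword-matching planner inside `search` are all assumptions. The planner stands in for whatever a real server would use, likely a language model. It also returns a flat sequence of calls rather than a tree.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Tool:
    # Hypothetical tool record; "params" maps parameter name -> type name.
    name: str
    description: str
    params: Dict[str, str]
    fn: Callable[..., Any]


@dataclass
class ToolCall:
    name: str
    args: Dict[str, Any] = field(default_factory=dict)


class ThreeToolServer:
    """Exposes an arbitrary tool set through only describe/search/execute."""

    def __init__(self, tools: List[Tool]) -> None:
        self.tools = {t.name: t for t in tools}

    def describe(self, filter_text: str = "") -> List[Dict[str, Any]]:
        # Return the schema of every capability whose name or description
        # contains filter_text (case-insensitive); "" matches everything.
        f = filter_text.lower()
        return [
            {"name": t.name, "description": t.description, "params": t.params}
            for t in self.tools.values()
            if f in t.name.lower() or f in t.description.lower()
        ]

    def search(self, query: str, metadata: Optional[Dict[str, Any]] = None) -> List[ToolCall]:
        # Placeholder planner (an assumption): pick every tool whose name appears
        # in the query, ordered by where it appears, and fill each call's args
        # from the metadata keys that match that tool's parameters.
        q = query.lower()
        hits = sorted((q.find(name.lower()), name) for name in self.tools if name.lower() in q)
        meta = metadata or {}
        return [
            ToolCall(name, {k: v for k, v in meta.items() if k in self.tools[name].params})
            for _, name in hits
        ]

    def execute(self, calls: List[ToolCall]) -> List[Any]:
        # Run the planned calls in order and collect each result.
        return [self.tools[c.name].fn(**c.args) for c in calls]


server = ThreeToolServer([
    Tool("add", "Add two integers", {"a": "int", "b": "int"}, lambda a, b: a + b),
    Tool("greet", "Greet someone by name", {"name": "str"}, lambda name: f"hello {name}"),
])
print([t["name"] for t in server.describe("greet")])  # ['greet']
plan = server.search("greet then add", {"name": "ada", "a": 2, "b": 3})
print(server.execute(plan))  # ['hello ada', 5]
```

In this design, all tool-specific knowledge lives in the schema returned by `describe`, so a client only ever needs three entry points. Replacing the keyword matcher with a model-driven planner would leave that surface unchanged.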

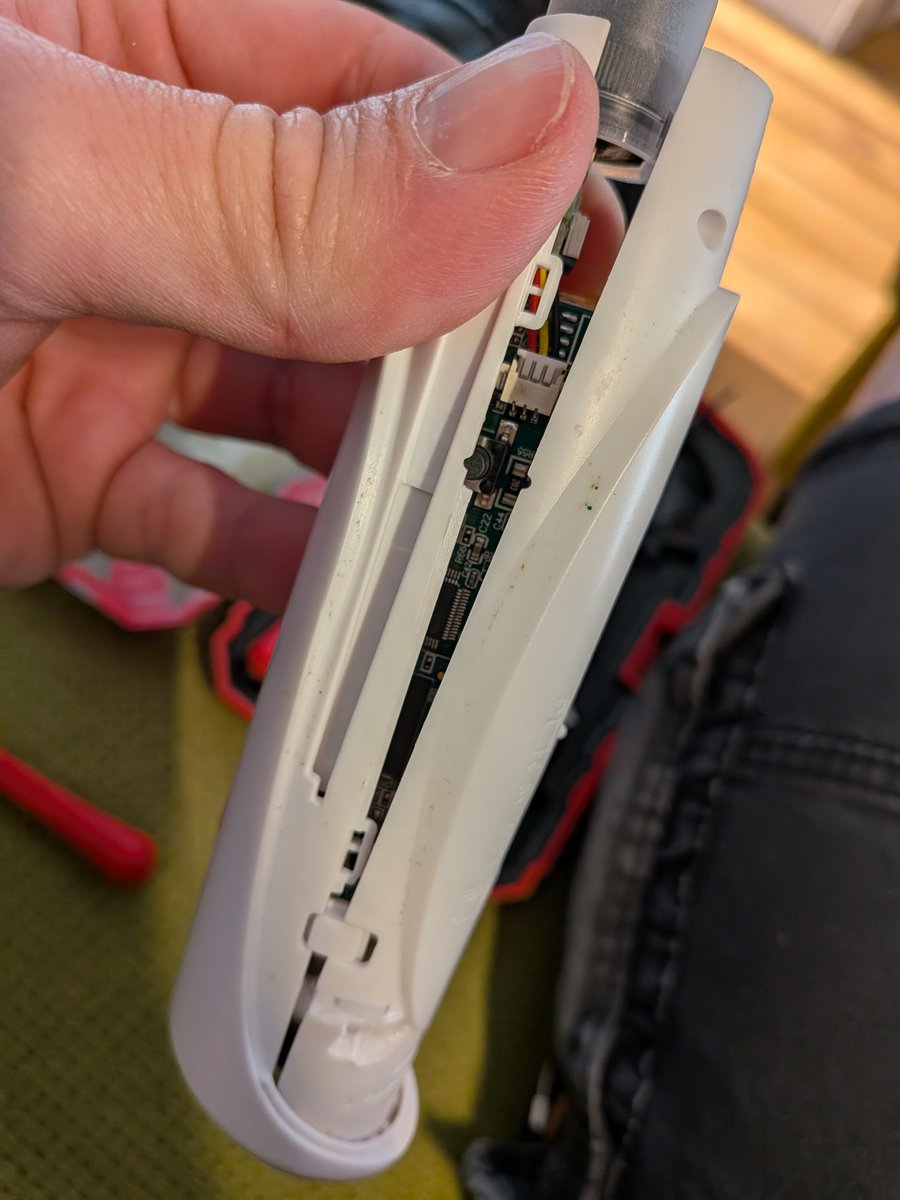

New friend just arrived! Been counting the days 🤪 Hello World Reachy Mini 🦾 As easy & cool as Lego, seriously promising. Shipped an app to the Reachy Mini App Store in just a few hours! 👉huggingface.co/spaces/Taikos/… Congrats @pollenrobotics @huggingface @ClementDelangue and the team!

AOC: “There’s a certain level of wealth and accumulation that is unearned. You can’t earn a billion dollars. You just can’t earn that. You can get market power, you can break rules, you can abuse labor laws, you can pay people less than what they’re worth, but you can’t earn that”

I’m convinced: you can compensate for a “worse” model with your own human intelligence and abilities. Unfortunately, the corollary… yeah.