Jonathan Heek

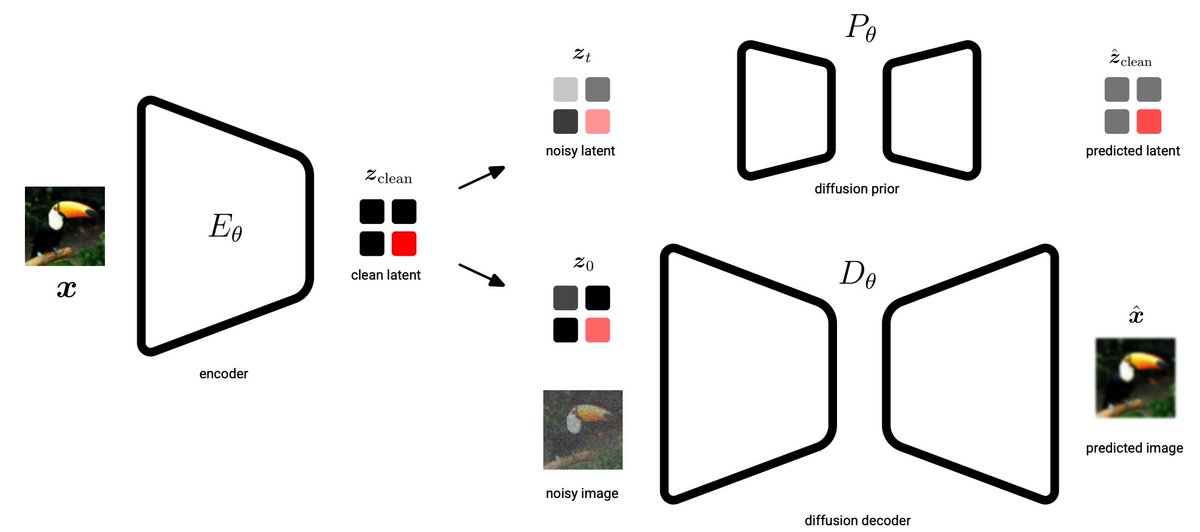

1/6 Introducing Unified Latents: what if your diffusion model's latents were measured in bits? Instead of relying on dimensionality reduction, we learn a latent AE with explicit bitrate control.

Paper: arxiv.org/abs/2602.17270

@emiel_hoogeboom, @TimSalimans

Jonathan Heek retweeted

Is pixel diffusion passé?

In 'Simpler Diffusion' (arxiv.org/abs/2410.19324), we achieve 1.5 FID on ImageNet512, and SOTA on 128x128 and 256x256.

We ablated out a lot of complexity, making it truly 'simpler'. w/ @tejmensink @JonathanHeek @KayLamerigts @RuiqiGao @TimSalimans

Jonathan Heek retweeted

🚀 Interested in time series generation? ⏲️ Excited to share my @GoogleDeepMind Amsterdam student researcher project: Rolling Diffusion Models!

arxiv.org/abs/2402.09470 (to appear at ICML 2024)

Thanks for the great collaboration @emiel_hoogeboom, @JonathanHeek, @TimSalimans! 🧵1/4

Jonathan Heek retweeted

We have a new distillation method that actually *improves* upon its teacher.

Moment Matching distillation (arxiv.org/abs/2406.04103) creates fast stochastic samplers by matching data expectations between teacher and student.

Work with @emiel_hoogeboom @JonathanHeek @tejmensink.

1/4

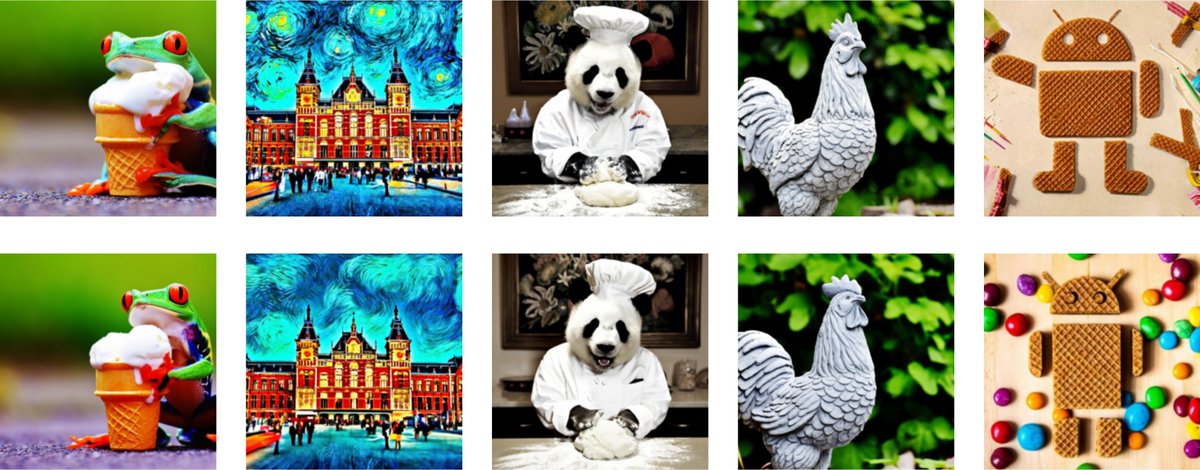

Fast sampling with 'Multistep Consistency Models': We get 1.6 FID on ImageNet64 in 4 steps and scale text-to-image models, generating 256x256 images with 16 steps.

Guess which row is distilled?

With @emiel_hoogeboom @TimSalimans

Arxiv: arxiv.org/abs/2403.06807

Jonathan Heek retweeted

If diffusion models are so great, why do they require modifications to work well? Like latent diffusion and superres diffusion?

Introducing "simple diffusion": a single straightforward diffusion model for high res images (arxiv.org/abs/2301.11093). w/ @JonathanHeek @TimSalimans

Jonathan Heek retweeted

🥳 It is now super easy to fine-tune EfficientNet in FLAX! We open sourced a FLAX version of all official EfficientNet checkpoints as a by-product of our last paper: github.com/google-researc…

Jonathan Heek retweeted

JAX on Cloud TPUs is getting a big upgrade!

Come to our NeurIPS demo Tue. Dec. 8 at 11AM PT/19 GMT to see it in action, plus catch a sneak peek of a new Flax-based library for language research on TPU pods.

Link: neurips.cc/ExpoConference… (neurips.cc/Register2 is still open!)

Jonathan Heek retweeted

I’d like to share the new JAX/Flax PixelCNN++ (using new Flax ‘linen’ API github.com/google/flax/tr…), a performant baseline AR image model, built as part of my internship at Google Brain Amsterdam. github.com/google/flax/tr…. 👇

@LazyOp @NalKalchbrenner Thanks for spotting that. You are correct, those terms are missing from the pseudo-code. I will make sure that this gets fixed in the revision.

@NalKalchbrenner In algorithm 1 lines 10 and 11, shouldn't there be a +theta and +xi?

Jonathan Heek retweeted

Announcing exciting progress in Bayesian deep learning: the new ATMC sampler achieves first of its kind Bayesian inference results on ImageNet

Check out the results and the paper 👇

Heek et al: arxiv.org/abs/1908.03491

@duane_rocks @avitaloliver @DeepSpiker Actually it's both. There's uncertainty in the model outputs and uncertainty about the model parameters. Sampling is used to marginalize over the uncertainty in the model parameters to obtain predictive uncertainty.
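
A minimal sketch of that marginalization, assuming a toy one-parameter logistic model and a hand-picked list of weights standing in for posterior samples (both are illustrative assumptions, not the ATMC sampler or its outputs). It averages the per-sample predictions and splits the predictive entropy into the part from the model outputs and the part from the parameters:

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def binary_entropy(p):
    # Entropy (in nats) of a Bernoulli(p) prediction.
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))

def predictive(x, weight_samples):
    """Monte Carlo estimate of p(y=1|x) = E_w[p(y=1|x, w)]."""
    probs = [sigmoid(w * x) for w in weight_samples]
    p_mean = sum(probs) / len(probs)
    # Total predictive uncertainty: entropy of the averaged prediction.
    total = binary_entropy(p_mean)
    # Output uncertainty: average entropy of each sample's prediction.
    output_part = sum(binary_entropy(p) for p in probs) / len(probs)
    # Parameter uncertainty: what remains (a mutual information, >= 0).
    parameter_part = total - output_part
    return p_mean, total, output_part, parameter_part

# Hypothetical posterior samples of a single weight (assumption).
weight_samples = [-2.0, -0.5, 0.5, 2.0]
p_mean, total, output_part, parameter_part = predictive(1.0, weight_samples)
```

Because the samples disagree about the sign of the weight, the averaged prediction sits near 0.5 even though the individual samples are fairly confident, so a sizeable share of the total uncertainty comes from the parameters.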

Super proud of work from my teammate @JonathanHeek from Google Brain Amsterdam: Scales up Bayesian Inference with a sampler that outperforms all ImageNet models that don't use batch norm.

Important added benefit is accurate uncertainty estimates, via rigorous calibration testing

Nal @nalkalc

Announcing exciting progress in Bayesian deep learning: the new ATMC sampler achieves first of its kind Bayesian inference results on ImageNet Check out the results and the paper 👇 Heek et al: arxiv.org/abs/1908.03491

@goodfellow_ian @NalKalchbrenner There's definitely reason to believe that a "Bayesian discriminator" will result in a better behaved estimate of D*. The predictions will be less saturated, potentially resulting in a better signal for the generator. An ensemble of discriminators could improve robustness further.

@NalKalchbrenner Can it make a better GAN discriminator due to better estimate of D* = p_data / (p_data + p_model)?
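
For reference, a small sketch that evaluates the D* formula directly, assuming two known 1-D Gaussian densities as stand-ins for p_data and p_model (in a real GAN neither density is available in closed form, so this is purely illustrative):

```python
import math

def gaussian_pdf(x, mean, std):
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def optimal_discriminator(x, p_data, p_model):
    # D*(x) = p_data(x) / (p_data(x) + p_model(x))
    d = p_data(x)
    m = p_model(x)
    return d / (d + m)

# Hypothetical densities (assumption): data ~ N(0, 1), model ~ N(1, 1).
def p_data(x):
    return gaussian_pdf(x, 0.0, 1.0)

def p_model(x):
    return gaussian_pdf(x, 1.0, 1.0)

d_mid = optimal_discriminator(0.5, p_data, p_model)    # equidistant from both means
d_left = optimal_discriminator(-4.0, p_data, p_model)  # far on the data side
```

Halfway between the two means the densities are equal and D* is 0.5; far on the data side D* approaches 1, which is the saturated regime where a point-estimate discriminator gives the generator little signal.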
