Pierre Foret

@Foret_p

QR at Citadel Securities. Ex Google AI resident.

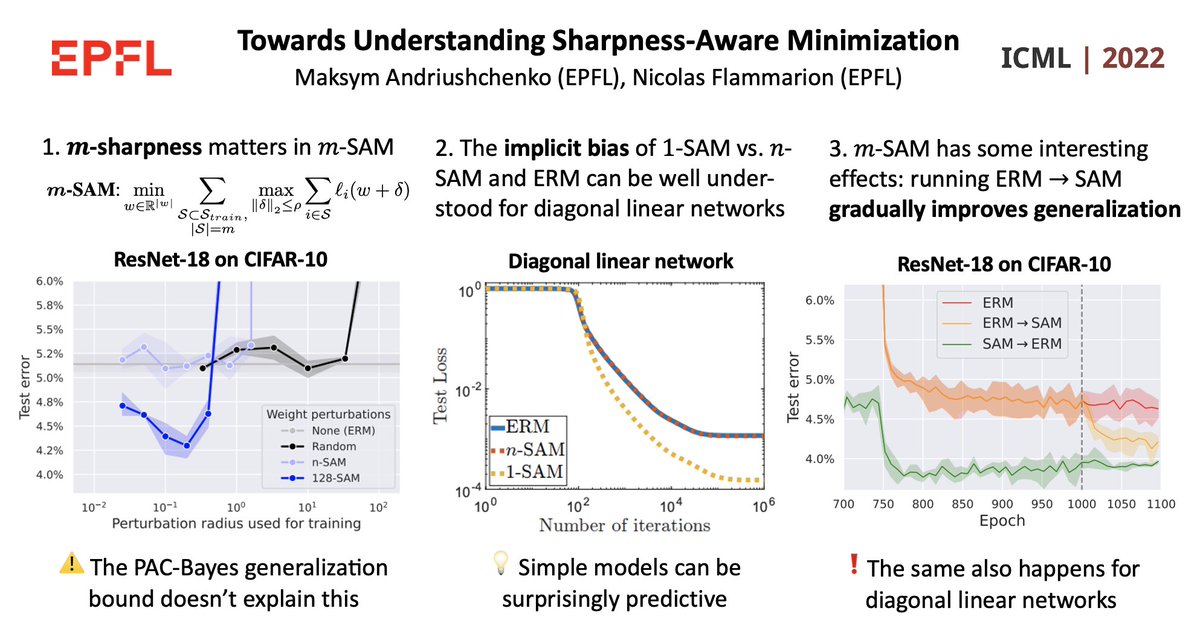

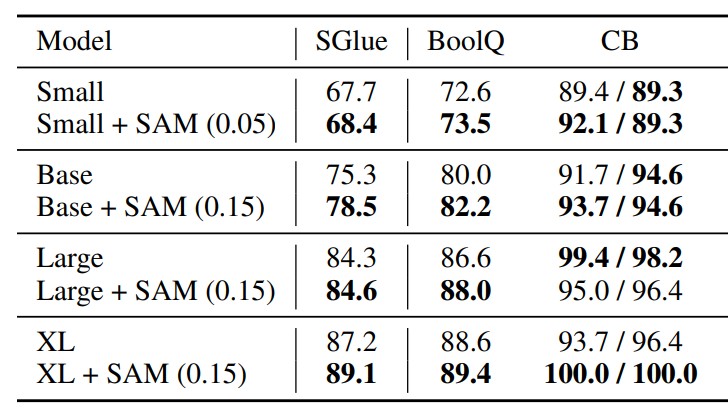

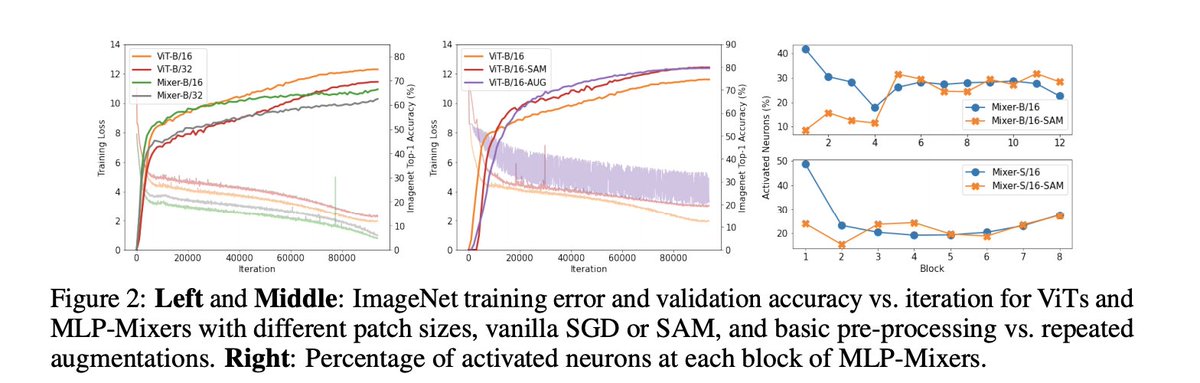

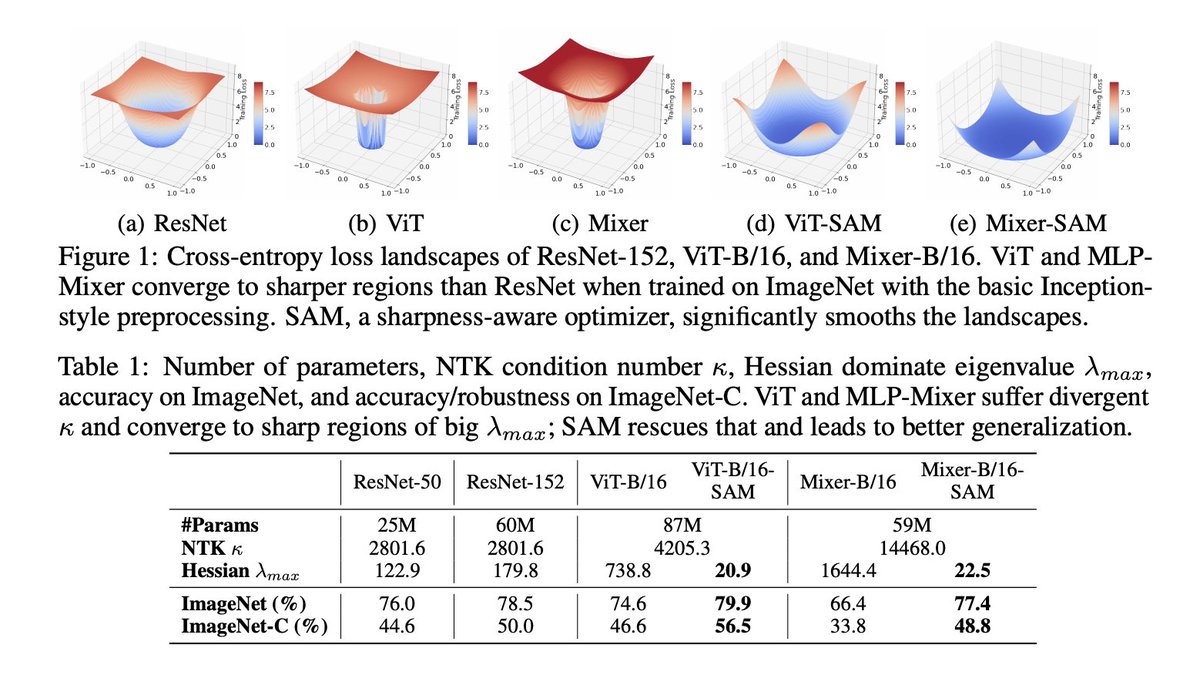

When Vision Transformers Outperform ResNets without Pretraining or Strong Data Augmentations. pdf: arxiv.org/pdf/2106.01548… abs: arxiv.org/abs/2106.01548. +5.3% and +11.0% top-1 accuracy on ImageNet for ViT-B/16 and Mixer-B/16, respectively, with simple Inception-style preprocessing.

Drawing Multiple Augmentation Samples Per Image During Training Efficiently Decreases Test Error. pdf: arxiv.org/pdf/2105.13343… abs: arxiv.org/abs/2105.13343. ImageNet SOTA of 86.8% top-1 accuracy after just 34 epochs of training with an NFNet-F5 using the SAM optimizer.

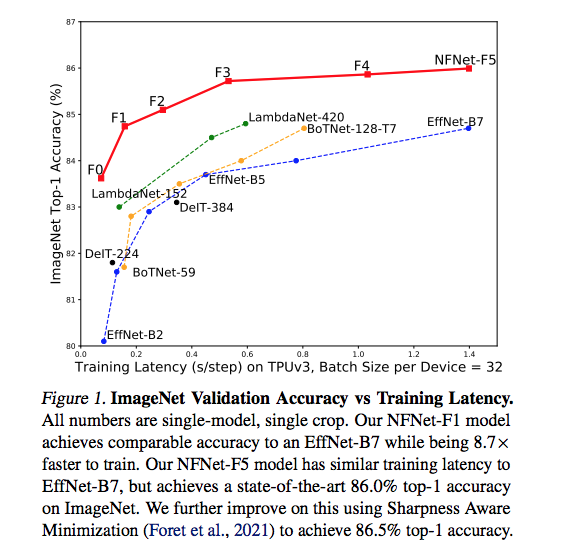

Our most recent work on training Normalizer-Free nets! We focus on developing performant architectures which train fast, and show that a simple technique (Adaptive Grad Clipping, or AGC) allows us to train with large batches and heavy augmentations and reach state-of-the-art.
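The post names AGC without spelling out the rule, so here is a minimal sketch of the unit-wise clipping it is commonly described as: each unit's gradient is rescaled whenever its norm exceeds a fixed fraction of that unit's weight norm. The function name, the choice of treating each row as one unit, and the default values `clip=0.01` and `eps=1e-3` are illustrative assumptions, not details taken from the post.

```python
import math

def adaptive_grad_clip(weights, grads, clip=0.01, eps=1e-3):
    """Sketch of unit-wise adaptive gradient clipping.

    `weights` and `grads` are lists of rows; each row is treated as one
    unit (an assumption made for illustration). A row's gradient is
    rescaled when its norm exceeds `clip` times the row's weight norm.
    """
    clipped = []
    for w_row, g_row in zip(weights, grads):
        # Floor the weight norm at eps so zero-initialized units
        # can still receive a nonzero (if tiny) gradient.
        w_norm = max(math.sqrt(sum(w * w for w in w_row)), eps)
        g_norm = math.sqrt(sum(g * g for g in g_row))
        max_norm = clip * w_norm
        if g_norm > max_norm:
            # Shrink the gradient so its norm equals max_norm.
            scale = max_norm / g_norm
            g_row = [g * scale for g in g_row]
        clipped.append(list(g_row))
    return clipped


weights = [[3.0, 4.0], [0.0, 0.0], [3.0, 4.0]]
grads = [[0.003, 0.004], [1.0, 0.0], [3.0, 4.0]]
clipped = adaptive_grad_clip(weights, grads)
```

In this toy input the first row is left untouched because its gradient is already small relative to its weights, while the other two rows are scaled down. The threshold is relative to the weight norm rather than absolute, which is what distinguishes this from ordinary norm clipping.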