Juliann · learning out loud

491 posts

@JuliannPod

How do we learn, grow, and bet on ourselves in the AI era? AI · cognition · craft · culture. Writing what I find.

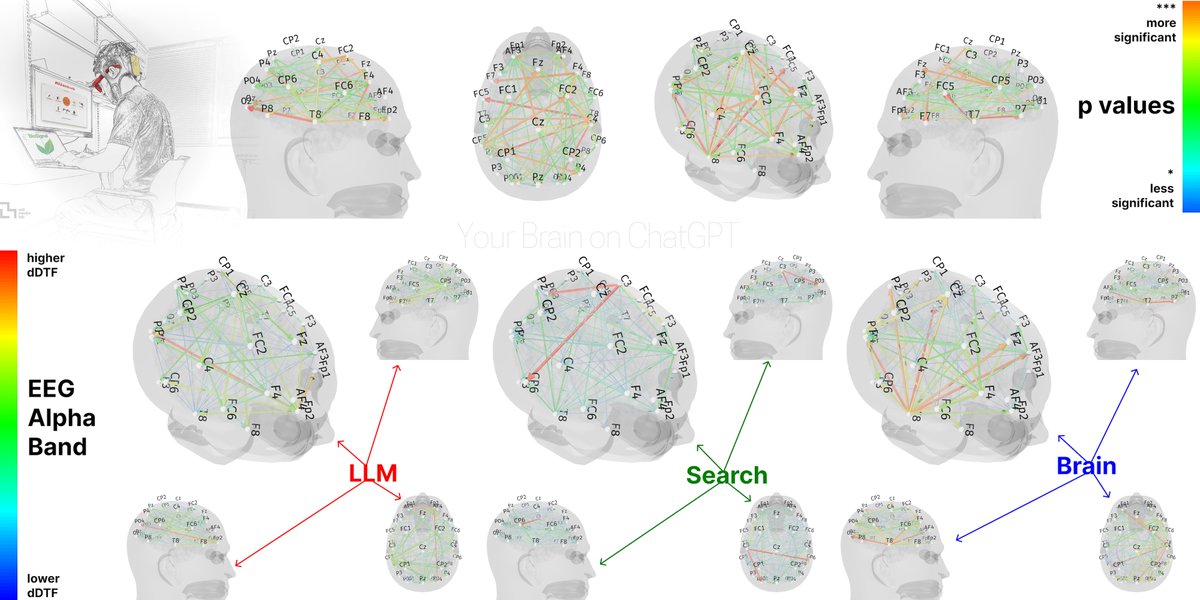

I'm 22 years old and Claude Code is deteriorating my brain. Every single day for the last 6 months I've had 6 to 8 Claude Code terminals open, waiting for a response just so I can hit 'enter' 75% of the time. And it's doing something to me. It comes up pretty frequently in convos with a couple of friends. None of us feel as sharp as we used to. I don't know if it's just us, or if others in their 20s are feeling the same thing, but it's something I've been thinking about a lot. P.S. I know this is a problem with my reliance on and usage of it, not Claude Code itself, but the effects are real nonetheless.

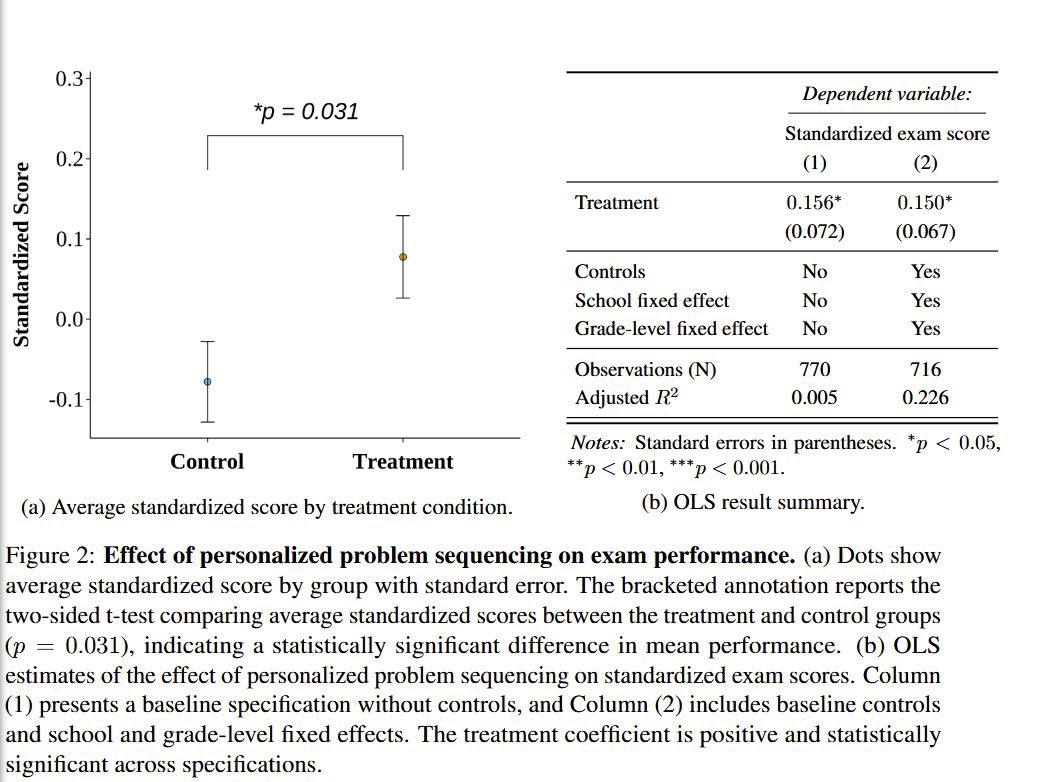

Students who used AI to study remembered less than those who did not.

AI promised to do the work for us so we could enjoy our time doing other things. Since LLMs arrived, everyone ambitious around me, myself included, has been working harder than ever. I don't think this stops anytime soon.