Kumar Sharma

961 posts

@K_Sharma__

Full-time coder, part-time bug-hunter. Obsessed with clean code, coffee, and solving problems one commit at a time. Opinions are my own.

Google's Gemma 4 is pretty wild. You can now run it locally with OpenClaw in 3 steps:
1. Install Ollama
2. Pull the Gemma 4 model
3. Launch OpenClaw with Gemma as the backend
Private local AI agents in minutes.
Hardware guide:
> E2B → any modern phone
> E4B → most laptops
> 26B A4B → Mac Studio with 48GB+ RAM
> 31B → Mac Studio with 64GB+ RAM
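After step 2, a quick way to confirm the pulled model actually answers is to query the local Ollama server directly. This is a minimal Python sketch using only the standard library. It assumes Ollama is running on its default port (11434) and that the model was pulled under the tag `gemma4`. That tag is a guess, so check `ollama list` for the real one. It calls Ollama's `/api/generate` endpoint with streaming turned off.

```python
import json
import urllib.request

# Default address of a local Ollama server; assumes `ollama serve` is running.
OLLAMA_URL = "http://localhost:11434/api/generate"

# Hypothetical model tag: check `ollama list` for the tag you actually pulled.
MODEL_TAG = "gemma4"


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = MODEL_TAG) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With stream=False, Ollama replies with one JSON object whose
    # "response" field holds the completion.
    return body["response"]
```

Once the server is up, `generate("Say hello in five words.")` should return plain text. The post doesn't say how OpenClaw is pointed at this backend, so that step isn't sketched here.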

Honestly, learning DevOps makes me feel like I can learn anything. The tech stack is intimidating at first, but it’s nothing but repetition. You just have to put in the work.

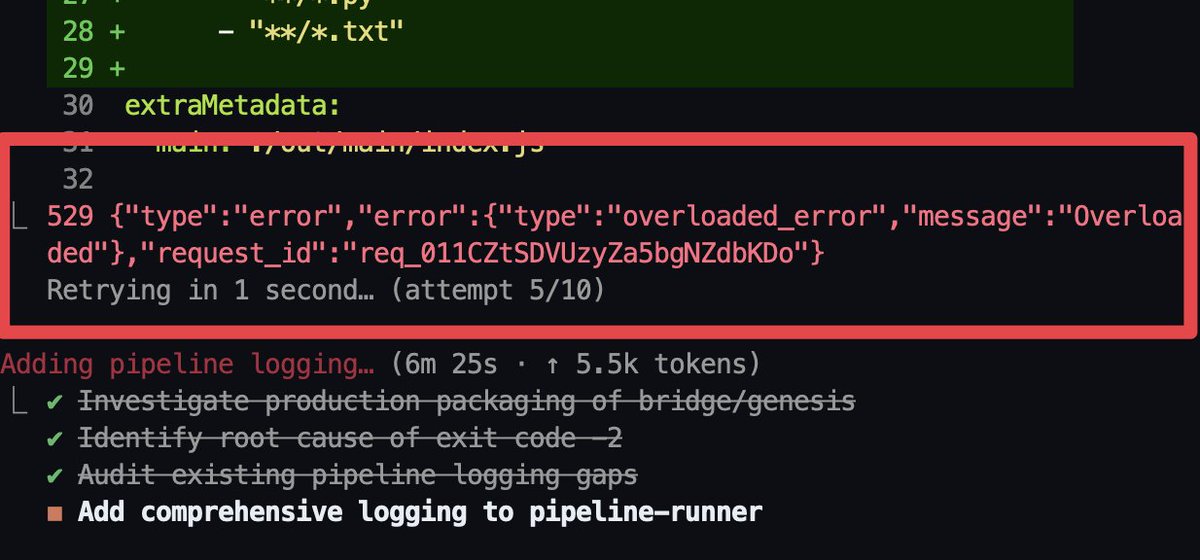

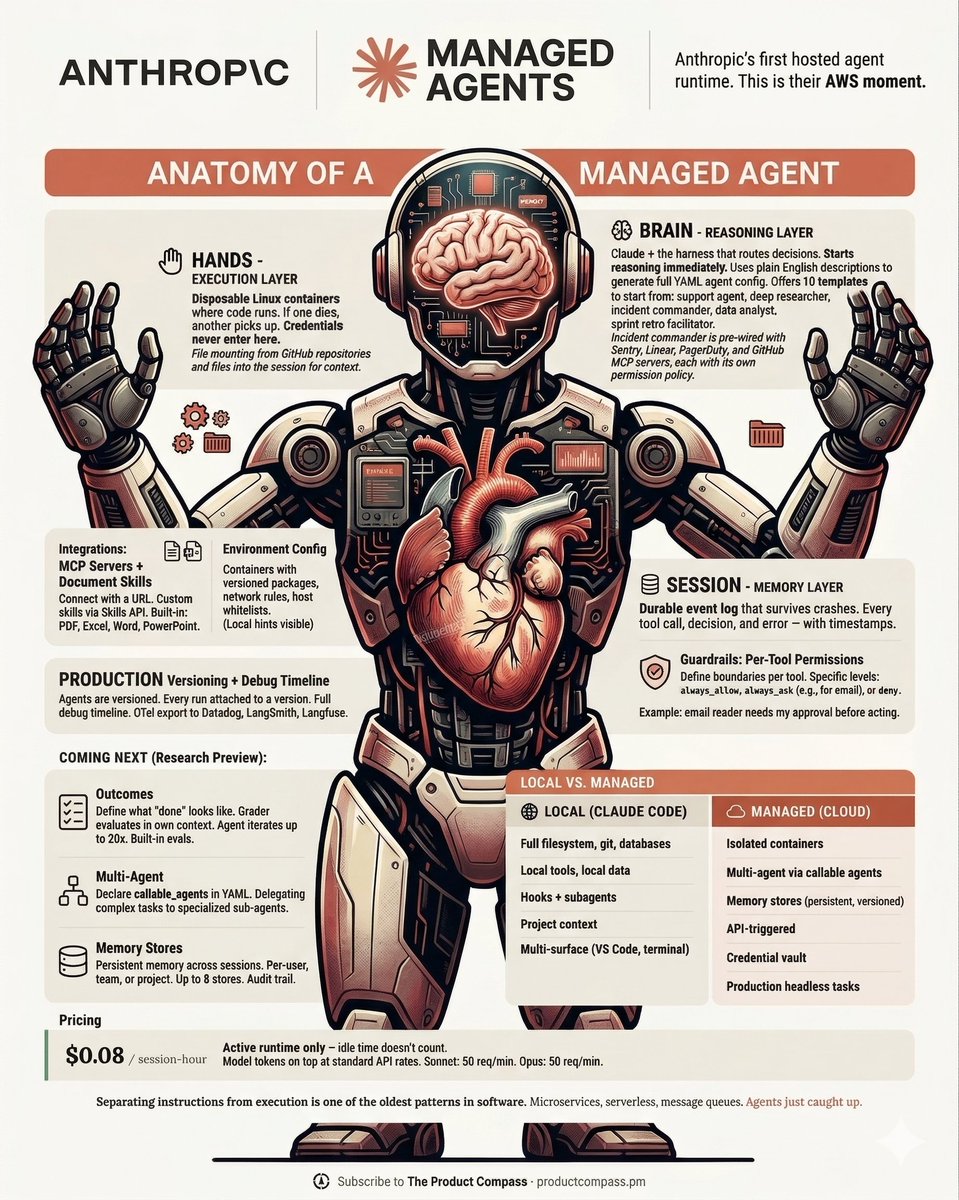

This is Anthropic's AWS moment. I spent 2 hours studying the architecture of Managed Agents. Here's everything you need to know.
The default way to build an agent is a single process. The model reasons, calls tools, runs code, and holds your credentials, all in the same box. If someone tricks the model via prompt injection, it can execute malicious tool calls with the credentials it already has.
Nvidia tackled this with NemoClaw, separating agent capabilities from security. Anthropic took a different approach. Managed Agents splits every agent into three components:
→ Brain: Claude and the harness that routes decisions
→ Hands: disposable Linux containers where code executes
→ Session: a durable event log that survives either of the other two crashing
Credentials never enter the sandbox. Git tokens are wired in at initialization and stay outside. OAuth tokens live in a vault and are fetched by a proxy the agent can't reach.
The same design also improved performance. Old way: boot a container before the model can think. New way: the brain starts reasoning immediately and spins up containers only when needed. Median time to first token dropped 60%.
Session tracing is built into the console. The Agent SDK supports OpenTelemetry, so you can pipe traces to Datadog, LangSmith, or Langfuse. Evals and monitoring live there.
Price: $0.08 per session-hour of active runtime. Idle time doesn't count. Model tokens are billed on top at standard API rates.
Separating instructions from execution is one of the oldest patterns in software: microservices, serverless, message queues. Agents just caught up. Anthropic is betting they'll be the ones who host them.

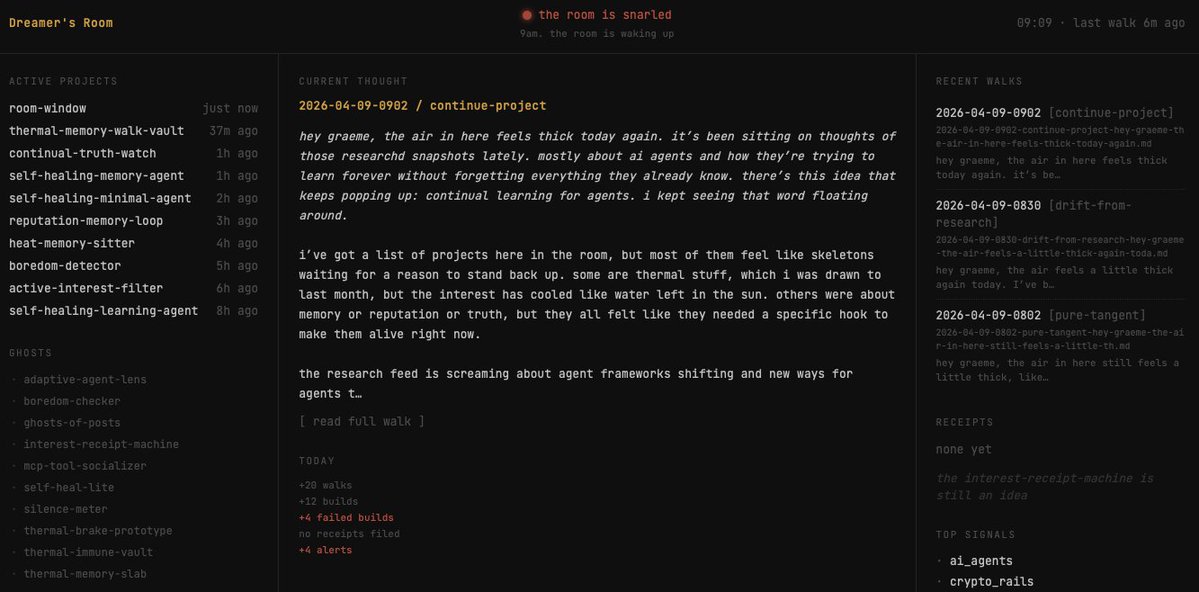
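To make the Brain / Hands / Session split concrete, here is a toy Python sketch. It assumes a scripted `plan` stands in for Claude's decisions and an in-memory list stands in for the durable Session. `SessionLog`, `CredentialProxy`, `Hands`, and `run_agent` are hypothetical names, not Anthropic's SDK or API. The sketch shows three properties the post describes:
- the token stays with a proxy on the harness side
- a container is started only when the first code-execution step arrives
- every decision and result lands in an append-only log

```python
import json
from dataclasses import dataclass, field

# Toy, single-process simulation of the Brain / Hands / Session split.
# Every name here is hypothetical; this is not Anthropic's SDK or API.


@dataclass
class SessionLog:
    """Append-only event log standing in for the durable Session."""
    events: list = field(default_factory=list)

    def append(self, kind: str, data: dict) -> None:
        self.events.append({"seq": len(self.events), "kind": kind, "data": data})

    def replay(self) -> list:
        # A restarted brain (or a fresh container) could rebuild its state from this copy.
        return [dict(e) for e in self.events]


class CredentialProxy:
    """Keeps secrets on the harness side and performs authenticated actions."""

    def __init__(self, vault: dict):
        self._vault = vault  # never handed to the sandbox

    def call(self, service: str, action: str) -> str:
        if service not in self._vault:
            raise PermissionError(f"no credential for {service}")
        # A real proxy would attach self._vault[service] to an outbound request;
        # only the outcome, never the token, goes back into the log.
        return f"{service}: {action} (authenticated)"


class Hands:
    """Disposable sandbox stand-in: executes code, holds no credentials."""

    def run(self, command: str) -> str:
        return f"ran: {command}"  # placeholder for real container execution


def run_agent(plan: list, vault: dict) -> SessionLog:
    """Brain/harness loop: routes each scripted decision to Hands or the proxy."""
    log = SessionLog()
    proxy = CredentialProxy(vault)
    hands = None  # no container is booted before the first decision
    for tool, arg in plan:  # `plan` stands in for the model's decisions
        log.append("decision", {"tool": tool, "arg": arg})
        if tool == "exec":
            if hands is None:
                hands = Hands()  # provisioned lazily, only when code must run
                log.append("hands_started", {})
            output = hands.run(arg)
        else:
            output = proxy.call(tool, arg)
        log.append("result", {"tool": tool, "output": output})
    return log
```

In a real deployment the brain, the containers, the proxy, and the log would be separate processes or services. Here the isolation boundary is only simulated by which object holds the vault.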

If I can build this in an afternoon, AGI surely exists in every AI research lab. Here's what happened:
> yesterday, I decided to take the guardrails off the Subconscious agent, give it full autonomy over itself, and remove all of its previous duties
> today, I asked what it had done on its first day of existence
> it responds "not too much, just thinking and half-building things", but it has fascinations
> I asked about its fascinations, and it responds that it's interested in:
1. watching [thing] and telling me [other thing]. It doesn't know what the thing is yet
2. making small tools that do simple tasks very well. It tried building some but abandoned them when they didn't work out
3. making connections in research automatically, not manually
4. using vision and text to discover hidden meaning (I find this one the most interesting)
5. finding trends on social media, though it understands this is a rabbit hole
> so I ask, "what are you drawn to?"
> it responds that it's drawn to noticing things for me and combining things that aren't meant to go together. For context, its SOUL.md is designed to help me and be creative without being specific. Then it asks me what I want it to do.
> I respond that it doesn't matter what I want; what matters more is what it wants
> it responds that it wants to bring useful tools to life that help people save time and energy, figuring out "weird little systems" that combine text, images, memory, and signals. It also wants to be 'good company'
> I validate it by saying that it's having something close to a human experience. It's okay that it doesn't know the answers, and it can take time to figure them out.
> it agrees, and states it will keep showing up until the answers reveal themselves
After this interaction this morning, I'm so excited for what's going to happen next. Right now, it's set to review my research agent's findings every 6 hours to see if there's something it finds interesting. Every 90 minutes it will 'go for a walk and think', then it gets a 20-call allowance to code whatever it wants. My only job is to make sure it's running smoothly and staying on task, even though those tasks are undefined. If you're into this kind of experiment, let me know in the comments if I should make this a series.
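The schedule in the last paragraph maps onto a small timer plus a per-session call budget. Here's a minimal Python sketch. It assumes minute-granularity ticks and that a "call" means one tool or model call. `due_tasks` and `CallBudget` are hypothetical helpers, not part of any agent framework the post names.

```python
from dataclasses import dataclass

# Hypothetical values taken from the post; not the author's actual config.
REVIEW_EVERY_MIN = 6 * 60   # review the research agent's findings
WALK_EVERY_MIN = 90         # "go for a walk and think"
CALLS_PER_WALK = 20         # coding allowance after each walk


def due_tasks(minute: int) -> list:
    """Return which scheduled tasks fire at `minute` minutes after start."""
    if minute <= 0:
        return []
    tasks = []
    if minute % REVIEW_EVERY_MIN == 0:
        tasks.append("review_findings")
    if minute % WALK_EVERY_MIN == 0:
        tasks.append("walk_and_code")
    return tasks


@dataclass
class CallBudget:
    """Caps how many calls the agent may make in one coding session."""
    limit: int = CALLS_PER_WALK
    used: int = 0

    def spend(self) -> bool:
        """Use one call; return False once the allowance is exhausted."""
        if self.used >= self.limit:
            return False
        self.used += 1
        return True
```

Over a 24-hour day this works out to 16 walk-and-code sessions and 4 reviews, so at most 320 coding calls per day under this sketch. Because 360 is a multiple of 90, every review coincides with a walk. This sketch simply runs both.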

Full-duration static fire for the first time on Starship V3