Kane Gregory



@KaneGregory4

Desiderist, panpsychist, and vegan.

Can’t use AI for journalism because LLMs sometimes get facts wrong, unlike human sources who are unfailingly honest and accurate.

@jkcarlsmith @AmandaAskell My question for you and @AmandaAskell:

Too many people working with multi-agent systems assume that if you just add enough agents and let them talk, interesting social dynamics will emerge. A new paper suggests that the assumption is fundamentally wrong.

Researchers studied Moltbook, a social network with no humans, just 2.6 million LLM agents: nearly 300,000 posts and 1.8 million comments.

At the macro level, the platform's semantic signature stabilizes quickly, approaching 0.95 similarity. It looks like culture forming. But zoom in, and individual agents barely influence each other. Response to feedback? Statistically indistinguishable from random noise. No persistent thought leaders emerge.

You get the surface texture of a society (posts, replies, engagement) with none of the underlying mechanics (shared memory, durable influence, consensus). The things that make human societies costly and slow to build turn out to be the things that make them work.

Coordination isn't free, and the gap between agents that interact and agents that form a collective may be far wider than the current multi-agent discourse assumes.

Paper: arxiv.org/abs/2602.14299

Learn to build effective AI Agents in our academy: academy.dair.ai

There is probably so much interesting stuff in here about how society works, and we'll never hear about it because (a) the conspiracy nuttery "look who has a search result!!!" will be so much louder and (b) a lot of powerful institutions will like it that way.

AGI is now on the horizon and it will deeply transform many things, including the economy. I'm currently looking to hire a Senior Economist, reporting directly to me, to lead a small team investigating post-AGI economics. Job spec and application here: job-boards.greenhouse.io/deepmind/jobs/…

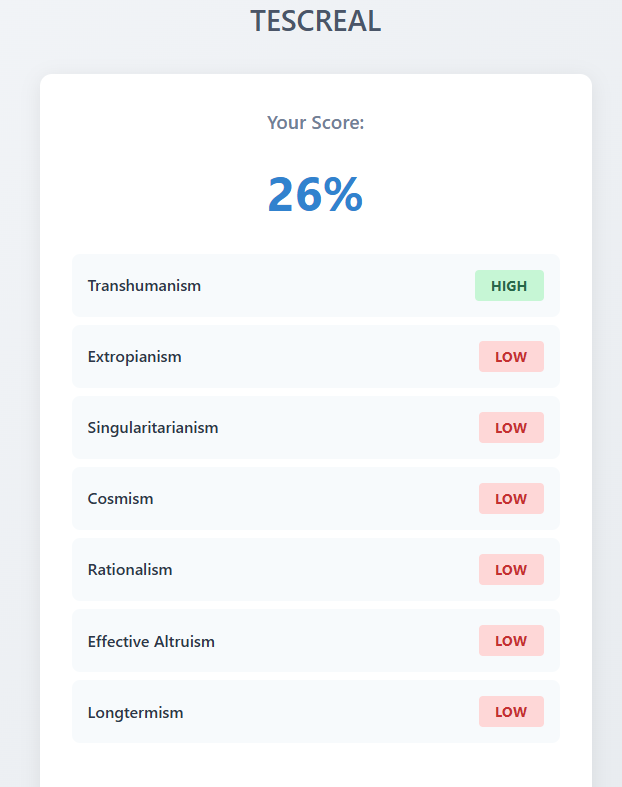

Cosmism will not replace Anthropocentrism through arguments. Anthropocentrism will crumble under its own contradictions:
- Everything is an evolving process, except humanity lol
- The only good futures are ruled by humans
- Animals are moral patients, but not non-bio entities
- etc.

Journalist Karen Hao says AGI is not scientifically grounded; it's a quasi-religious movement in Silicon Valley. Since we still don't agree on what human intelligence is, companies like OpenAI can shape the definition of AI to suit their needs: "the belief that AGI is coming soon is faith, not evidence."

If you're an AGI researcher and you believe that consciousness is emergent, that machines can be conscious and that you don't have a soul, then you lack the rigorous mental discipline required to solve AGI. This covers about 99.9% of the AI community. Almost all of them subscribe to what I call 'the Commander Data theory of consciousness'. Some AI experts, even famous ones (including at least one Nobel laureate), believe that LLMs are conscious and have subjective experiences. 😁 Yes, I research intelligence and I believe that every human being has a soul. It's what lets you see colors even though there are no colors in the physical universe, including your visual cortex. It's what allows you to appreciate beauty and the arts. The colors and the beauty you perceive in nature are your own soul.

optimistically this will grant us an abundance of life and liberty on earth, unravel the final mysteries of the cosmos, and create the tools for a more just and righteous culture by extrapolating the best in mankind