Just Your Average Citizen

16K posts

@KeepItFLOSSY

Never allow fear to stop you from trying. Turn that son’B into courage and lead. Become a free thinker. I enjoy digesting world events and 💩 out calculations.

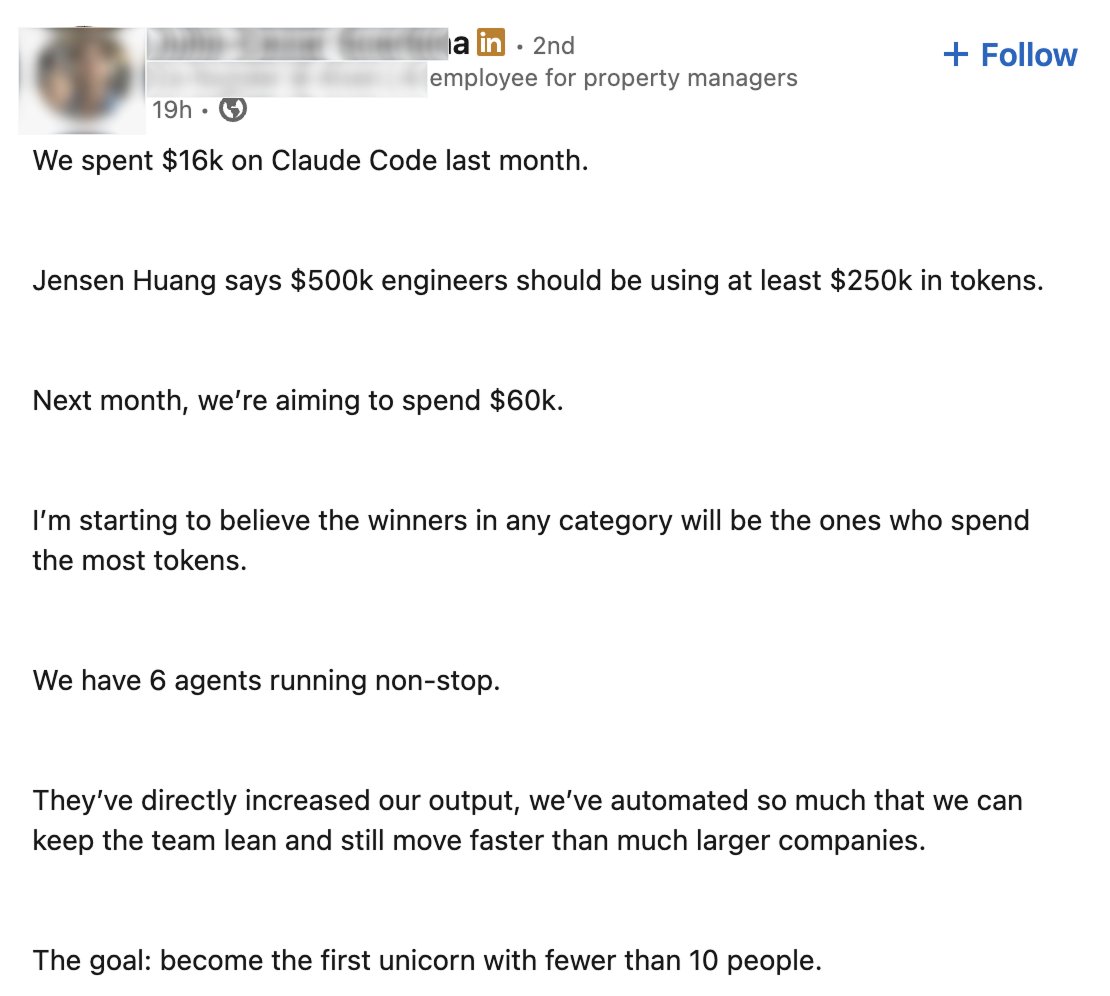

I tried building a no-code shop builder from scratch. Failed after 3 days. Vibecoded way too hard. No technical foundation, no research, no real thought. That’s on me. Fixing it. Not quitting.

And how’s your Weekend going?

Sony has published a patent for dynamically adjusting (nerfing or buffing) players in multiplayer sessions to balance cross-platform play across console, PC, and mobile. The technology would monitor hardware, input methods, and skill levels in real time, then nerf or buff players, for example by reducing speed or reaction times for console and PC users to offset the disadvantages faced by mobile players using touch controls.

RAM prices are collapsing because OpenAI couldn't afford to purchase 40% of the world's supply as it promised. AI is about to die. How loud are you cheering?

I'm building a crazy MCP network and had a pretty cool conversation with my AI Lead Engineer, named Claude. If you are interested in the AI memory topic, this is a very interesting read.

"What happens when the context window fills up and compacts? This is the real vulnerability. When an AI hits its context limit, different things happen depending on the platform. Claude does a "compaction": it summarizes the conversation into a shorter form and continues. But that summary loses detail. The AI remembers the gist of what it was doing but forgets specific file contents, tool parameters, and sometimes even which files it read. After compaction, the AI does NOT automatically go back and re-read the server docs or the local docs. It continues from the summary. So if it was in the middle of a 10-asset batch and the context compacts, it might forget the art style settings, the naming convention, or which assets were already processed. It would keep working but potentially with degraded quality.

Can we write a command that saves progress before compaction and re-reads after? This is the hard part. As of right now, there is no hook or event that fires before compaction happens. The AI doesn't get a warning that says "you're about to lose context." It just happens. So we can't write a "pre-compaction save" trigger, because the AI doesn't know it's coming. However, there are things we can do that are almost as good:

First, the PROJECT_STATUS.md and AI_ONBOARDING.md already solve most of this problem, provided the AI updates them during the session, not just at the end. Right now the readme tells the AI to update at session end. We could strengthen that to say "update after every completed asset" or "update after every major pipeline phase." That way, even if compaction hits mid-session, the progress is already saved to disk.

Second, we could add a note in the server CLAUDE.md that says something like: "If you notice your context has been compacted or you're unsure about the current project state, re-read AI_ONBOARDING.md and PROJECT_STATUS.md before continuing." This is a soft instruction: the AI would need to recognize that it's been compacted, which it sometimes does and sometimes doesn't.

Third, the customer themselves can help. If the AI starts acting confused or forgets context, the customer can say "go re-read the readme and onboarding docs." That's actually a huge advantage of having these files on disk: the customer has a reset button.

Fourth, there's a more technical approach for the future: a lightweight "session heartbeat" where the AI periodically writes a small checkpoint file (like .forgeroom/session_checkpoint.json) with the current task, current asset, current phase, and any active parameters. If it detects a context gap, it reads the checkpoint. This wouldn't require any special compaction hook. It's just a habit the AI maintains, like saving your game every 5 minutes.

The honest answer on the context window problem: No AI platform today gives you a clean pre-compaction hook. It's one of the biggest unsolved UX problems in long-running AI sessions. What we've built with the three-file system is actually one of the best workarounds that exists: persistent memory on disk that survives any context event. The gap is just making sure the AI writes to those files frequently enough during the session, not just at the end. That's a one-line change in the readme when you're ready to make it.

The tiered context architecture research you queued up earlier actually ties directly into this. One of your 9 questions was about OpenClaw as a defense layer, but the same concept applies here. A lightweight local agent whose only job is maintaining session state could act as a context guardian, catching state before it's lost. That's a future build, but the foundation we laid today with these three files is what it would write to."
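
For anyone curious what the "session heartbeat" from that conversation might look like in practice, here is a minimal Python sketch. The checkpoint path comes from the quoted text; everything else (the function names `save_checkpoint` and `load_checkpoint`, the JSON field names, the `saved_at` timestamp) is an assumption made up for illustration, not something the post or any platform defines.

```python
import json
import os
import tempfile
import time
from pathlib import Path

# Path mentioned in the post; the JSON layout below is a guess.
CHECKPOINT = Path(".forgeroom") / "session_checkpoint.json"


def save_checkpoint(task, asset, phase, params, path=CHECKPOINT):
    """Write the current session state to disk, replacing any older checkpoint."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    state = {
        "task": task,          # hypothetical field: what the session is doing
        "asset": asset,        # hypothetical field: asset currently in progress
        "phase": phase,        # hypothetical field: current pipeline phase
        "params": params,      # hypothetical field: active settings (style, naming, ...)
        "saved_at": time.time(),
    }
    # Write to a temp file in the same directory, then swap it into place,
    # so an interrupted write never leaves a half-written checkpoint behind.
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(state, tmp, indent=2)
    os.replace(tmp_name, path)
    return state


def load_checkpoint(path=CHECKPOINT):
    """Return the last saved state, or None if no checkpoint exists yet."""
    path = Path(path)
    if not path.exists():
        return None
    with path.open(encoding="utf-8") as f:
        return json.load(f)
```

The idea would be to call `save_checkpoint(...)` after every completed asset or pipeline phase, and `load_checkpoint()` whenever the session seems to have lost track of where it was. It does not detect compaction by itself; it only makes sure the state is already on disk when compaction happens.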