Kenneth Stanley



@kenneth0stanley

SVP of Open-Endedness @LilaSciences. Prev: Maven CEO, Lead @OpenAI, Uber AI, prof @UCF. NEAT, HyperNEAT, novelty search, POET. Book: Why Greatness Cannot Be Planned

New episode with @kenneth0stanley on Why Objectives Are the Enemy of Greatness. What if the surest way to fail at something ambitious is to have a clear plan to achieve it? What if a robot that doesn't know it's trying to walk learns faster than one explicitly trained to walk? Kenneth Stanley is one of the most provocative minds in artificial intelligence. A former professor and former team leader at @OpenAI, he co-authored the cult classic Why Greatness Cannot Be Planned, a manifesto that challenges everything we think we know about achievement. His research discovered something shocking: in AI experiments, robots seeking novelty learned to walk better than robots explicitly trained to walk. Over the last two years, Ken has taken these theories from the lab into the real world. As SVP of Open-Endedness at @LilaSciences, he's building scientific superintelligence: AI that autonomously discovers new biology and chemistry. His work reveals a profound truth: the path to greatness is paved with stepping stones you can't predict, and the greatest discoveries come from following what's interesting, not what's planned.
⏰ Timestamps:
00:00 - Introduction: When Objectives Become the Enemy
03:01 - How AI Experiments Led to Social Critique
08:00 - The Picbreeder Experiment: Breeding Pictures Online
15:00 - A Car from an Alien Face (Deceptive Stepping Stones)
21:50 - It's Not Random: The Sharp Compass of Interestingness
25:36 - From AI to Life Lessons: Permission to Follow Curiosity
32:55 - The Spaceship That Looked Like a Mistake
42:19 - Getting Lost in St. Petersburg: The Collection Metaphor
44:59 - Stepping Stones That Looked Like Mistakes
49:48 - Advice for the Founder Feeling Hollow Despite Hitting Metrics
53:29 - Balancing Investors, Boards, and Open-Ended Exploration
1:03:12 - Will Humans Become Curators of AI Creativity?
Look up Duncan CJ on YouTube, Apple Podcasts, etc. Enjoy!

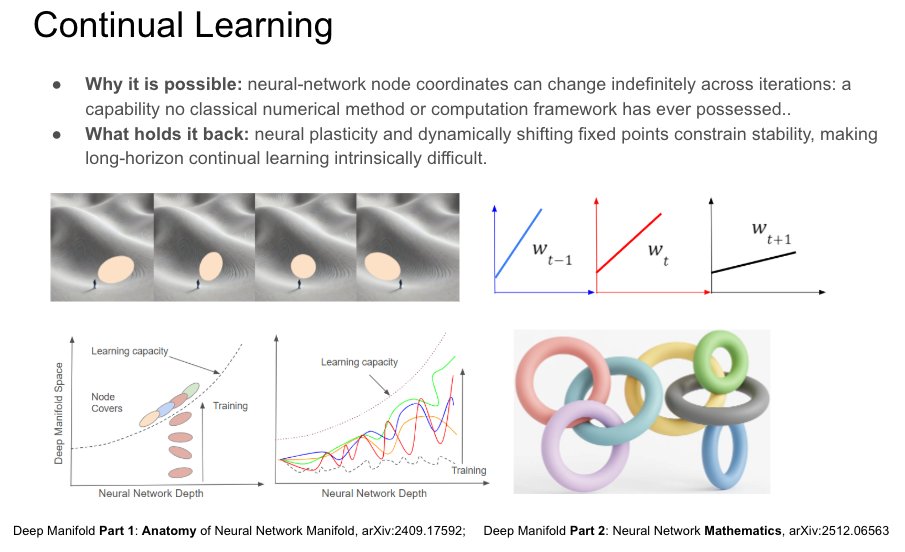

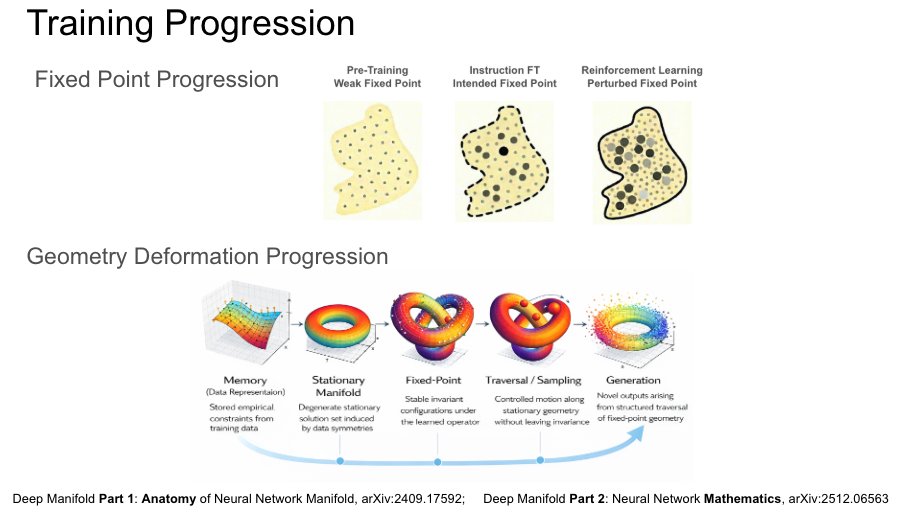

Difficulty achieving continual learning is also a bad omen for creativity: what you can imagine is naturally a function of what you can learn. Both are mediated by the same internal representations and the adjacent possible they define! Contorted algorithms (or the absence of clean options) for what should be simple, straightforward continual learning are therefore a hint that the large models they serve are creatively barren. That explains why something that is close to "knowing everything" and often competitive with the abilities of experts can still produce fewer breakthroughs than you would expect from a human with similarly astounding knowledge and expertise.

AI-powered robotic tools are muscling in on tasks typically done by humans. What does the future hold? go.nature.com/3PGxG8r

Introducing Hyperagents: an AI system that not only improves at solving tasks, but also improves how it improves itself. The Darwin Gödel Machine (DGM) demonstrated that open-ended self-improvement is possible by iteratively generating and evaluating improved agents, yet it relies on a key assumption: that improvements in task performance (e.g., coding ability) translate into improvements in the self-improvement process itself. This alignment holds in coding, where both evaluation and modification are expressed in the same domain, but breaks down more generally. As a result, prior systems remain constrained by fixed, handcrafted meta-level procedures that do not themselves evolve. We introduce Hyperagents – self-referential agents that can modify both their task-solving behavior and the process that generates future improvements. This enables what we call metacognitive self-modification: learning not just to perform better, but to improve at improving. We instantiate this framework as DGM-Hyperagents (DGM-H), an extension of the DGM in which both task-solving behavior and the self-improvement procedure are editable and subject to evolution. Across diverse domains (coding, paper review, robotics reward design, and Olympiad-level math solution grading), hyperagents enable continuous performance improvements over time and outperform baselines without self-improvement or open-ended exploration, as well as prior self-improving systems (including DGM). DGM-H also improves the process by which new agents are generated (e.g., persistent memory and performance tracking), and these meta-level improvements transfer across domains and accumulate across runs. This work was done during my internship at Meta (@AIatMeta), in collaboration with Bingchen Zhao (@BingchenZhao), Wannan Yang (@winnieyangwn), Jakob Foerster (@j_foerst), Jeff Clune (@jeffclune), Minqi Jiang (@MinqiJiang), Sam Devlin (@smdvln), and Tatiana Shavrina (@rybolos).
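The idea of an agent that edits both its task behavior and its own improvement procedure can be illustrated with a toy loop. This is a minimal sketch under loud assumptions, not the DGM-H implementation: the real system modifies agents' code, whereas here an "agent" is reduced to two numbers, a task parameter `x` and a meta-level `step` size it uses when producing children. The names `Agent`, `task_score`, `self_modify`, `select_parent`, and `run`, the toy objective, and every constant are hypothetical. What the sketch does show is the shape described in the post: an archive of every evaluated agent, parent selection that still gives weaker agents a chance (open-ended exploration), and a child-generating procedure that is itself inherited and mutated.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    # Task-level behavior (hypothetical): a single parameter x.
    x: float
    # Meta-level procedure (hypothetical): the step size this agent uses when it
    # generates children. It belongs to the agent, so it is mutated and evolves too.
    step: float


def task_score(agent: Agent) -> float:
    """Hypothetical toy task: higher is better, optimum at x = 3."""
    return -(agent.x - 3.0) ** 2


def self_modify(parent: Agent, rng: random.Random) -> Agent:
    """The parent's own procedure produces the child.

    It perturbs the task parameter using the parent's step size AND perturbs the
    step size itself, a stand-in for metacognitive self-modification.
    """
    new_x = parent.x + rng.gauss(0.0, parent.step)
    new_step = max(1e-3, parent.step * math.exp(rng.gauss(0.0, 0.3)))
    return Agent(new_x, new_step)


def select_parent(archive: list[Agent], rng: random.Random) -> Agent:
    """Score-weighted sampling over the whole archive.

    Weights are exp(score - best), so the best agent always has weight 1 and
    weaker agents keep a nonzero chance of serving as stepping stones.
    """
    scores = [task_score(a) for a in archive]
    best = max(scores)
    weights = [math.exp(s - best) for s in scores]
    return rng.choices(archive, weights=weights, k=1)[0]


def run(iterations: int = 200, seed: int = 0) -> list[Agent]:
    """Grow an archive of agents; every evaluated child is kept."""
    rng = random.Random(seed)
    archive = [Agent(x=-5.0, step=0.1)]
    for _ in range(iterations):
        parent = select_parent(archive, rng)
        archive.append(self_modify(parent, rng))
    return archive
```

Calling `run()` returns the full archive, and `max(archive, key=task_score)` picks the best agent found. Keeping every agent rather than only the current best mirrors the open-ended, archive-based framing; a greedy hill-climber would discard the stepping stones.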