Kyle Huang

2.7K posts

@KyleHuang16

AI/UX | HCI researcher | Creative technologist | Nvidia | MSR | Interest: Generative UI + Dynamic Experience

BREAKING: Arrow 1 by @QuiverAI ranks #1 on SVG Arena by Design Arena with an Elo of 1583. It's the first model to ever break 1500+ on one of our leaderboards, establishing the new SOTA frontier for SVG generation. Huge congratulations to the @QuiverAI team for this remarkable breakthrough, just one day after launch!

Nothing extra. Prompt 👇

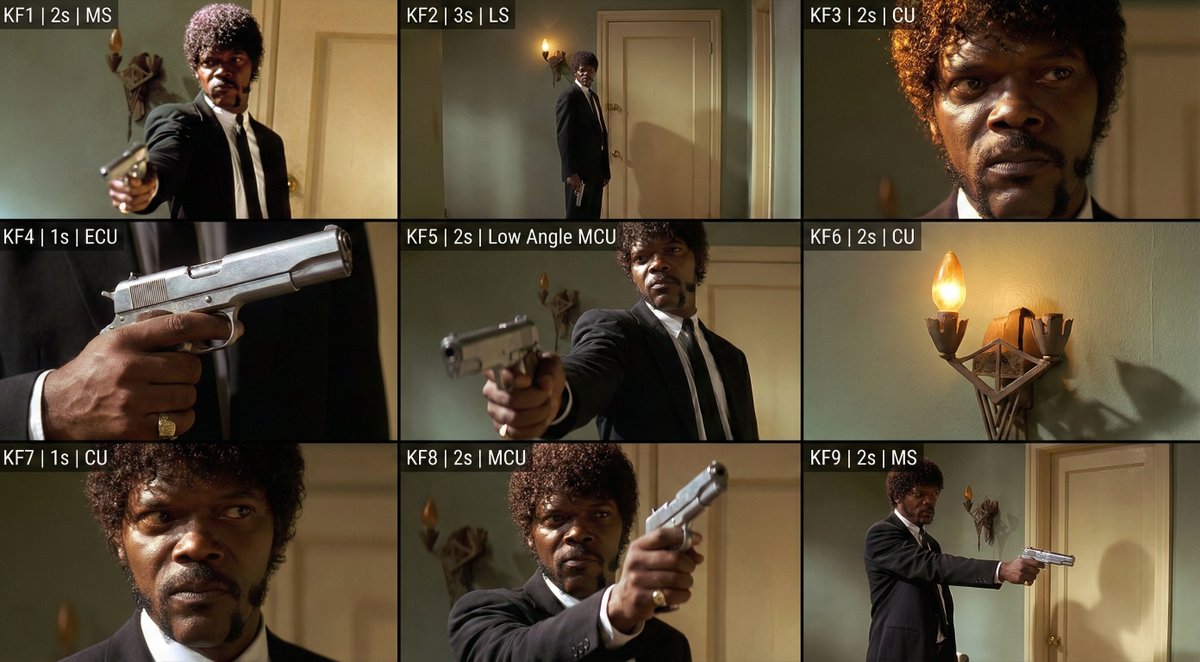

I didn't expect this change to attract so much attention. I did some more testing to verify its stability. Below is a test I conducted using screenshots from Harry Potter. Because the prompt was quite long, I've compiled it into an image and posted it in the comments. Thanks again to @techhalla for the great idea.