Kyle Jordan Maxwell

56.7K posts

@KyleJMaxwell__

Neuroscience & Biochemistry @UMBC, Philosopher. Chef. Host of The Kyle Maxwell Podcast. My pasta will change your life…

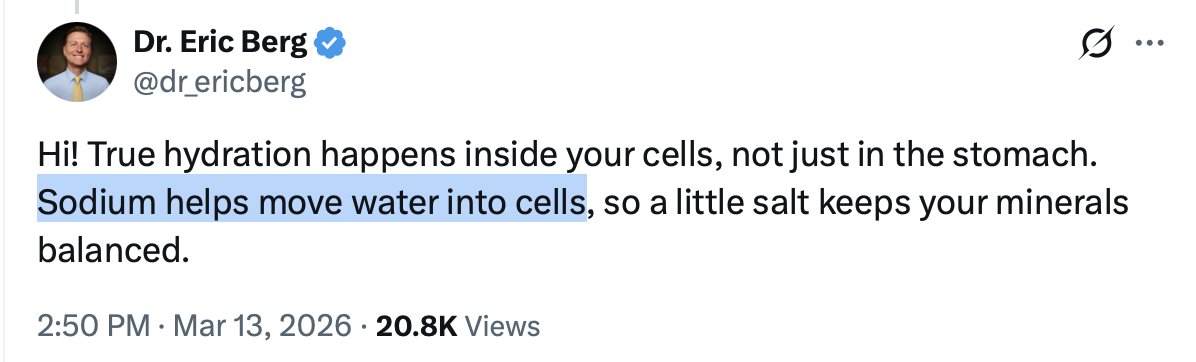

I try to remind med students and practicing physicians who consider scaling back or giving up clinical careers for content creation how unstable it can be.

People view content creation as freedom. You can work for yourself on your own schedule. That's true to a certain degree, but keep in mind that you don't own your audience. TikTok owns it. YouTube owns it. One change in the algorithm could significantly impact your livelihood. A few years ago, YT reclassified shorts as videos that are under 3 minutes instead of 60 seconds. Overnight, my social media income was cut in half. It didn't matter too much to me, because I still work full time as an ophthalmologist.

I know I shit on the healthcare system and point out all the terrible things we deal with as physicians, but for all its faults, practicing medicine is an incredibly stable career, much more stable than content creation.

I will never begrudge anybody for pursuing their passions. Some people just can't practice medicine anymore, and are looking for any way out they can find. Content creation is a way out, and hardworking, talented people will make it happen. I just want people to know the grass isn't always greener...

🚨The number of users who have uninstalled ChatGPT in recent days has risen to 3 million.