Matteo Latinov retweeted

Matteo Latinov

63 posts

@svpino I like random forests as well, more than decision trees, which I'd say can easily overfit the data.

@marktenenholtz Any good resources for learning time series forecasting?

@marktenenholtz Thoughts on mutual information for feature selection?

TL;DR:

1. Correlated features

2. Different model, different insights

3. Model agnostic

4. Negative feature importances

5. Strong generalization

Follow me @marktenenholtz for more high-signal ML content!

Matteo Latinov retweeted

@xLaszlo Yes, absolutely: domain-specific names for the features. I see, so you could break up the dataset and keep the various features (now belonging to different classes) linked via the common ID of the entity (a row, in this case).

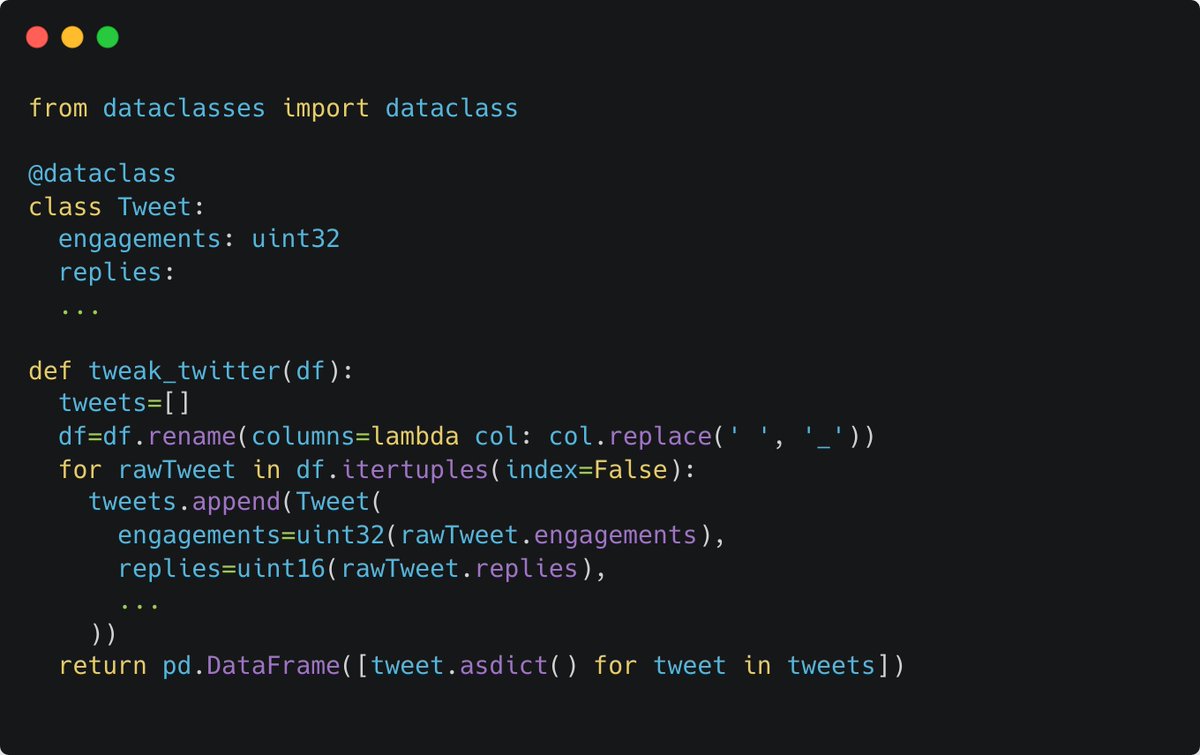

@LatinovMatteo Yes, why not. But I would definitely use domain-specific names. Also check whether the class can be decomposed into more logical parts, and move each part to its own class. Usage should be an indicator of that.

@xLaszlo How would you manage a dataclass with 100 attributes (e.g. a dataset with 100 features)? Would you explicitly list all features in the dataclass and extract/assign each with .itertuples()? So in this example: engagements, replies, ... , feature100
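
A minimal sketch of one way this could look, not necessarily what @xLaszlo has in mind: split the features into small domain-specific dataclasses that share an ID, and fill each one from a row by field name rather than by hand. The class names, the field names other than engagements and replies, and the `from_row` helper are all hypothetical. The `Row` namedtuple stands in for what pandas' `DataFrame.itertuples(index=False)` yields, assuming the column names are valid Python identifiers.

```python
from collections import namedtuple
from dataclasses import dataclass, fields


# Hypothetical domain-specific groups; names are illustrative only.
@dataclass
class Engagement:
    tweet_id: int
    engagements: int
    replies: int


@dataclass
class Content:
    tweet_id: int
    text_length: int
    has_media: bool


def from_row(cls, row):
    """Build `cls` from a namedtuple row, using only the fields `cls` declares."""
    values = row._asdict()
    return cls(**{f.name: values[f.name] for f in fields(cls)})


# Stand-in for rows yielded by DataFrame.itertuples(index=False).
Row = namedtuple(
    "Row", ["tweet_id", "engagements", "replies", "text_length", "has_media"]
)
rows = [Row(1, 120, 4, 280, True), Row(2, 15, 0, 42, False)]

# Each group is built from the same rows and stays linked via tweet_id.
engagement = [from_row(Engagement, r) for r in rows]
content = [from_row(Content, r) for r in rows]
```

Each feature is still declared once in its class, but nothing has to be assigned attribute by attribute, and the groups can be rejoined on `tweet_id`.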

Matteo Latinov retweeted

I start every ML project by creating these files, more or less in this order:

1. .gitignore/README.md

2. eda.ipynb

3. data_notes.md

Days later...

4. baseline.(py/ipynb)

5. train.py

6. validation.py

7. error_analysis.ipynb

Structure -> creativity

Matteo Latinov retweeted

#Sunday💤day - Check out our latest #podcast on #OSA! An excellent complement to our other work covering this incredibly important topic!

open.spotify.com/episode/64T5fS… pic.twitter.com/V1C6PP5tPE

Matteo Latinov retweeted

Last day to get this for $15!

Goes up to $25 tomorrow!

twitter.com/marktenenholtz…

Mark Tenenholtz (@marktenenholtz)

"The Autonomous Data Scientist" is LIVE! Get it for $15 and you'll learn how to make $150k+ to work on some of the most exciting problems out there in data science. All for less than you'll pay for coffee this month. This price is only good until Thursday (it's going up)!

@marktenenholtz Really enjoyed the course! Inspirational and straight to the point. I'll be looking to apply this framework from here on out. Well worth the money IMHO.

5 stars were given😀

Matteo Latinov retweeted

🔥#SundayFunday🔥 Burning heel pain got you down? It might be #plantar #fasciitis! Read this quick and informative article from @DMilesPT who educates us on heel pain, how to #rehab and how to #recover! barbellmedicine.com/blog/guide-to-…

Matteo Latinov retweeted

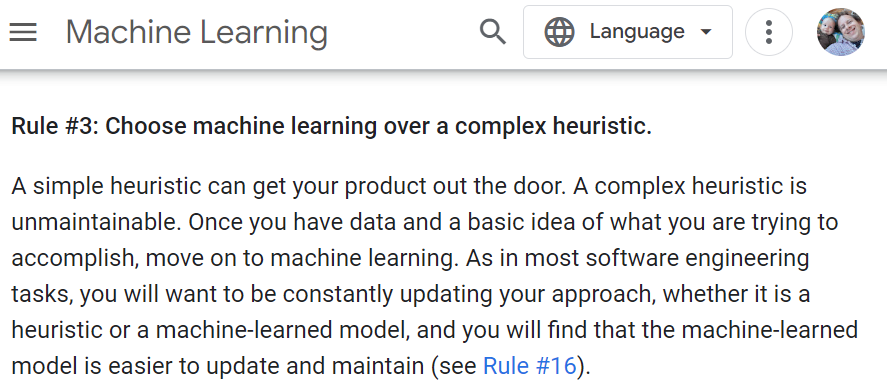

This is a great rule from Google's machine learning guides:

developers.google.com/machine-learni…

Matteo Latinov retweeted

💤#SundayFunday💤 Today we share a ⭐NEW⭐ article from @AustinBaraki featuring @NateGordonMD on the important topic of #SleepApnea: an incredibly common condition that can have serious consequences but is, fortunately, very treatable! Check it out! 👇👇

barbellmedicine.com/blog/a-basic-g…

Matteo Latinov retweeted

@jeremyphoward Thank you! Any chance fastai will be available on Amazon Sagemaker Studio Lab in the near future?

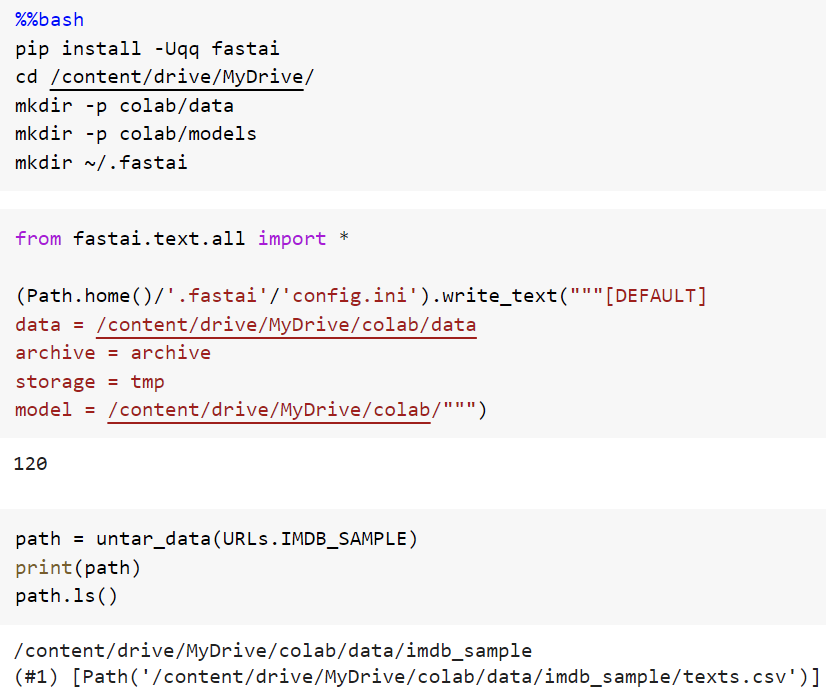

Do you use Google Colab and fastai? If so, here's a nifty trick to automatically load and save all models and data to Google Drive:

colab.research.google.com/drive/1cyvP1-3…
