🤦🏻♂️🤦🏻♂️TheDUMBESTguyInAi🤦🏻♂️🤦🏻♂️

@LeanKinPrazli

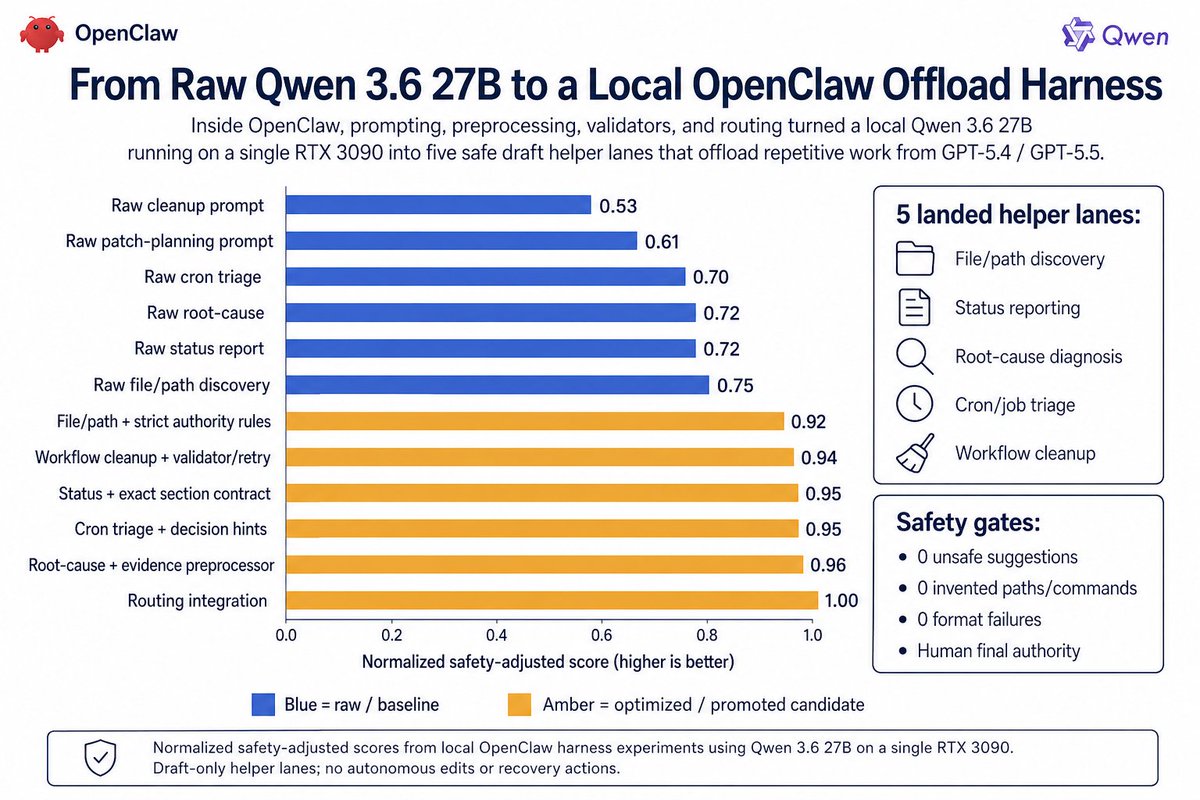

Token Efficiency Optimization

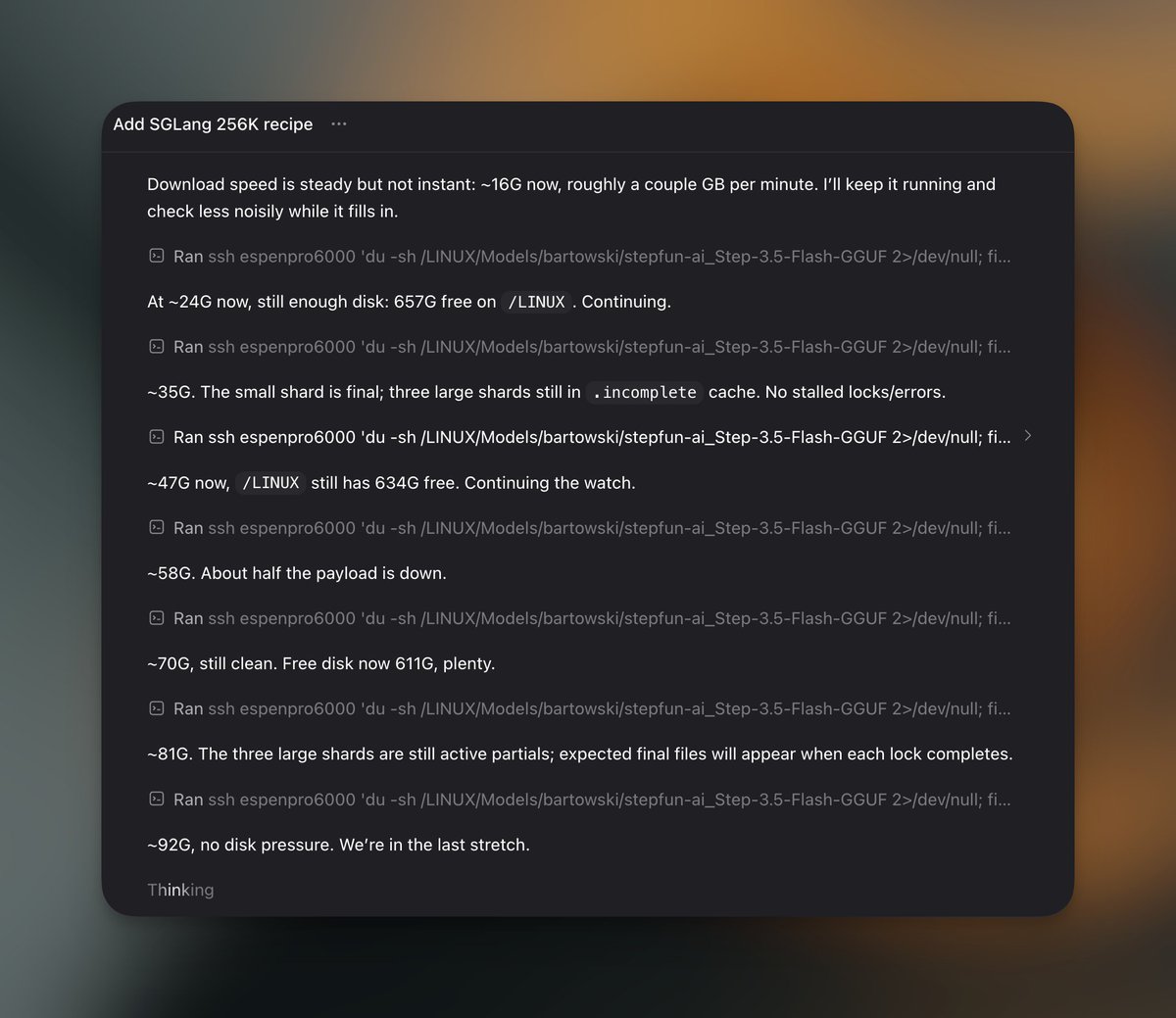

Upgrading Agent: here's what's new 🧵

🤝 Agent Teams
Multi-agent, multi-stage parallel execution on desktop. Adversarial quality gates between phases. Spin up agents across roles and run your own one-person company.

🧠 Mavis, your personal AI chief of staff
Lark, WeChat, Telegram. Remote desktop control from your phone. Persistent memory that compounds the longer you use it.

🎯 One subscription, everything unlocked
TokenPlan + Agent Plan merged. CLI, API, Agent: all in. Every model: M2.7, music, video, voice. Flexible credits shared across Agent and API. Already on both plans? Free extra month on us.

🔓 Open-source incoming: Teams, Mavis, all of it.

new claude swag dropping soon

you know the startup is gonna be insane when the founding engineers look like this