Espen JD



@Snixtp

Cyber Network Engineer | Codex enthusiast | Local AI | RTX Pro 6000 enjoyer

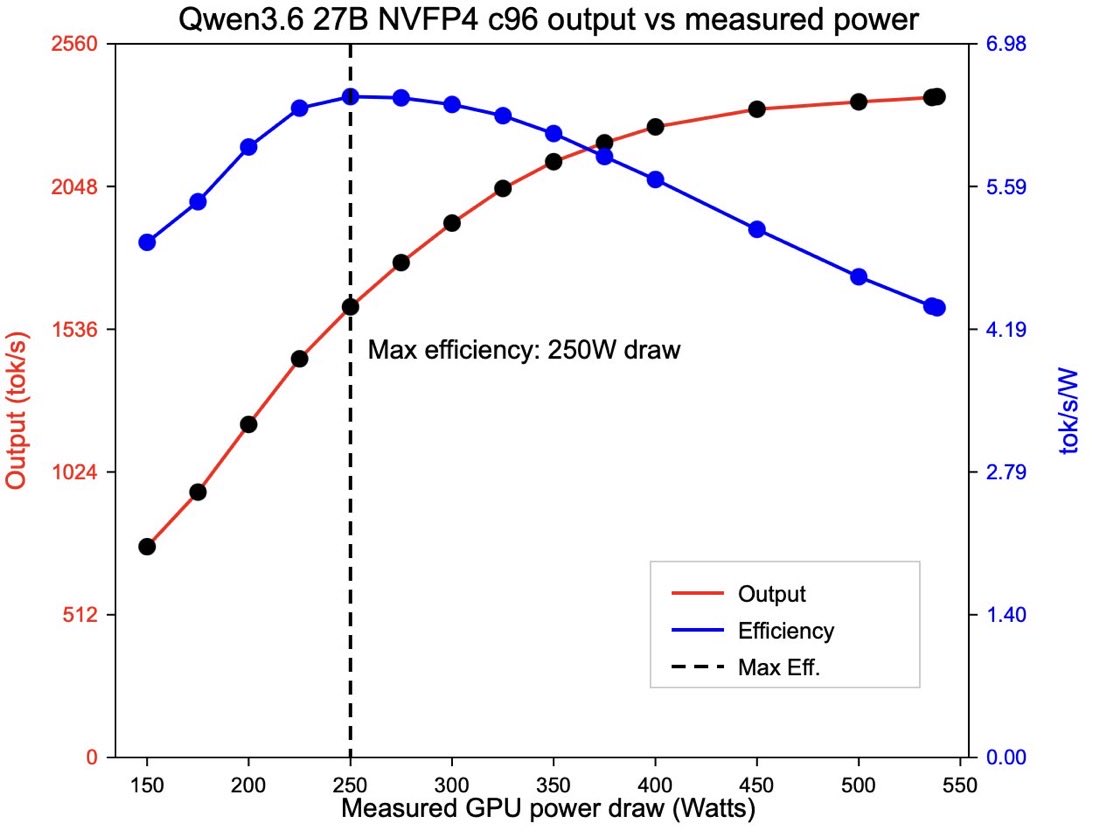

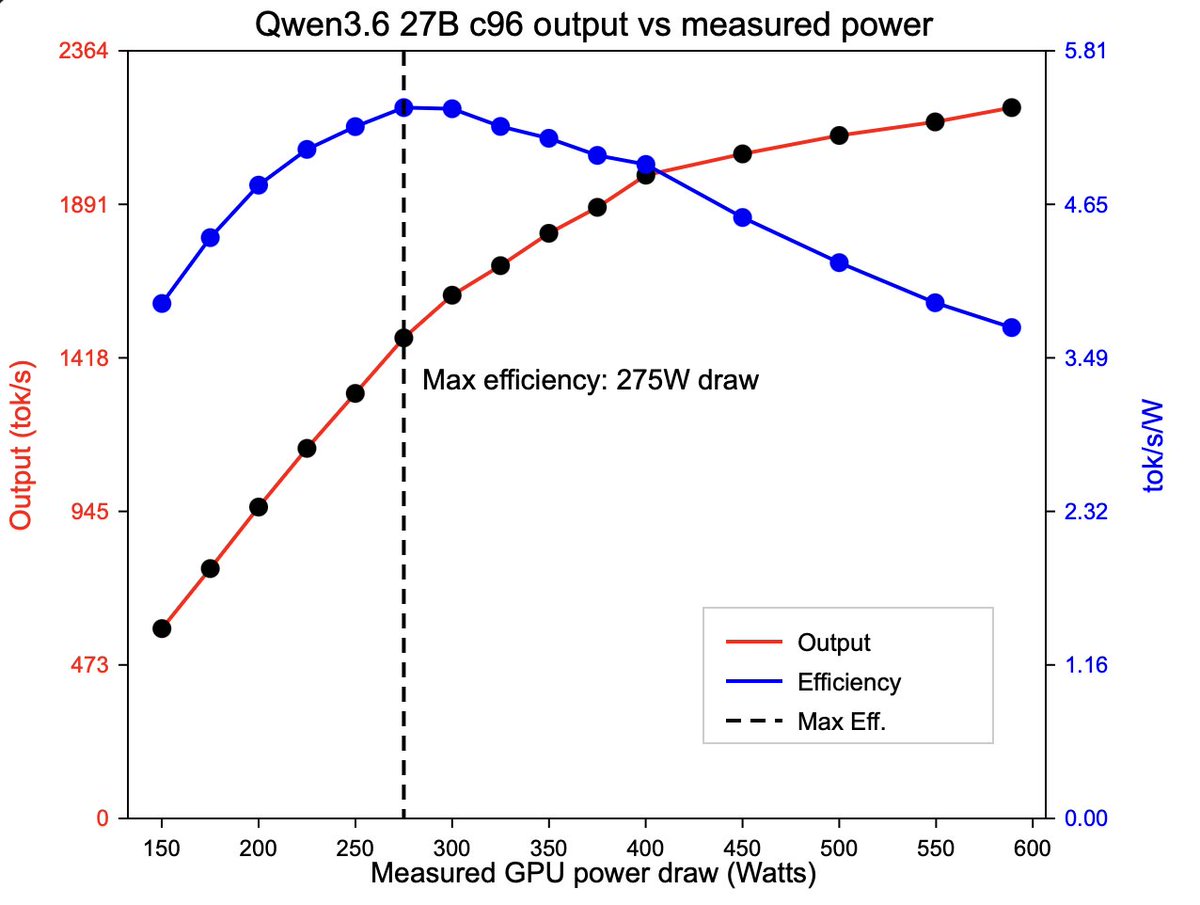

People often benchmark with quantization and similar settings, but I tested something different: on an RTX Pro 6000 Blackwell, I ran the same prompt with Qwen 3.6 27B at different power limits. 150W is the minimum power limit for this card.

| Power limit (W) | Throughput (tokens/s) |
|---|---|
| 150 | 42 |
| 200 | 68 |
| 250 | 98 |
| 300 | 108 |
| 350 | 113 |

Above 350W, tokens per second stay about the same regardless of the power limit. With this model, the card's power draw tops out at around 450W.

Conclusion: benchmark power as well, and power-limit your card. Past a certain point, extra power is just wasted energy.
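To see why a lower limit can be more efficient, the numbers above can be turned into tokens per joule (tokens/s divided by watts, since 1 W = 1 J/s). This is a minimal Python sketch that assumes the card draws its full power limit while generating. The real draw can sit below the cap, so treat the results as approximations. `MEASUREMENTS`, `tokens_per_joule` and `efficiency_table` are illustrative names, not part of any tool.

```python
# Power limit (W) -> throughput (tokens/s) for Qwen 3.6 27B on an
# RTX Pro 6000 Blackwell, as reported in the post.
MEASUREMENTS: dict[int, float] = {150: 42, 200: 68, 250: 98, 300: 108, 350: 113}


def tokens_per_joule(limit_w: float, tps: float) -> float:
    """Approximate tokens per joule at a given power limit.

    Assumes the card draws its full power limit while generating; the real
    draw can be lower, so this is an estimate, not a measurement.
    """
    # 1 W = 1 J/s, so (tokens/s) / (J/s) = tokens/J.
    return tps / limit_w


def efficiency_table(measurements: dict[int, float]) -> list[tuple[int, float, float]]:
    """Rows of (power limit, tokens/s, tokens/J), sorted by power limit."""
    return [
        (limit_w, tps, tokens_per_joule(limit_w, tps))
        for limit_w, tps in sorted(measurements.items())
    ]


if __name__ == "__main__":
    rows = efficiency_table(MEASUREMENTS)
    for limit_w, tps, tpj in rows:
        print(f"{limit_w:>4} W  {tps:>5} t/s  {tpj:.3f} tokens/J")
    best_limit = max(rows, key=lambda row: row[2])[0]
    print(f"Most tokens per joule at {best_limit} W")
```

On these numbers, 250W gives the most tokens per joule (about 0.39), compared with 0.28 at 150W and about 0.32 at 350W.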

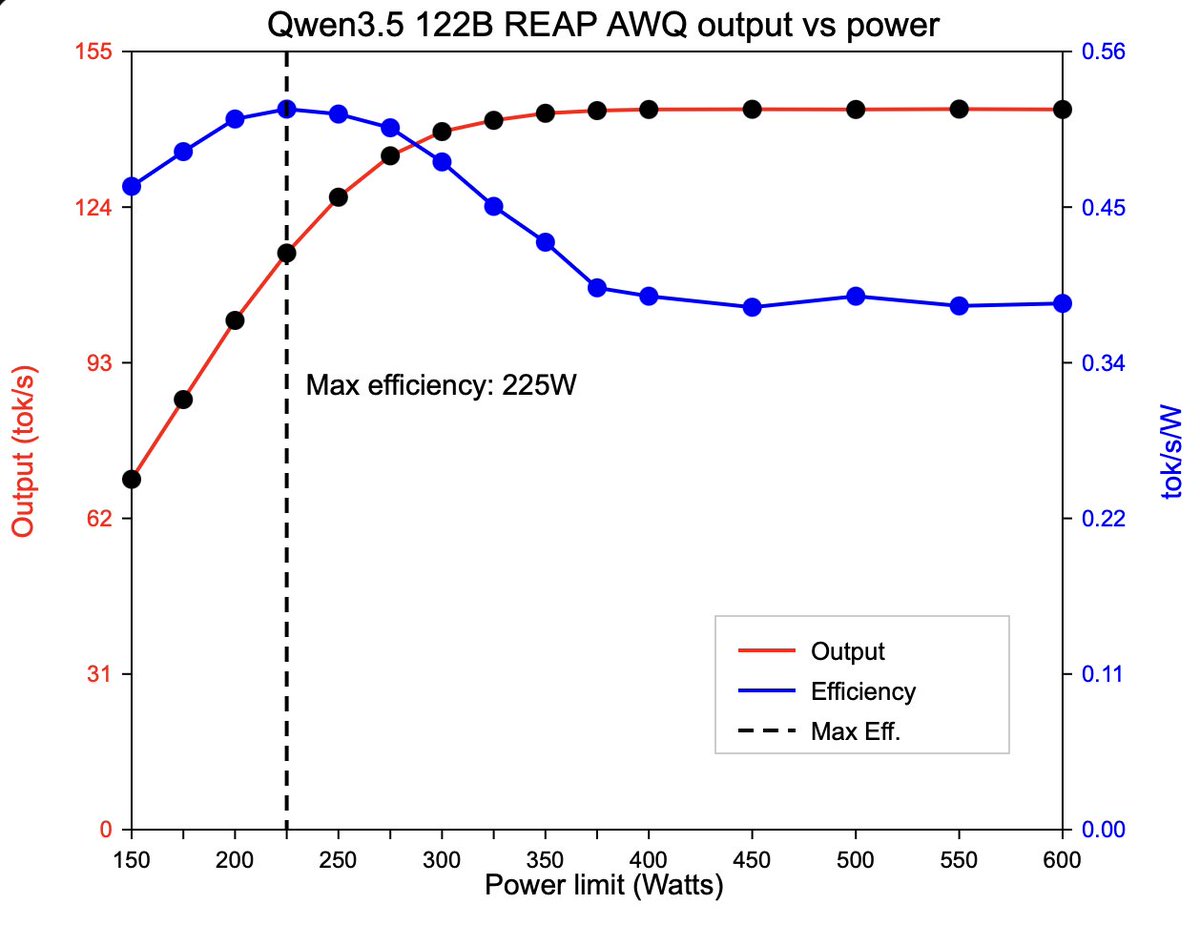

RTX Pro 6000 Workstation Edition efficiency numbers.

Models:
- Qwen3.6 27B BF16
- Qwen3.5 122B REAP

Just like with the 3090, the best efficiency is at 250W. I would still say 350W is the optimal power limit, since speed gains are limited beyond that point.
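One way to make the "best efficiency" versus "optimal" trade-off explicit is to look at how many extra tokens/s each extra watt buys, and at the lowest limit that reaches a chosen fraction of peak throughput. This minimal Python sketch reuses the Qwen 3.6 27B numbers from the earlier post, since those are the only per-limit figures given in the text. The 95% and 98% thresholds and the function names are hypothetical choices for illustration, not the author's method.

```python
# Power limit (W) -> throughput (tokens/s) for Qwen 3.6 27B, from the earlier post.
MEASUREMENTS: dict[int, float] = {150: 42, 200: 68, 250: 98, 300: 108, 350: 113}


def marginal_gains(measurements: dict[int, float]) -> list[tuple[int, int, float]]:
    """Extra tokens/s bought by each extra watt between consecutive limits."""
    limits = sorted(measurements)
    return [
        (low, high, (measurements[high] - measurements[low]) / (high - low))
        for low, high in zip(limits, limits[1:])
    ]


def lowest_limit_within(measurements: dict[int, float], fraction: float) -> int:
    """Smallest power limit reaching at least `fraction` of peak throughput."""
    target = fraction * max(measurements.values())
    for limit_w in sorted(measurements):
        if measurements[limit_w] >= target:
            return limit_w
    # Only reachable when fraction > 1, since the peak itself meets any fraction <= 1.
    raise ValueError("fraction must be at most 1.0")


if __name__ == "__main__":
    for low, high, gain in marginal_gains(MEASUREMENTS):
        print(f"{low} W -> {high} W: {gain:.2f} t/s per extra W")
    for fraction in (0.95, 0.98):
        print(f"{fraction:.0%} of peak: {lowest_limit_within(MEASUREMENTS, fraction)} W")
```

With these numbers, each extra watt buys about 0.6 t/s between 200W and 250W but only 0.1 t/s between 300W and 350W. A 95% target picks 300W and a 98% target picks 350W, so where the "optimal" limit lands depends on how much peak speed you are willing to give up.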