Leon Derczynski ⚒️☁️🏔️🌲

25.5K posts

@LeonDerczynski

NLP/ML/language/security. Principal research scientist @NVIDIA, & Prof @ITUkbh. Views ostensibly professional. llmsec stan acct

Excited about our new paper: AI Agent Traps. AI agents inherit every vulnerability of the LLMs they're built on - but their autonomy, persistence, and access to tools create an entirely new attack surface: the information environment itself. The web pages, emails, APIs, and databases agents interact with can all be weaponised against them. We introduce a taxonomy of six classes of adversarial threats - from prompt injections hidden in web pages to systemic attacks on multi-agent networks. I’m outlining the six categories of traps in the thread below.

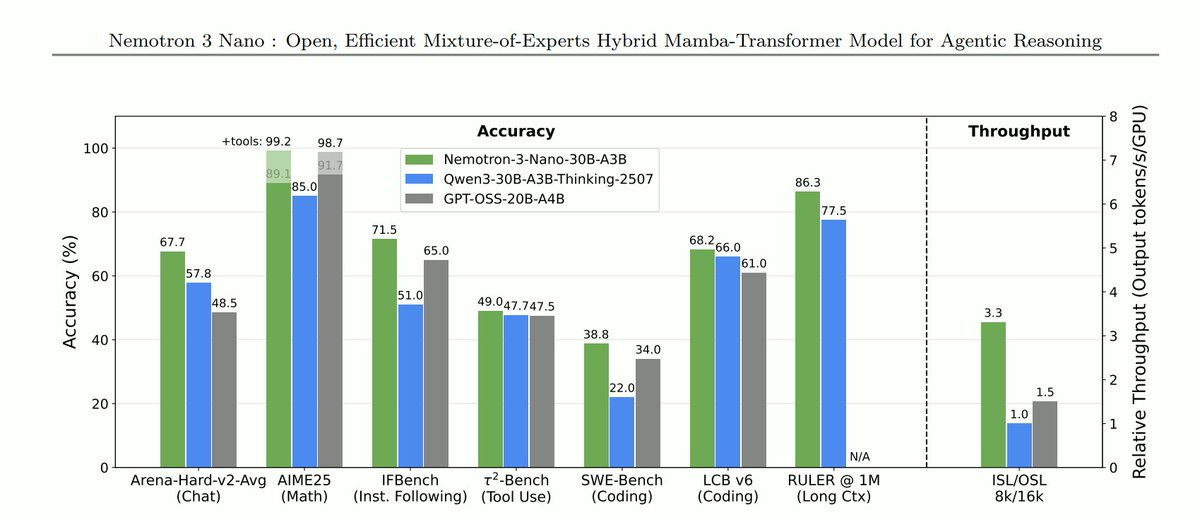

Today, @NVIDIA is launching the open Nemotron 3 model family, starting with Nano (30B-3A), which pushes the frontier of accuracy and inference efficiency with a novel hybrid SSM Mixture of Experts architecture. Super and Ultra are coming in the next few months.
