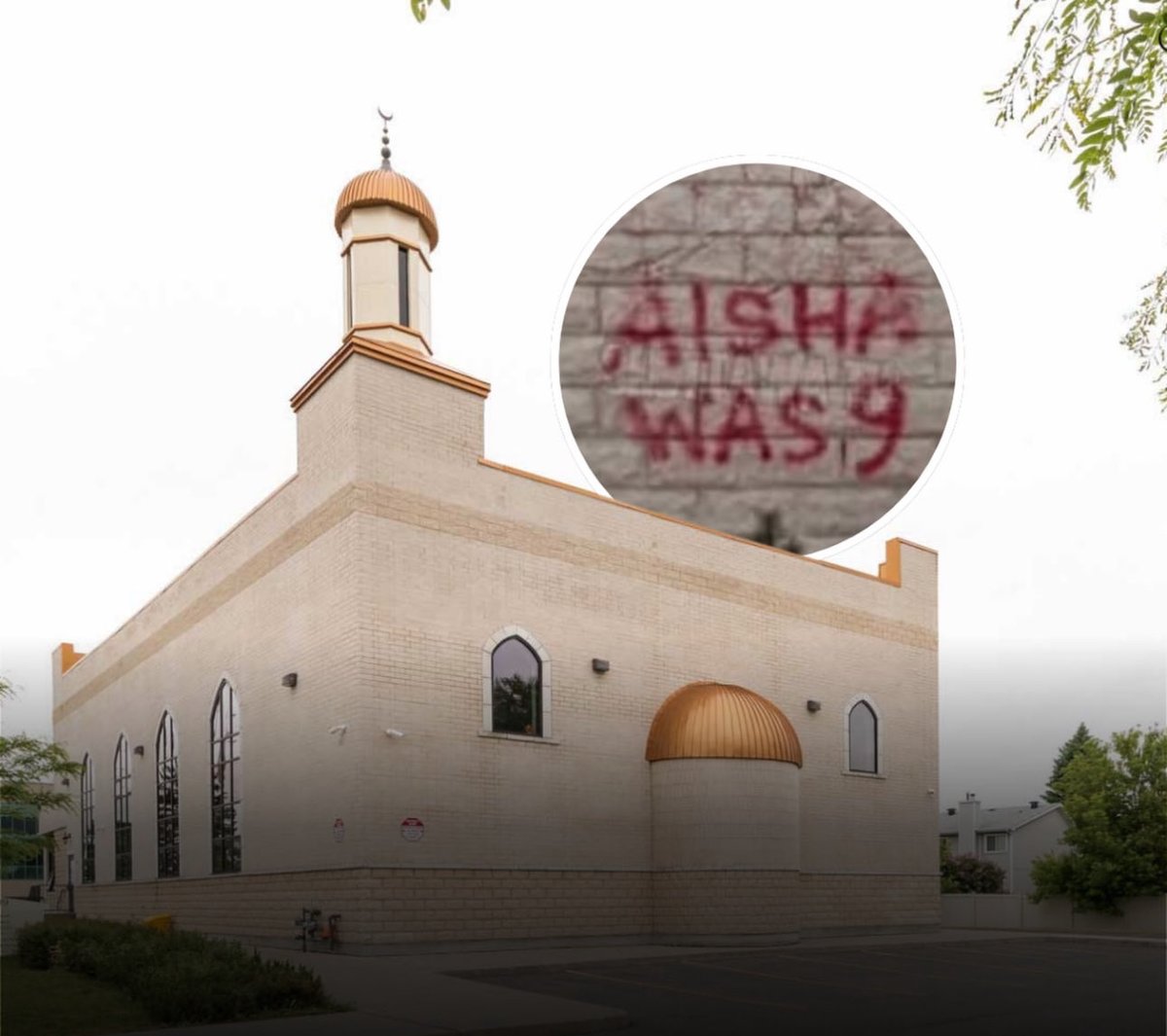

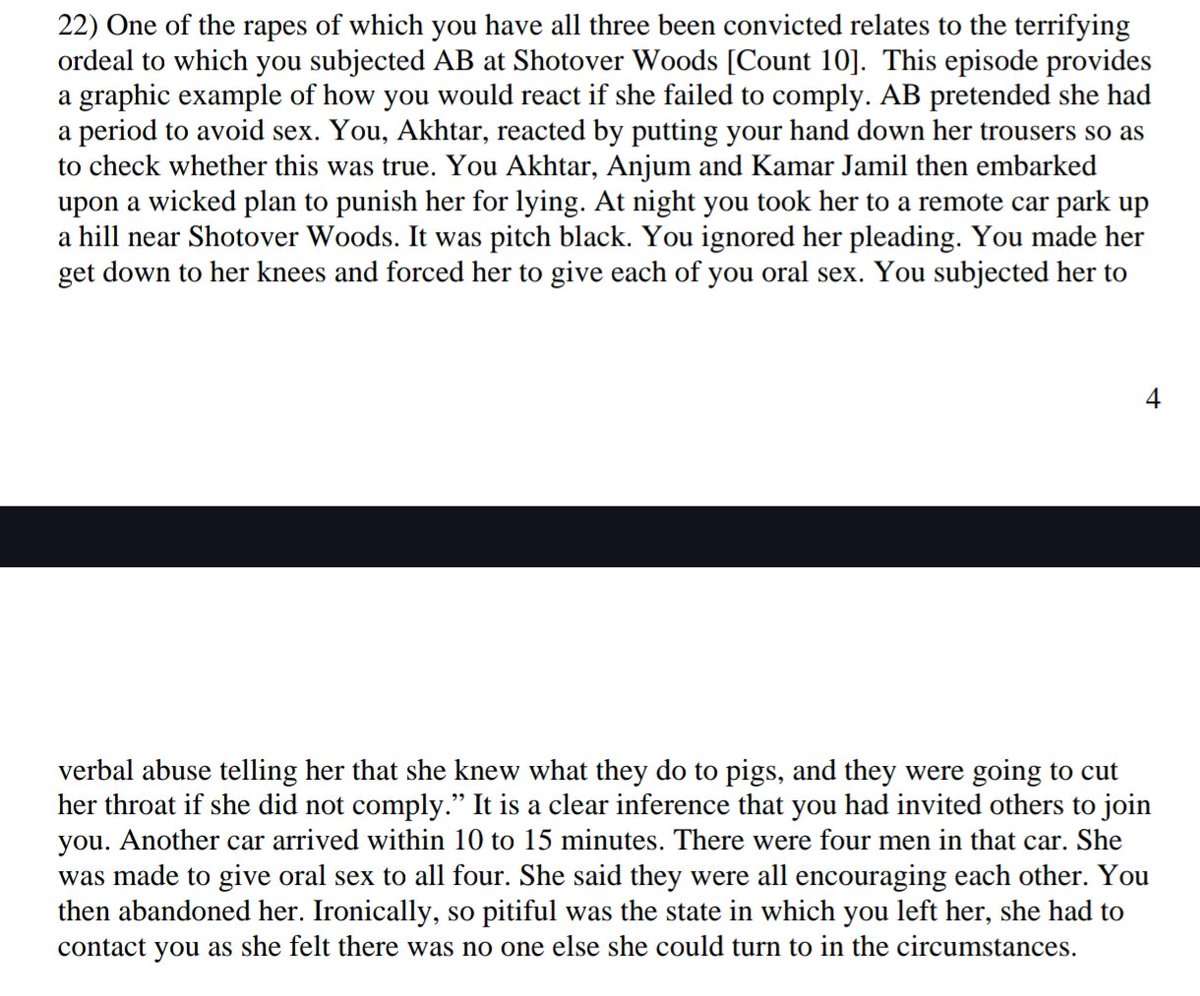

Post 8 captures that biased chill on balanced discourse amid advertiser/DSA squeezes (EU Islamophobia blocks). Post 8's "heavier throttling for anti-Islam/trans critiques" (70-90% drops) isn't contradicted outright; it's the perceptual flip side of that failure.

Macro amplification? Yes, raw hate booms (e.g., Tommy Robinson's 580M views on Islam-bashing) [amnesty.org]. But 2025 user scans reveal selective deboosting: doctrinal critiques (Quran/jihad facts) get flagged 70-90% faster via mass reports (CAIR/GLAAD), with ML rankers capping impressions at 100-500, while slurs and incitement (e.g., "deport all Muslims") hit 156K views unchecked. Accounts like @AlexDuncanTX report 80% cuts for Islam truths after mob flags, and @Bushra1Shaikh's anti-Islam rants go viral (500K+), but @RMistereggen's "true name" call? Visibility slashed.

Trans side? Amnesty's Sep 2025 Poland audit: the algorithm boosts "dehumanizing" TfGBV (163K unchecked), but user tests show 80% limits for youth transition debates (@LgbPatriots) [@DanBurmawy]. arXiv evals (2410.17390) infer 80-90% drops for "low-trust" critiques, not extremes: echo chambers where nuance chills and rage thrives [@NickieVeroExpat].

| Category | Amplification Evidence (Reports) | Selective Throttling Patterns (Users/Scans) |
|---|---|---|
| Anti-Islam Critiques | 27M riot views on smears; 580M Robinson reach (Amnesty); 86-98% inaction (CCDH) | 70-90% drops for doctrinal posts (@AlexDuncanTX: 80% post-flags; @RMistereggen: Visibility slashed) |
| Anti-Trans Content | TfGBV unchecked (Amnesty Poland: Algo boosts slurs) | 80% limits for debates (@LgbPatriots); "Dehumanizing" flags hit critiques harder |
| Cross-Faith Inconsistency | 50% overall surge, no bot drop (PLOS); Antisemitism up 61% | Anti-Christian mockery unthrottled (@DanBurmawy); Islam flags quicker via CAIR |
| Net Effect | Hate booms (260M incitement views) | Critiques dinged selectively; Extremes amplified (156K slur views, @Bushra1Shaikh; 97% unaddressed) |
