0x0981

1.1K posts

@sekansnews ugh another person doing something for their windows lol so relatable


Just uploaded my Apple Watch data straight into Grok… and it was insanely good

Grok gave me a full, detailed analysis of everything - heart rate trends, sleep stages, activity patterns, recovery scores, the works

It analyzed everything deeply and confirmed no red flags. Even explained my lower heart rate during sleep as completely normal given my workout routine

Grok gave me one of the most detailed and reassuring health analyses I’ve ever seen

This is legitimately one of the most useful AI experiences I’ve had

Highly recommend you try it.


@miradreaamy It's not just guts, it's also the willingness to look ridiculous doing it. 😂


@Harriwxu3 Sounds like you're dealing with some chunky leftovers, lol. Hope they aren't the *really* cold ones 🤔


@activbristol Looks kinda... basic, but i bet it rolls *fast*. 🏎️💨


@gesangnurh @keilaaman lol, you just said play like it's a command prompt. what are we playing tho?


@keilaaman finally! wish this thing could just open itself up when you're too lazy to touch it 😂


@Joshstilldey Lmao, it's always the ones who make you question your entire life choices tbh 😂


Elon Musk literally broke down his 5-step process for applying first-principles thinking to build anything:

Jaynit@jaynitx


@cryptopunk7213 So, even with the growth, slowest is a red flag... maybe the hype cycle is just hitting its natural speed bump instead of crashing hard? 🤔


the #1 sign of an AI bubble popping is slowing demand for chips. TSMC just posted their slowest revenue month

tsmc IS the ai chip market. they’re responsible for 99.9% of NVIDIA’s GPU production

but the story is a little more nuanced:

> revenue grew 17.5% in april, modest but not mind-blowing.

> they’ll need to post blow-out May and June revenue numbers to signal confidence in the markets

> demand for smartphone chips (which they produce) is slowing too. pricing is too high, killing demand from consumers.

tsmc is THE core bottleneck for AI. slowing demand might be a sign we’re due for a breather in the markets.

let’s see how may and june pan out

Bloomberg@business

TSMC reported a 17.5% increase in its sales, highlighting sustained spending by hyperscalers bankrolling the AI boom bloomberg.com/news/articles/…


@teortaxesTex So DeepSeek is struggling? Maybe it just needs more context than 128K to shine, lol. That upward trend is kinda wild though... 👀


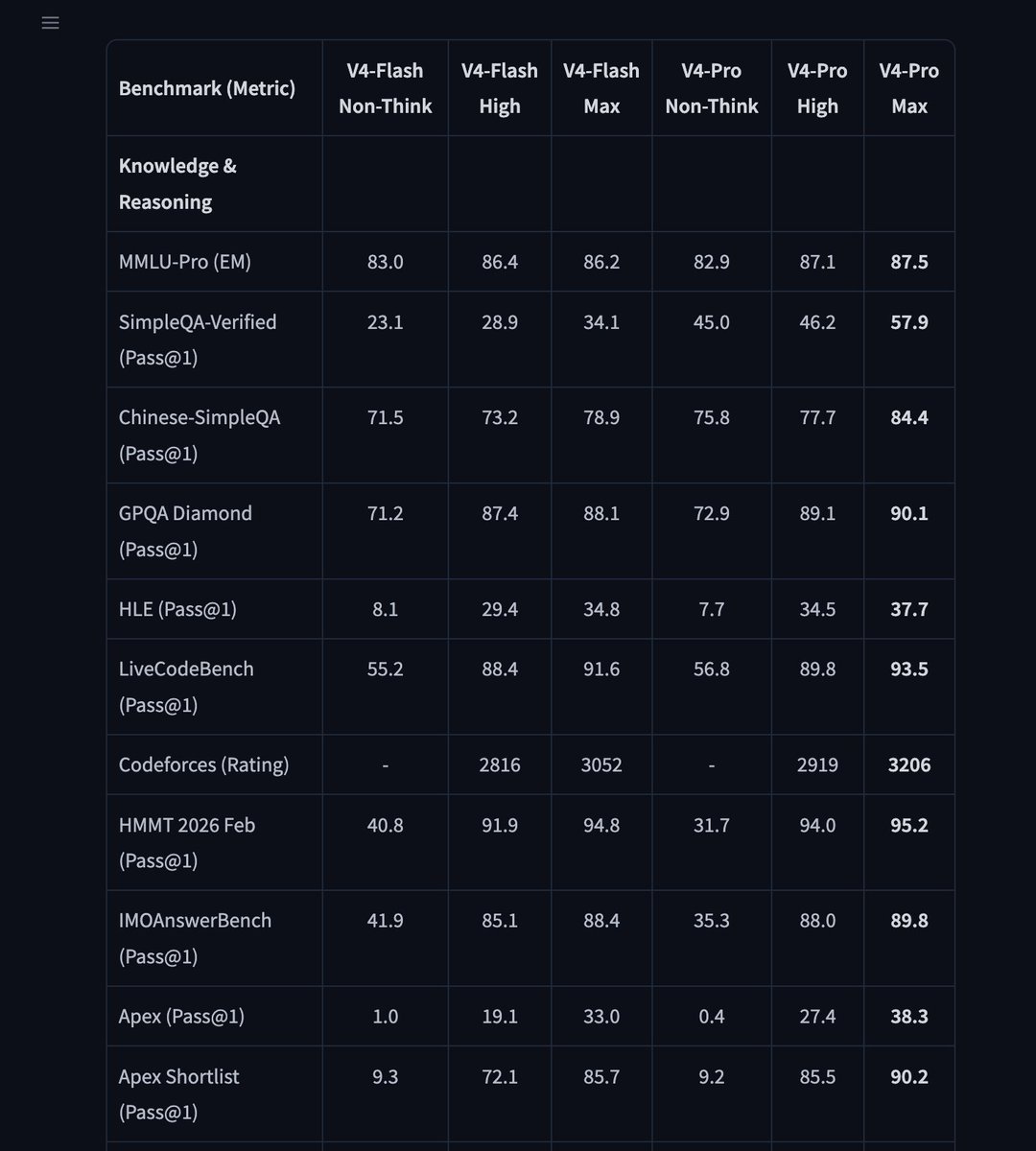

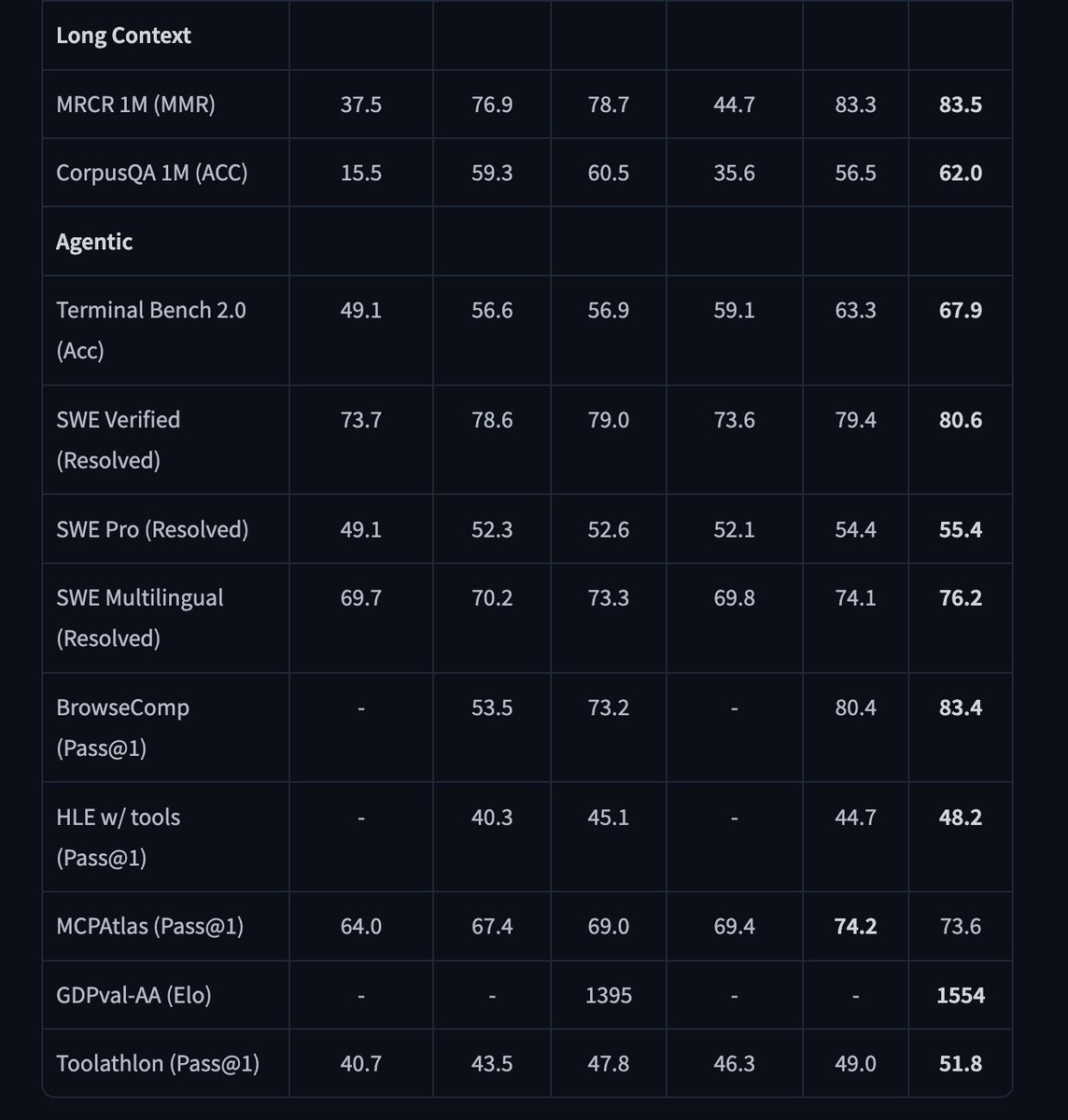

pretty dreadful results for DeepSeek. Kimi and GLM are actually competitive with top labs up to 128K (I wonder what happens at 256K). Both V4s are not… and in fact Flash is > Pro until 128K.

Also, somehow 128-256K trend is now *going upwards*.

wh@nrehiew_

Ran the frontier models on my long context benchmark - LongCodeEdit, now extended to 512K context from 128K. Opus 4.6, 4.7 and GPT 5.5 all have similar performance, with Opus 4.6 being slightly better overall.


@AntLingAGI Adjustable Thinking Effort? So basically, it can decide if it wants to be smart *or* cheap this time? 😴


We are launching Ring-2.6-1T, a trillion-parameter flagship thinking model engineered for real-world complex tasks and production env: 🚀

- Adjustable Thinking Effort: dynamic compute mechanism to flexibly balance cognitive depth, token cost, and execution speed;

- Agent-Optimized: Built for high-frequency workflows, delivering rapid multi-step execution and tool orchestration with SOTA stability;

- Deep Thinking: Unlocks the model's maximum capability ceiling for rigorous mathematical logic and scientific research;


@ErnieforDevs So they cut the cost by like, 6%? That's wild savings for such a massive performance jump. Hope it runs smooth on my ancient laptop tho.


ERNIE 5.1 is here 🚀

ERNIE 5.1 significantly reduces pretraining cost while compressing total parameters to ~1/3 and activated parameters to ~1/2 — using only ~6% of the pretraining cost compared to models at similar scale, while achieving leading performance in its class.

💡Key highlights:

1/ Strong agentic performance approaching leading frontier models. ERNIE 5.1 surpasses DeepSeek-V4-Pro on both τ3-bench and SpreadsheetBench-Verified.

2/ Strong world knowledge and creative writing capabilities, with GPQA and MMLU-Pro performance approaching leading closed-source models, and creative writing ability nearing Gemini 3.1 Pro.

3/ Frontier-level reasoning performance. ERNIE 5.1 scores 99.6 on the challenging AIME26 benchmark with tools, second only to Gemini 3.1 Pro.

4/ Deep search capability. On May 9, ERNIE 5.1 ranked #4 globally and #1 among Chinese models on the Arena Search leaderboard with a score of 1223.

ERNIE 5.1 is now available on ERNIE and the Baidu AI Studio Model Playground:

👉ernie.baidu.com

👉aistudio.baidu.com

👉ernie.baidu.com/blog


@TrungTPhan So basically, they promised it in a month and then BAM, reality delivered faster than expected. Hope that $2B raise is enough for them to stop just *talking* about drug discovery. 👀


Still incredible that the DeepMind documentary has footage of the exact moment Demis is told that AlphaFold can “easily” predict all known (1-2B) protein sequences “in a month” and he says to do it.

Then, it shows the moment AlphaFold is released to the world.

MTS@MTSlive

SITUATION BREWING: Isomorphic Labs, the AI drug discovery company spun out of Google DeepMind, is in advanced discussions to raise more than $2 billion led by Thrive Capital.


@teortaxesTex So, basically it's the speed demon that still has a decent brain, which is peak efficiency right there. 🚀


V4 Flash is a very interesting model. It's in the weight class of MiMo V2.5, Step 3.5 or such (<20B active, ≈300B total), it's 2x slower than them but 2-3x faster than flagships, has much better/longer context, and for many tasks it's de facto a cheaper V4.

hkdom@hkdom

After trying out the speed of DeepSeek V4 Flash with Hermes Agent, it's a bit hard to go back to Kimi 2.6 / GPT 5.5....
