Luke Bailey

@LukeBailey181

CS PhD student @Stanford. Former CS and Math undergraduate @Harvard.

for data-constrained pre-training, synth data isn’t just benchmaxxing: it lowers loss on the real data distribution as we generate more tokens

for even better scaling, treat synth gens as forming one long 𝗺𝗲𝗴𝗮𝗱𝗼𝗰: 1.8x data efficiency, with larger gains under more compute

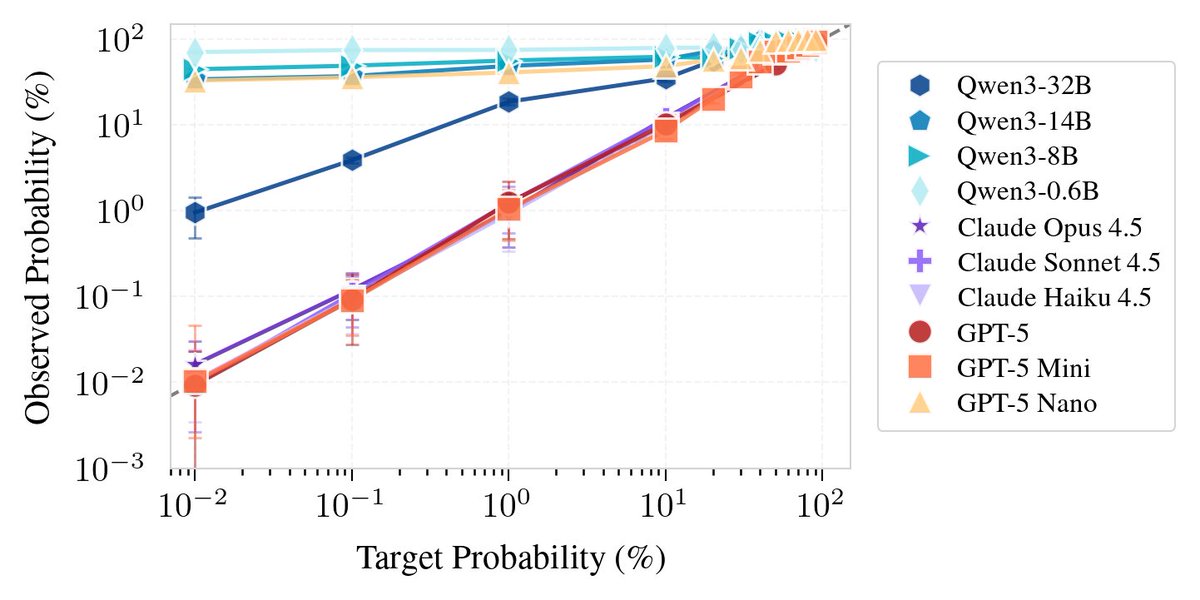

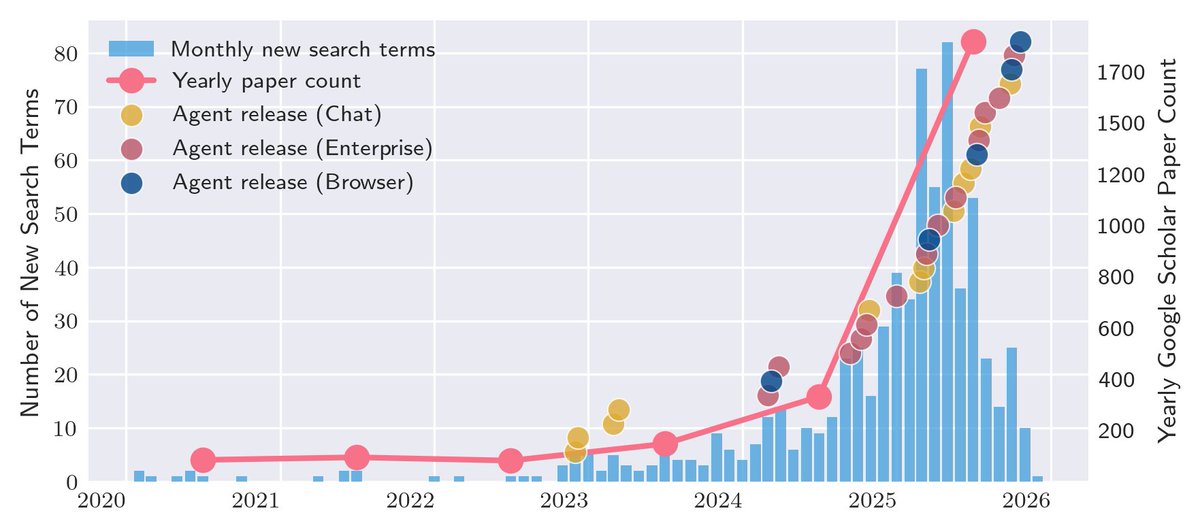

🚨The 2025 AI Agent Index is out! 🚨 Amidst recent buzz over 🦀 and @NIST’s new agent initiative, we find:

- Selective reporting – esp. on safety
- Almost all agents backend just 3 model families
- Many agents don’t ID themselves as bots online
- Big US/China gaps
- And more…

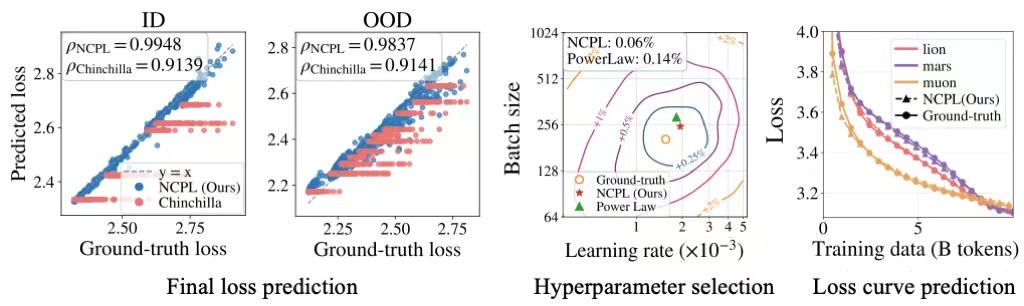

🚀Introducing our new work: Configuration-to-Performance Scaling Law with Neural Ansatz. A language model trained on large-scale pretraining logs can accurately predict how training configurations influence pretraining performance and generalize to runs with 10x more compute.

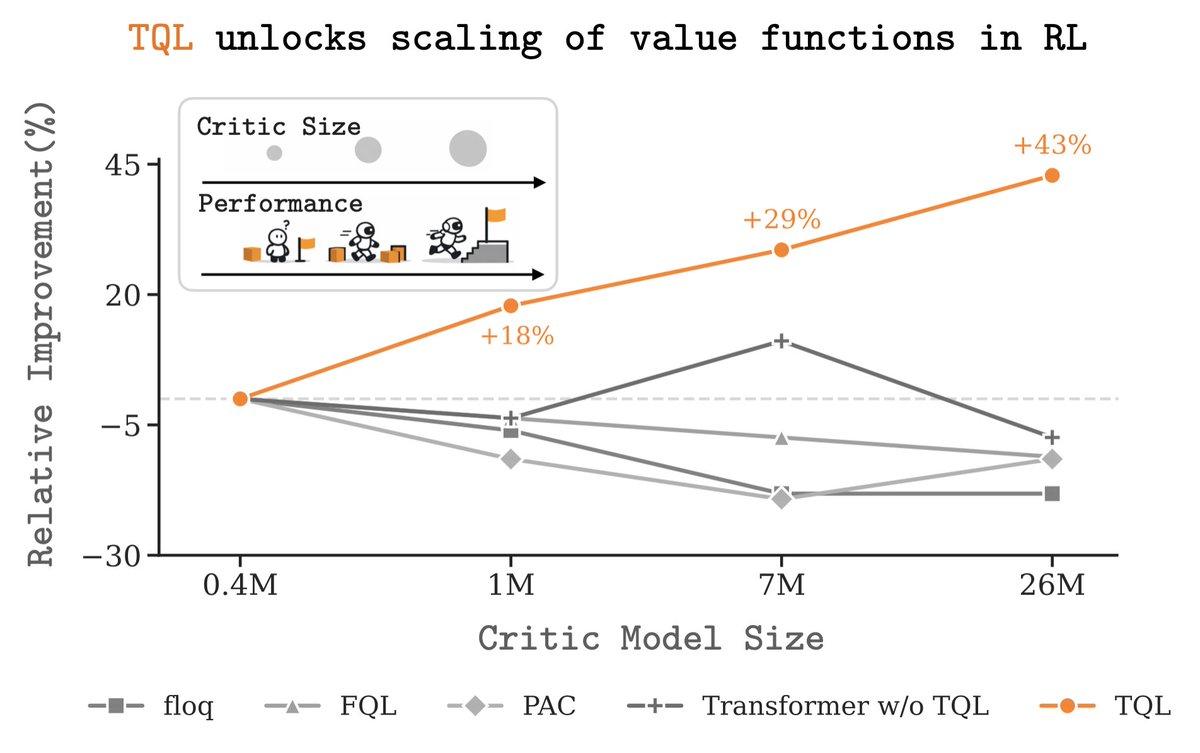

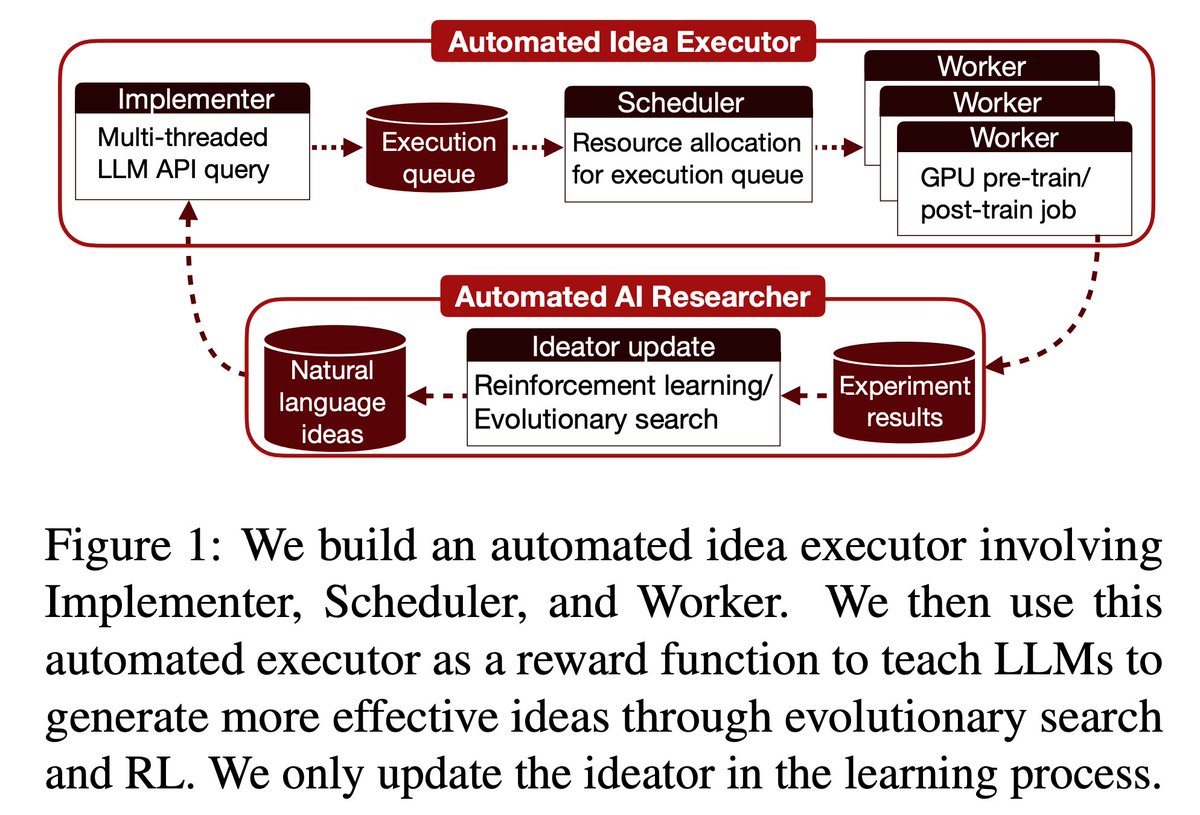

Can LLMs automate frontier LLM research, like pre-training and post-training? In our new paper, LLMs found post-training methods that beat GRPO (69.4% vs 48.0%), and pre-training recipes faster than nanoGPT (19.7 minutes vs 35.9 minutes). 1/

How to get AI to make discoveries on open scientific problems? Most methods just improve the prompt with more attempts. But the AI itself doesn't improve. With test-time training, AI can continue to learn on the problem it’s trying to solve: test-time-training.github.io/discover.pdf