Unconfirmed Labs (@unconfirmedlabs)

We built an archive of every Sui mainnet checkpoint ever produced (roughly 266.9 million checkpoints totaling 13.4 TB) and serve it from Cloudflare's edge network. Unlike a conventional mirror, every checkpoint in the archive had its BLS signature independently verified at ingestion time against the validator committee that signed it.

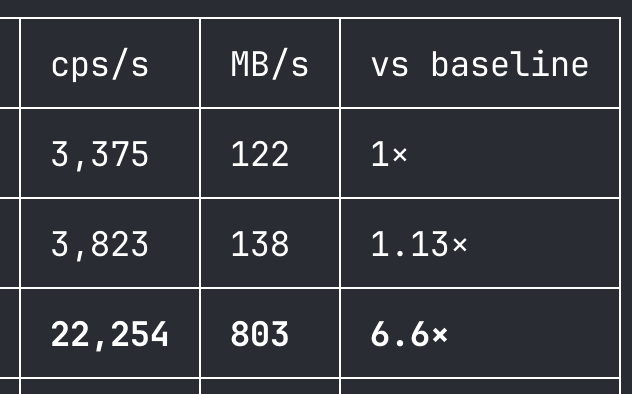

The architectural innovation that makes this practical on a small operational budget is per-epoch bundling. The naive design would store one R2 object per checkpoint: 266.9M objects, $1,201 in Class A operations for a single backfill, and an archive that is painful to list or stream. Instead, we concatenate every zstd frame in an epoch into a single `epoch-N.zst` file with a 20-byte-per-checkpoint `.idx` sidecar. Ingest cost drops ~660× (≈$1.82 for the full mainnet backfill), the request count for a bulk whole-archive download drops ~240,000×, and a per-checkpoint lookup becomes one range read. A six-column D1 table holds only the routing metadata that isn't derivable (epoch, seq range, and SHA-256 integrity hashes); everything else (object keys, counts, idx sizes) is computed on the fly by the proxy.
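
To make the bundling idea concrete, here is a minimal sketch of how one epoch's frames could be packed into a data file plus a fixed-width index. The post only states that each idx entry is 20 bytes; the field layout used here (u64 sequence number, u64 offset, u32 length, little-endian) and the names `Frame`, `Bundle`, `writeU64`, and `buildBundle` are assumptions for illustration, not the project's actual format or API.

```typescript
// Assumed idx entry layout (hypothetical; the post only gives the 20-byte size):
//   bytes 0-7    checkpoint sequence number (u64, little-endian)
//   bytes 8-15   byte offset of the frame inside epoch-N.zst (u64, little-endian)
//   bytes 16-19  compressed frame length (u32, little-endian)
const ENTRY_SIZE = 20;

interface Frame {
  seq: number;       // checkpoint sequence number
  bytes: Uint8Array; // one already-compressed zstd frame
}

interface Bundle {
  data: Uint8Array; // concatenated frames, i.e. the body of epoch-N.zst
  idx: Uint8Array;  // 20 bytes per checkpoint, i.e. the body of the .idx sidecar
}

// Write a non-negative safe integer as a little-endian u64 using two u32 halves.
function writeU64(view: DataView, pos: number, value: number): void {
  view.setUint32(pos, value % 0x100000000, true);
  view.setUint32(pos + 4, Math.floor(value / 0x100000000), true);
}

// Concatenate the frames in order and record where each one starts.
function buildBundle(frames: Frame[]): Bundle {
  const total = frames.reduce((n, f) => n + f.bytes.length, 0);
  const data = new Uint8Array(total);
  const idx = new Uint8Array(frames.length * ENTRY_SIZE);
  const view = new DataView(idx.buffer);

  let offset = 0;
  frames.forEach((frame, i) => {
    data.set(frame.bytes, offset);
    const base = i * ENTRY_SIZE;
    writeU64(view, base, frame.seq);
    writeU64(view, base + 8, offset);
    view.setUint32(base + 16, frame.bytes.length, true);
    offset += frame.bytes.length;
  });
  return { data, idx };
}
```

Because the zstd format allows frames to be concatenated, a file built this way still decompresses as a single stream, while the index lets a reader pull out one frame by byte range. This sketch holds the whole epoch in memory for brevity; a real ingester would presumably stream frames into a multipart upload instead.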

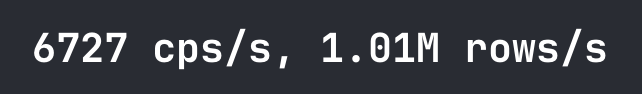

The serving story is shaped entirely around Cloudflare's Cache API. Point lookups at `/12345678.binpb.zst` hit a Hono Worker that resolves seq → epoch via D1, reads 20 bytes of idx to find the offset, range-GETs the frame from R2, and then caches the result at the edge with a one-year immutable TTL. Warm reads are served from Cloudflare edge RAM, bypassing the Worker, D1, and R2 entirely: we measured 14,590 req/s at 256 concurrency with a p50 of 14 ms from a single Bun client. Cold-path throughput plateaus at ~200 req/s because every miss chains three serial origin round-trips (the D1 lookup, the idx read, and the frame read). Bulk consumers bypass the proxy via a separate R2 public domain, which serves whole `.zst` and `.idx` objects directly. The TypeScript SDK caches routing and idx files locally, so repeat lookups become network-free binary searches.
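
The lookup path described above can be sketched in a few pure functions. This is an illustration under stated assumptions, not the project's code: the 20-byte entry layout (u64 seq, u64 offset, u32 length, little-endian), the `EpochRoute` shape, and every function name here are hypothetical. It also assumes sequence numbers within an epoch are contiguous, which is what would let a proxy fetch exactly one 20-byte entry by arithmetic instead of scanning.

```typescript
const ENTRY_SIZE = 20; // bytes per idx entry, as stated in the post

interface EpochRoute { epoch: number; firstSeq: number; lastSeq: number }
interface IdxEntry { seq: number; offset: number; length: number }

// Read a little-endian u64 as a JS number (exact up to 2^53 - 1).
function readU64(view: DataView, pos: number): number {
  return view.getUint32(pos, true) + view.getUint32(pos + 4, true) * 0x100000000;
}

// Decode the i-th entry under the assumed layout.
function parseEntry(idx: Uint8Array, i: number): IdxEntry {
  const view = new DataView(idx.buffer, idx.byteOffset + i * ENTRY_SIZE, ENTRY_SIZE);
  return { seq: readU64(view, 0), offset: readU64(view, 8), length: view.getUint32(16, true) };
}

// Step 1: seq -> epoch, as a binary search over routing rows sorted by firstSeq.
function findEpoch(routes: EpochRoute[], seq: number): EpochRoute | undefined {
  let lo = 0;
  let hi = routes.length - 1;
  while (lo <= hi) {
    const mid = (lo + hi) >>> 1;
    const r = routes[mid];
    if (seq < r.firstSeq) hi = mid - 1;
    else if (seq > r.lastSeq) lo = mid + 1;
    else return r;
  }
  return undefined;
}

// Step 2 (proxy style): the inclusive byte range of the one idx entry to fetch.
function idxByteRange(route: EpochRoute, seq: number): { start: number; end: number } {
  const start = (seq - route.firstSeq) * ENTRY_SIZE;
  return { start, end: start + ENTRY_SIZE - 1 };
}

// Step 2 (SDK style): binary search over a locally cached idx file.
function findEntry(idx: Uint8Array, seq: number): IdxEntry | undefined {
  let lo = 0;
  let hi = Math.floor(idx.byteLength / ENTRY_SIZE) - 1;
  while (lo <= hi) {
    const mid = (lo + hi) >>> 1;
    const e = parseEntry(idx, mid);
    if (e.seq === seq) return e;
    if (e.seq < seq) lo = mid + 1;
    else hi = mid - 1;
  }
  return undefined;
}

// Step 3: HTTP Range header for the frame (byte ranges are inclusive).
function rangeHeader(entry: IdxEntry): string {
  return `bytes=${entry.offset}-${entry.offset + entry.length - 1}`;
}
```

On a cold miss, these three steps correspond to the three serial round-trips; with routing rows and idx files cached locally, only the final range read touches the network.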

Check out the project below. Contributions are welcome!

github.com/unconfirmedlab…