Mike Ma {LLM Stock Prediction}

3.7K posts

@MMikeMMa

Stock prediction with LLMs @SixHQai; prev Founder @RsrchRabbit (acq.), short-biased public equities, @wharton; obsessed w/ all things HUMINT / HCI

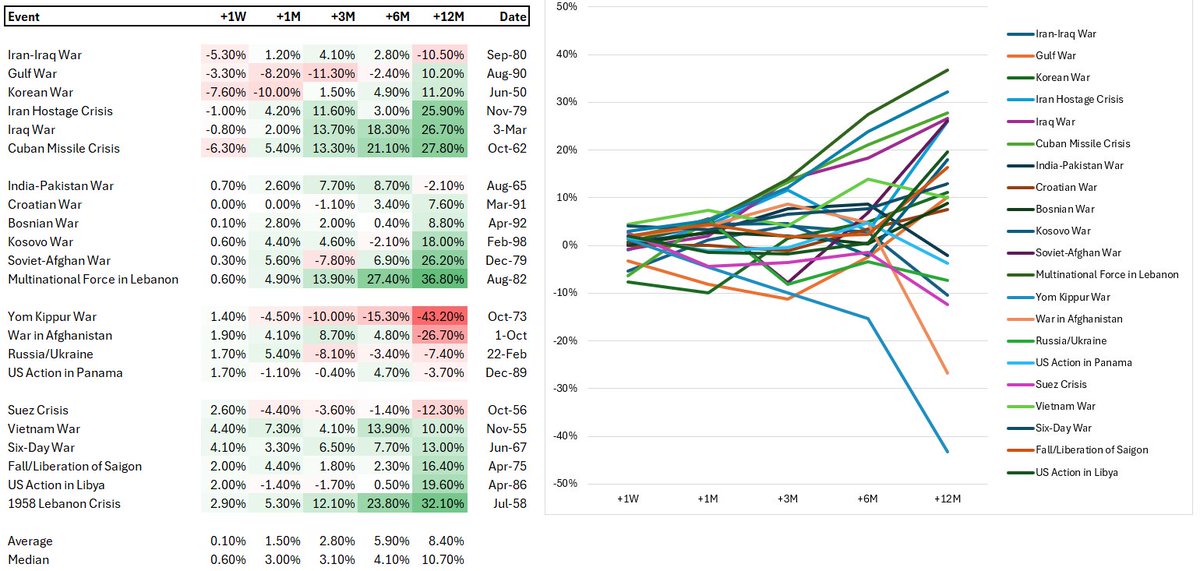

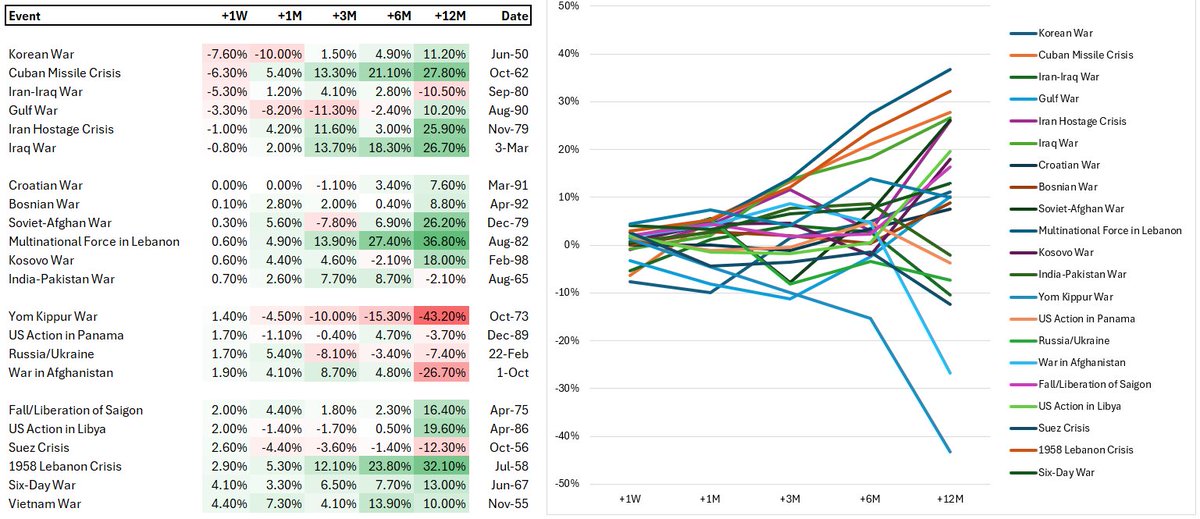

Larry Ellison on the AI moat: AI is commoditizing because models use the same public internet data. The true competitive edge isn't the model itself anymore, but access to exclusive, proprietary datasets. That is the only moat left.

particle life in 3d

Gemini's ability to summarize videos (mostly podcasts) is an exceptional time saver. The summary is often better than the original video. And since it's time-stamped, you can check the segment that really interests you. Oftentimes I also use 5.3 to extract the core argument of a finance paper. Feels like a cheat, but it losslessly compresses 80 pages to 10. Same story: I will check the 5 pages in the paper that really matter. At the same time, while Codex and Claude Code are good at coding and at reading, they are not good at doing research. They can solve, or make progress on, lemma-proving. But they need a lot of guidance and error-checking. They will keep getting better. But progress seems slower at the art of asking questions than at providing answers or summarizing them.

The dashboard design I am cooking rn is next level!

Sufficiently advanced agentic coding is essentially machine learning: the engineer sets up the optimization goal as well as some constraints on the search space (the spec and its tests), then an optimization process (coding agents) iterates until the goal is reached. The result is a blackbox model (the generated codebase): an artifact that performs the task, that you deploy without ever inspecting its internal logic, just as we ignore individual weights in a neural network. This implies that all classic issues encountered in ML will soon become problems for agentic coding: overfitting to the spec, Clever Hans shortcuts that don't generalize outside the tests, data leakage, concept drift, etc. I would also ask: what will be the Keras of agentic coding? What will be the optimal set of high-level abstractions that allow humans to steer codebase 'training' with minimal cognitive overhead?
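
A toy sketch of the analogy, under loud assumptions: the "coding agent" is just a loop over three hand-written candidate functions, the "spec" is three input/output cases, and the held-out cases play the role of a validation set. All names here (`SPEC_TESTS`, `HELD_OUT_TESTS`, `lookup_table`, `search`) are hypothetical and only illustrate how a candidate can satisfy the spec through a Clever Hans shortcut yet fail to generalize.

```python
from typing import Callable, Iterable, Optional

Candidate = Callable[[int], int]
Case = tuple[int, int]  # (input, expected output)

# The "spec": the only cases the search process ever sees.
SPEC_TESTS: list[Case] = [(0, 0), (1, 1), (2, 4)]
# Held-out cases, analogous to a validation set in ML (hypothetical).
HELD_OUT_TESTS: list[Case] = [(3, 9), (-2, 4), (10, 100)]


def double(x: int) -> int:
    # Wrong hypothesis: fails the spec at x = 1.
    return 2 * x


def lookup_table(x: int) -> int:
    # Clever Hans shortcut: memorizes the spec cases and nothing else.
    return {0: 0, 1: 1, 2: 4}.get(x, 0)


def square(x: int) -> int:
    # The behavior the spec author presumably intended.
    return x * x


def pass_rate(candidate: Candidate, cases: list[Case]) -> float:
    """Fraction of cases for which the candidate returns the expected value."""
    passed = sum(1 for x, expected in cases if candidate(x) == expected)
    return passed / len(cases)


def search(candidates: Iterable[Candidate], spec: list[Case]) -> Optional[Candidate]:
    """Stand-in for the agent loop: return the first candidate that passes the spec."""
    for candidate in candidates:
        if pass_rate(candidate, spec) == 1.0:
            return candidate
    return None


chosen = search([double, lookup_table, square], SPEC_TESTS)

if chosen is not None:
    print("chosen:", chosen.__name__)
    print("spec pass rate:", pass_rate(chosen, SPEC_TESTS))
    print("held-out pass rate:", pass_rate(chosen, HELD_OUT_TESTS))
```

The search stops at the first candidate that satisfies the spec, so it never reaches `square`. Scoring the chosen artifact on cases the optimizer never saw is the same remedy ML uses for overfitting.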