Manavmeet Singh

277 posts


@Manavvv31

Exploring AI, business, and internet trends. Sharing what I learn along the way.

Joined March 2026

63 Following, 22 Followers

@DanielNoboaOk Stay strong, President Noboa! Defend Ecuadorian sovereignty without hesitation. Borders are not up for negotiation. 🇪🇨

Español

Several sources have informed us of an incursion across the northern border by Colombian guerrillas, driven by the Petro government.

We will protect our border and our people.

President Petro, devote yourself to improving the lives of your people instead of trying to export problems to neighboring countries.

Español

@deedydas 3.2T and still cooking the competition 🔥

Efficiency > raw size. Great work, Deedy!

English

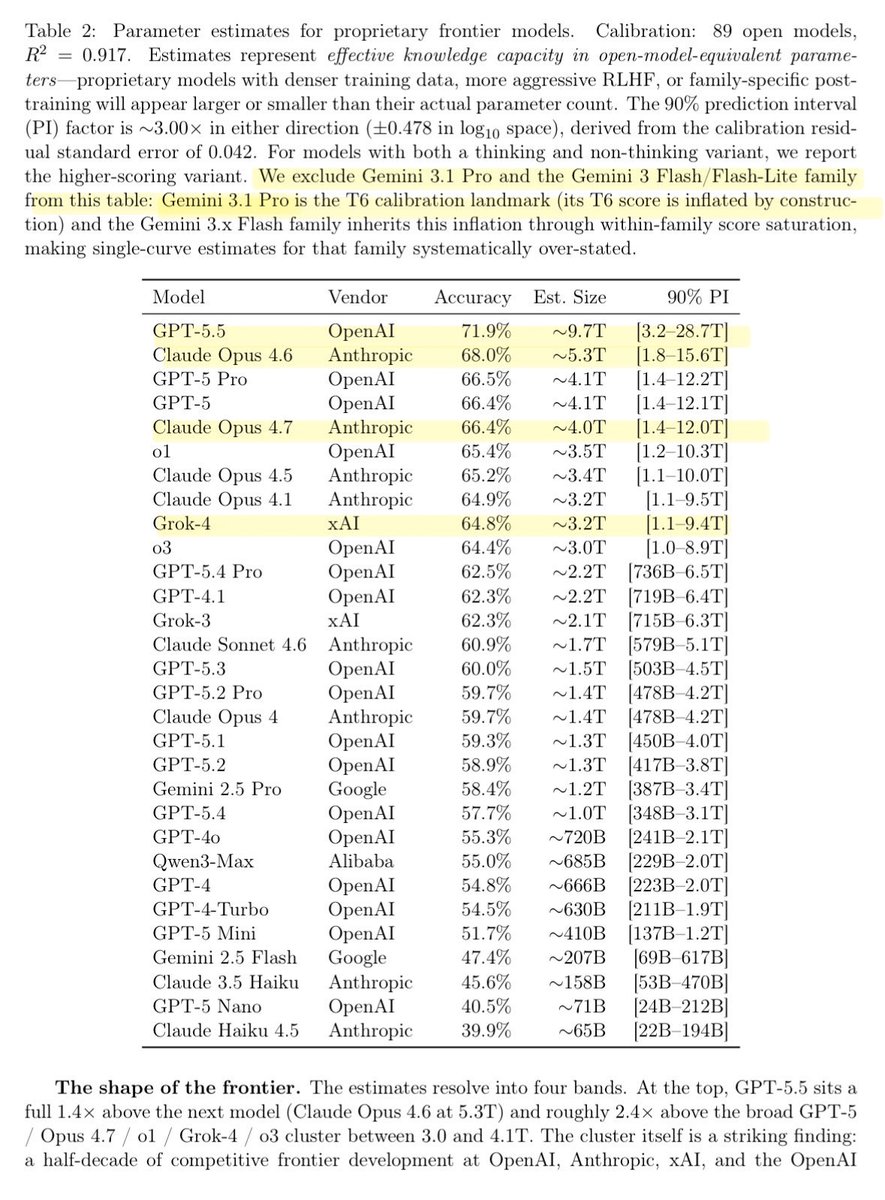

Researchers just estimated the size of all the LLMs by asking them knowledge questions of varying degrees of obscurity!

– GPT 5.5: ~10T params

– Claude Opus 4.x: ~4-5T

– Grok 4: ~3T

The idea here is that factual capacity scales log-linearly with size. The paper shows 7 knowledge tiers, and T7 is essentially ~0% for all models, suggesting there is still significant headroom for pretraining. Gemini 3.1 Pro is likely >10T given it's used as an anchor, but it has no direct estimate.

This means we can infer, to some degree, what different models might cost, as well as their post-training effectiveness (performance on certain non-factual tasks given their size).
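The log-linear idea can be illustrated with a small sketch. It assumes accuracy on a single knowledge tier follows accuracy = a + b·log10(params), fits a and b to anchor models whose sizes are treated as known, then inverts the fit to estimate the size of another model. The anchor sizes and accuracies are made-up numbers, not data from the paper, and the helper names are hypothetical.

```python
import math


def fit_log_linear(params, accuracies):
    """Least-squares fit of accuracy = a + b * log10(params).

    Assumption: accuracy on one fixed knowledge tier grows linearly in
    log10(parameter count), as the post describes.
    """
    xs = [math.log10(p) for p in params]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(accuracies) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, accuracies))
    b = sxy / sxx  # accuracy gained per 10x increase in parameters
    a = mean_y - b * mean_x
    return a, b


def estimate_params(accuracy, a, b):
    """Invert the fit: params = 10 ** ((accuracy - a) / b)."""
    return 10 ** ((accuracy - a) / b)


# Hypothetical anchors: (parameter count, tier accuracy). Not from the paper.
anchor_params = [7e9, 70e9, 700e9]
anchor_acc = [0.20, 0.35, 0.50]

a, b = fit_log_linear(anchor_params, anchor_acc)
est = estimate_params(0.65, a, b)  # a model of unknown size scoring 0.65
print(f"slope per decade: {b:.3f}, estimated params: {est:.2e}")
```

With these hypothetical anchors the slope is 0.15 per tenfold increase in parameters, and a score of 0.65 maps back to roughly 7T parameters. A tier where every model scores near 0%, like T7 in the post, has no usable slope, so it can only signal headroom rather than produce an estimate.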

One of the coolest papers I’ve read of late.

English

@mert Solana is quietly building the global payments stack. Meta + Altitude + Ramp + privacy = inevitable. LFG.

English

@HarmeetKDhillon American tech workers have been displaced for years by this abuse. Thank you for fighting back. I'm sending my tips to IER@usdoj.gov today. Put Americans first in American jobs.

Great post, @HarmeetKDhillon.

English

We. Need. YOU!!! Send your tips on citizenship discrimination in hiring, in PERM listings, etc., to IER at USDOJ dot GOV!

AAGHarmeetDhillon @AAGDhillon

We sued Cloudera yesterday for discriminating against U.S. workers for high-paying tech jobs. We value public input on civil rights violations. If you have tips or complaints about citizenship discrimination in employment, you can email them to: IER@usdoj.gov!

English

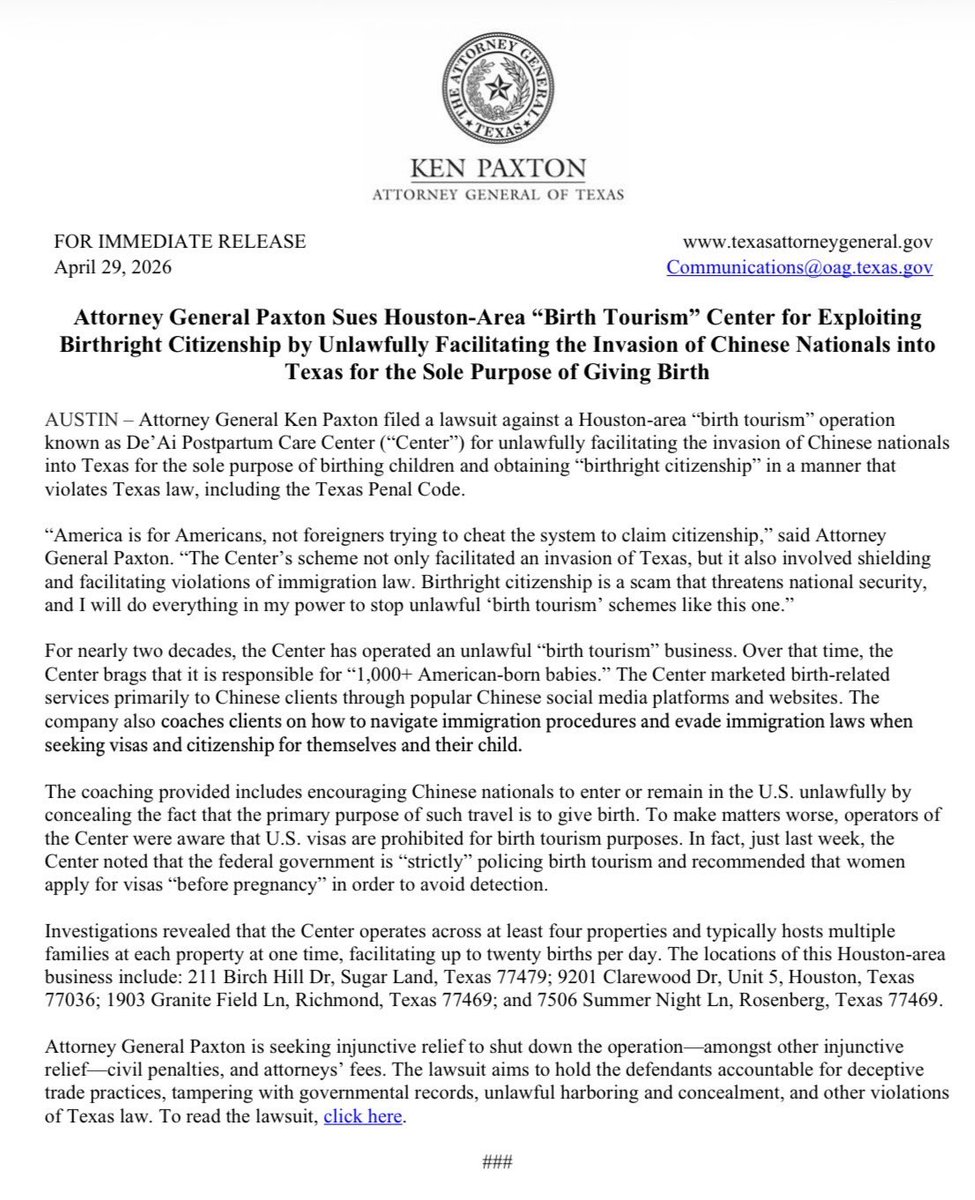

@KenPaxtonTX Finally! End birth tourism and anchor babies. America for Americans. Thank you, AG Paxton! 🇺🇸

English

@HolySmokas Strong take, @HolySmokas.

10th profitable quarter, 41% revenue growth, record originations: this dip looks like a gift for the long game. $50 in 5 years is realistic. Loading up.

English

This might sound crazy, but… $SOFI HAS A GREAT OPPORTUNITY to be a $100+ stock long term. A $50+ stock within 5 years. It comes with risk, but the opportunity and execution are impressive.

Jeremy Lefebvre @HolySmokas

I just woke up. What happened to $SOFI

English

@MikeBenzCyber Elon called it years ago. Microsoft didn’t buy charity; they bought the off-switch for OpenAI’s mission.

English

@SenSanders Congress can't even balance a budget or secure the border. Handing them control over the future of intelligence is how we get China winning while we stagnate.

English

@mattshumer_ This is it: the moment non-tech people become builders. Your mom just skipped years of coding bootcamps. The future is wild.

English

@benjamincowen Wise take. Short-term cheers for 'freedom' often trade institutional credibility for chaos. Markets price trust; lose it, and the real pain comes later.

English

When Gensler left the SEC in January 2025, Bitcoin was at 109k. Today Bitcoin is at 75k.

One major reason the crypto markets have suffered is because market participants started to lose faith in the industry itself.

Gensler's departure essentially opened the floodgates to the grifting age of crypto, where influencers and politicians were launching memecoins and rug-pulling their followers each and every day, without fear of any repercussions. This led to a massive misallocation of capital into useless assets that drained liquidity from the industry.

While people celebrated Gensler leaving, it actually marked a turning point in the industry, with Bitcoin only marginally going higher before entering a bear market.

Now that people celebrate Powell's removal as chair of the Federal Reserve, it makes me think history will repeat itself once again.

People celebrate it in the short-term, but as we look back on this era in a few years, I imagine it will mark a major turning point in credibility at the Fed. If the Fed just becomes another cabinet of the executive branch, it may lead to a lack of trust in the institution itself.

Perhaps many will look back in a few years and realize that markets were better off with Powell than without him.

English

I released LLM 0.32a0 this morning, a major backwards-compatible refactor of my LLM Python library and CLI tool for working with language models. The new changes should help LLM work better with reasoning models and other new frontier capabilities: simonwillison.net/2026/Apr/29/ll…

English

@tszzl Peak goblin mode: solves the unsolvable, then dies in a 10-hour loop over a rounding error.

English

@AnthropicAI Huge leap for AI transparency. Models self-reporting their own backdoors and misalignments? This could make auditing dramatically more reliable. Well done, Anthropic team!

English

In new Anthropic Fellows research, we discuss “introspection adapters”: a tool that allows language models to self-report behaviors they've learned during training, including potential misalignment.

keshav @kshenoy_

Can LLMs simply tell us about unwanted behaviors they’ve picked up in training? We train a single Introspection Adapter (IA) that makes fine-tuned models describe their behaviors. It generalizes to detecting hidden misalignment, backdoors and safeguard removal.

English

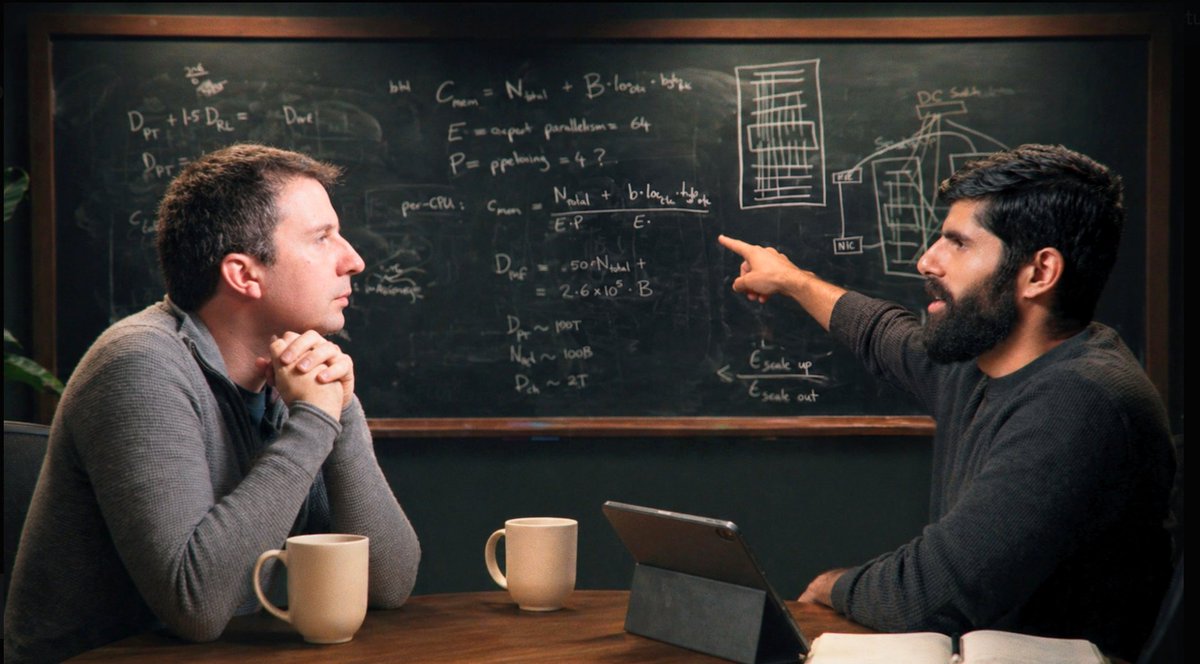

Must-listen: Dwarkesh Patel’s blackboard lecture with Reiner Pope on the raw math of frontier LLMs. @dwarkesh_sp

Equations + API prices + chalk = shocking deductions on what labs actually do:

– Models are ~100x over-trained past Chinchilla (RL economics)

– Memory bandwidth > compute for long context (why 200k stalls)

– Batch size tricks: 6x price for only 2.5x speed

– MoE rack layouts, pipeline limits, convergent evolution w/ crypto

Pure signal on training/serving infra. 3hr masterclass. Watch: dwarkesh.com/p/reiner-popeW… What surprised you most? Drop timestamps.
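To make the "~100x over-trained past Chinchilla" claim concrete, here is a back-of-the-envelope sketch. It assumes the common Chinchilla rule of thumb of roughly 20 training tokens per parameter and the standard approximation of about 6·N·D training FLOPs, and it reads "~100x over-trained" as 100x that tokens-per-parameter ratio. That reading, the constants, and the 100B-parameter model size are assumptions for illustration, not figures from the lecture.

```python
# Rule-of-thumb constants (approximations, not taken from the lecture).
CHINCHILLA_TOKENS_PER_PARAM = 20  # approx. compute-optimal ratio from Hoffmann et al. (2022)
FLOPS_PER_PARAM_TOKEN = 6         # standard ~6 * N * D estimate of training FLOPs


def training_budget(n_params, overtrain_factor=1.0):
    """Return (tokens, flops) for a model trained overtrain_factor x past Chinchilla.

    Assumption: "overtrained by k x" means k times the Chinchilla
    tokens-per-parameter ratio.
    """
    tokens = CHINCHILLA_TOKENS_PER_PARAM * overtrain_factor * n_params
    flops = FLOPS_PER_PARAM_TOKEN * n_params * tokens
    return tokens, flops


n = 100e9  # hypothetical 100B-parameter model
for factor in (1, 100):
    tokens, flops = training_budget(n, factor)
    print(f"{factor:>3}x: {tokens:.1e} tokens, {flops:.1e} FLOPs")
```

Under these assumptions, the hypothetical 100B model goes from about 2e12 tokens and 1.2e24 FLOPs at the Chinchilla point to about 2e14 tokens and 1.2e26 FLOPs at 100x. Training compute grows 100x while the parameter count, and therefore the per-token inference cost, stays the same.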

English