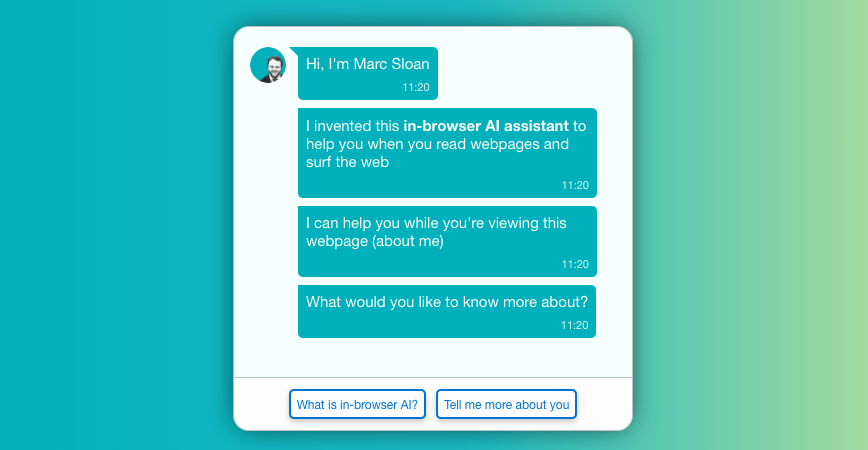

Marc Sloan

@MarcCSloan

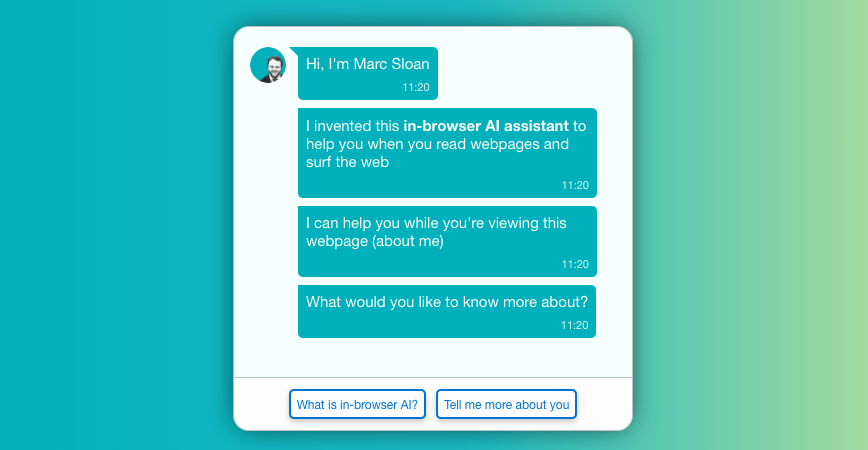

Product Manager leading Dev Mode design to code at @figma. Founder of @ContextScout and @digit_allies

Generalist web agents may get here sooner than we thought: introducing SeeAct, a multimodal web agent built on GPT-4V(ision).

What's this all about?

> Back in June 2023, when we released Mind2Web (osu-nlp-group.github.io/Mind2Web/) and envisioned the generalist web agent, a language agent that can work out of the box on any given website, my projection was that it would take at least several years to see such an agent that is anywhere near usable in practice.

> Why wouldn't I? The most powerful LLM at the time (perhaps still is today), GPT-4, was pretty terrible at this: its end-to-end success rate was around 2% (!!). The HTML of modern websites is too long and noisy for LLMs. It's like finding a needle in a haystack. And a long-horizon task can take 10+ actions, so an LLM needs to successfully find 10+ "needles" in a row (!!!) to complete a task.

What's changed in just a few months?

> Large multimodal models. The end of 2023 marked a major milestone for LMMs, with GPT-4V, Gemini, and many good OSS LMMs released.

> Multimodal web agents. Websites are designed to be visually rendered and consumed. Visuals are much cleaner and more intuitive than HTML, and 10x more efficient in terms of token counts. Plus, a pretty unique property of websites is that we have the correspondences between visual elements and HTML code! Such perfectly aligned multimodality is a gold mine for modeling.

> Online evaluation. The final piece of the secret recipe is online evaluation on live websites. Mind2Web initially only supported offline eval on cached websites. We developed a new tool to support running and evaluating web agents on live websites. Both LLMs and LMMs get a big boost, because now they no longer have to follow the reference plan of the offline eval exactly and are free to explore alternative plans to achieve the same goal.

SeeAct

> SeeAct is a generalist web agent built on LMMs like GPT-4V.
Specifically, given a task on any website (e.g., “Compare iPhone 15 Pro Max with iPhone 13 Pro Max” on the Apple homepage), the agent first performs action generation to produce a textual description of the action at each step towards completing the task (e.g., “Navigate to the iPhone category”), and then performs action grounding to identify the corresponding HTML element (e.g., “[button] iPhone”) and operation (e.g., CLICK, TYPE, or SELECT) on the webpage.

Main results

> SeeAct can successfully complete up to 50% of tasks on live websites, substantially outperforming GPT-4 (20%) and FLAN-T5 (18%), if oracle action grounding is provided.

> However, grounding is still a major challenge. It turns out that GPT-4V can often accurately describe in text what action should be taken, but has trouble grounding the action to the exact HTML element and operation on the webpage. Existing grounding strategies like set-of-mark prompting turn out not to be very effective for web agents. Our best grounding strategy leverages the correspondences between visuals and HTML.

> SeeAct w/ GPT-4V shows many interesting capabilities such as speculative planning, world knowledge (e.g., airport codes), and some sort of "world model" (for websites at least), in that it can correctly predict the state transitions on a website (e.g., what would happen if I click this button).

Fun fact

Initially we were hoping to show that even GPT-4V would still be insufficient for generalist web agents and we may still need fine-tuning, but we kept getting blown away by its incredible capability as a web agent. Such pleasant surprises are why I enjoy doing AI research so much these days. I also look forward to testing Gemini Ultra and seeing whether its strong performance on MMMU would transfer.

Conclusion

Practically useful web agents could be coming soon. Buckle up and start thinking about what new applications will be enabled.
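For readers who want to picture the two-stage loop described under SeeAct above (action generation, then action grounding), here is a minimal Python sketch of a single step. It is not the SeeAct code: every name in it (`Candidate`, `GroundedAction`, `toy_generate_action`, `toy_ground_action`, `step`) is hypothetical, the generator is a hard-coded stand-in for an LMM call, and the word-overlap grounder is a toy substitute rather than the visual-plus-HTML grounding strategy mentioned in the post.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Operations named in the post.
OPERATIONS = ("CLICK", "TYPE", "SELECT")


@dataclass
class Candidate:
    """One interactive HTML element on the page, e.g. "[button] iPhone" (hypothetical type)."""
    tag: str
    text: str


@dataclass
class GroundedAction:
    """The grounded result: which element to act on and how (hypothetical type)."""
    element: Candidate
    operation: str
    value: str = ""


def toy_generate_action(task: str, history: List[str]) -> str:
    # ASSUMPTION: stand-in for the LMM call that describes the next action in text.
    return "Navigate to the iPhone category"


def toy_ground_action(description: str, candidates: List[Candidate]) -> GroundedAction:
    # ASSUMPTION: toy grounding by word overlap, not the strategy used in SeeAct.
    words = set(description.lower().split())
    best = max(candidates, key=lambda c: len(words & set(c.text.lower().split())))
    return GroundedAction(element=best, operation="CLICK")


def step(
    task: str,
    history: List[str],
    candidates: List[Candidate],
    generate: Callable[[str, List[str]], str] = toy_generate_action,
    ground: Callable[[str, List[Candidate]], GroundedAction] = toy_ground_action,
) -> Tuple[str, GroundedAction]:
    """Run one step: describe the action in text, then ground it to an element and operation."""
    description = generate(task, history)
    action = ground(description, candidates)
    if action.operation not in OPERATIONS:
        raise ValueError(f"unsupported operation: {action.operation}")
    return description, action


if __name__ == "__main__":
    page = [Candidate("button", "Mac"), Candidate("button", "iPhone"), Candidate("a", "Support")]
    description, action = step("Compare iPhone 15 Pro Max with iPhone 13 Pro Max", [], page)
    print(description)
    print(f"[{action.element.tag}] {action.element.text} -> {action.operation}")
```

Keeping generation and grounding as separate callables mirrors the split in the results above: the textual description can be right even when the grounding step picks the wrong element, so each stage can be swapped or evaluated on its own.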
📌Website: osu-nlp-group.github.io/SeeAct/

📌Paper: github.com/OSU-NLP-Group/…

📌Code: github.com/OSU-NLP-Group/…

Work led by my amazing students @boyuan__zheng @BoyuGouNLP from @osunlp, joint with Jihyung Kil and @hhsun1. Hire them for internships!