Marc Ratkovic

94 posts

@MarcRatkovic

Professor. Statistical methods in political science, particularly machine learning for causal inference. Really getting into large language models.

Joined February 2019

67 Following, 165 Followers

Pinned Tweet

I'm hiring!!! Grad students/Pre-docs/Post-docs. LLMs for certain. Causal inference is also likely. We're building a top-notch, supportive, kinetic, and downright awesome community @GESSuniMannheim Formal announcement coming, but email me MarcRatkovic@gmail.com w any Q's.

Oh wow. Super cool. Allowing different vertices from Chain of Thought to interact and cross over... This is getting awfully close to a thinking process...

John Nay@johnjnay

Graphs of Thoughts for Solving Elaborate Problems w/ LLMs - Models LLM generations as arbitrary graph - "LLM thoughts" are vertices - Edges are dependencies between - Can combine & enhance LLM thoughts using feedback loops - SoTA on a variety of tasks arxiv.org/abs/2308.09687

I've taken to talking about LLMs as "innovating" instead of "intelligent" or "conscious." "Innovating"=doing something I didn't train/tell them to, no muss no fuss. Hopefully this paper can give us the right words to talk about consciousness! arxiv.org/abs/2308.08708

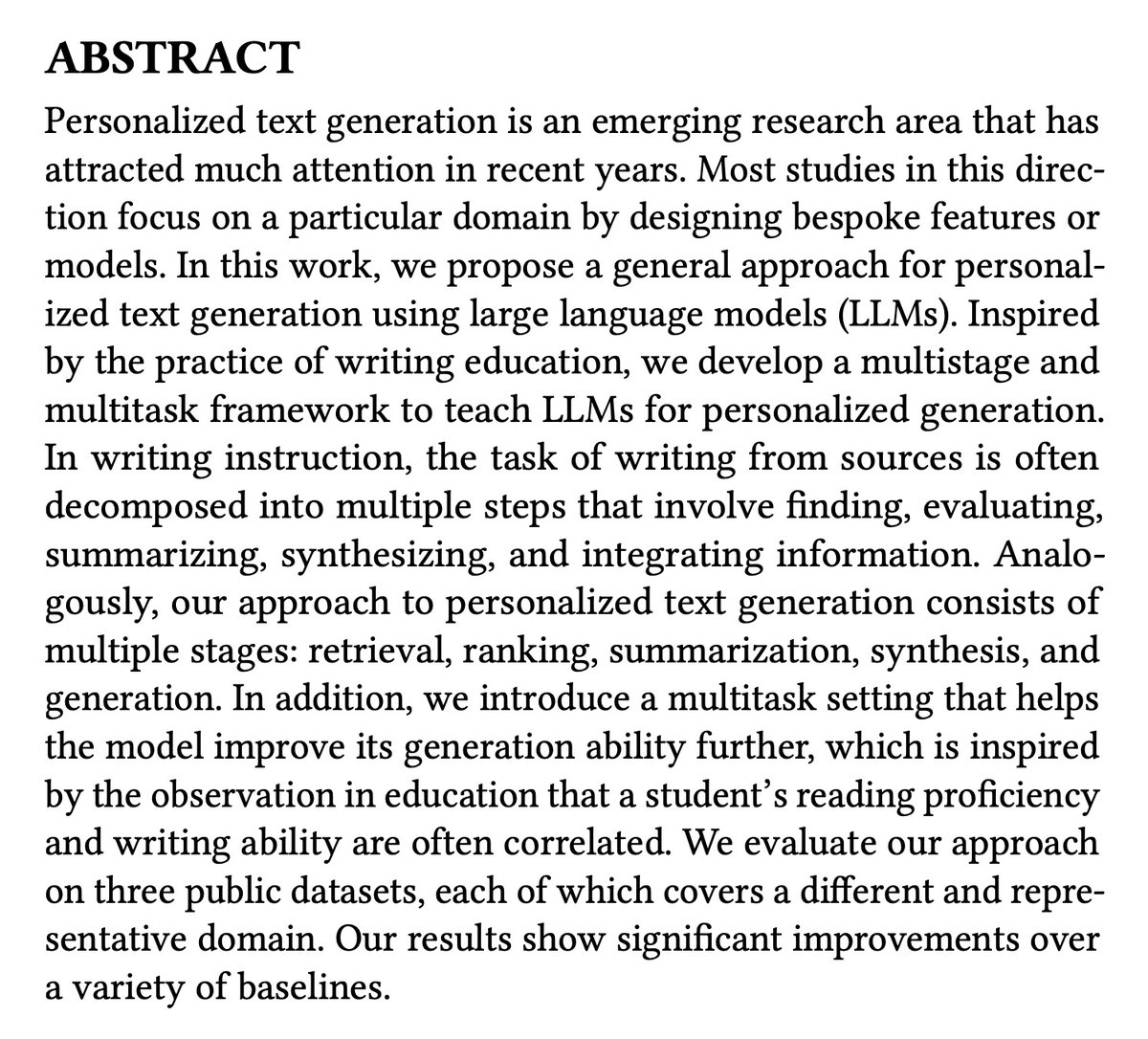

Writing is a process--training an LLM inspired by writing pedagogy. The idea that LLMs learn writing the same way we do is a stretch, but there _must_ be quite a bit that practical educators can add. huggingface.co/papers/2308.08…

Marc Ratkovic retweeted

Nature Comms paper: Subtle adversarial image manipulations influence both human and machine perception! We show that adversarial attacks against computer vision models also transfer (weakly) to humans, even when the attack magnitude is small. nature.com/articles/s4146…

Marc Ratkovic retweeted

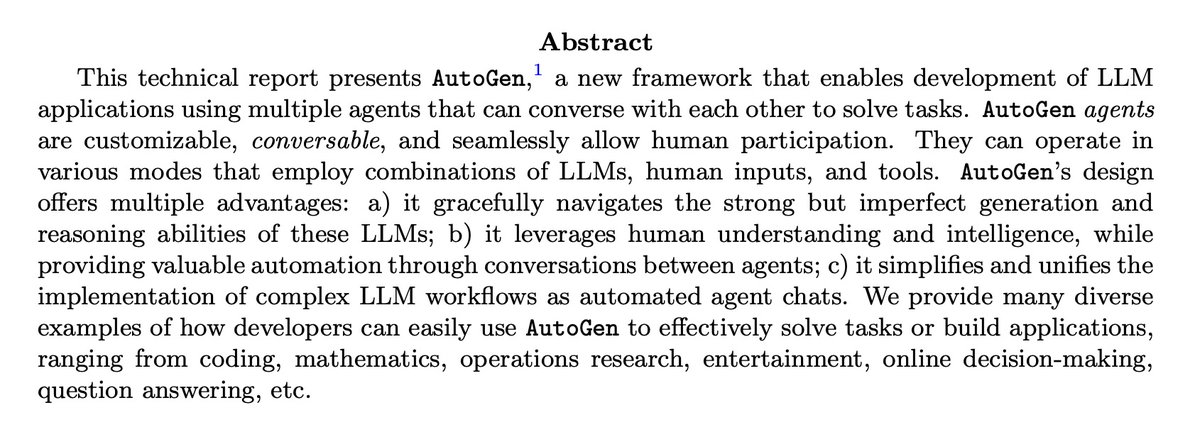

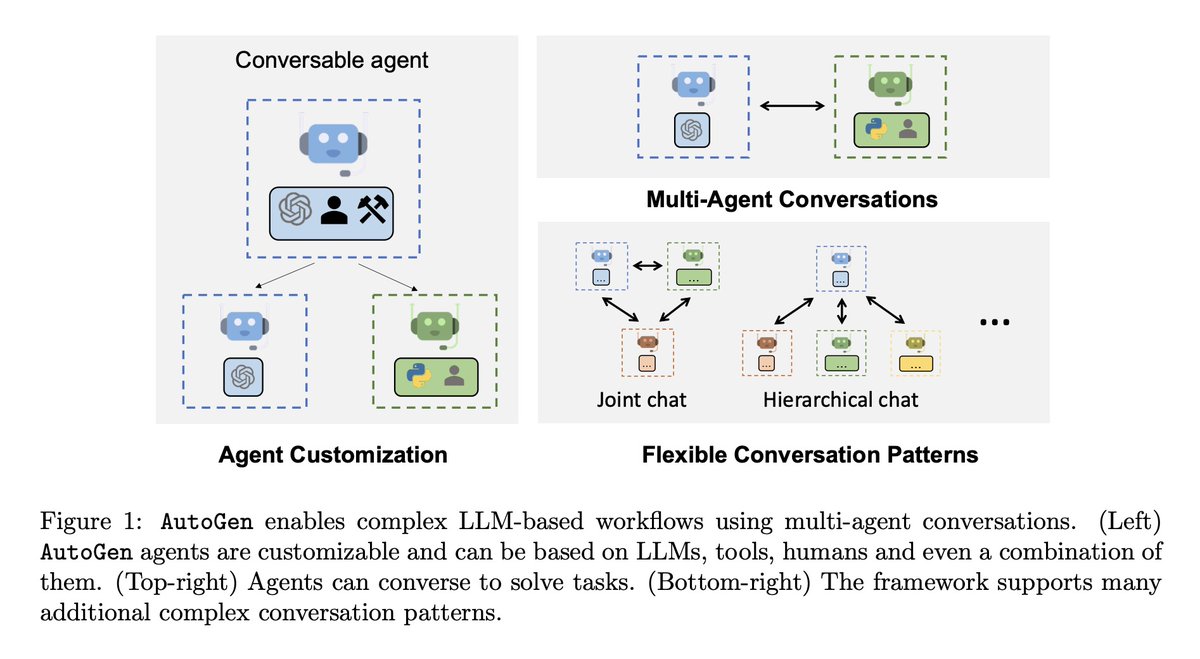

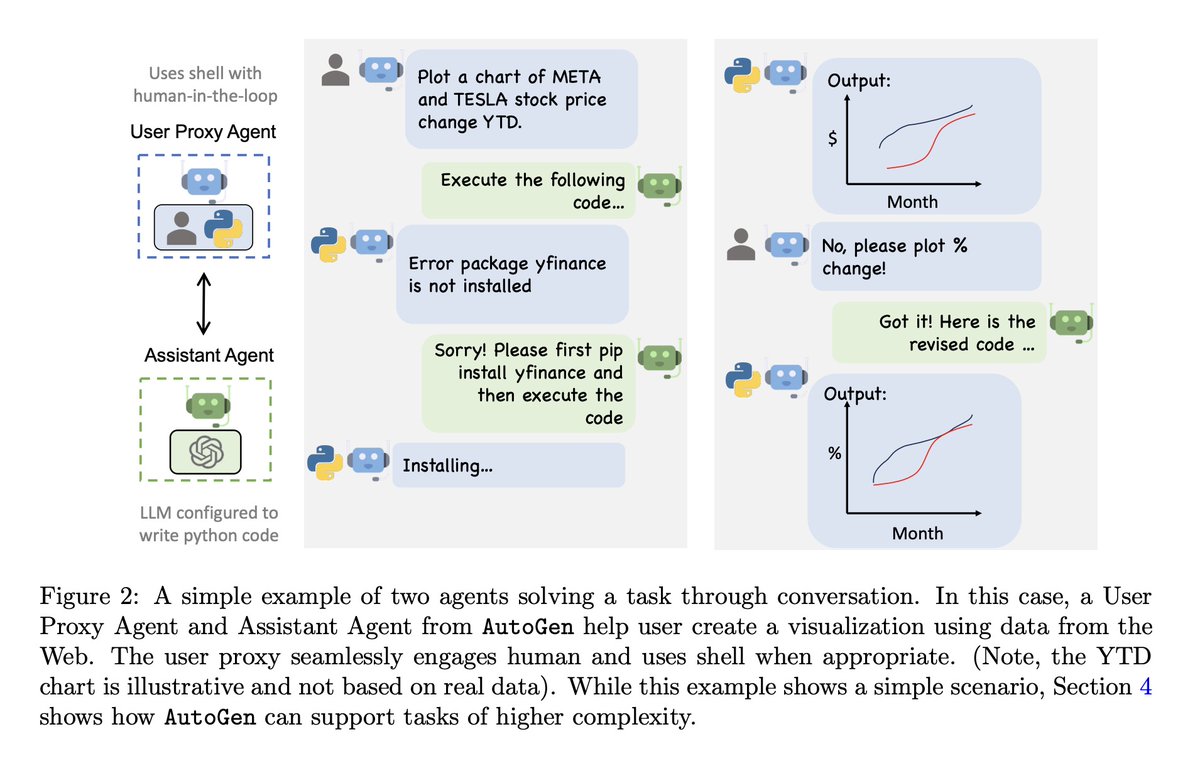

[CL] AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation Framework

Q Wu, G Bansal, J Zhang, Y Wu, S Zhang, E Zhu, B Li, L Jiang, X Zhang, C Wang [Pennsylvania State University & Microsoft & University of Washington] (2023)

arxiv.org/abs/2308.08155

@vithursant19 Cool stuff! And I like the idea at an intuitive level--there's strong pathways that need to be learned then more complex ones can be followed. Is it possible to put an L1 on the neurons? Or like a LARS algorithm?

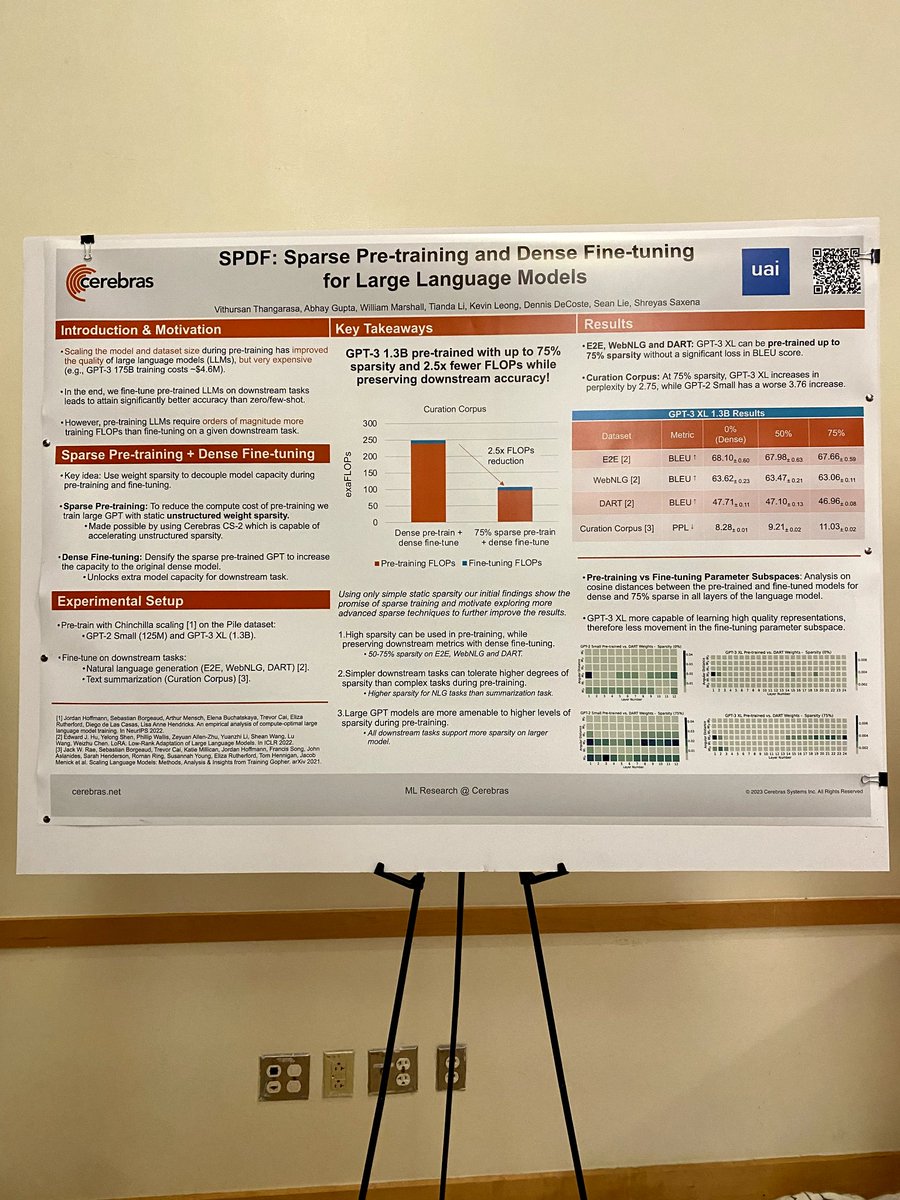

Also, check out our more recent follow-up work on Variable SPDF that was presented at #ICML2023, where we show how a 75% sparse 6.7B Cerebras-GPT model can do as well as its dense counterpart!

cerebras.net/blog/accelerat…

Excited and grateful to present our paper on "Sparse Pretraining and Dense Fine-tuning for LLMs" at @UncertaintyInAI! Engaging in deep discussions with brilliant researchers has been an enriching experience. Look forward to sharing insights and learning from others in the field!

Marc Ratkovic retweeted

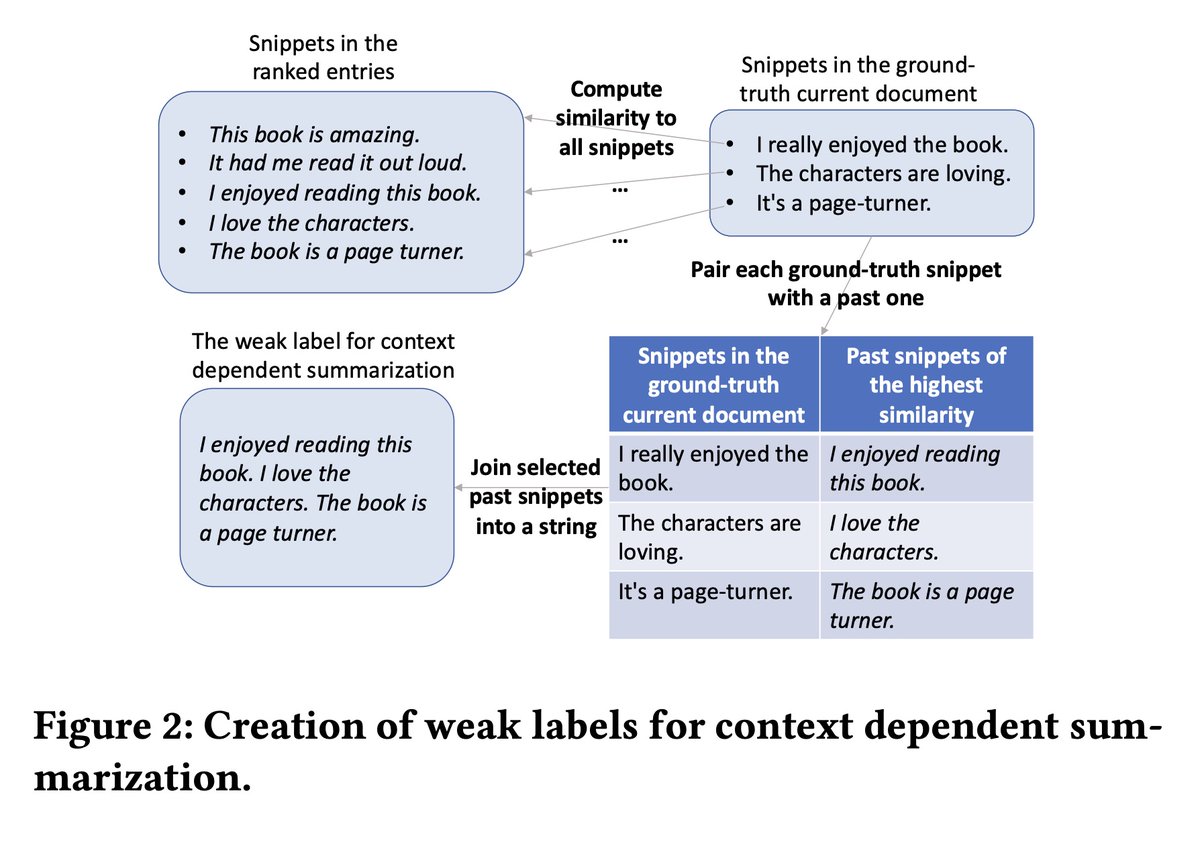

[CL] Teach LLMs to Personalize -- An Approach inspired by Writing Education

C Li, M Zhang, Q Mei, Y Wang, S A Hombaiah, Y Liang, M Bendersky [Google] (2023)

arxiv.org/abs/2308.07968

Marc Ratkovic retweeted

There're few who can deliver both great AI research and charismatic talks. OpenAI Chief Scientist @ilyasut is one of them.

I watched Ilya's lecture at Simons Institute, where he delved into why unsupervised learning works through the lens of compression.

Sharing my notes:

- Kolmogorov compressor is the theoretical shortest-length program that produces a dataset. SGD is a practical approximation of the Kolmogorov search that finds an implicit program embedded in the weights of a soft computer, i.e. big Transformers.

- Unsupervised learning is about computing the conditional Kolmogorov complexity of a target dataset given an unlabelled corpus, i.e. K(Y|X)

- Theory tells us that optimizing for K(X, Y), the joint complexity, is as good as K(Y|X). So simply throw all data into the mix, and "just compress everything".

- Joint compression is maximum likelihood over the giant concatenated dataset.

- Ilya cites iGPT, Chen et al. 2020, to illustrate the ideas. iGPT is an image compressor that learns to predict the next pixel using a 1D sequence model.

This is a phenomenal lecture, very accessible, and sometimes quite entertaining.

YouTube: youtube.com/watch?v=AKMuA_…

Lecture page: simons.berkeley.edu/talks/ilya-sut…
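The notes above equate joint compression with maximum likelihood over the concatenated dataset. A minimal sketch of that link, assuming a toy i.i.d. symbol model rather than a Transformer: the ideal code length of a dataset under a model is its negative log2-likelihood, so the model with the highest likelihood is also the one that compresses best. The function names and toy strings are illustrative assumptions, not from the lecture.

```python
import math
from collections import Counter


def code_length_bits(data, probs):
    """Ideal (Shannon) code length of `data` under `probs`, in bits.

    This equals the negative log2-likelihood of the data under the model.
    """
    return -sum(math.log2(probs[symbol]) for symbol in data)


def fit_max_likelihood(data):
    """Maximum-likelihood symbol frequencies for an i.i.d. model."""
    counts = Counter(data)
    total = len(data)
    return {symbol: n / total for symbol, n in counts.items()}


# Toy stand-ins for an unlabelled corpus X and a target dataset Y.
x = "aaaaaaab"
y = "aaab"
joint = x + y  # "just compress everything": one concatenated dataset

mle = fit_max_likelihood(joint)             # model fitted by maximum likelihood
uniform = {symbol: 0.5 for symbol in "ab"}  # baseline: 1 bit per symbol

bits_mle = code_length_bits(joint, mle)
bits_uniform = code_length_bits(joint, uniform)

print(f"uniform model: {bits_uniform:.2f} bits")
print(f"max-likelihood model: {bits_mle:.2f} bits")
```

Within the i.i.d. family, no choice of symbol probabilities gives a shorter code for `joint` than the maximum-likelihood fit, which is the sense in which fitting by likelihood and compressing coincide here.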

Marc Ratkovic retweeted

Vector Databases for Data Science with Weaviate in Python twitter.com/i/broadcasts/1…

Original Chain of Thought paper from Wei et al 2022 openreview.net/forum?id=_VjQl…

Chain of Thought allows intermediate reasoning. Math problems can be solved better if GPT4 checks them with Python code. Cool stuff. huggingface.co/papers/2308.07…
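The idea of checking a model's math with code can be sketched as follows. This is a minimal illustration, not the paper's method: the problem, the candidate answers, and `verify_linear_solution` are all hypothetical stand-ins for whatever a model would produce.

```python
from fractions import Fraction


def verify_linear_solution(a, b, c, candidate):
    """Return True if `candidate` satisfies a*x + b == c exactly.

    Exact rational arithmetic avoids floating-point false negatives.
    """
    return a * Fraction(candidate) + b == c


# Hypothetical model answers to "solve 3x + 5 = 20".
proposed_answers = ["5", "15/2"]

# Keep only the answers that survive the code-based check.
checked = {ans: verify_linear_solution(3, 5, 20, ans) for ans in proposed_answers}
verified = [ans for ans, ok in checked.items() if ok]

print(checked)
print(verified)
```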

Good to know!! Rule of thumb: train on 4 epochs, so reuse training data. May depend on the specifics of the model; there's a scaling law, and more thoughtful details are in the paper.

arxiv.org/abs/2305.16264

Doing more with less! A small model trained w/ wide range of prompts can outperform larger models (GPT3, but not 4). For constrained tasks, smaller-with-a-wider-variety-of-high-quality-training-types can hit the same performance on a single task. arxiv.org/abs/2305.16264

Marc Ratkovic retweeted

❗️ Researchers often rely on third-party entities to field surveys. Therefore, it is important to verify the sincerity of their conduct.

In a project funded by the University of #Mannheim, @fraukolos & colleagues examined ways to detect falsified and fabricated interviews.

(1/5)

Using the right data is always better than using more data. But using more right data is even better.

Niklas Muennighoff@Muennighoff

How to instruction tune Code LLMs w/o #GPT4 data? Releasing 🐙🤖OctoCoder & OctoGeeX: 46.2 on HumanEval🌟SoTA🌟of commercial LLMs 🐙📚CommitPack: 4TB of Git Commits 🐙🎒HumanEvalPack: HumanEval extended to 3 tasks & 6 lang 📜arxiv.org/abs/2308.07124 💻github.com/bigcode-projec… 1/9

Marc Ratkovic retweeted

For all PhD students in small labs: find all possible ways to collaborate with well-known open research groups like @AiEleuther @laion_ai @BigscienceW @BigCodeProject; apply to every single fellowship and look for connections. It’s not optional if you want to have a career.

The quality of training data matters! A lot! And feeding these models well-curated data (real or synthetic) _really_ helps. Also: pre-training loss is a great predictor of accuracy. arxiv.org/pdf/2308.01825…
