Marcin Sendera

@MarcinSendera

Ph.D. student in deep learning @JagiellonskiUni. Teaching machines how to learn. Also working on enhancing existing generative models.

@prz_chojecki I'm happy to give you some time to check that the error I've flagged is real. But extremely bad behavior to claim to have solved this problem, given that neither you nor anyone else has checked the solution's correctness, and that someone has pointed to an error.

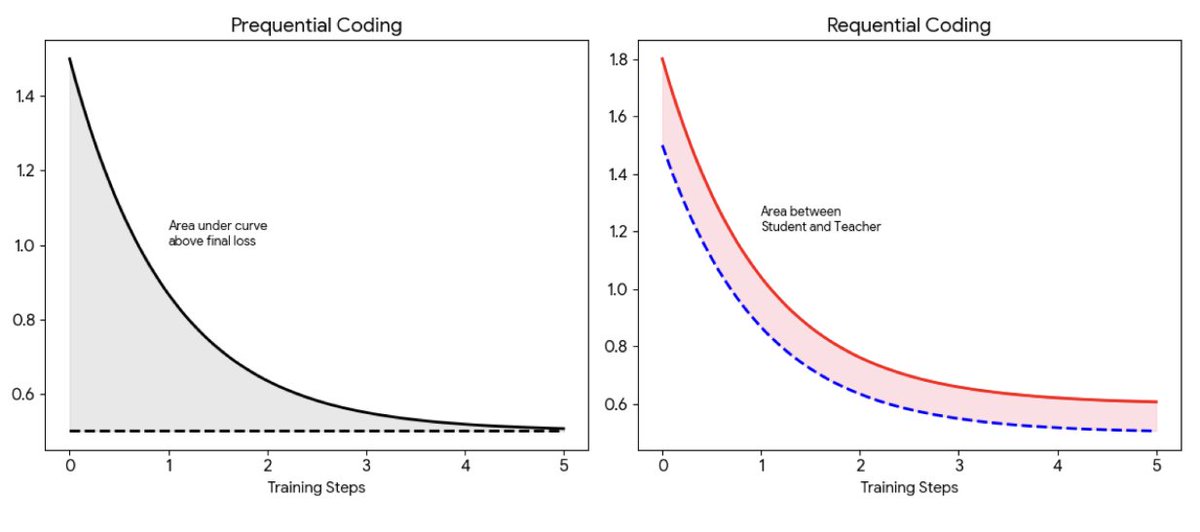

(1/3) Excited to give an oral presentation of PAPL at #ICLR2026! Camera-ready: arxiv.org/abs/2509.23405. We ask: Why do we train masked diffusion LMs to match reference dynamics that unmask tokens uniformly at random, when we don't sample that way at inference?

Today we're launching the ARC-AGI-3 Toolkit. Your agents can now interact with environments at 2,000 FPS, locally. We're open sourcing the environment engine, 3 human-verified games (AI scores <5%), and human baseline scores. ARC-AGI-3 launches March 25, 2026.

1/🧵 We are very excited to release our new paper! From Entropy to Epiplexity: Rethinking Information for Computationally Bounded Intelligence arxiv.org/abs/2601.03220 with amazing team @ShikaiQiu @yidingjiang @Pavel_Izmailov @zicokolter @andrewgwils

The end of 2025 marks the end of Hugo Larochelle's term as (Founding co-) Editor-in-chief of TMLR. It is an understatement to say that he was indispensable to making TMLR what it is today. Huge thanks to @hugo_larochelle for everything he's done!

Introducing the Mistral 3 family of models: Frontier intelligence at all sizes. Apache 2.0. Details in 🧵