Marcus van der Erve✨

238.5K posts

@MarcusErve

Applied Physics (B.Eng), PhD. Non-affiliated researcher testing morphology-sensitive extensions to metric analysis across cosmology, AI, complex systems.

What if the whole LLM thing is a false start? What if the flaws are inherent, systemic problems, and the compounding of hallucinations/errors can't be sorted out? What if the capex build-out is one of the biggest misallocations of capital ever? Then what? bloomberg.com/news/newslette…

I am increasingly coming to this conclusion: there are NO SIGNS that the problems with hallucinations are getting solved. If anything, they are now spreading to coding and to the use of agents, where they are hidden and could lead to serious problems down the road. Therefore we can't really scale LLMs. LLMs are very useful tools, but you need to know their limitations. Most of the investment in LLMs today assumes these limitations away.

Correct. People seem to have the notion that once AGI is achieved, advances in intelligence will stop. That is very foolish. Even among humans, there's a vast difference in intelligence between people of below-average intelligence and geniuses. ASI will also have levels as it advances.

Sam Altman says today's models can already help scientists make small discoveries, but the next class of models will help produce the most important discovery of a researcher's decade, or even their career: "soon, one person with 100x GPUs will do the work of an entire software team."

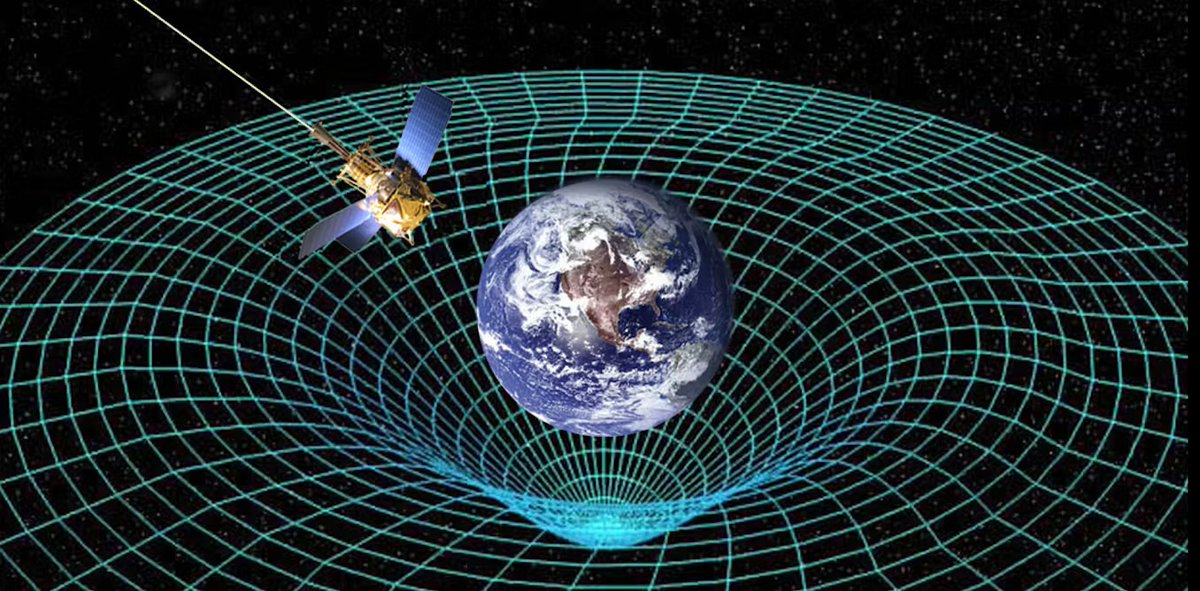

Nice reflection on ontology in physics, with a clear clash of different branches of the wavefunction at the end.