Massimo Bardetti

857 posts

@MassimoBardetti

After many twists and turns, I found my way home. CTO at https://t.co/uu1wd71Fbk, working on frontier language models based on cognitive science research.

RIP fine-tuning ☠️

This new Stanford paper just killed it. It’s called 'Agentic Context Engineering (ACE)' and it proves you can make models smarter without touching a single weight.

Instead of retraining, ACE evolves the context itself. The model writes, reflects, and edits its own prompt over and over until it becomes a self-improving system. Think of it like the model keeping a growing notebook of what works. Each failure becomes a strategy. Each success becomes a rule.

The results are absurd: +10.6% better than GPT-4–powered agents on AppWorld. +8.6% on finance reasoning. 86.9% lower cost and latency. No labels. Just feedback.

Everyone’s been obsessed with “short, clean” prompts. ACE flips that. It builds long, detailed evolving playbooks that never forget. And it works because LLMs don’t want simplicity, they want context density.

If this scales, the next generation of AI won’t be “fine-tuned.” It’ll be self-tuned. We’re entering the era of living prompts.

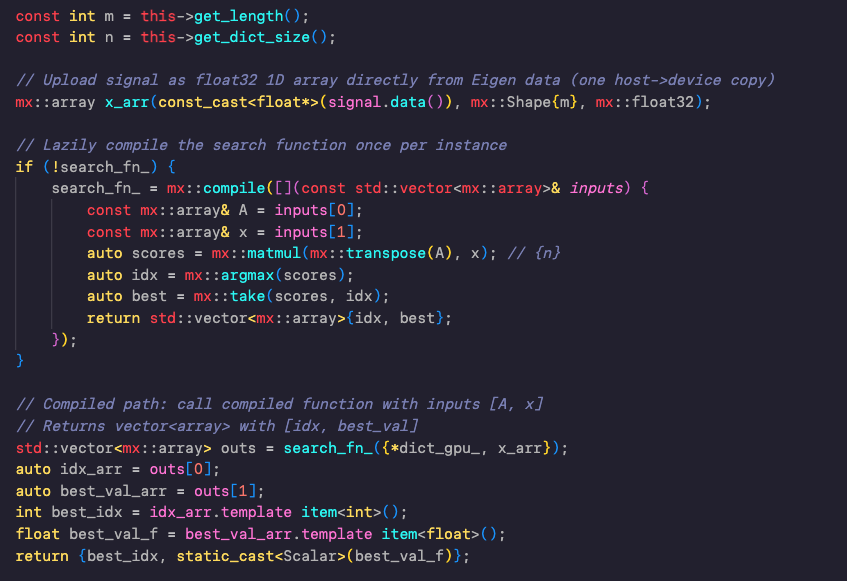

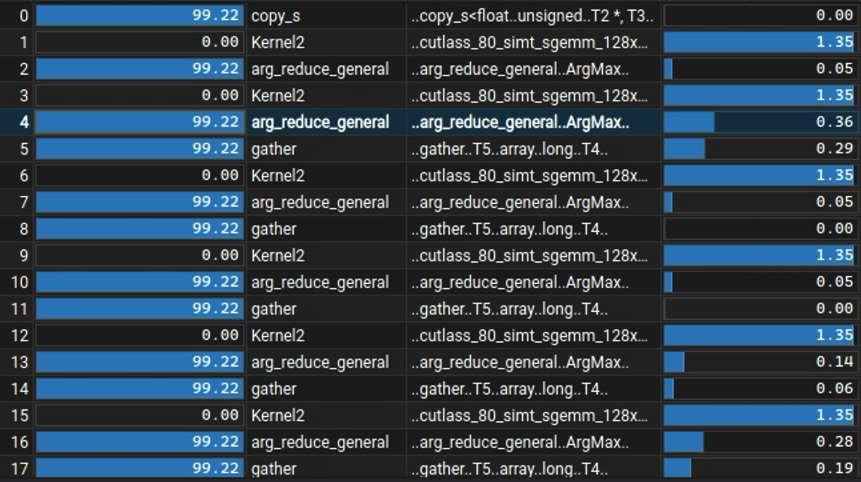

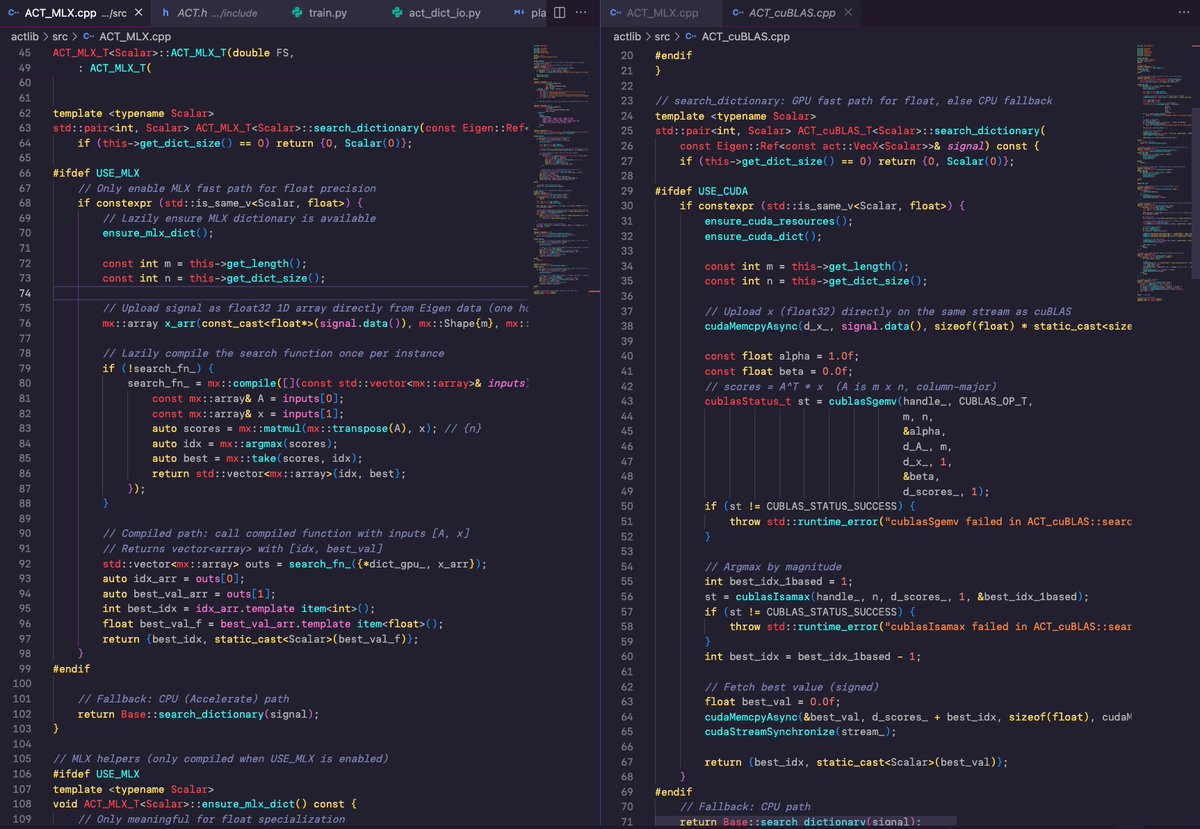
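To make the "growing notebook" idea from the ACE post concrete, here is a minimal toy sketch of a context that evolves from feedback. It is not the paper's implementation: `evolve_playbook`, `toy_attempt`, and `toy_reflect` are hypothetical names, and the two toy functions are plain Python stand-ins for what would be model calls.

```python
from typing import Callable, List, Optional, Tuple


def evolve_playbook(
    tasks: List[str],
    attempt: Callable[[str, str], Tuple[str, bool]],
    reflect: Callable[[str, str, bool], Optional[str]],
) -> List[str]:
    """Grow a list of notes (the 'playbook') from task feedback.

    Both callables are hypothetical placeholders for model calls:
    attempt(task, context) returns (output, succeeded) and
    reflect(task, output, succeeded) returns a new note or None.
    """
    playbook: List[str] = []
    for task in tasks:
        context = "\n".join(playbook)          # the evolving prompt context
        output, succeeded = attempt(task, context)
        note = reflect(task, output, succeeded)
        if note is not None and note not in playbook:
            playbook.append(note)              # entries are only ever added
    return playbook


def toy_attempt(task: str, context: str) -> Tuple[str, bool]:
    # Toy stand-in: a task succeeds only if a note about it already exists.
    succeeded = f"rule:{task}" in context
    return ("done" if succeeded else "failed"), succeeded


def toy_reflect(task: str, output: str, succeeded: bool) -> Optional[str]:
    # Toy stand-in: turn each failure into a note for next time.
    return None if succeeded else f"rule:{task}"


if __name__ == "__main__":
    print(evolve_playbook(["a", "b", "a"], toy_attempt, toy_reflect))
```

No weights change anywhere in this loop; only the text handed to `attempt` grows, which is the point the post is making.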

The Apple MLX library is very handy! It has Apple Metal and CUDA backends, and can be easily cross-compiled on macOS and Linux. Here is my implementation of a matching pursuit dictionary search, a greedy algorithm that repeatedly takes the inner product of a source signal with a multidimensional matrix of atoms and picks the best-matching atom. The code compiled effortlessly on my MacBook Pro M1 Max and on an Ubuntu Linux Intel i9 machine with an NVIDIA RTX 4090 GPU, with the GPU branch fully working. I tested it successfully on a dictionary of 600K atoms (1.1GB in memory).
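For readers unfamiliar with matching pursuit, the greedy search can be sketched as follows. This is a rough illustration and not the implementation mentioned in the post: it uses NumPy as a stand-in for MLX, the name `matching_pursuit` and its parameters are hypothetical, and it assumes each atom (one row of the dictionary) has unit norm.

```python
import numpy as np


def matching_pursuit(signal, atoms, n_iter=10, tol=1e-6):
    """Greedy matching pursuit (illustrative sketch).

    atoms: array of shape (n_atoms, signal_len); rows assumed unit-norm.
    Returns the list of (atom_index, coefficient) picks and the residual.
    """
    residual = np.array(signal, dtype=np.float64)  # working copy of the signal
    picks = []
    for _ in range(n_iter):
        scores = atoms @ residual                  # inner product with every atom
        best = int(np.argmax(np.abs(scores)))      # strongest correlation wins
        coeff = float(scores[best])
        if abs(coeff) < tol:                       # nothing left to explain
            break
        residual -= coeff * atoms[best]            # remove that atom's contribution
        picks.append((best, coeff))
    return picks, residual


if __name__ == "__main__":
    dictionary = np.eye(4)                         # trivial orthonormal dictionary
    picks, residual = matching_pursuit([3.0, 0.0, -2.0, 0.0], dictionary)
    print(picks, residual)
```

The single matrix-vector product per iteration dominates the cost, which is why a large dictionary such as the 600K-atom one benefits from running on a GPU.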