Mathieu Dagréou

379 posts

@Mat_Dag

Ph.D. student at @Inria_Saclay working on Optimization and Machine Learning @matdag.bsky.social

@jasondeanlee @SebastienBubeck @tomgoldsteincs @zicokolter @atalwalkar This is the third, last, and best paper from my PhD. By some metrics, an ML PhD student who writes just three conference papers is "unproductive." But I wouldn't have had it any other way 😉 !

Learning rate schedules seem mysterious? Turns out that their behaviour can be described with a bound from *convex, nonsmooth* optimization. Short thread on our latest paper 🚇 arxiv.org/abs/2501.18965

The sudden loss drop when annealing the learning rate at the end of a WSD (warmup-stable-decay) schedule can be explained without relying on non-convexity or even smoothness: a new paper shows that it can be precisely predicted by theory in the convex, non-smooth setting! 1/2

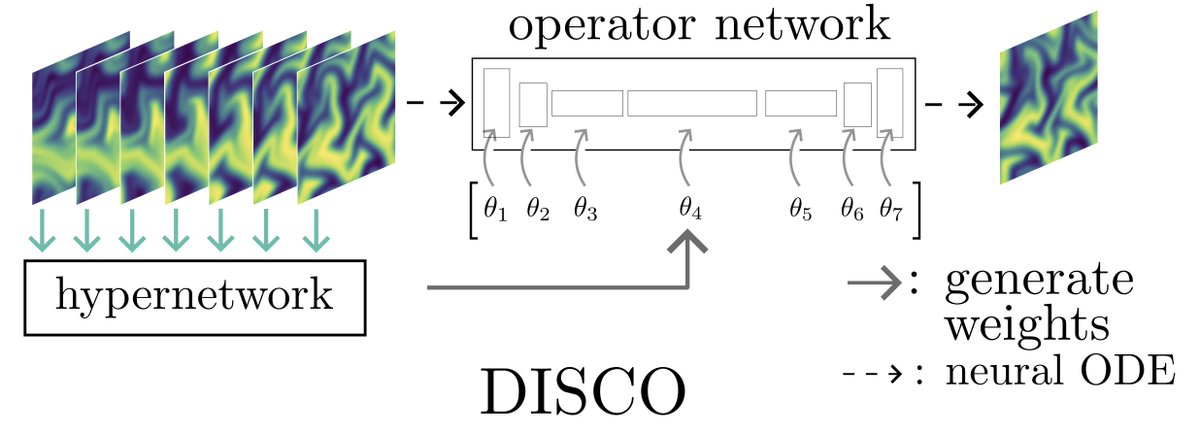
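For readers who haven't met WSD before, here is a minimal sketch of what such a schedule might look like. It assumes a linear warmup, a constant plateau, and a linear decay toward zero; the function name `wsd_lr` and all of its parameters are hypothetical and not taken from the paper, which may use a different cooldown shape.

```python
def wsd_lr(step, total_steps, base_lr, warmup_steps, decay_steps):
    """Learning rate at a 0-indexed `step` of a warmup-stable-decay schedule.

    Assumed shape (illustrative only): linear warmup, constant plateau,
    then linear decay that reaches base_lr / decay_steps on the last step.
    """
    decay_start = total_steps - decay_steps
    if step < warmup_steps:
        # Warmup: ramp linearly up to base_lr over the first warmup_steps steps.
        return base_lr * (step + 1) / warmup_steps
    if step < decay_start:
        # Stable phase: hold the learning rate constant.
        return base_lr
    # Decay (cooldown): anneal linearly over the last decay_steps steps.
    return base_lr * (total_steps - step) / decay_steps


if __name__ == "__main__":
    # Print the schedule at a few steps of a hypothetical 100-step run.
    for s in (0, 4, 50, 80, 90, 99):
        print(s, wsd_lr(s, total_steps=100, base_lr=1e-3, warmup_steps=5, decay_steps=20))
```

The sudden loss drop mentioned in the thread is what happens during the final decay phase, i.e. the last `decay_steps` steps in this sketch.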

Curious about the potential of optimal transport (OT) in representation learning? Join @CuturiMarco's talk at the UniReps workshop today at 2:30 PM! Marco will notably discuss our latest paper on using OT to learn disentangled representations. Details below ⬇️