Pinned Tweet

Matthew Farrell

45 posts

@MattSFarrell

Special Postdoctoral Researcher at the Laboratory for Neural Computation and Adaptation, RIKEN Center for Brain Science

Joined February 2019

50 Following, 137 Followers

I created a utility for memoizing parameter sweeps called chunk-memo. It collects multiple runs into single files (chunking) for more efficient disk reads and writes, and it supports parallelization. github.com/msf235/chunk-m….
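The chunking idea in the post above can be sketched roughly as follows. This is a minimal illustration that assumes JSON-serializable parameters and results; the names `simulate` and `sweep_chunked` are hypothetical and are not taken from chunk-memo, whose actual interface may differ. Parallelization is left out.

```python
import hashlib
import itertools
import json
import tempfile
from pathlib import Path


def simulate(alpha, beta):
    """Hypothetical stand-in for an expensive simulation."""
    return alpha * beta + alpha


def sweep_chunked(func, grid, cache_dir, chunk_size=4):
    """Evaluate func on every combination in grid, caching one file per chunk.

    Returns (results, n_computed), where n_computed counts the runs that
    were actually executed rather than loaded from disk.
    """
    cache_dir = Path(cache_dir)
    cache_dir.mkdir(parents=True, exist_ok=True)
    names = sorted(grid)
    combos = list(itertools.product(*(grid[name] for name in names)))
    results = {}
    n_computed = 0
    for start in range(0, len(combos), chunk_size):
        chunk = combos[start:start + chunk_size]
        # Key the file on the chunk's parameters so a changed grid misses the cache.
        key = hashlib.sha256(json.dumps([names, chunk]).encode()).hexdigest()[:16]
        path = cache_dir / f"chunk_{key}.json"
        if path.exists():
            values = json.loads(path.read_text())
        else:
            values = [func(**dict(zip(names, combo))) for combo in chunk]
            path.write_text(json.dumps(values))
            n_computed += len(chunk)
        results.update(zip(chunk, values))
    return results, n_computed


with tempfile.TemporaryDirectory() as tmp:
    grid = {"alpha": [1, 2, 3], "beta": [10, 20]}
    first, n_first = sweep_chunked(simulate, grid, tmp)    # computes every run
    second, n_second = sweep_chunked(simulate, grid, tmp)  # loads from chunk files
```

Writing one file per chunk rather than one per run keeps the number of files and disk accesses small, at the cost of recomputing a whole chunk if any part of it is missing.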

@cloneofsimo Nova has been freely available on public channels for four decades. Maybe we should be asking, “why can’t we get these content creators public grants to supplement their ad revenue?”

What the actual.... why is this content free? It's an absolute wonder of the era we live in that we get to watch these resources for free.

youtu.be/h9Z4oGN89MU?si…

My paper with @CPehlevan is out now in PNAS! doi.org/10.1073/pnas.2… Sequences are a core part of an animal's behavioral repertoire, and Hebbian learning allows neural circuits to store memories of sequences for later recall.

@MattSFarrell Thoughtfully written, congratulations Matthew.

It’s become trendy to use trained neural networks as models for the brain. New theory is helping us appreciate the different approaches neural networks will use to solve tasks depending on initialization, optimization, and other details. Our review here: authors.elsevier.com/c/1hplN3Q9h2Ee…

@KameronDHarris I'm not sure what all the hype around Threads was about, as the Instagram influencers and corporate accounts flood it with crap. I'm still holding out hope for Bluesky once it's out of beta.

Here's a paper @CPehlevan and I wrote about neural networks that generate sequences: biorxiv.org/content/10.110…. Hebbian learning can store sequences as memories in neural networks for later recall. However, the tempo of the recalled sequence can differ from that of the tutor signal
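As a rough illustration of how Hebbian learning can store a sequence, here is a sketch of a textbook asymmetric Hebbian rule for binary neurons, in which each pattern is associated with its successor. It is not necessarily the model studied in the paper, and it does not address tempo; the network size, pattern count, and function names are assumptions chosen for the example.

```python
import random


def sign(h):
    """Binary neuron output: +1 for non-negative input, -1 otherwise."""
    return 1 if h >= 0 else -1


def store_sequence(patterns):
    """Asymmetric Hebbian weights: W += (next pattern) x (current pattern)^T / N."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for cur, nxt in zip(patterns, patterns[1:]):
        for i in range(n):
            for j in range(n):
                w[i][j] += nxt[i] * cur[j] / n
    return w


def step(w, state):
    """One synchronous update of all neurons."""
    return [sign(sum(w_ij * s_j for w_ij, s_j in zip(row, state))) for row in w]


def recall(w, cue, n_steps):
    """Start from the cue and let the network replay the stored sequence."""
    states = [cue]
    for _ in range(n_steps):
        states.append(step(w, states[-1]))
    return states


rng = random.Random(0)
N, P = 200, 5  # assumed sizes: 200 neurons, 5 random patterns
patterns = [[rng.choice((-1, 1)) for _ in range(N)] for _ in range(P)]
weights = store_sequence(patterns)
replayed = recall(weights, patterns[0], P - 1)
# Overlap of each replayed state with the corresponding stored pattern (1.0 = identical).
overlaps = [sum(a * b for a, b in zip(s, p)) / N for s, p in zip(replayed, patterns)]
```

Cueing the network with the first pattern drives it toward the second, then the third, and so on, because each weight update links a pattern to the one that follows it.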

@MattSFarrell @Dirivian Irrespective of the system in place, you need to prove yourself. You can offer a blog post as a contribution... and it might be accepted, but you have to argue your case.

@Dirivian Here is an interesting person who worked in a national lab in relative obscurity for a while, but his work is possibly quite revolutionary: en.wikipedia.org/wiki/Jean_%C3%…, tinyurl.com/hww53jss. I guess he somehow found a way to ignore brownie points.

@Dirivian Neural networks are essentially the most basic nonparametric hierarchical model that you can imagine. As such their ubiquity isn't really that surprising imo.

A long time ago I wrote a simple utility for managing the output of my simulations. I've finally gone to the trouble of cleaning it up enough to share: github.com/msf235/model_o…. This utility keeps a log of simulation parameters in a .csv file for simple but flexible memoization.
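The kind of CSV-based parameter log described above might look something like the sketch below. It is an illustration only: `get_run_id` and the `run_id` column are hypothetical names, not the interface of the linked utility, and the sketch assumes parameter values compare equal as strings and are never the empty string.

```python
import csv
import tempfile
from pathlib import Path


def get_run_id(params, log_path):
    """Return (run_id, is_new) for params, appending a CSV row if they are unseen."""
    log_path = Path(log_path)
    row = {key: str(value) for key, value in sorted(params.items())}
    rows = []
    if log_path.exists():
        with log_path.open(newline="") as f:
            rows = list(csv.DictReader(f))
    for existing in rows:
        # Ignore the id column and cells left blank for parameters a run did not use.
        stored = {k: v for k, v in existing.items() if k != "run_id" and v != ""}
        if stored == row:
            return int(existing["run_id"]), False
    run_id = len(rows)
    columns = sorted(set(row) | {k for r in rows for k in r if k != "run_id"})
    rows.append({"run_id": str(run_id), **row})
    # Rewrite the whole log so that newly introduced parameters get their own column.
    with log_path.open("w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=["run_id"] + columns, restval="")
        writer.writeheader()
        writer.writerows(rows)
    return run_id, True


with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "runs.csv"
    a = get_run_id({"lr": 0.1, "seed": 1}, log)  # new parameter set
    b = get_run_id({"lr": 0.1, "seed": 1}, log)  # same set, found in the log
    c = get_run_id({"lr": 0.2, "seed": 1}, log)  # different value, new row
    d = get_run_id({"lr": 0.1}, log)             # fewer parameters, new row
    e = get_run_id({"seed": 1, "lr": 0.1}, log)  # key order does not matter
```

A caller would typically use the returned id to name the output file for a run, and skip the simulation when `is_new` is false and that file already exists.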

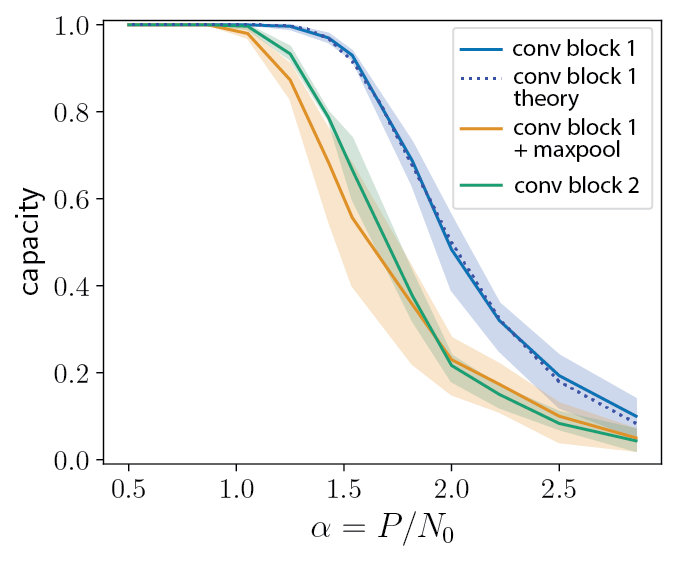

New preprint arxiv.org/abs/2110.07472. We look at the expressivity of neural representations that are equivariant to their inputs -- that is, as input objects transform according to a group action, the representations transform according to an action of the same group.
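For reference, the standard definition of equivariance can be written as below. The symbols are assumptions introduced here rather than the preprint's notation: $f$ maps inputs to representations, $G$ is the group, and $\rho_{\mathrm{in}}$ and $\rho_{\mathrm{out}}$ are its actions on inputs and representations.

```latex
f\big(\rho_{\mathrm{in}}(g)\,x\big) = \rho_{\mathrm{out}}(g)\,f(x)
\qquad \text{for all } g \in G \text{ and all inputs } x .
```

Invariance is the special case in which $\rho_{\mathrm{out}}(g)$ is the identity for every $g$.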
